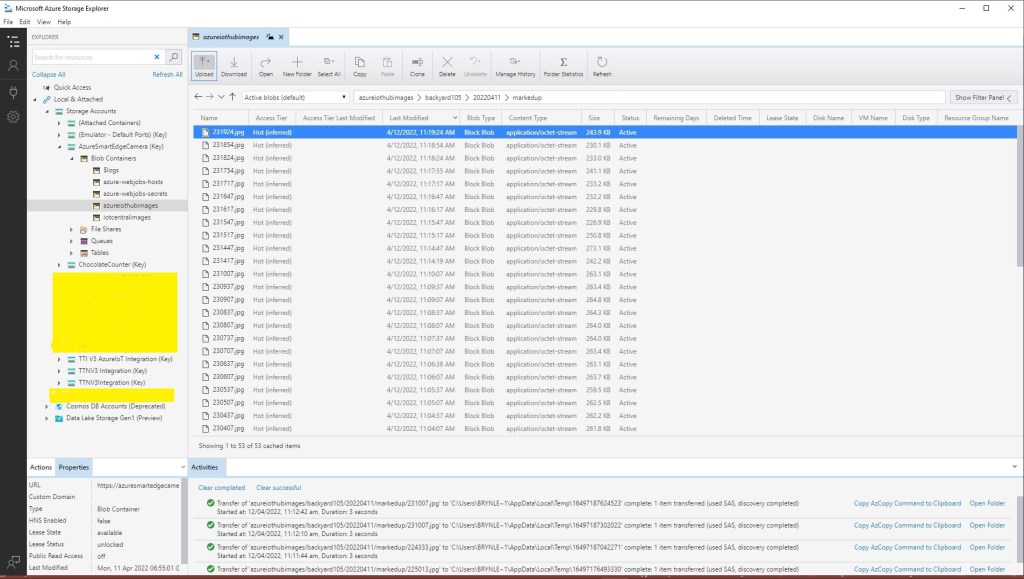

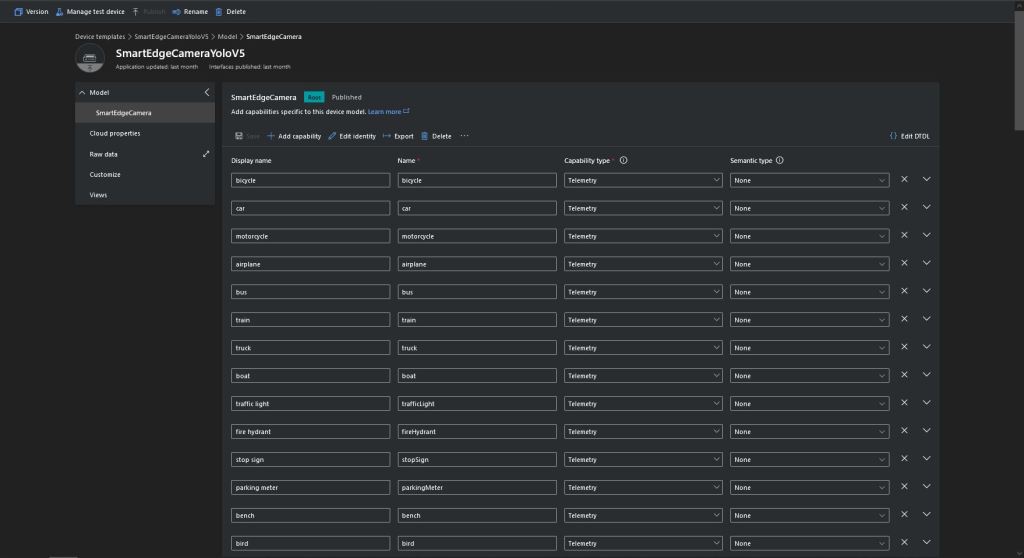

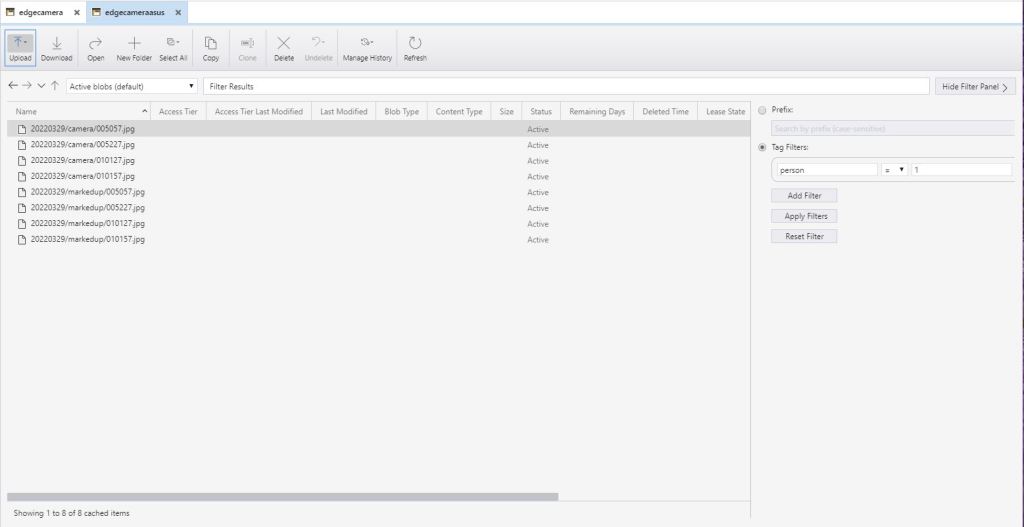

The SmartEdgeCameraAzureStorageService uploads images with “tags” so that images which may need reviewing are easier to find. When I added the same tagging functionality to the SmartEdgeCameraAzureIoTService, which uploads images to the Storage Account associated with my Azure IoT Hub, the upload failed with a 403 AuthorizationPermissionMismatch error.

    [16:39:30.66]fail: devMobile.IoT.MachineLearning.SmartEdgeCameraAzureIoTService.Worker[0]
    Camera image download, post processing, or telemetry failed
    Azure.RequestFailedException: This request is not authorized to perform this operation using this permission.
    RequestId:7a1747db-e01e-0019-484c-5c0499000000
    Time:2022-04-30T04:39:31.2050951Z
    Status: 403 (This request is not authorized to perform this operation using this permission.)
    ErrorCode: AuthorizationPermissionMismatch
    Content:
    <?xml version="1.0" encoding="utf-8"?><Error><Code>AuthorizationPermissionMismatch</Code><Message>This request is not authorized to perform this operation using this permission.
    RequestId:7a1747db-e01e-0019-484c-5c0499000000
    Time:2022-04-30T04:39:31.2050951Z</Message></Error>
    Headers:
    Server: Windows-Azure-Blob/1.0,Microsoft-HTTPAPI/2.0
    x-ms-request-id: 7a1747db-e01e-0019-484c-5c0499000000
    x-ms-client-request-id: d0e8eb36-9e01-4eac-a522-f84b9deafa32
    x-ms-version: 2021-04-10
    x-ms-error-code: AuthorizationPermissionMismatch
    Date: Sat, 30 Apr 2022 04:39:30 GMT
    Content-Length: 279
    Content-Type: application/xml
       at Azure.Storage.Blobs.BlockBlobRestClient.UploadAsync(Int64 contentLength, Stream body, Nullable`1 timeout, Byte[] transactionalContentMD5, String blobContentType, String blobContentEncoding, String blobContentLanguage, Byte[] blobContentMD5, String blobCacheControl, IDictionary`2 metadata, String leaseId, String blobContentDisposition, String encryptionKey, String encryptionKeySha256, Nullable`1 encryptionAlgorithm, String encryptionScope, Nullable`1 tier, Nullable`1 ifModifiedSince, Nullable`1 ifUnmodifiedSince, String ifMatch, String ifNoneMatch, String ifTags, String blobTagsString, Nullable`1 immutabilityPolicyExpiry, Nullable`1 immutabilityPolicyMode, Nullable`1 legalHold, CancellationToken cancellationToken)
       at Azure.Storage.Blobs.Specialized.BlockBlobClient.UploadInternal(Stream content, BlobHttpHeaders blobHttpHeaders, IDictionary`2 metadata, IDictionary`2 tags, BlobRequestConditions conditions, Nullable`1 accessTier, BlobImmutabilityPolicy immutabilityPolicy, Nullable`1 legalHold, IProgress`1 progressHandler, String operationName, Boolean async, CancellationToken cancellationToken)
       at Azure.Storage.Blobs.Specialized.BlockBlobClient.<>c__DisplayClass62_0.<<GetPartitionedUploaderBehaviors>b__0>d.MoveNext()
    --- End of stack trace from previous location ---
       at Azure.Storage.PartitionedUploader`2.UploadInternal(Stream content, Nullable`1 expectedContentLength, TServiceSpecificData args, IProgress`1 progressHandler, Boolean async, CancellationToken cancellationToken)
       at Azure.Storage.Blobs.Specialized.BlockBlobClient.UploadAsync(Stream content, BlobUploadOptions options, CancellationToken cancellationToken)
       at devMobile.IoT.MachineLearning.SmartEdgeCameraAzureIoTService.Worker.UploadImage(List`1 predictions, String filepath, String blobpath) in C:\Users\BrynLewis\source\repos\AzureMLNetSmartEdgeCamera\SmartEdgeCameraAzureIoTService\Worker.cs:line 581
       at devMobile.IoT.MachineLearning.SmartEdgeCameraAzureIoTService.Worker.UploadImage(List`1 predictions, String filepath, String blobpath) in C:\Users\BrynLewis\source\repos\AzureMLNetSmartEdgeCamera\SmartEdgeCameraAzureIoTService\Worker.cs:line 606
       at devMobile.IoT.MachineLearning.SmartEdgeCameraAzureIoTService.Worker.ImageUpdateTimerCallback(Object state) in C:\Users\BrynLewis\source\repos\AzureMLNetSmartEdgeCamera\SmartEdgeCameraAzureIoTService\Worker.cs:line 394
    [16:39:30.72]info: devMobile.IoT.MachineLearning.SmartEdgeCameraAzureIoTService.Worker[0]

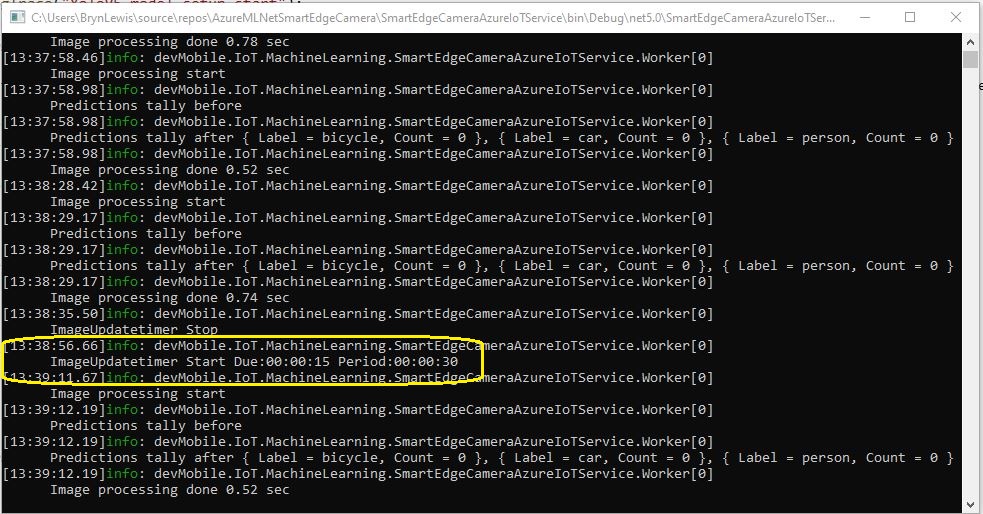

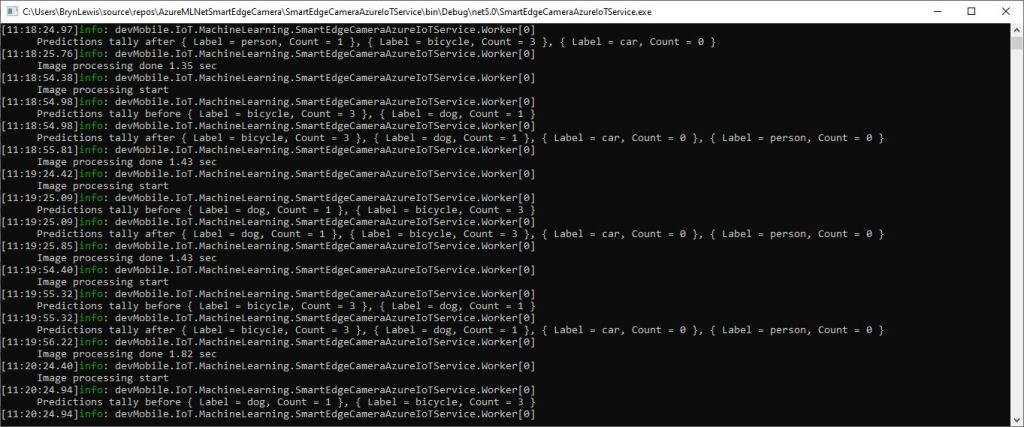

    try
    {
        // Ask the IoT Hub for a SAS URI for the blob in its associated Storage Account
        FileUploadSasUriResponse sasUri = await _deviceClient.GetFileUploadSasUriAsync(fileUploadSasUriRequest);

        Uri uploadUri = sasUri.GetBlobUri();
        ...
        var blockBlobClient = new BlockBlobClient(uploadUri);

        BlobUploadOptions blobUploadOptions = new BlobUploadOptions()
        {
            Tags = new Dictionary<string, string>()
        };

        // One tag per detected label, with the number of detections as the value
        foreach (var prediction in predictionsTally)
        {
            blobUploadOptions.Tags.Add(prediction.Label, prediction.Count.ToString());
        }

        // This is the call that fails with 403 AuthorizationPermissionMismatch once Tags is populated
        await blockBlobClient.UploadAsync(fileStreamSource, blobUploadOptions);
        ...
    }
    catch (Exception ex)
    {
        ...
    }
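One plausible explanation, not confirmed by the issue discussion, is that the SAS token issued by the IoT Hub does not include the blob tags permission. In a Blob Storage SAS the `sp` query parameter lists the signed permissions, and `t` is the letter for reading or writing tags. The sketch below is a hypothetical helper (the `SasPermissions` class and its method names are not part of any SDK) that inspects the URI returned by `sasUri.GetBlobUri()`, assuming .NET Core 2.1 or later:

```csharp
using System;

public static class SasPermissions
{
    // Returns the value of the "sp" (signed permissions) query parameter,
    // or an empty string if the URI has none.
    public static string GetSignedPermissions(Uri sasUri)
    {
        string query = sasUri.Query.TrimStart('?');

        foreach (string pair in query.Split('&', StringSplitOptions.RemoveEmptyEntries))
        {
            string[] parts = pair.Split('=', 2);

            if (parts.Length == 2 && parts[0].Equals("sp", StringComparison.Ordinal))
            {
                return Uri.UnescapeDataString(parts[1]);
            }
        }

        return string.Empty;
    }

    // True when the signed permissions include 't' (blob tags).
    public static bool AllowsTags(Uri sasUri)
    {
        return GetSignedPermissions(sasUri).Contains('t');
    }
}
```

Logging `SasPermissions.GetSignedPermissions(sasUri.GetBlobUri())` just before the upload would show which permissions the hub actually granted. If `t` is missing, the 403 is the expected response to a request that carries tags.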

There were no relevant search results (April 2022), so I submitted a Microsoft Azure IoT SDK for .NET issue, “UploadAsync fails when Tags added to blob uploading to Storage Account associated with an IoT Hub”, which has been triaged and moved to “discussion”.
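While the issue is open, one possible interim approach is to catch the specific failure and retry the upload with the tally stored as blob metadata instead of tags. This is an untested sketch, not the SDK team's recommendation. It assumes that the hub-issued SAS allows a plain write with metadata, that the content stream is seekable, and that every label is a valid metadata name (metadata names must be valid C# identifiers, so a label containing a space would be rejected). `TaggedUpload` and `UploadWithTagFallbackAsync` are hypothetical names.

```csharp
using System.Collections.Generic;
using System.IO;
using System.Threading.Tasks;
using Azure;
using Azure.Storage.Blobs.Models;
using Azure.Storage.Blobs.Specialized;

public static class TaggedUpload
{
    // Tries to upload with blob index tags; if the SAS does not permit tags,
    // retries once with the same key/value pairs stored as metadata.
    public static async Task UploadWithTagFallbackAsync(
        BlockBlobClient blockBlobClient,
        Stream content,
        IDictionary<string, string> tags)
    {
        try
        {
            await blockBlobClient.UploadAsync(content, new BlobUploadOptions { Tags = tags });
        }
        catch (RequestFailedException ex)
            when (ex.Status == 403 && ex.ErrorCode == "AuthorizationPermissionMismatch")
        {
            // Without a seekable stream the content cannot be replayed, so rethrow.
            if (!content.CanSeek)
            {
                throw;
            }

            content.Position = 0;

            await blockBlobClient.UploadAsync(content, new BlobUploadOptions { Metadata = tags });
        }
    }
}
```

In the worker this would replace the direct `UploadAsync` call, for example `await TaggedUpload.UploadWithTagFallbackAsync(blockBlobClient, fileStreamSource, blobUploadOptions.Tags);`. Unlike tags, metadata is not indexed, so it cannot be queried across the account the way tags can.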