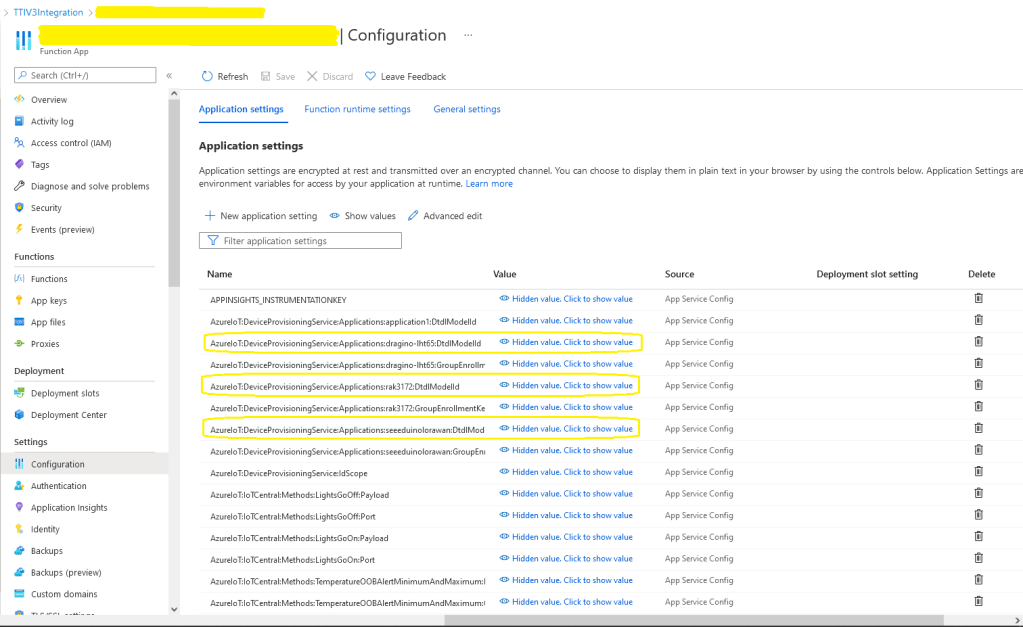

The Myriota connector supports Digital Twin Definition Language (DTDL) model IDs for devices connected using Azure IoT Hub device connection strings and the Azure IoT Hub Device Provisioning Service (DPS).

{

"ConnectionStrings": {

"ApplicationInsights": "...",

"UplinkQueueStorage": "...",

"PayloadFormattersStorage": "..."

},

"AzureIoT": {

...

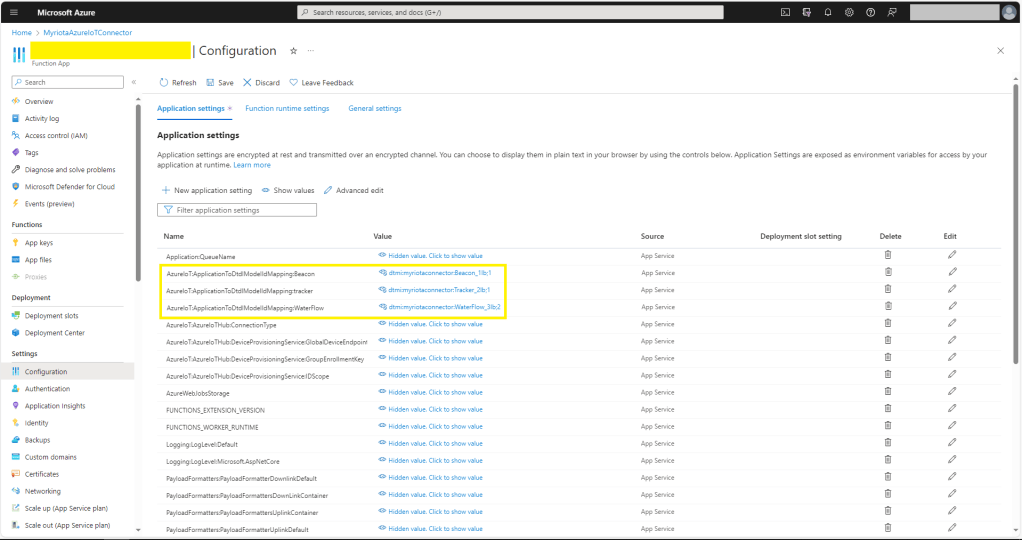

"ApplicationToDtdlModelIdMapping": {

"tracker": "dtmi:myriotaconnector:Tracker_2lb;1",

}

}

...

}

The DTDL model ID used when a device is provisioned, or when it connects, is selected by the payload application. The payload application is based on the Myriota Destination endpoint.

BEWARE: The application names in ApplicationToDtdlModelIdMapping are case sensitive!
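Assume the mapping is a Dictionary<string, string> created with the default comparer. That is an assumption about how the settings class declares it, not something confirmed here. With that comparer, "Tracker" and "tracker" are different keys. The minimal sketch below shows the difference and one way around it using StringComparer.OrdinalIgnoreCase. The ResolveModelId helper is hypothetical and is not part of the connector.

```csharp
#nullable enable
using System;
using System.Collections.Generic;

// Hypothetical copy of the ApplicationToDtdlModelIdMapping entries; the default comparer is case sensitive
var caseSensitive = new Dictionary<string, string>
{
    ["tracker"] = "dtmi:myriotaconnector:Tracker_2lb;1",
};

// The same entries copied into a dictionary that ignores case when comparing keys
var caseInsensitive = new Dictionary<string, string>(caseSensitive, StringComparer.OrdinalIgnoreCase);

// Hypothetical helper: return the model ID for a payload application, or null when there is no mapping
static string? ResolveModelId(IDictionary<string, string> mapping, string application) =>
    mapping.TryGetValue(application, out string? modelId) ? modelId : null;

Console.WriteLine(ResolveModelId(caseSensitive, "Tracker") ?? "(no model ID)");
Console.WriteLine(ResolveModelId(caseInsensitive, "Tracker") ?? "(no model ID)");
```

The first lookup misses, so the device would connect without a model ID. The second lookup finds the tracker entry.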

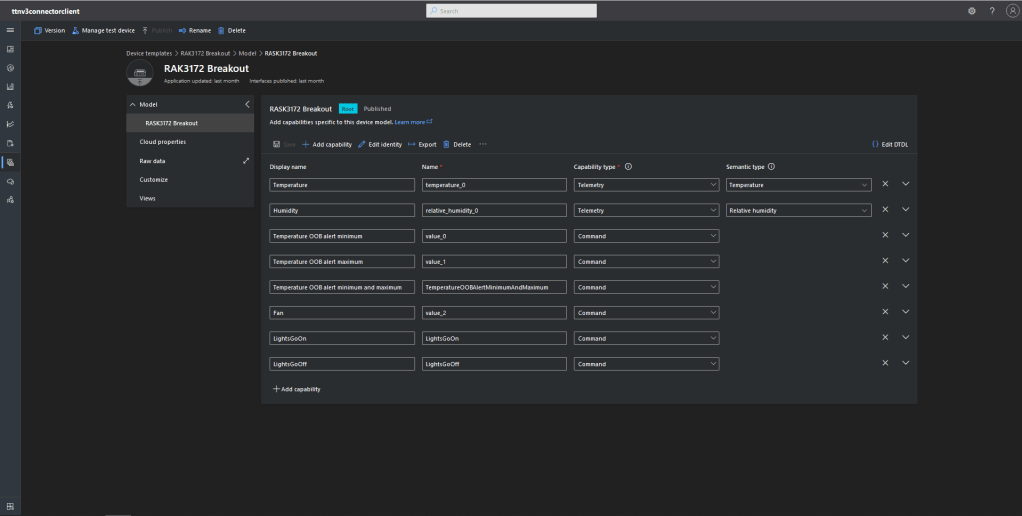

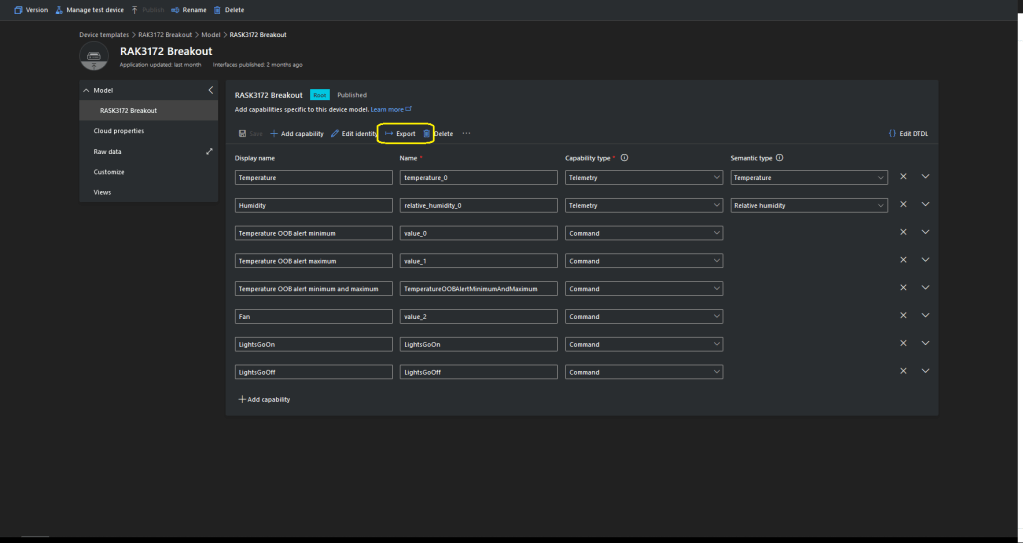

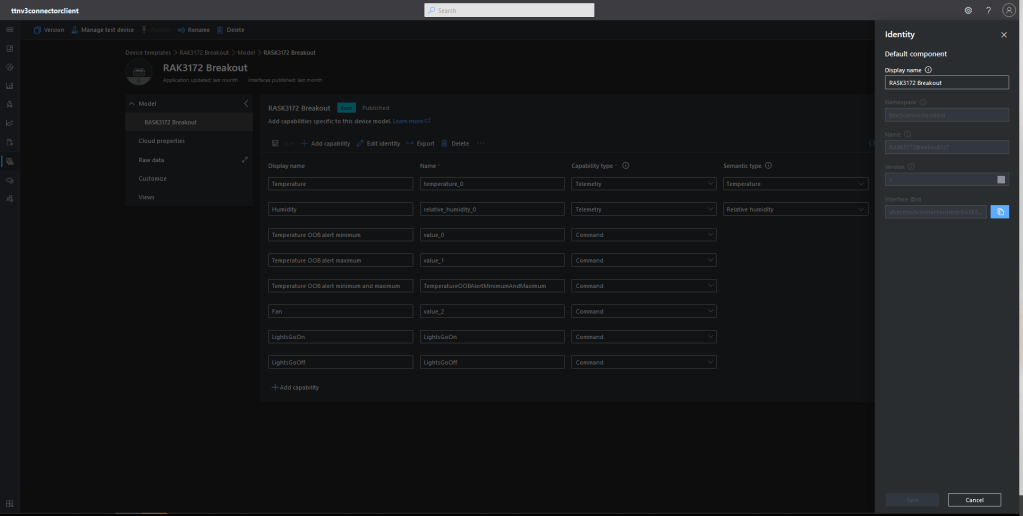

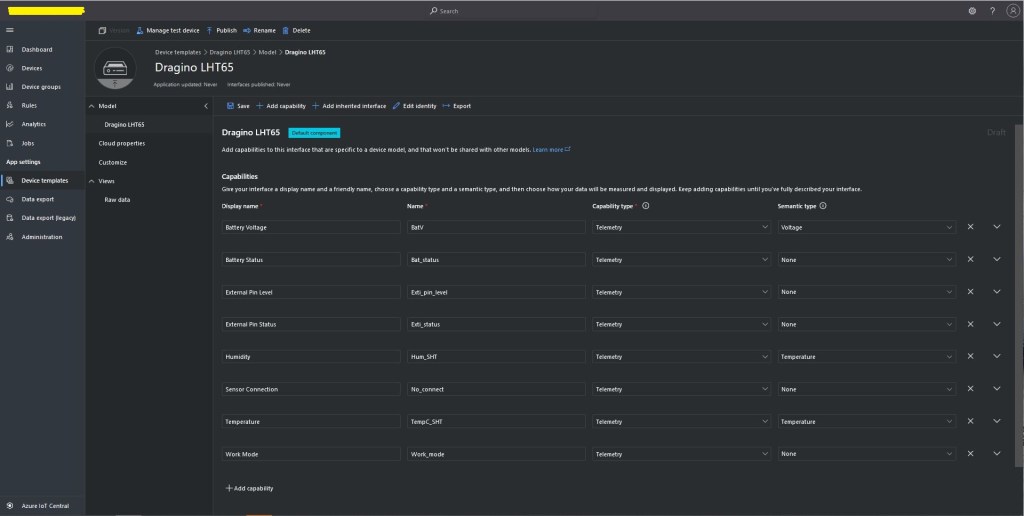

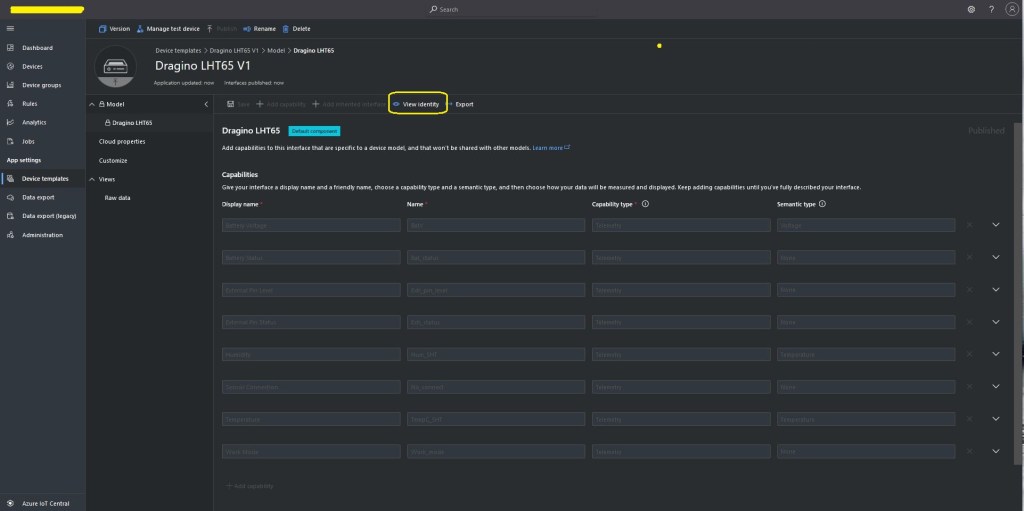

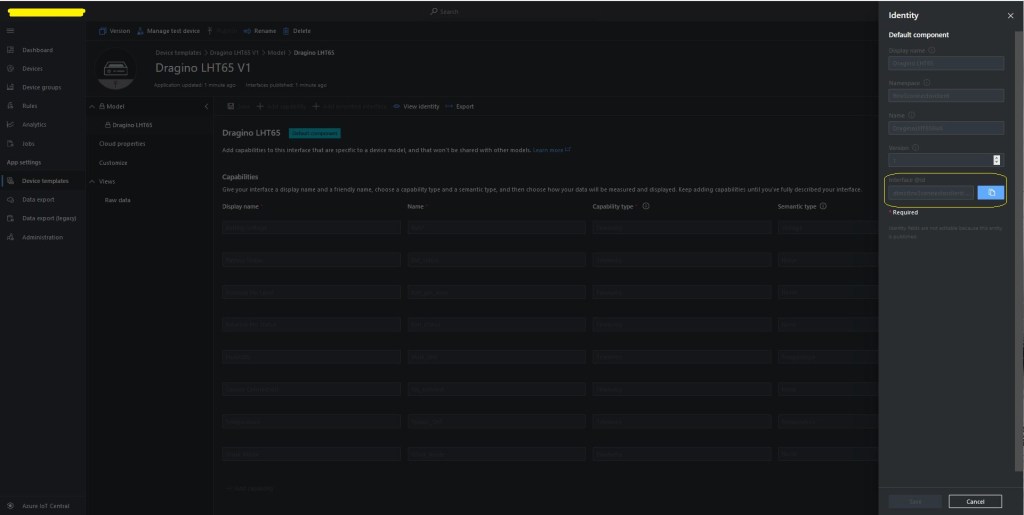

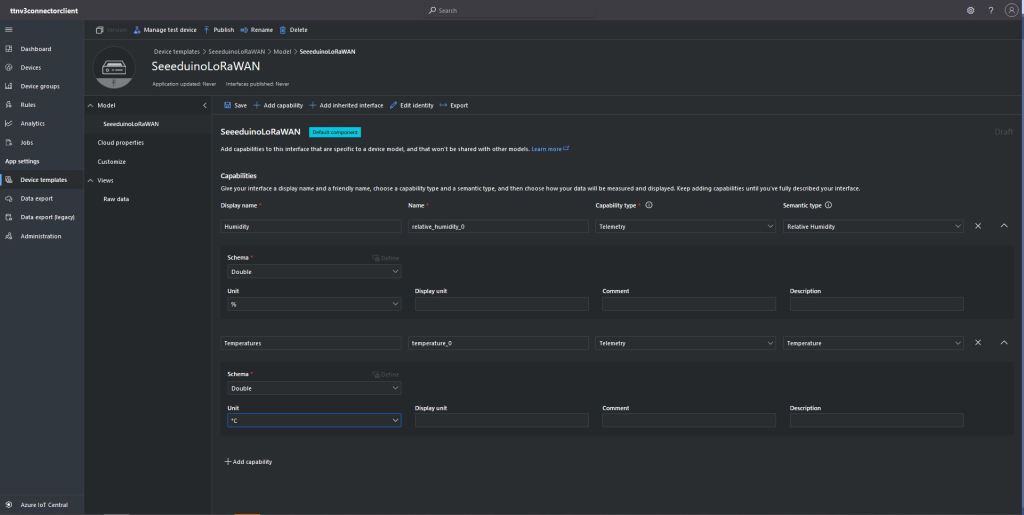

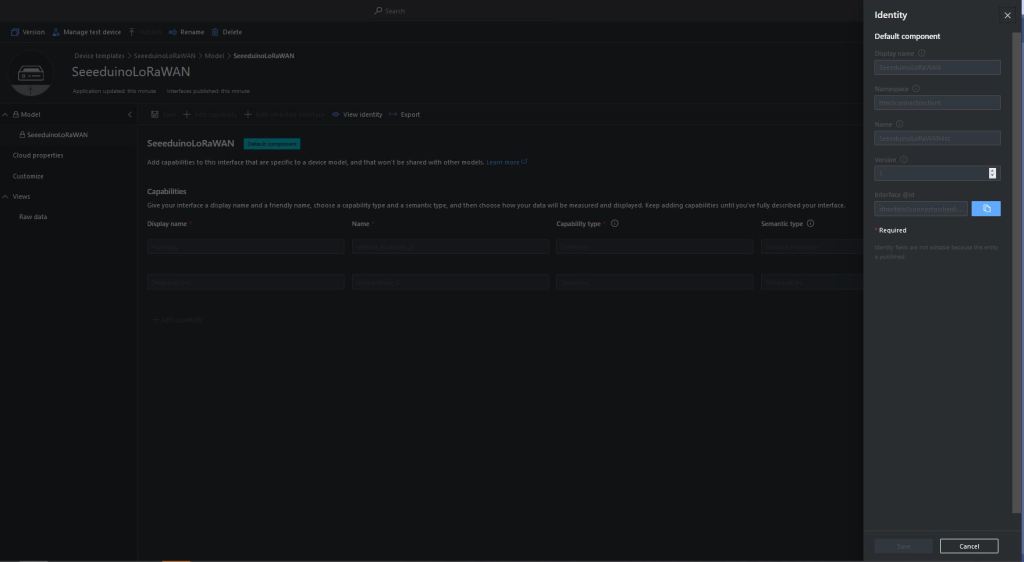

I used the Azure IoT Central Device Template functionality to create my Azure Digital Twin definitions.

Azure IoT Hub Device Connection String

The DeviceClient CreateFromConnectionString method has an optional ClientOptions parameter whose ModelId property specifies the DTDL model ID for the duration of the connection.

```csharp
private async Task<DeviceClient> AzureIoTHubDeviceConnectionStringConnectAsync(string terminalId, string application, object context)
{
    DeviceClient deviceClient;

    // Look up the DTDL model ID configured for the payload application
    if (_azureIoTSettings.ApplicationToDtdlModelIdMapping.TryGetValue(application, out string? modelId))
    {
        // The ModelId is used for the duration of the connection
        ClientOptions clientOptions = new ClientOptions()
        {
            ModelId = modelId
        };

        deviceClient = DeviceClient.CreateFromConnectionString(_azureIoTSettings.AzureIoTHub.ConnectionString, terminalId, TransportSettings, clientOptions);
    }
    else
    {
        // No mapping for this application, so connect without a model ID
        deviceClient = DeviceClient.CreateFromConnectionString(_azureIoTSettings.AzureIoTHub.ConnectionString, terminalId, TransportSettings);
    }

    await deviceClient.OpenAsync();

    return deviceClient;
}
```
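The model ID is passed to ClientOptions straight from configuration, so a typo in ApplicationToDtdlModelIdMapping might only surface once a device connects. The sketch below checks the configured values at startup. The regular expression is my approximation of the DTDL v2 DTMI syntax, so treat it as an assumption. It does not enforce length limits. The FindInvalidModelIds helper and the "broken" entry are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Assumed DTMI shape: "dtmi:" + colon-separated segments + ";" + version (1 to 999999999).
// Each segment starts with a letter, may contain letters, digits and underscores, and must not end with an underscore.
var dtmiPattern = new Regex(
    @"^dtmi:[A-Za-z](?:[A-Za-z0-9_]*[A-Za-z0-9])?(?::[A-Za-z](?:[A-Za-z0-9_]*[A-Za-z0-9])?)*;[1-9][0-9]{0,8}$");

// Hypothetical helper: list the applications whose configured model ID does not look like a DTMI
List<string> FindInvalidModelIds(IDictionary<string, string> modelIdMapping)
{
    var invalid = new List<string>();
    foreach (var (application, modelId) in modelIdMapping)
    {
        if (!dtmiPattern.IsMatch(modelId))
        {
            invalid.Add(application);
        }
    }
    return invalid;
}

var mapping = new Dictionary<string, string>
{
    ["tracker"] = "dtmi:myriotaconnector:Tracker_2lb;1",
    ["broken"] = "dtmi:myriotaconnector:Tracker_2lb", // hypothetical entry missing its ";1" version
};

Console.WriteLine(string.Join(",", FindInvalidModelIds(mapping)));
```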

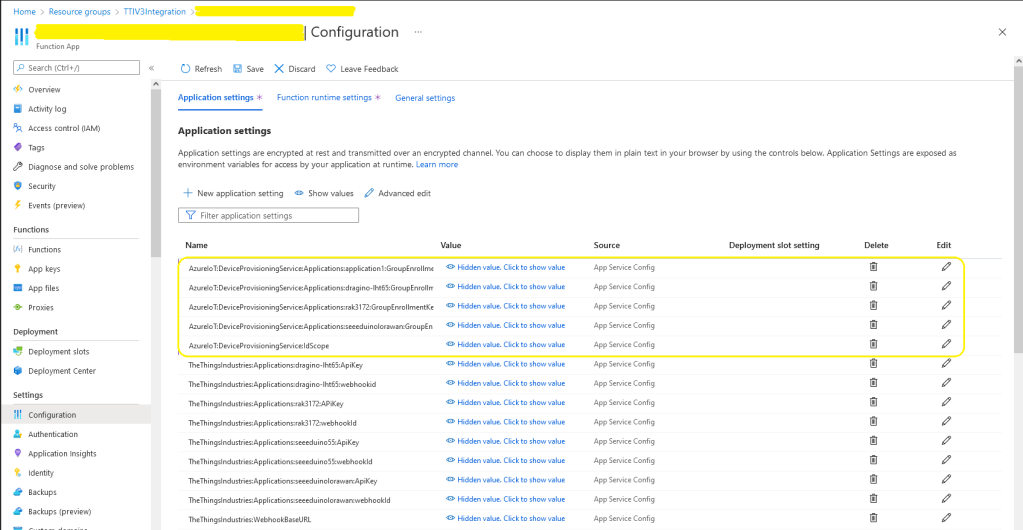

Azure IoT Hub Device Provisioning Service

The ProvisioningDeviceClient RegisterAsync method has an optional ProvisioningRegistrationAdditionalData parameter. PnpConvention CreateDpsPayload is used to generate its JsonData property, which specifies the DTDL model ID used when the device is initially provisioned.

```csharp
private async Task<DeviceClient> AzureIoTHubDeviceProvisioningServiceConnectAsync(string terminalId, string application, object context)
{
    DeviceClient deviceClient;

    // Derive this device's symmetric key from the group enrollment key (HMAC-SHA256 of the registration ID)
    string deviceKey;
    using (var hmac = new HMACSHA256(Convert.FromBase64String(_azureIoTSettings.AzureIoTHub.DeviceProvisioningService.GroupEnrollmentKey)))
    {
        deviceKey = Convert.ToBase64String(hmac.ComputeHash(Encoding.UTF8.GetBytes(terminalId)));
    }

    using (var securityProvider = new SecurityProviderSymmetricKey(terminalId, deviceKey, null))
    using (var transport = new ProvisioningTransportHandlerAmqp(TransportFallbackType.TcpOnly))
    {
        DeviceRegistrationResult result;
        ClientOptions clientOptions = new ClientOptions();

        ProvisioningDeviceClient provClient = ProvisioningDeviceClient.Create(
            _azureIoTSettings.AzureIoTHub.DeviceProvisioningService.GlobalDeviceEndpoint,
            _azureIoTSettings.AzureIoTHub.DeviceProvisioningService.IdScope,
            securityProvider,
            transport);

        if (_azureIoTSettings.ApplicationToDtdlModelIdMapping.TryGetValue(application, out string? modelId))
        {
            // Also use the model ID when connecting to the assigned IoT Hub
            clientOptions.ModelId = modelId;

            // Include the model ID in the DPS registration payload
            ProvisioningRegistrationAdditionalData provisioningRegistrationAdditionalData = new ProvisioningRegistrationAdditionalData()
            {
                JsonData = PnpConvention.CreateDpsPayload(modelId)
            };

            result = await provClient.RegisterAsync(provisioningRegistrationAdditionalData);
        }
        else
        {
            result = await provClient.RegisterAsync();
        }

        if (result.Status != ProvisioningRegistrationStatusType.Assigned)
        {
            _logger.LogWarning("Uplink-DeviceID:{TerminalId} RegisterAsync status:{Status} failed", terminalId, result.Status);

            throw new ApplicationException($"Uplink-DeviceID:{terminalId} RegisterAsync status:{result.Status} failed");
        }

        IAuthenticationMethod authentication = new DeviceAuthenticationWithRegistrySymmetricKey(result.DeviceId, securityProvider.GetPrimaryKey());

        deviceClient = DeviceClient.Create(result.AssignedHub, authentication, TransportSettings, clientOptions);
    }

    await deviceClient.OpenAsync();

    return deviceClient;
}
```
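The DPS registration payload is a small JSON document carrying the model ID. The sketch below is a hypothetical stand-in for PnpConvention CreateDpsPayload. It assumes the payload is an object with a single modelId property, which is the shape used for IoT Plug and Play DPS registrations. It relies on System.Text.Json rather than string formatting, so any quotes or backslashes in a model ID are escaped correctly.

```csharp
using System;
using System.Text.Json;

// Hypothetical stand-in for PnpConvention.CreateDpsPayload: wrap the model ID in a {"modelId": ...} object
static string CreateDpsPayload(string modelId) =>
    JsonSerializer.Serialize(new { modelId = modelId });

Console.WriteLine(CreateDpsPayload("dtmi:myriotaconnector:Tracker_2lb;1"));
```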

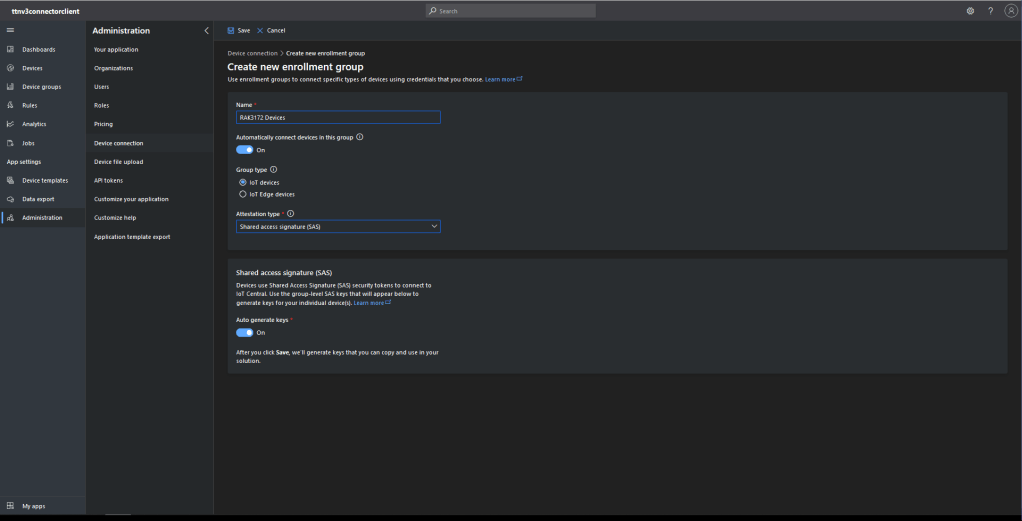

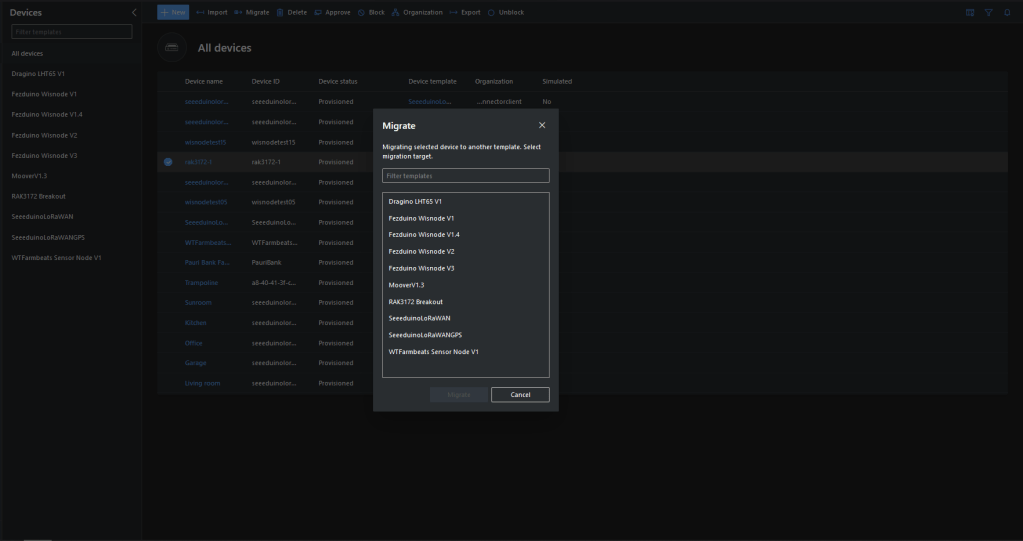

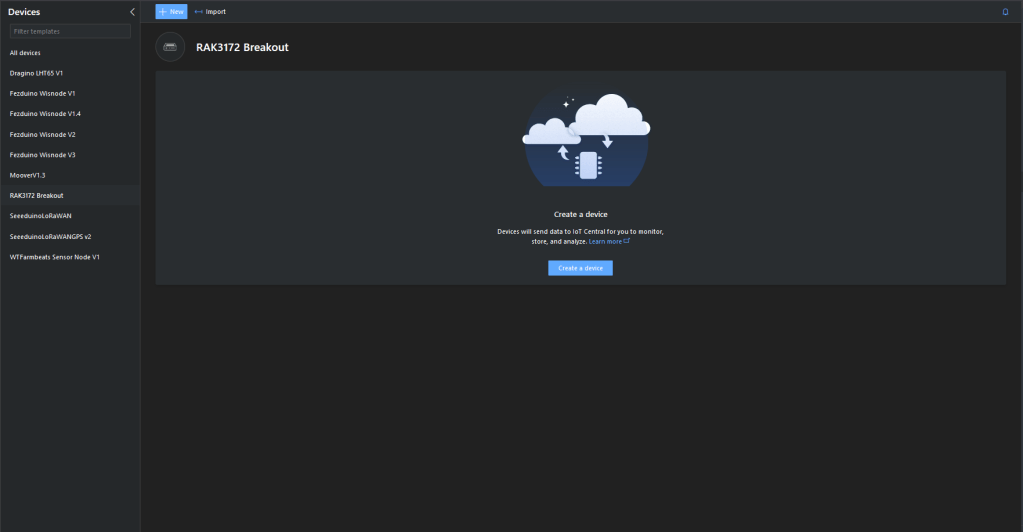

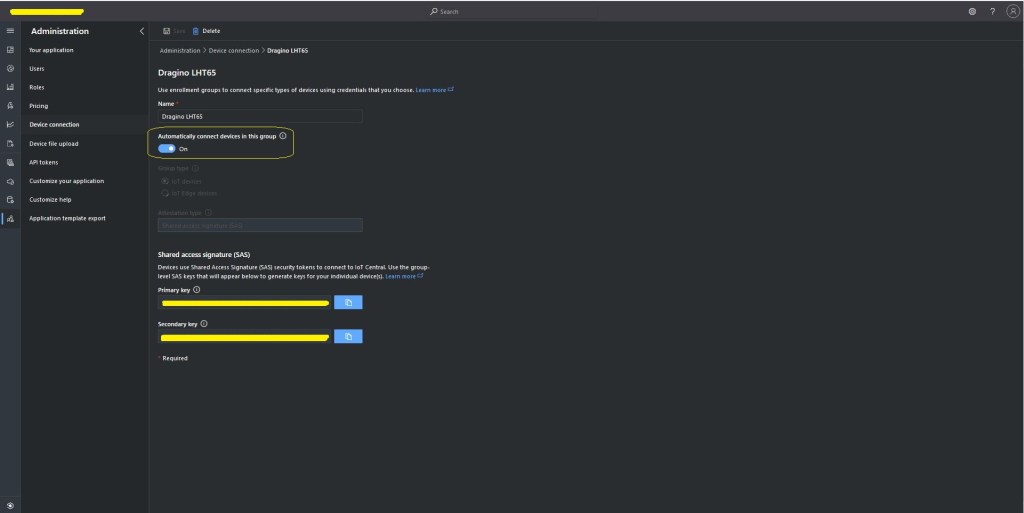

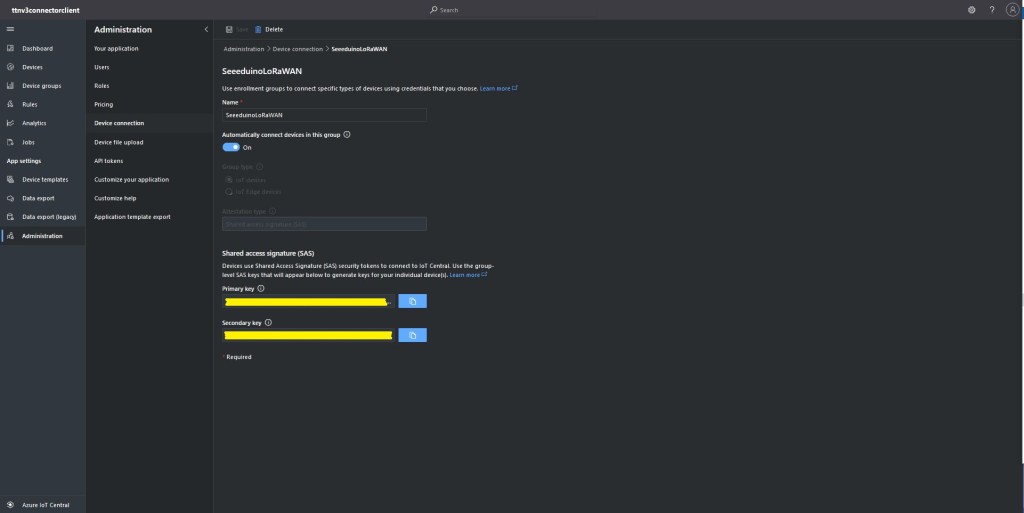

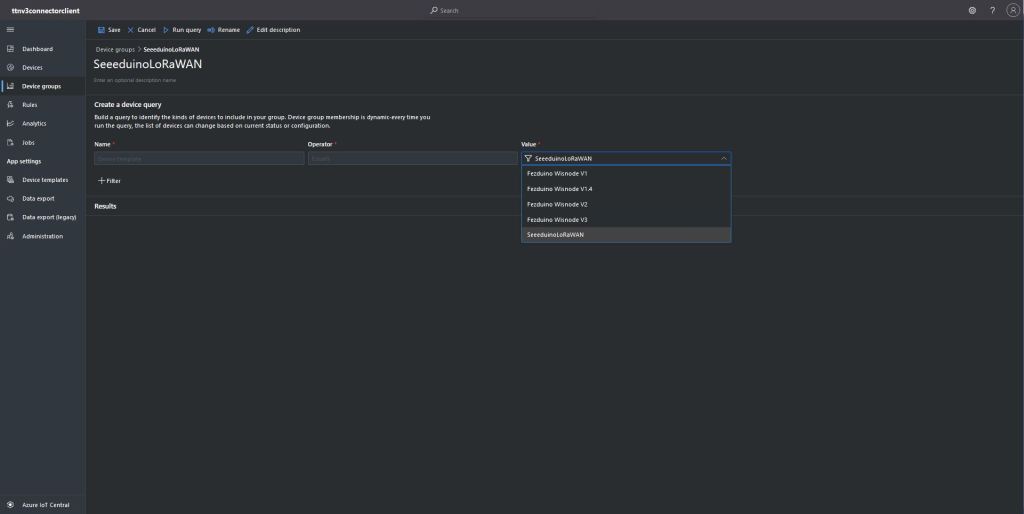

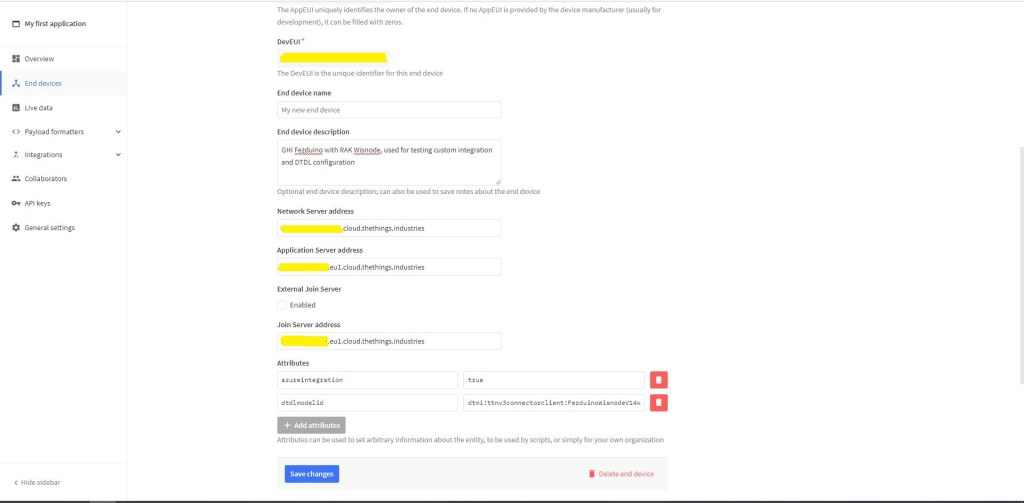

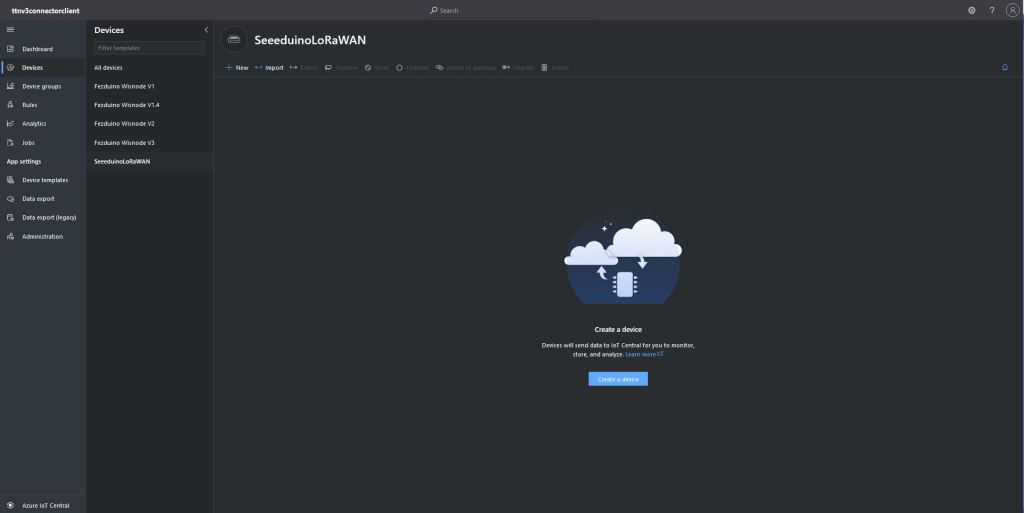

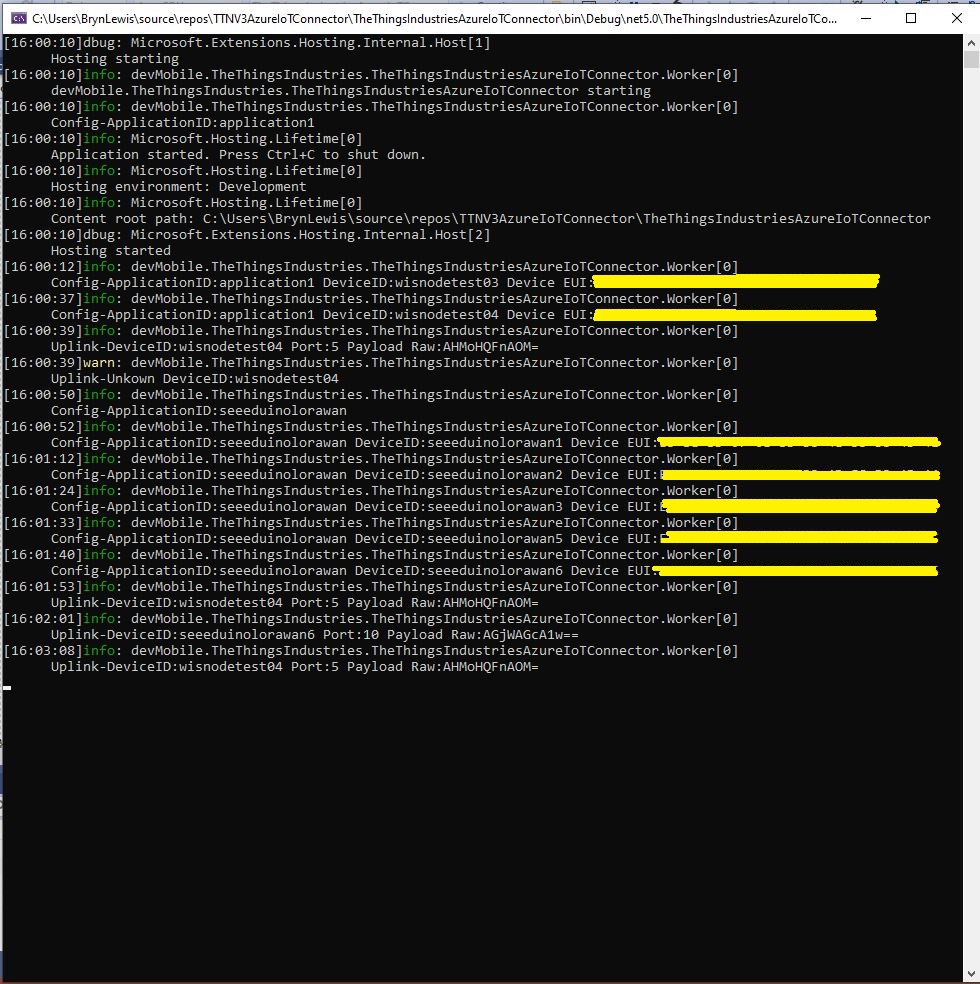

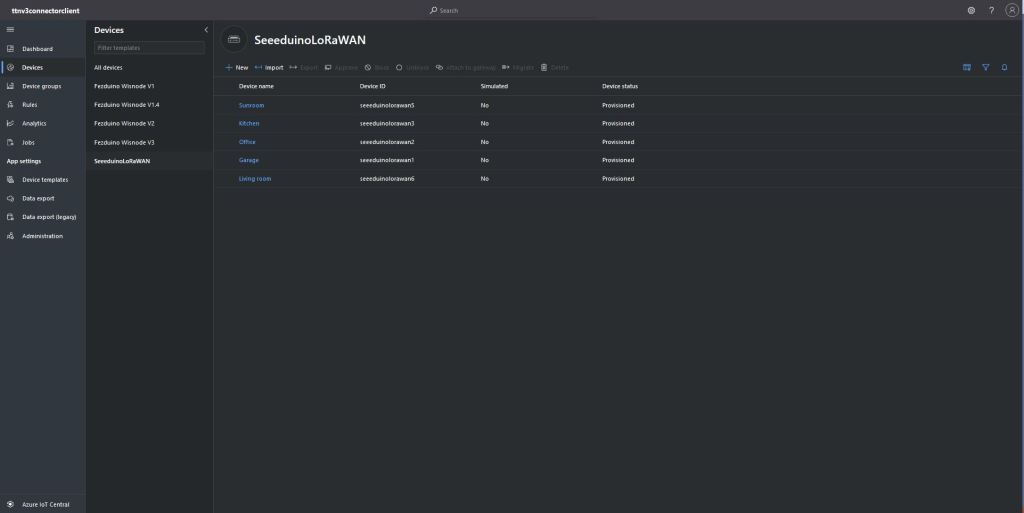

An Azure IoT Central device connection group can be configured to “automagically” provision devices.
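The DPS connect method above gives each device its own key. It derives that key from the group enrollment key by computing the HMAC-SHA256 of the registration ID (the Myriota terminal ID). The standalone sketch below isolates that derivation. The all-zero group key and the terminal ID are made-up placeholders, not real values.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Derive a per-device key: Base64(HMAC-SHA256(key: Base64Decode(groupKey), message: UTF-8 registration ID))
static string DeriveDeviceKey(string groupEnrollmentKey, string registrationId)
{
    using var hmac = new HMACSHA256(Convert.FromBase64String(groupEnrollmentKey));
    return Convert.ToBase64String(hmac.ComputeHash(Encoding.UTF8.GetBytes(registrationId)));
}

// Placeholder group key (Base64 of 32 zero bytes) and a hypothetical terminal ID
string groupKey = Convert.ToBase64String(new byte[32]);
string terminalId = "0123456789";

Console.WriteLine(DeriveDeviceKey(groupKey, terminalId));
```

Because the derivation is deterministic, the connector never has to store individual device keys. The group enrollment key and the terminal ID are enough to recreate each device's key.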