This post builds on Smartish Edge Camera – Azure Hub Part 1 using the Azure IoT Hub Device Provisioning Service (DPS) to connect to Azure IoT Central.

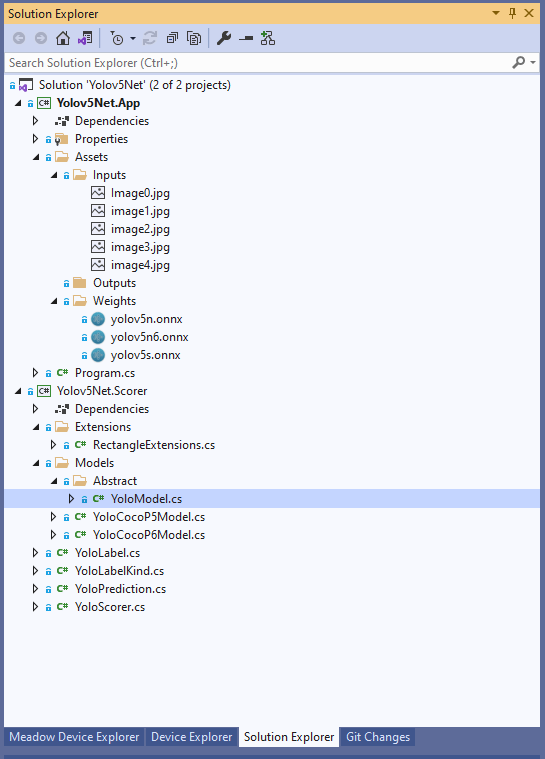

The list of object classes is in the YoloCocoP5Model.cs file in the mentalstack/yolov5-net repository.

    public override List<YoloLabel> Labels { get; set; } = new List<YoloLabel>()
    {
        new YoloLabel { Id = 1, Name = "person" },
        new YoloLabel { Id = 2, Name = "bicycle" },
        new YoloLabel { Id = 3, Name = "car" },
        new YoloLabel { Id = 4, Name = "motorcycle" },
        new YoloLabel { Id = 5, Name = "airplane" },
        new YoloLabel { Id = 6, Name = "bus" },
        new YoloLabel { Id = 7, Name = "train" },
        new YoloLabel { Id = 8, Name = "truck" },
        new YoloLabel { Id = 9, Name = "boat" },
        new YoloLabel { Id = 10, Name = "traffic light" },
        new YoloLabel { Id = 11, Name = "fire hydrant" },
        new YoloLabel { Id = 12, Name = "stop sign" },
        new YoloLabel { Id = 13, Name = "parking meter" },
        new YoloLabel { Id = 14, Name = "bench" },
        new YoloLabel { Id = 15, Name = "bird" },
        new YoloLabel { Id = 16, Name = "cat" },
        new YoloLabel { Id = 17, Name = "dog" },
        new YoloLabel { Id = 18, Name = "horse" },
        new YoloLabel { Id = 19, Name = "sheep" },
        new YoloLabel { Id = 20, Name = "cow" },
        new YoloLabel { Id = 21, Name = "elephant" },
        new YoloLabel { Id = 22, Name = "bear" },
        new YoloLabel { Id = 23, Name = "zebra" },
        new YoloLabel { Id = 24, Name = "giraffe" },
        new YoloLabel { Id = 25, Name = "backpack" },
        new YoloLabel { Id = 26, Name = "umbrella" },
        new YoloLabel { Id = 27, Name = "handbag" },
        new YoloLabel { Id = 28, Name = "tie" },
        new YoloLabel { Id = 29, Name = "suitcase" },
        new YoloLabel { Id = 30, Name = "frisbee" },
        new YoloLabel { Id = 31, Name = "skis" },
        new YoloLabel { Id = 32, Name = "snowboard" },
        new YoloLabel { Id = 33, Name = "sports ball" },
        new YoloLabel { Id = 34, Name = "kite" },
        new YoloLabel { Id = 35, Name = "baseball bat" },
        new YoloLabel { Id = 36, Name = "baseball glove" },
        new YoloLabel { Id = 37, Name = "skateboard" },
        new YoloLabel { Id = 38, Name = "surfboard" },
        new YoloLabel { Id = 39, Name = "tennis racket" },
        new YoloLabel { Id = 40, Name = "bottle" },
        new YoloLabel { Id = 41, Name = "wine glass" },
        new YoloLabel { Id = 42, Name = "cup" },
        new YoloLabel { Id = 43, Name = "fork" },
        new YoloLabel { Id = 44, Name = "knife" },
        new YoloLabel { Id = 45, Name = "spoon" },
        new YoloLabel { Id = 46, Name = "bowl" },
        new YoloLabel { Id = 47, Name = "banana" },
        new YoloLabel { Id = 48, Name = "apple" },
        new YoloLabel { Id = 49, Name = "sandwich" },
        new YoloLabel { Id = 50, Name = "orange" },
        new YoloLabel { Id = 51, Name = "broccoli" },
        new YoloLabel { Id = 52, Name = "carrot" },
        new YoloLabel { Id = 53, Name = "hot dog" },
        new YoloLabel { Id = 54, Name = "pizza" },
        new YoloLabel { Id = 55, Name = "donut" },
        new YoloLabel { Id = 56, Name = "cake" },
        new YoloLabel { Id = 57, Name = "chair" },
        new YoloLabel { Id = 58, Name = "couch" },
        new YoloLabel { Id = 59, Name = "potted plant" },
        new YoloLabel { Id = 60, Name = "bed" },
        new YoloLabel { Id = 61, Name = "dining table" },
        new YoloLabel { Id = 62, Name = "toilet" },
        new YoloLabel { Id = 63, Name = "tv" },
        new YoloLabel { Id = 64, Name = "laptop" },
        new YoloLabel { Id = 65, Name = "mouse" },
        new YoloLabel { Id = 66, Name = "remote" },
        new YoloLabel { Id = 67, Name = "keyboard" },
        new YoloLabel { Id = 68, Name = "cell phone" },
        new YoloLabel { Id = 69, Name = "microwave" },
        new YoloLabel { Id = 70, Name = "oven" },
        new YoloLabel { Id = 71, Name = "toaster" },
        new YoloLabel { Id = 72, Name = "sink" },
        new YoloLabel { Id = 73, Name = "refrigerator" },
        new YoloLabel { Id = 74, Name = "book" },
        new YoloLabel { Id = 75, Name = "clock" },
        new YoloLabel { Id = 76, Name = "vase" },
        new YoloLabel { Id = 77, Name = "scissors" },
        new YoloLabel { Id = 78, Name = "teddy bear" },
        new YoloLabel { Id = 79, Name = "hair drier" },
        new YoloLabel { Id = 80, Name = "toothbrush" }
    };

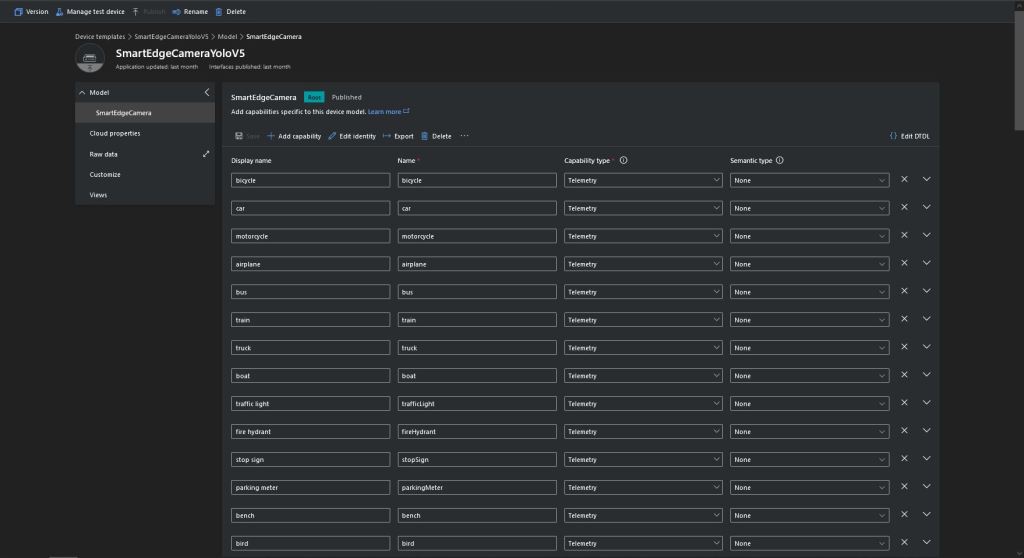

Some of the label choices seem a bit arbitrary (frisbee, surfboard) and American (fire hydrant, baseball bat, baseball glove). Configuring all 80 labels in my Azure IoT Central template was quite tedious.

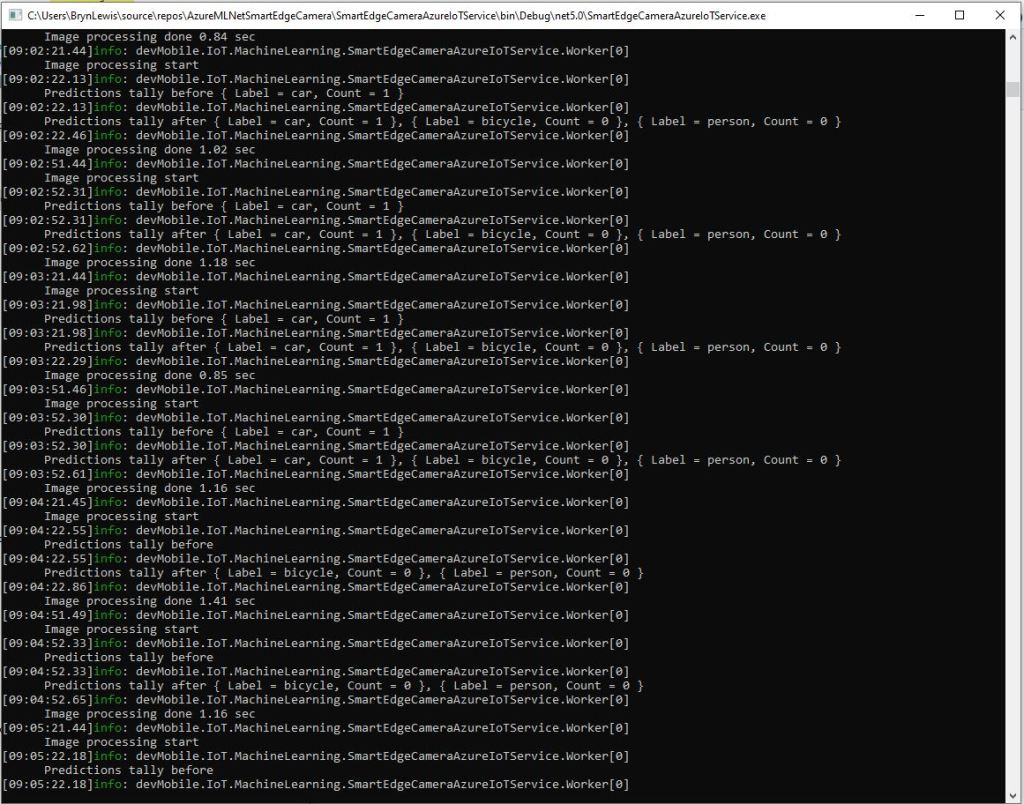

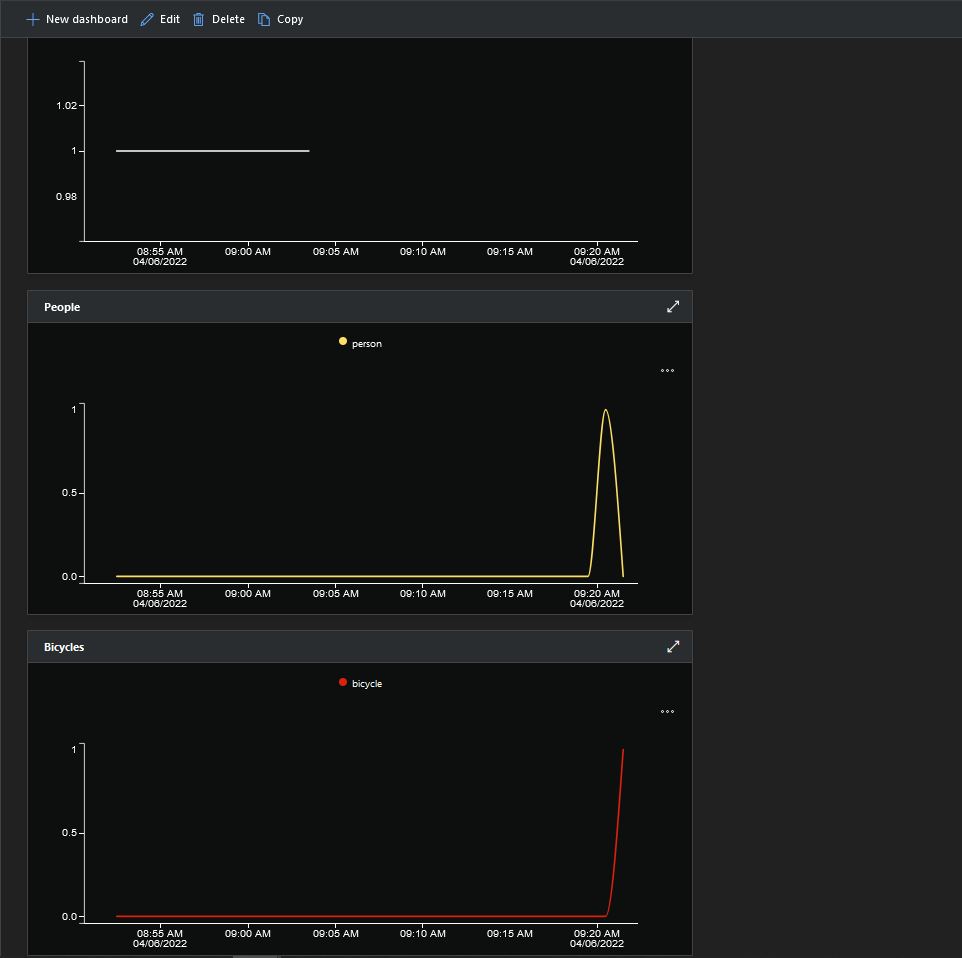

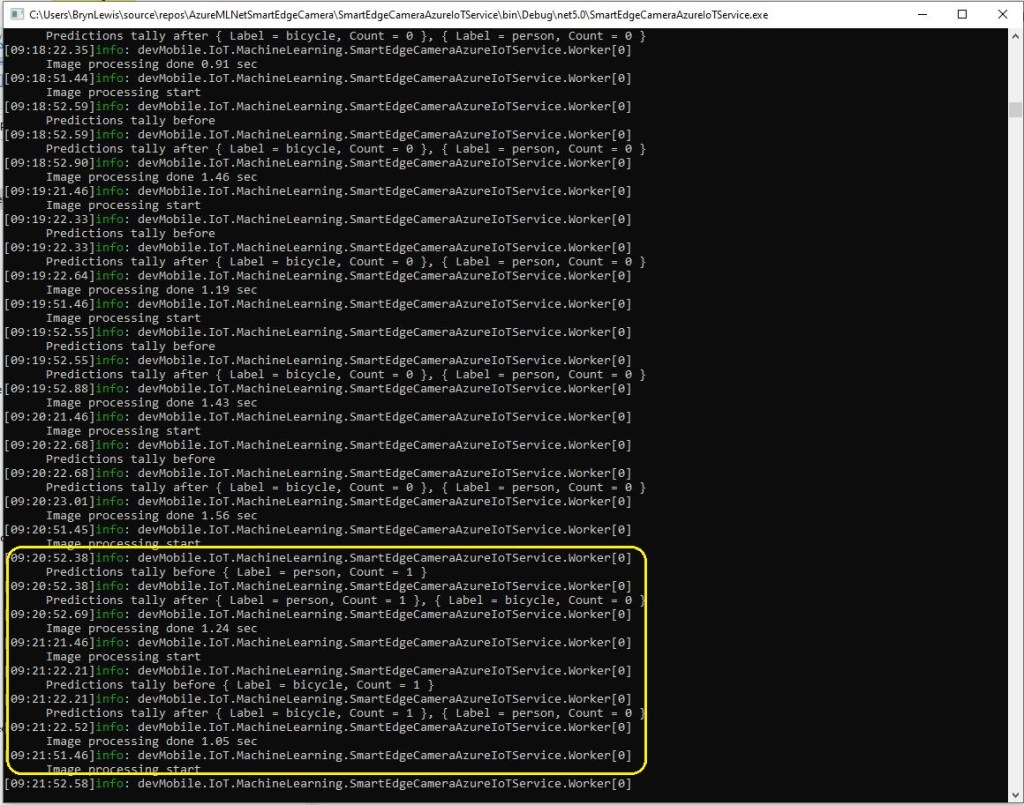

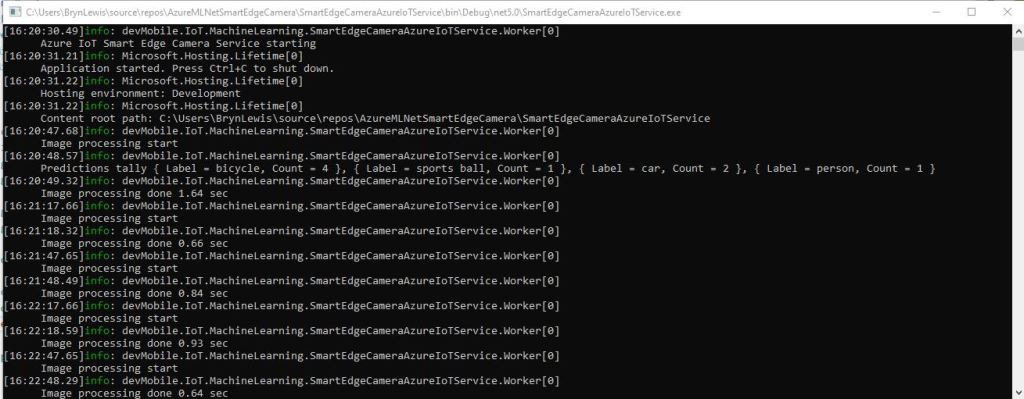

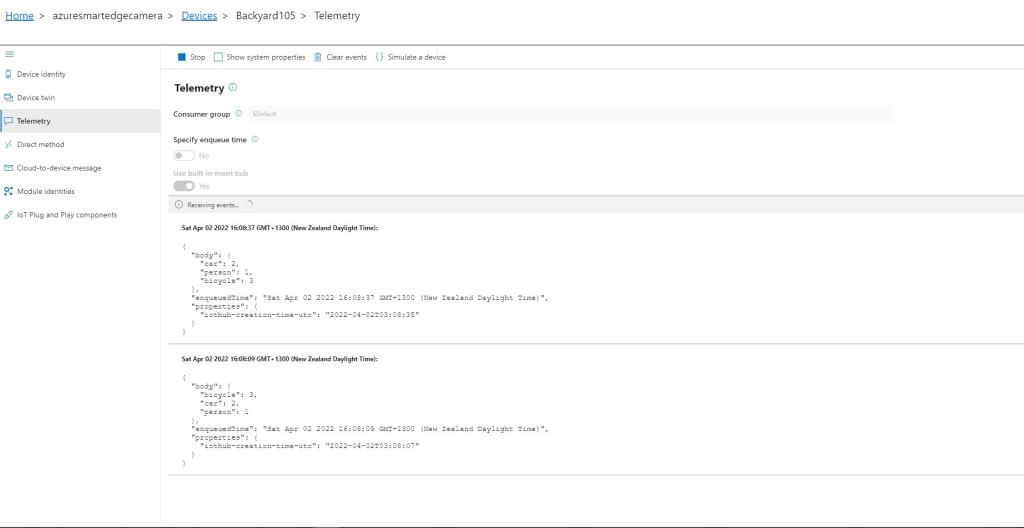

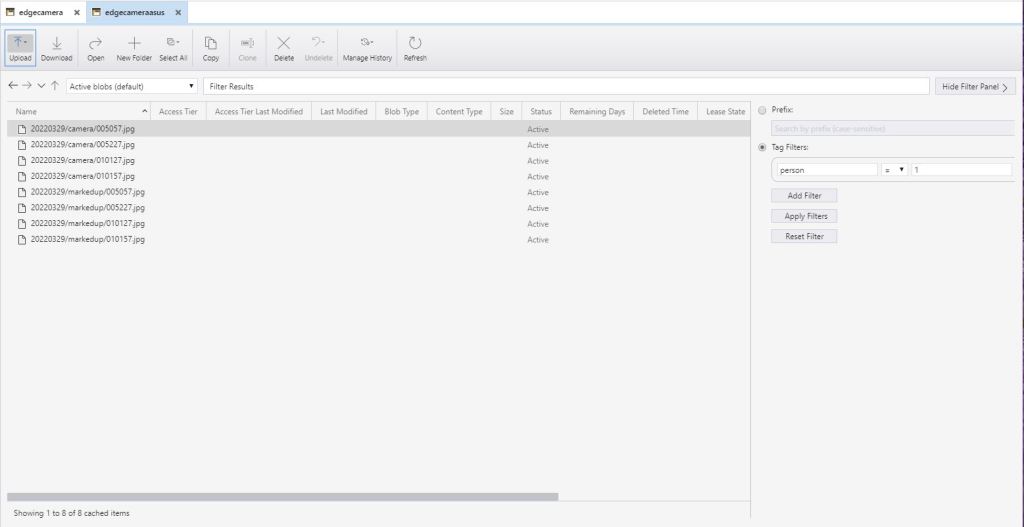

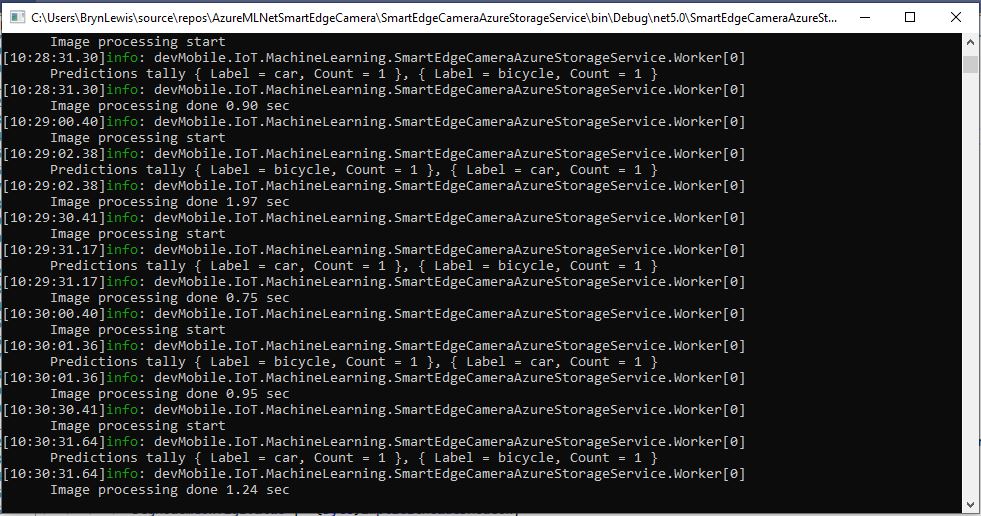

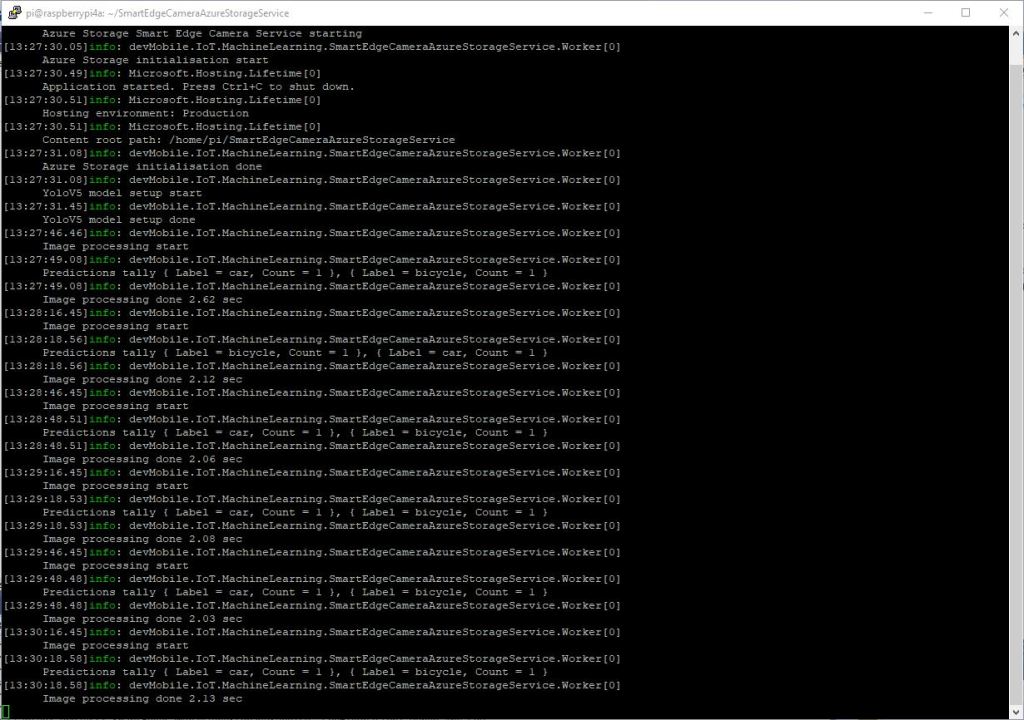

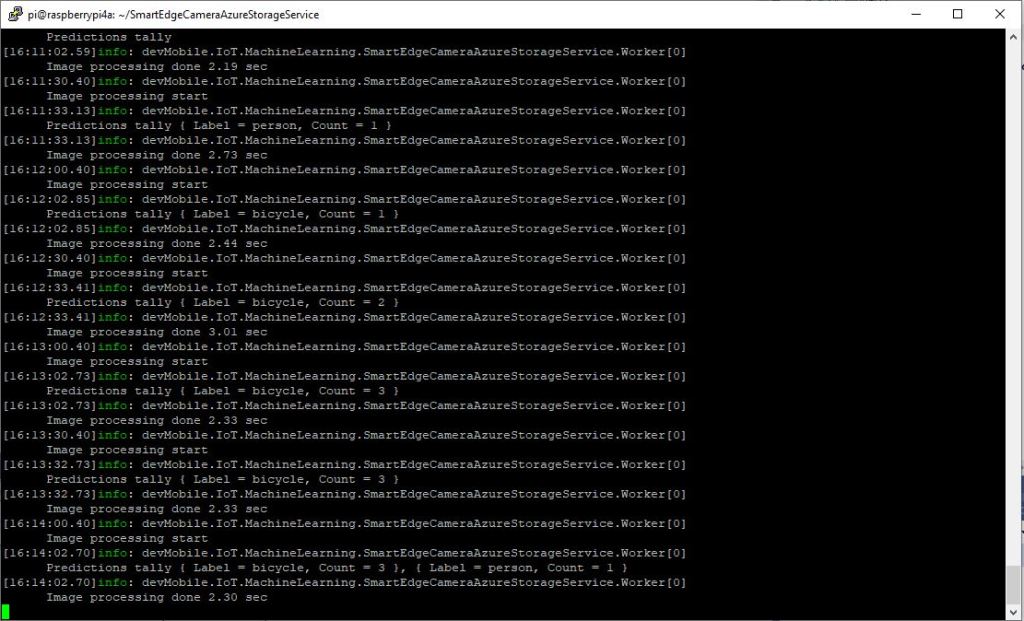

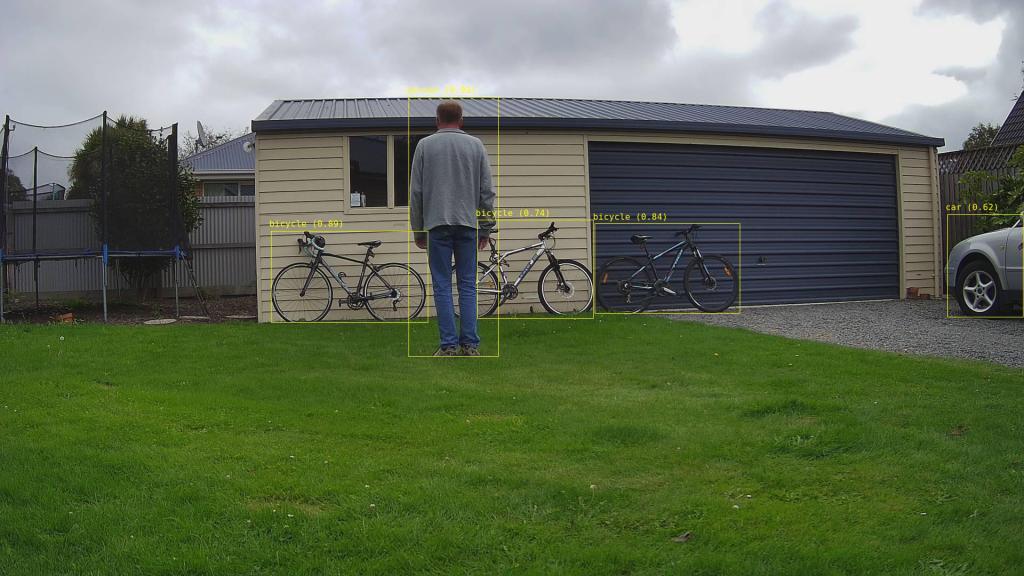

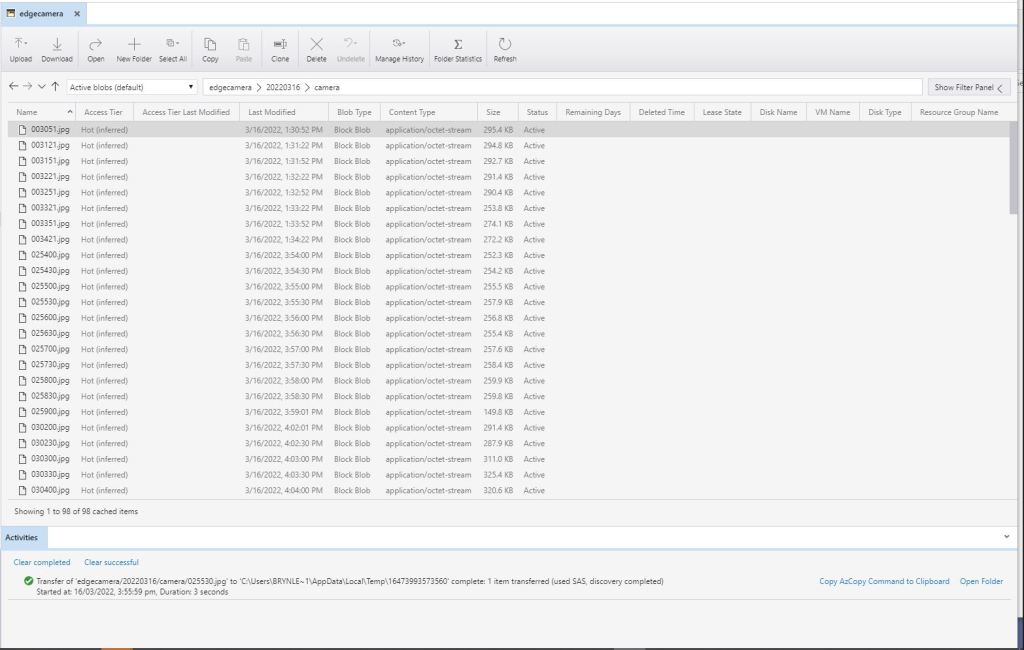

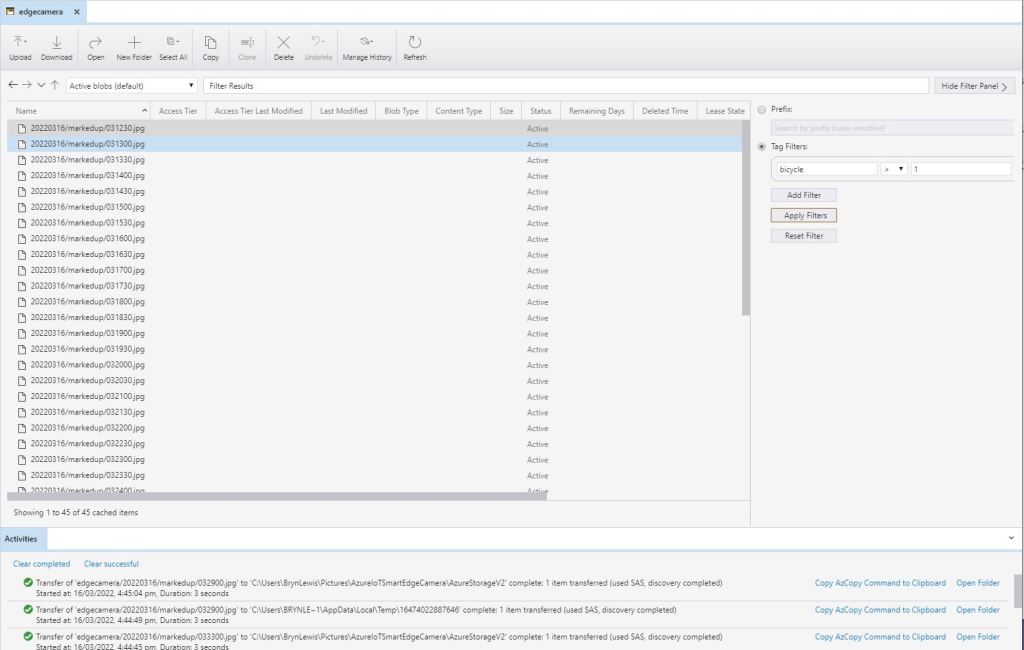

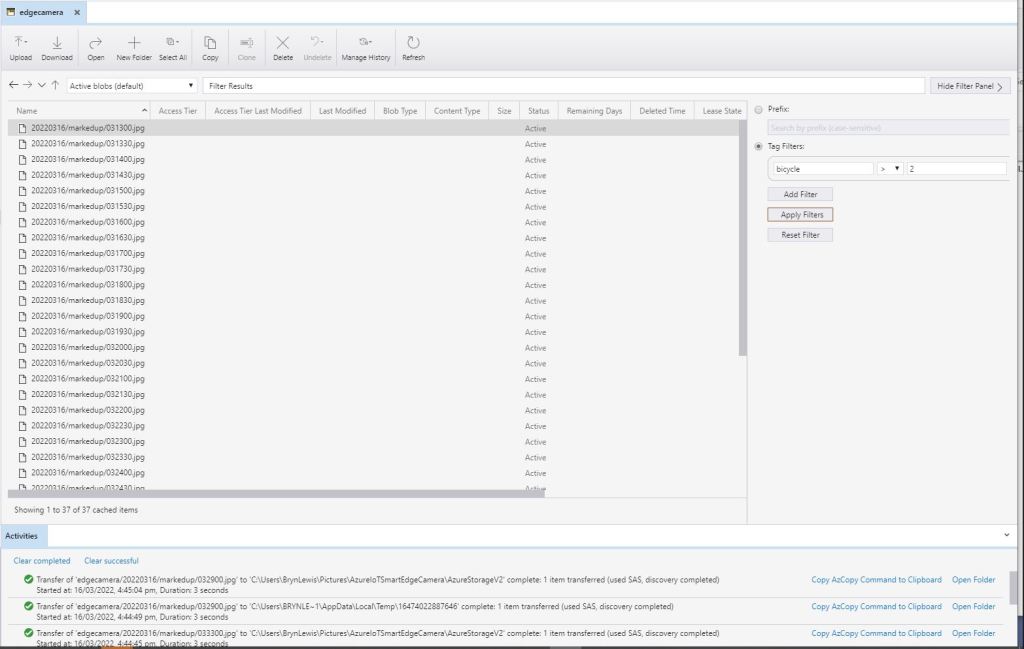

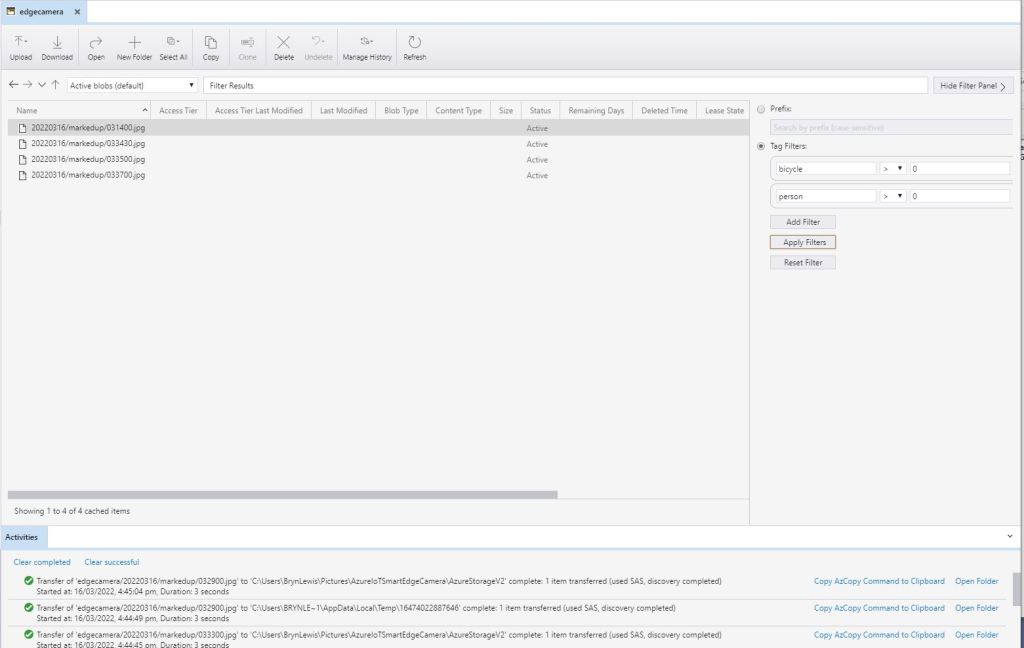

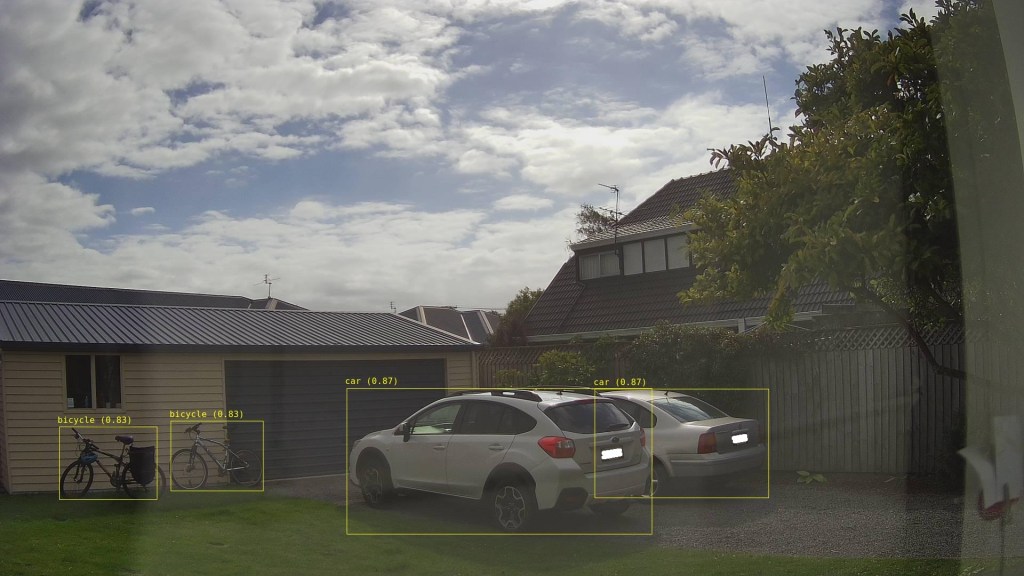

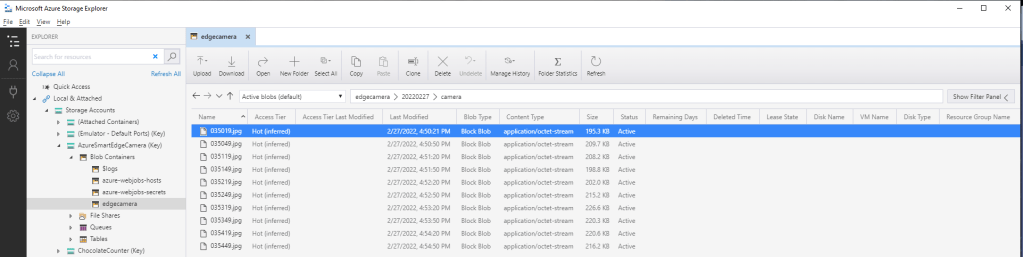

If the image contains an object with a label in the PredictionLabelsOfInterest list, a tally of each of the object classes detected in the image is sent to an Azure IoT Hub or Azure IoT Central.

    "Application": {
      "DeviceID": "",
      "ImageTimerDue": "0.00:00:15",
      "ImageTimerPeriod": "0.00:00:30",
      "ImageCameraFilepath": "ImageCamera.jpg",
      "ImageMarkedUpFilepath": "ImageMarkedup.jpg",
      "YoloV5ModelPath": "YoloV5/yolov5s.onnx",
      "PredictionScoreThreshold": 0.7,
      "PredictionLabelsOfInterest": [
        "bicycle",
        "person"
      ],
      "PredictionLabelsMinimum": [
        "bicycle",
        "car",
        "person"
      ]
    }

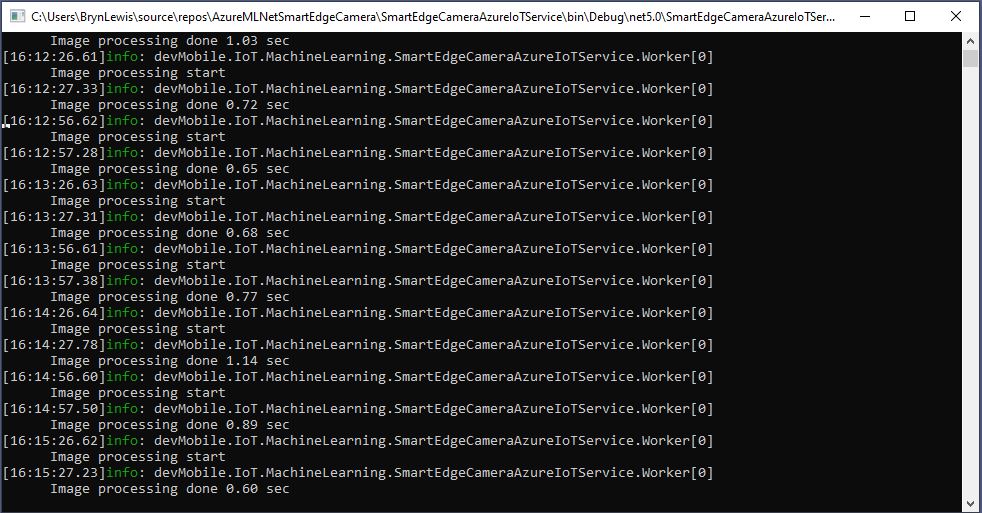

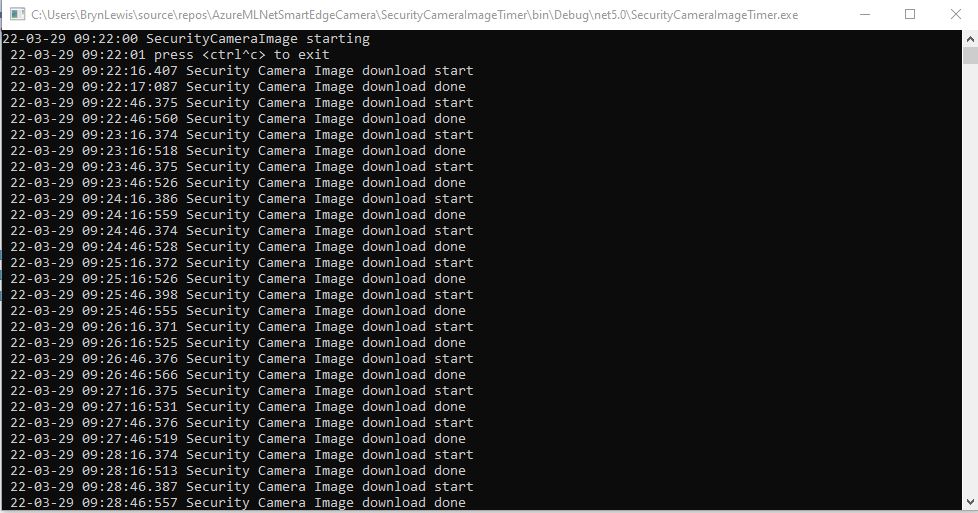

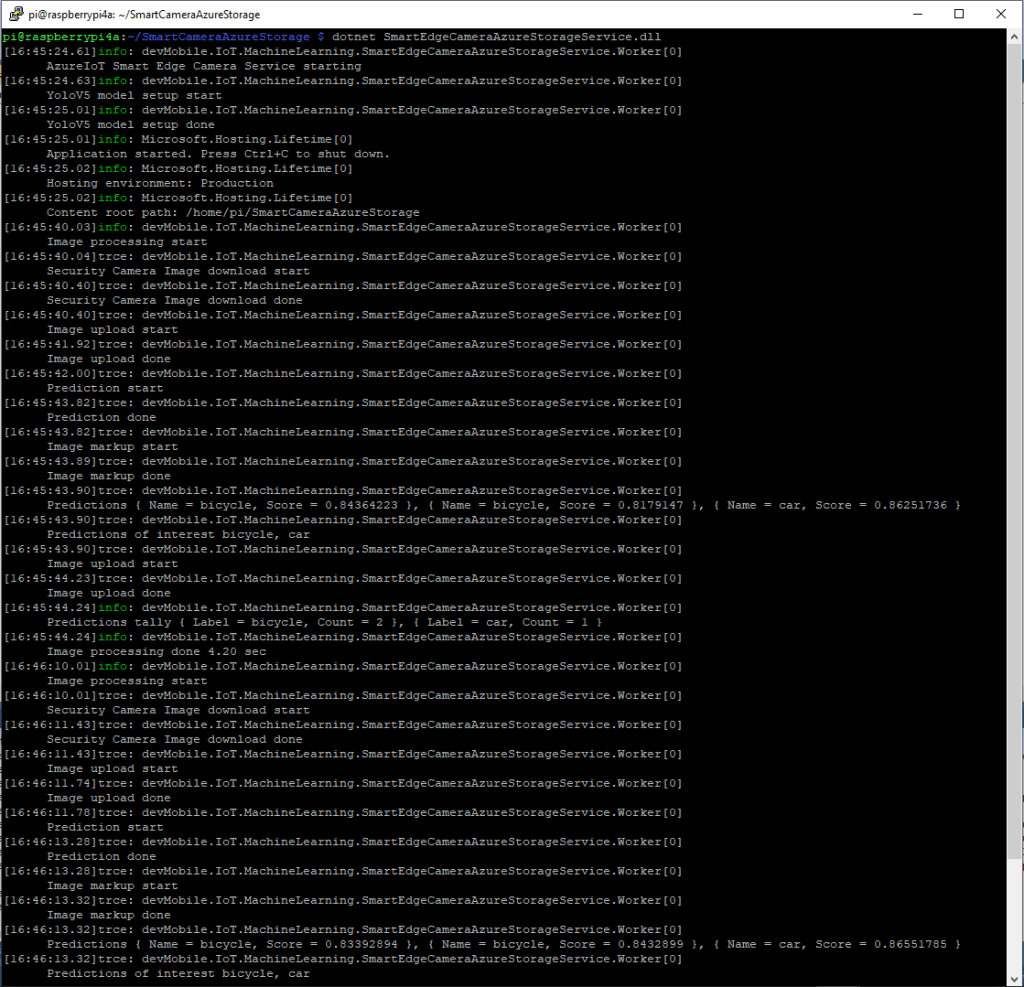

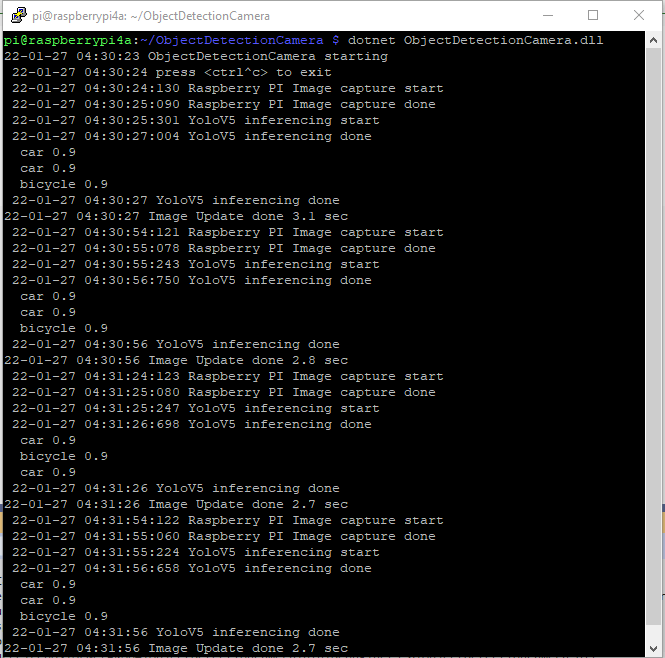

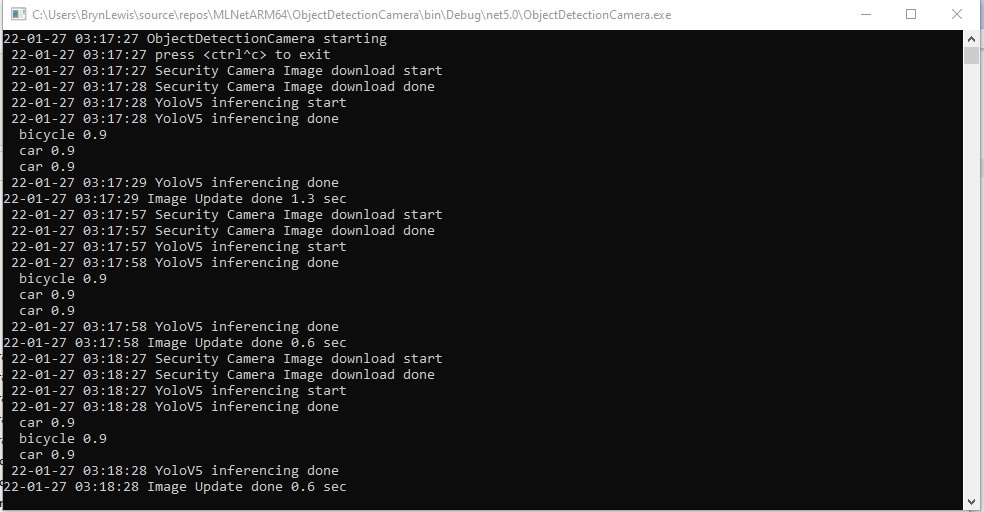

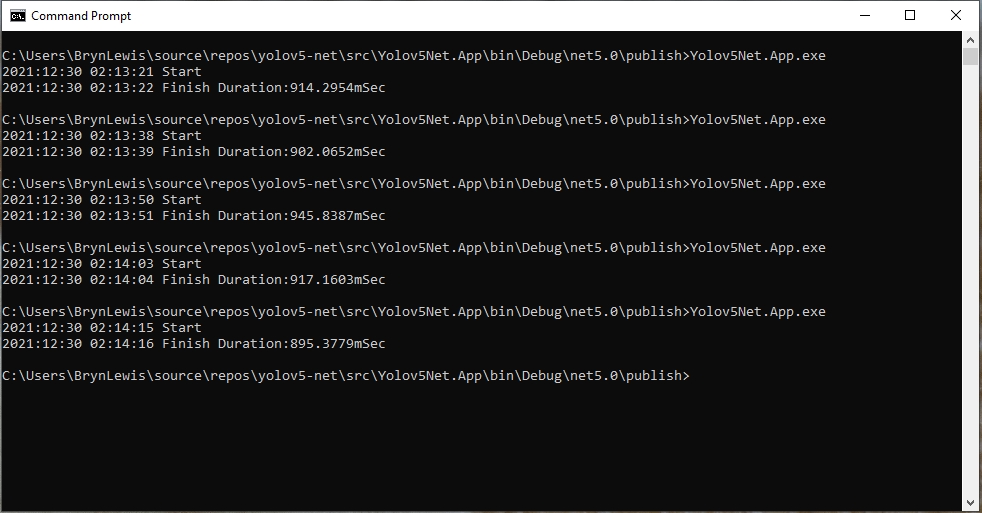

After the You Only Look Once (YOLOv5) + ML.NET + Open Neural Network Exchange (ONNX) plumbing has loaded, a timer with a configurable due time and period is started.
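As a rough sketch of how such a timer might be started (this is not the project's actual startup code), the two settings strings can be parsed with `TimeSpan.Parse` and passed to a `System.Threading.Timer`. The `OnTimer` function and the variable names here are hypothetical stand-ins; only the two setting values come from the configuration above.

```csharp
using System;
using System.Globalization;
using System.Threading;

// Values copied from the ImageTimerDue and ImageTimerPeriod settings (d.hh:mm:ss).
TimeSpan imageTimerDue = TimeSpan.Parse("0.00:00:15", CultureInfo.InvariantCulture);
TimeSpan imageTimerPeriod = TimeSpan.Parse("0.00:00:30", CultureInfo.InvariantCulture);

// Hypothetical stand-in for the real image processing callback.
void OnTimer(object? state) => Console.WriteLine($"Timer fired at {DateTime.UtcNow:O}");

// The first callback fires after the due time, then repeats every period.
using var imageUpdateTimer = new Timer(OnTimer, null, imageTimerDue, imageTimerPeriod);

Console.WriteLine($"Due {imageTimerDue.TotalSeconds} sec, period {imageTimerPeriod.TotalSeconds} sec");
```

In a real application the process has to stay alive (for example by waiting on a cancellation token) for the timer to keep firing; this sketch exits straight away.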

    private async void ImageUpdateTimerCallback(object state)
    {
        DateTime requestAtUtc = DateTime.UtcNow;

        // Just in case - stop code being called while photo already in progress
        if (_cameraBusy)
        {
            return;
        }
        _cameraBusy = true;

        _logger.LogInformation("Image processing start");

        try
        {
    #if CAMERA_RASPBERRY_PI
            RaspberryPIImageCapture();
    #endif
    #if CAMERA_SECURITY
            SecurityCameraImageCapture();
    #endif
            List<YoloPrediction> predictions;

            using (Image image = Image.FromFile(_applicationSettings.ImageCameraFilepath))
            {
                _logger.LogTrace("Prediction start");
                predictions = _scorer.Predict(image);
                _logger.LogTrace("Prediction done");

                OutputImageMarkup(image, predictions, _applicationSettings.ImageMarkedUpFilepath);
            }

            if (_logger.IsEnabled(LogLevel.Trace))
            {
                _logger.LogTrace("Predictions {0}", predictions.Select(p => new { p.Label.Name, p.Score }));
            }

            var predictionsValid = predictions.Where(p => p.Score >= _applicationSettings.PredictionScoreThreshold).Select(p => p.Label.Name);

            // Count up the number of each class detected in the image
            var predictionsTally = predictionsValid.GroupBy(p => p)
                    .Select(p => new
                    {
                        Label = p.Key,
                        Count = p.Count()
                    });

            if (_logger.IsEnabled(LogLevel.Information))
            {
                _logger.LogInformation("Predictions tally before {0}", predictionsTally.ToList());
            }

            // Add in any missing counts the cloudy side is expecting
            if (_applicationSettings.PredictionLabelsMinimum != null)
            {
                foreach (String label in _applicationSettings.PredictionLabelsMinimum)
                {
                    if (!predictionsTally.Any(c => c.Label == label))
                    {
                        predictionsTally = predictionsTally.Append(new { Label = label, Count = 0 });
                    }
                }
            }

            if (_logger.IsEnabled(LogLevel.Information))
            {
                _logger.LogInformation("Predictions tally after {0}", predictionsTally.ToList());
            }

            if ((_applicationSettings.PredictionLabelsOfInterest == null) || (predictionsValid.Select(c => c).Intersect(_applicationSettings.PredictionLabelsOfInterest, StringComparer.OrdinalIgnoreCase).Any()))
            {
                JObject telemetryDataPoint = new JObject();

                foreach (var predictionTally in predictionsTally)
                {
                    telemetryDataPoint.Add(predictionTally.Label, predictionTally.Count);
                }

                using (Message message = new Message(Encoding.ASCII.GetBytes(JsonConvert.SerializeObject(telemetryDataPoint))))
                {
                    message.Properties.Add("iothub-creation-time-utc", requestAtUtc.ToString("s", CultureInfo.InvariantCulture));

                    await _deviceClient.SendEventAsync(message);
                }
            }
        }
        catch (Exception ex)
        {
            _logger.LogError(ex, "Camera image download, post processing, or telemetry failed");
        }
        finally
        {
            _cameraBusy = false;
        }

        TimeSpan duration = DateTime.UtcNow - requestAtUtc;

        _logger.LogInformation("Image processing done {0:f2} sec", duration.TotalSeconds);
    }

Using some Language Integrated Query (LINQ) code, any predictions with a score below PredictionScoreThreshold are discarded. Some more LINQ code then counts the instances of each class.
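To make the filter-then-tally step concrete, here is a small standalone sketch with made-up data. The `(Label, Score)` tuples are a hypothetical stand-in for `YoloPrediction`, and the tally is held in a dictionary rather than the anonymous type used in the callback.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Made-up predictions standing in for the YoloPrediction list.
var predictions = new List<(string Label, float Score)>
{
    ("person", 0.91f), ("person", 0.84f), ("bicycle", 0.78f), ("car", 0.42f),
};
double scoreThreshold = 0.7; // PredictionScoreThreshold from the settings

// Discard low-scoring predictions and keep only the label names.
var validLabels = predictions
    .Where(p => p.Score >= scoreThreshold)
    .Select(p => p.Label)
    .ToList();

// Count the instances of each remaining class.
var tally = validLabels
    .GroupBy(label => label)
    .ToDictionary(g => g.Key, g => g.Count());

foreach (var (label, count) in tally)
{
    Console.WriteLine($"{label}: {count}");
}
```

With this sample data the car prediction falls below the threshold, so only person and bicycle appear in the tally.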

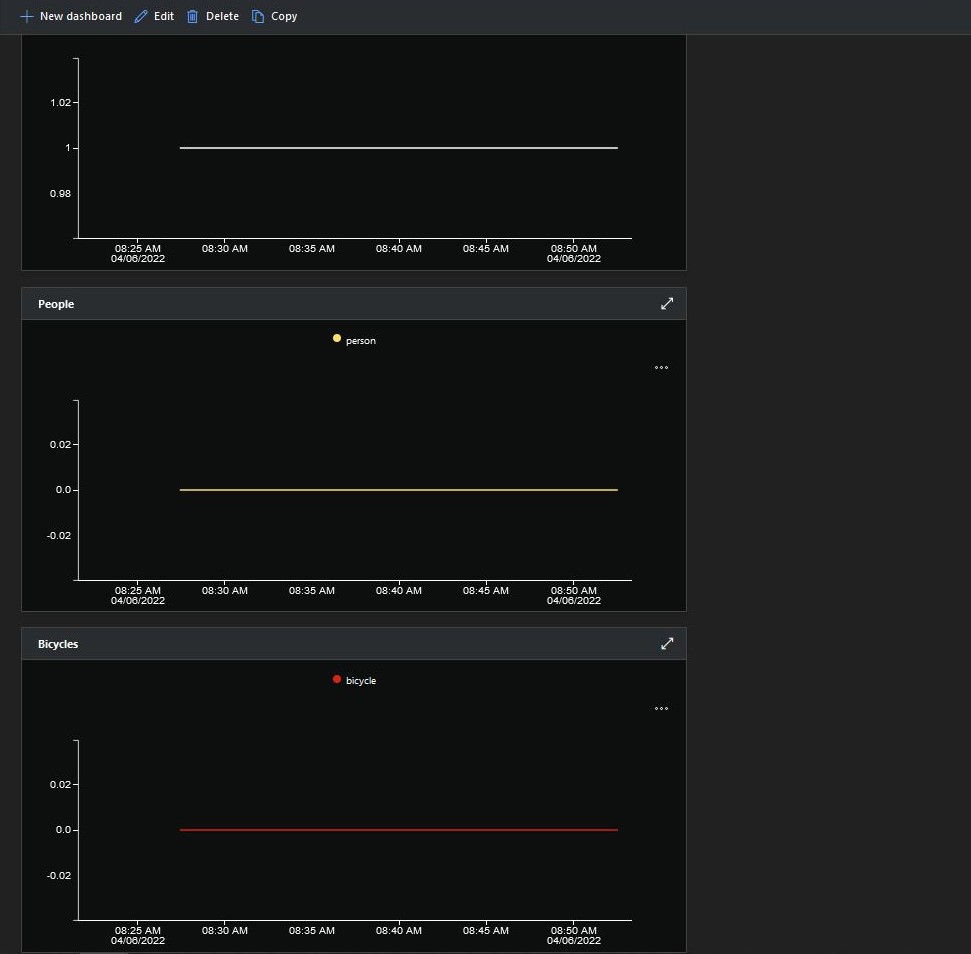

The optional PredictionLabelsMinimum list is then used to add any missing labels to predictionsTally with a count of 0, so there are no missing data points. This is specifically for the Azure IoT Central dashboard, so the graph lines are continuous.
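The zero-fill can be illustrated on its own with a dictionary-based tally. This is a simplified sketch with made-up values; the callback itself appends anonymous objects to an enumerable instead.

```csharp
using System;
using System.Collections.Generic;

// Made-up tally: only people were detected in this image.
var tally = new Dictionary<string, int> { ["person"] = 2 };

// PredictionLabelsMinimum from the settings (optional, so it may be null).
string[]? labelsMinimum = { "bicycle", "car", "person" };

if (labelsMinimum != null)
{
    foreach (string label in labelsMinimum)
    {
        // TryAdd leaves an existing count untouched and adds 0 for missing labels.
        tally.TryAdd(label, 0);
    }
}

foreach (var (label, count) in tally)
{
    Console.WriteLine($"{label}: {count}");
}
```

Every message then carries a value for bicycle, car, and person, even when none were detected.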

If any of the valid prediction labels is in the PredictionLabelsOfInterest list (if PredictionLabelsOfInterest is not set, any label is a label of interest), the prediction class counts are used to populate a Newtonsoft JObject. This is serialised to generate a JavaScript Object Notation (JSON) Azure IoT Hub message payload.
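The check and payload construction can be sketched in isolation. To keep the example self-contained it swaps in `System.Text.Json.Nodes.JsonObject` for the Newtonsoft `JObject` used in the callback, treats any label with a non-zero count as detected, and uses made-up tally values.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json.Nodes;

// Made-up tally after the minimum labels have been added.
var tally = new Dictionary<string, int> { ["bicycle"] = 1, ["car"] = 0, ["person"] = 2 };

// PredictionLabelsOfInterest from the settings (optional, so it may be null).
string[]? labelsOfInterest = { "bicycle", "person" };

// Assumption for this sketch: a label counts as detected when its count is above zero.
var detectedLabels = tally.Where(t => t.Value > 0).Select(t => t.Key);

bool send = labelsOfInterest == null ||
    detectedLabels.Intersect(labelsOfInterest, StringComparer.OrdinalIgnoreCase).Any();

string json = string.Empty;
if (send)
{
    // One JSON property per label, with the count as its value.
    var payload = new JsonObject();
    foreach (var (label, count) in tally)
    {
        payload[label] = count;
    }
    json = payload.ToJsonString();
    Console.WriteLine(json);
}
```

If the image contained only cars, the intersection would be empty and nothing would be sent.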

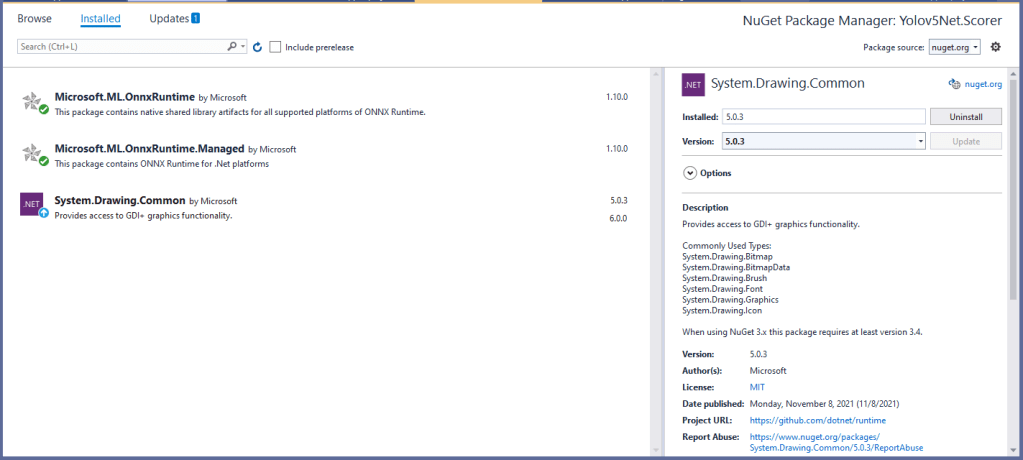

The mentalstack/yolov5-net repository and NuGet package have been incredibly useful, and the MentalStack team has done a marvelous job building and supporting this project.

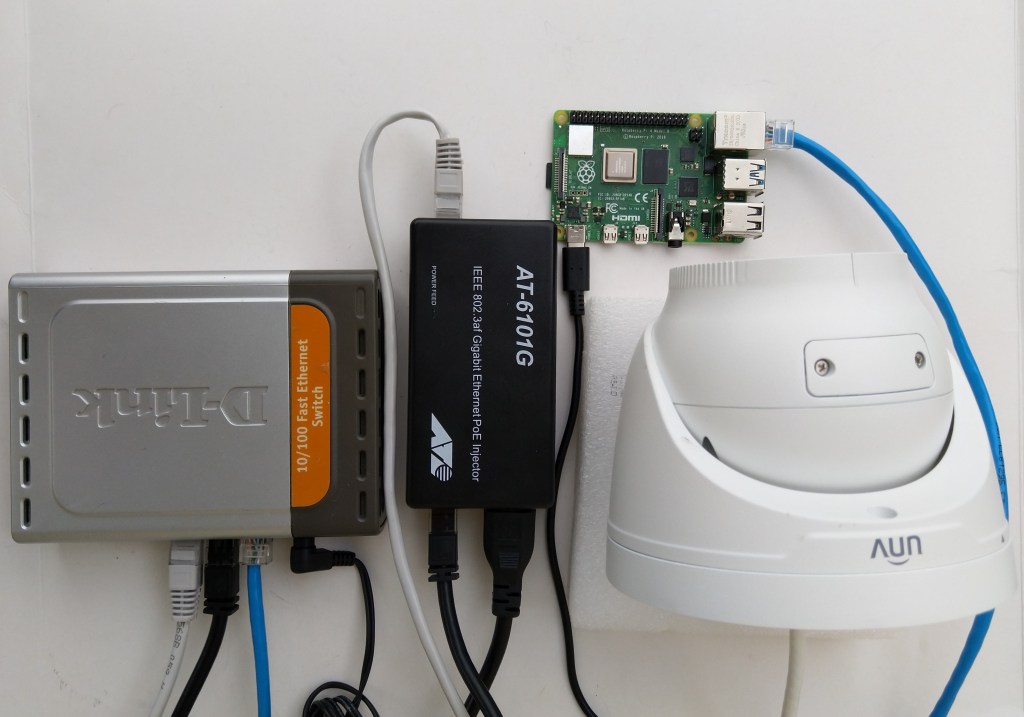

The test rig consisted of a Unv ADZK-10 Security Camera, Power over Ethernet (PoE), and my HP Prodesk 400G4 DM (i7-8700T).