Introduction

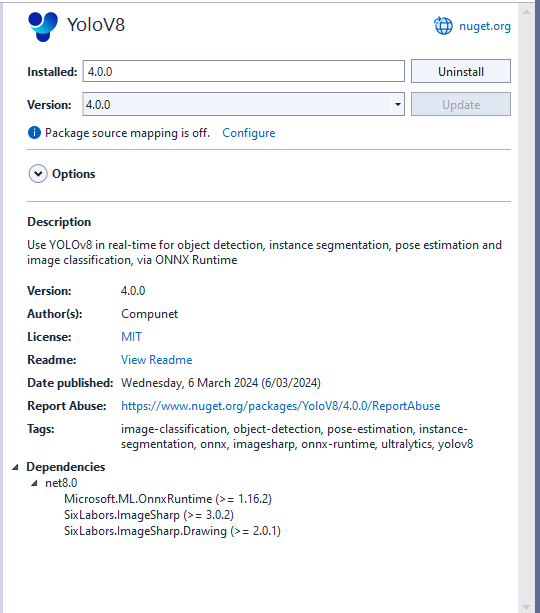

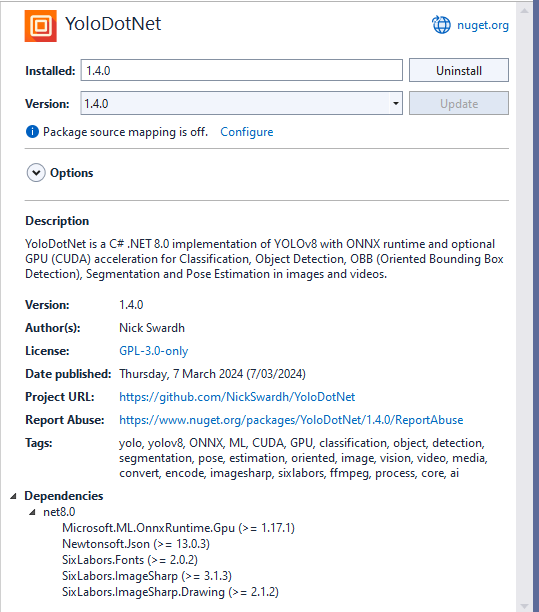

For this post I used Copilot prompts to generate code that uses Ultralytics YoloV8 and YoloDotNet by NickSwardh for object detection, object classification, and pose estimation.

Object Detection

    static void Main(string[] args)
    {
        string modelPath = "path/to/yolo_model.onnx"; // Update with actual model path
        string imagePath = "path/to/image.jpg"; // Update with actual image path

        // Load YOLO model
        var yolo = new YoloModel(modelPath);

        // Load image
        using Bitmap image = new Bitmap(imagePath);

        // Run classification
        var results = yolo.Predict(image);

        // Display results
        foreach (var result in results)
        {
            Console.WriteLine($"Detected: {result.Label} with confidence {result.Confidence:P}");
        }
    }

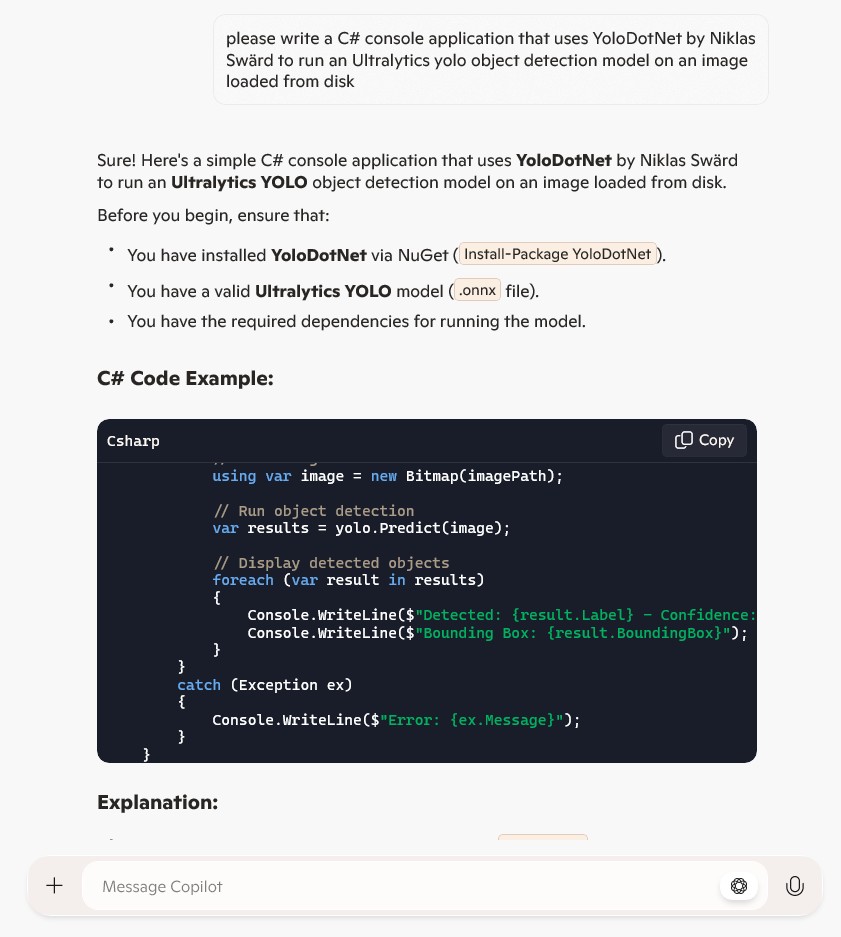

The code didn’t compile, so I tried a different prompt.

This version didn’t compile and looked like it was based on https://github.com/techwingslab/yolov5-net. The generated code also added checks that the model and image files exist.

    static void Main(string[] args)
    {
        string modelPath = "yolov8.onnx"; // Replace with your actual model path
        string imagePath = "image.jpg"; // Replace with your actual image path

        if (!File.Exists(modelPath))
        {
            Console.WriteLine("Error: Model file not found!");
            return;
        }

        if (!File.Exists(imagePath))
        {
            Console.WriteLine("Error: Image file not found!");
            return;
        }

        try
        {
            // Load the YOLO model
            using var yolo = new Yolo(modelPath);

            // Load image from disk
            using var image = new Bitmap(imagePath);

            // Run object detection
            var results = yolo.Predict(image);

            // Display detected objects
            foreach (var result in results)
            {
                Console.WriteLine($"Detected: {result.Label} - Confidence: {result.Confidence}");
                Console.WriteLine($"Bounding Box: {result.BoundingBox}");
            }
        }
        catch (Exception ex)
        {
            Console.WriteLine($"Error: {ex.Message}");
        }
    }

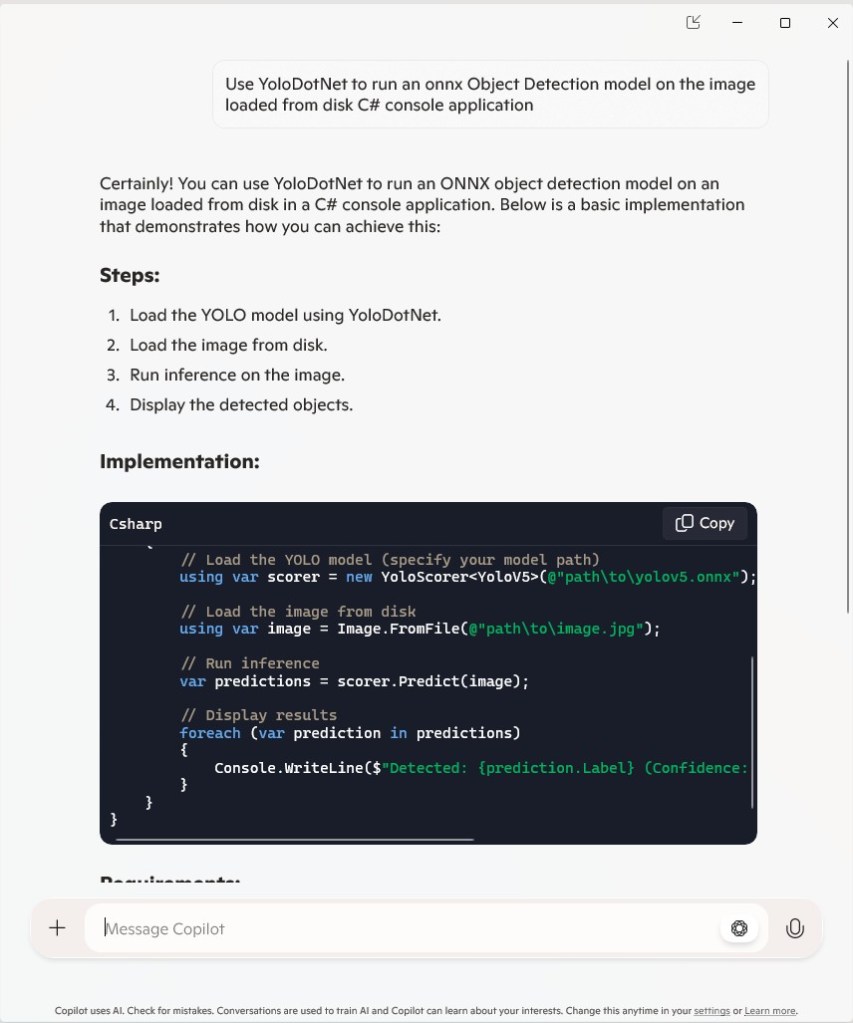

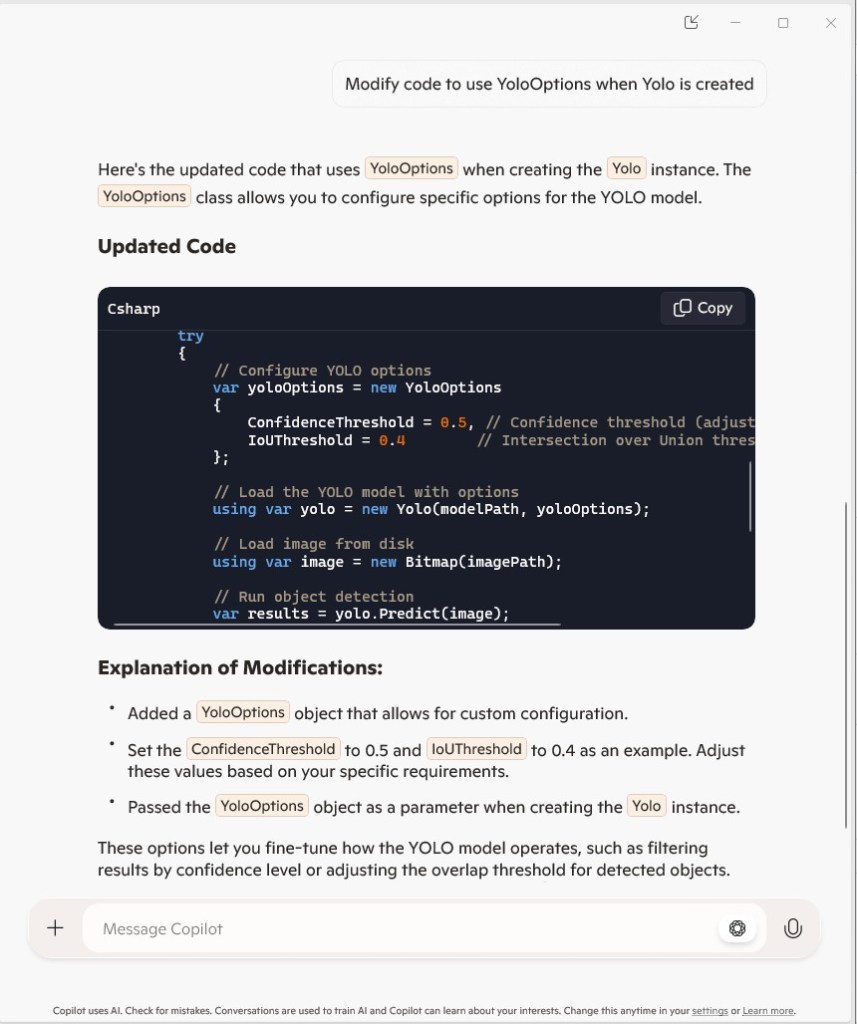

This didn’t compile, so I tried again.

    static void Main(string[] args)
    {
        string modelPath = "yolov8.onnx"; // Replace with your actual model path
        string imagePath = "image.jpg"; // Replace with your actual image path

        ...

        try
        {
            var yoloOptions = new YoloOptions
            {
                ConfidenceThreshold = 0.5, // Confidence threshold (adjust as needed)
                IoUThreshold = 0.4 // Intersection over Union threshold
            };

            // Load the YOLO model
            //using var yolo = new Yolo(modelPath);
            using var yolo = new Yolo(yoloOptions);

            // Load image from disk
            using var image = new Bitmap(imagePath);

            // Run object detection
            //var results = yolo.Predict(image);
            var results = yolo.RunObjectDetection(image);

            // Display detected objects
            foreach (var result in results)
            {
                Console.WriteLine($"Detected: {result.Label} - Confidence: {result.Confidence}");
                Console.WriteLine($"Bounding Box: {result.BoundingBox}");
            }
        }
        catch (Exception ex)
        {
            Console.WriteLine($"Error: {ex.Message}");
        }
    }

This didn’t compile either, so I tried a different approach.

I manually modified the code, removing ConfidenceThreshold and IoUThreshold, then used IntelliSense to “discover” and add the ModelType and OnnxModel properties.

    static void Main(string[] args)
    {
        string modelPath = "yolov8.onnx"; // Replace with your actual model path
        string imagePath = "image.jpg"; // Replace with your actual image path

        ...

        try
        {
            var yoloOptions = new YoloOptions
            {
                ModelType = ModelType.ObjectDetection,
                OnnxModel = modelPath
            };

            // Load the YOLO model
            //using var yolo = new Yolo(modelPath);
            //using var yolo = new Yolo(yoloOptions);
            //using var yolo = new Yolo(modelPath, yoloOptions);
            using var yolo = new Yolo(yoloOptions);

            // Load image using SkiaSharp
            using var skBitmap = SKBitmap.Decode(imagePath);

            // Convert SKBitmap to a format YOLO can process
            using var skImage = SKImage.FromBitmap(skBitmap);
            using var skData = skImage.Encode(SKEncodedImageFormat.Jpeg, 100);
            using var memoryStream = new MemoryStream(skData.ToArray());

            //var results = yolo.Predict(memoryStream);
            var results = yolo.RunObbDetection(skImage);

            // Display detected objects
            foreach (var result in results)
            {
                Console.WriteLine($"Detected: {result.Label} - Confidence: {result.Confidence}");
                Console.WriteLine($"Bounding Box: {result.BoundingBox}");
            }
        }
        catch (Exception ex)
        {
            Console.WriteLine($"Error: {ex.Message}");
        }
    }

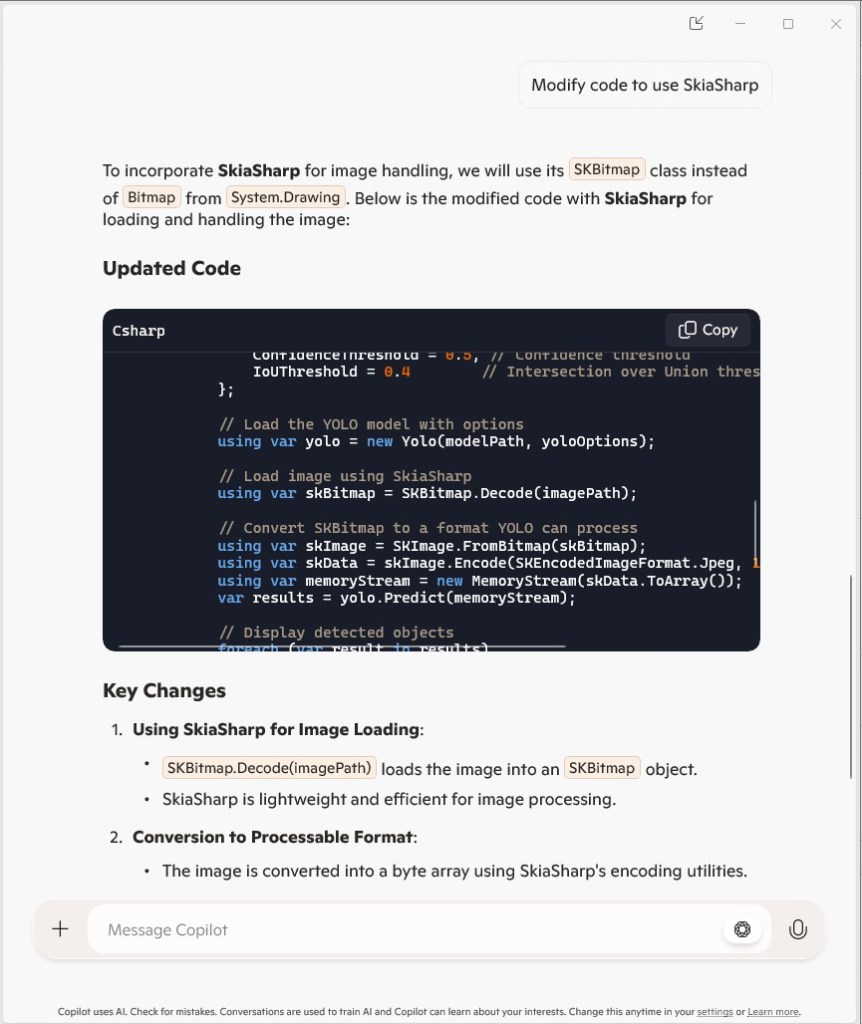

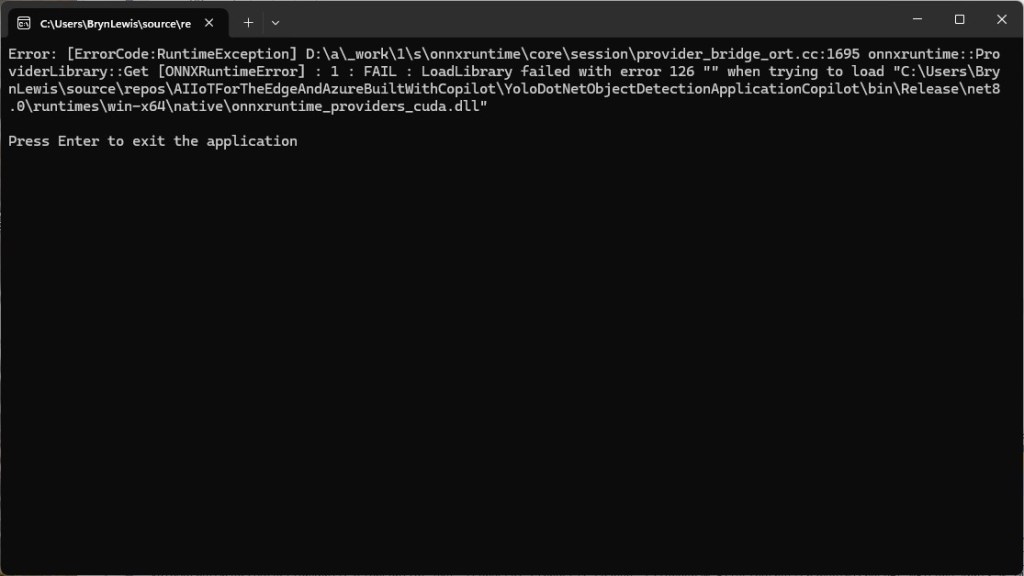

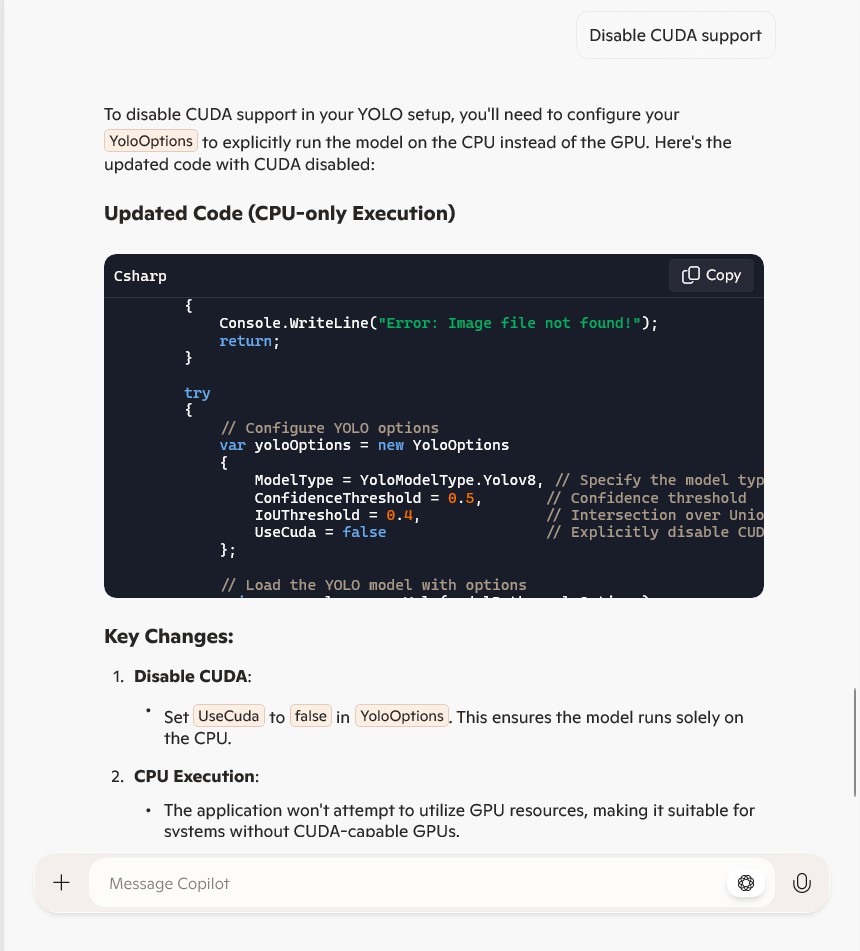

The code compiled and ran but didn’t work because YoloDotNet assumed that my computer had CUDA support, so I set Cuda = false in the options.

    static void Main(string[] args)
    {
        string modelPath = "yolov8.onnx"; // Replace with your actual model path
        string imagePath = "image.jpg"; // Replace with your actual image path

        ...

        try
        {
            var yoloOptions = new YoloOptions
            {
                ModelType = ModelType.ObjectDetection,
                OnnxModel = modelPath,
                Cuda = false
            };

            // Load the YOLO model
            //using var yolo = new Yolo(modelPath);
            //using var yolo = new Yolo(yoloOptions);
            //using var yolo = new Yolo(modelPath, yoloOptions);
            using var yolo = new Yolo(yoloOptions);

            // Load image using SkiaSharp
            using var skBitmap = SKBitmap.Decode(imagePath);

            // Convert SKBitmap to a format YOLO can process
            using var skImage = SKImage.FromBitmap(skBitmap);
            using var skData = skImage.Encode(SKEncodedImageFormat.Jpeg, 100);
            using var memoryStream = new MemoryStream(skData.ToArray());

            //var results = yolo.Predict(memoryStream);
            var results = yolo.RunObbDetection(skImage);

            // Display detected objects
            foreach (var result in results)
            {
                Console.WriteLine($"Detected: {result.Label} - Confidence: {result.Confidence}");
                Console.WriteLine($"Bounding Box: {result.BoundingBox}");
            }
        }
        catch (Exception ex)
        {
            Console.WriteLine($"Error: {ex.Message}");
        }
    }

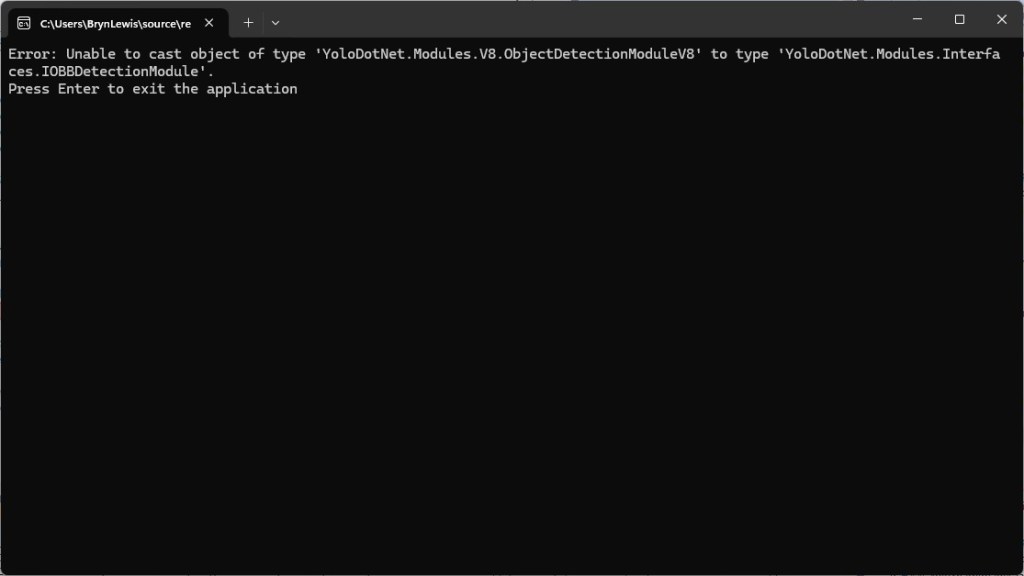

The code compiled and ran but didn’t work because I had accidentally called RunObbDetection (oriented bounding box detection) rather than RunObjectDetection.
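
For context, an oriented bounding box adds a rotation angle to the usual box, and OBB models are trained and exported separately from plain detection models, so calling the OBB method against a detection model is unlikely to give sensible results. Below is a minimal sketch of the geometric difference. The OrientedBox and AxisAlignedBox types are hypothetical and not part of YoloDotNet, and the sketch assumes .NET 6 or later for record structs.

```csharp
using System;
using System.Linq;

// Hypothetical types for illustration only; these are not YoloDotNet classes.
public readonly record struct AxisAlignedBox(float Left, float Top, float Right, float Bottom);

public readonly record struct OrientedBox(float CenterX, float CenterY, float Width, float Height, float AngleRadians)
{
    // Rotate each corner offset about the centre by AngleRadians.
    public (float X, float Y)[] Corners()
    {
        float cos = MathF.Cos(AngleRadians);
        float sin = MathF.Sin(AngleRadians);
        float halfWidth = Width / 2f;
        float halfHeight = Height / 2f;

        var offsets = new (float X, float Y)[]
        {
            (-halfWidth, -halfHeight), (halfWidth, -halfHeight),
            (halfWidth, halfHeight), (-halfWidth, halfHeight),
        };

        var corners = new (float X, float Y)[offsets.Length];
        for (int i = 0; i < offsets.Length; i++)
        {
            var (dx, dy) = offsets[i];
            corners[i] = (CenterX + dx * cos - dy * sin, CenterY + dx * sin + dy * cos);
        }
        return corners;
    }

    // Smallest axis-aligned box that contains the rotated box.
    public AxisAlignedBox Enclosing()
    {
        var corners = Corners();
        return new AxisAlignedBox(
            corners.Min(c => c.X), corners.Min(c => c.Y),
            corners.Max(c => c.X), corners.Max(c => c.Y));
    }
}

public static class Program
{
    public static void Main()
    {
        // An 80x20 box centred at (100, 100), rotated by a quarter turn.
        var rotated = new OrientedBox(100, 100, 80, 20, MathF.PI / 2);

        foreach (var (x, y) in rotated.Corners())
        {
            Console.WriteLine($"Corner: ({x:F1}, {y:F1})");
        }

        Console.WriteLine($"Enclosing box: {rotated.Enclosing()}");
    }
}
```

A plain object detection result only carries the axis-aligned form, which is why the two result types are not interchangeable.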

I was getting sick of prompts, so I manually fixed the SKImage loading, switched to RunObjectDetection, and then removed the “cruft”.

    static void Main(string[] args)
    {
        string modelPath = "yolov8s.onnx"; // Replace with your actual model path
        string imagePath = "sports.jpg"; // Replace with your actual image path

        ...

        try
        {
            var yoloOptions = new YoloOptions
            {
                ModelType = ModelType.ObjectDetection,
                OnnxModel = modelPath,
                Cuda = false
            };

            using var yolo = new Yolo(yoloOptions);

            using var skImage = SKImage.FromEncodedData(imagePath);

            var results = yolo.RunObjectDetection(skImage);

            foreach (var result in results)
            {
                Console.WriteLine($"Detected: {result.Label} - Confidence: {result.Confidence:F2}");
                Console.WriteLine($"Bounding Box: {result.BoundingBox}");
            }
        }
        catch (Exception ex)
        {
            Console.WriteLine($"Error: {ex.Message}");
        }

        Console.WriteLine("Press Enter to exit the application");
        Console.ReadLine();
    }

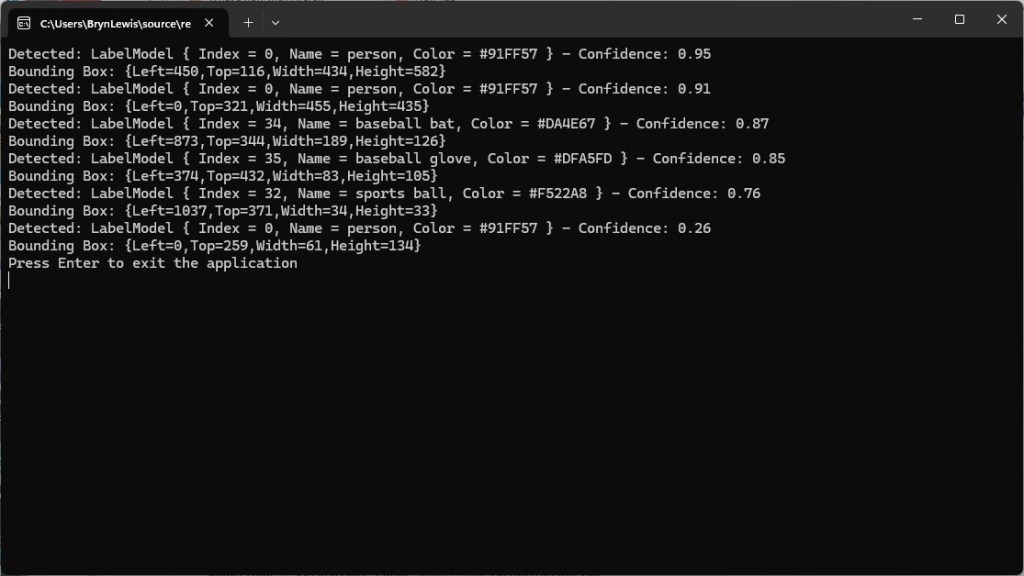

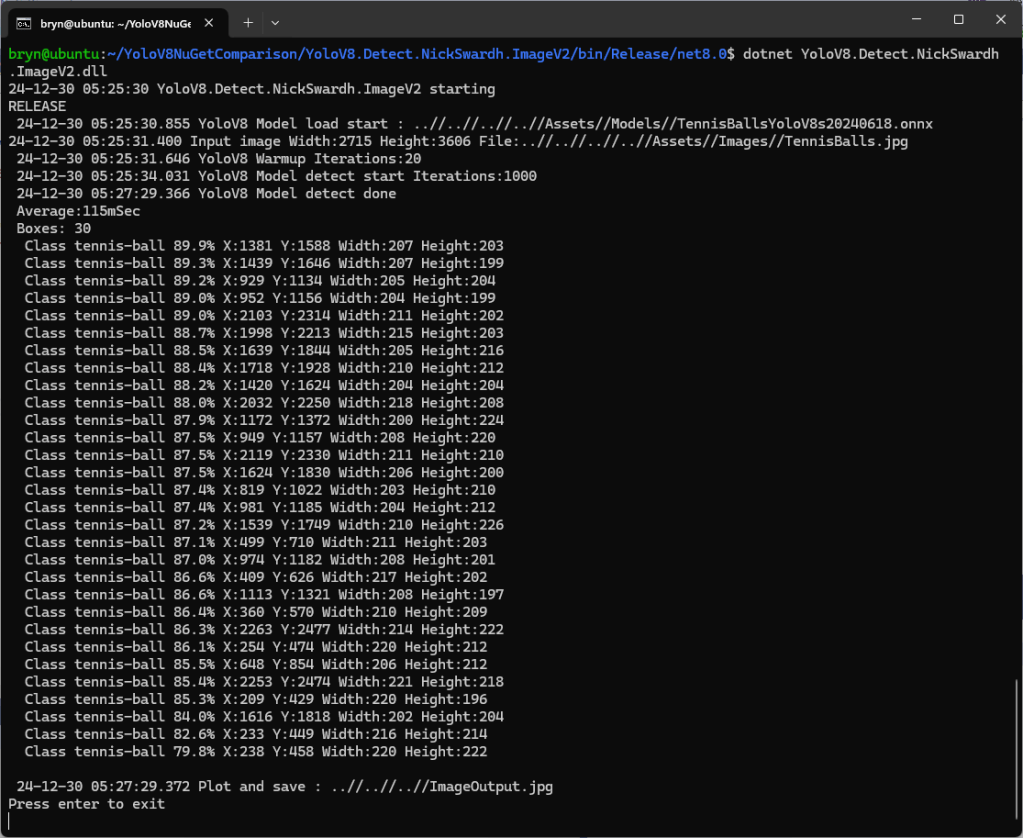

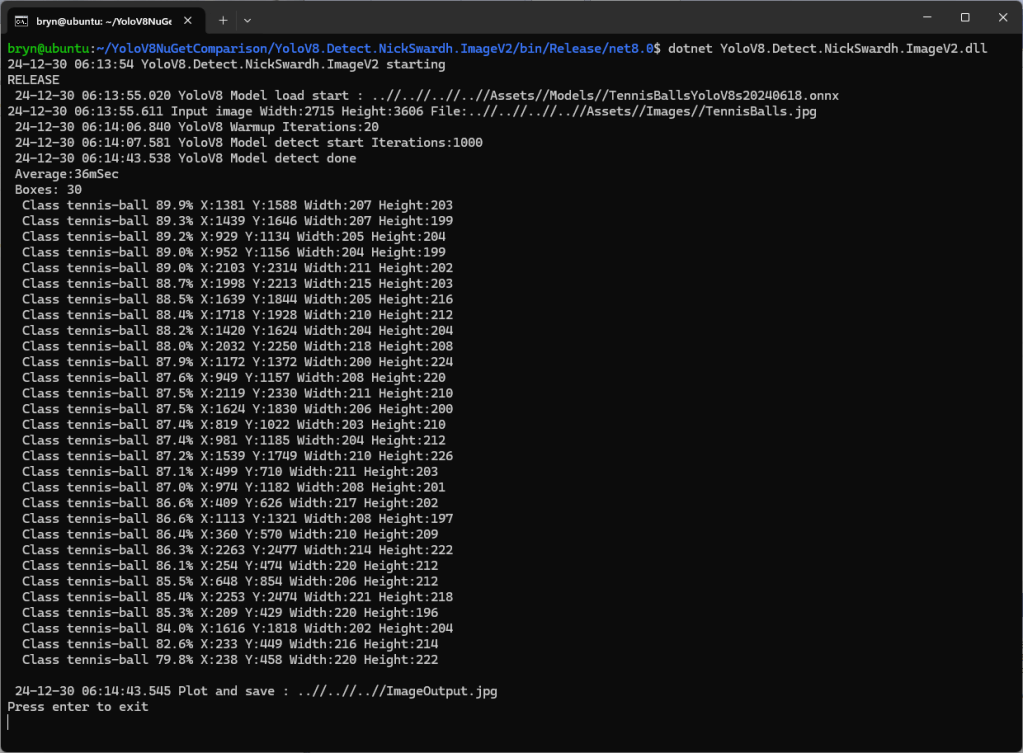

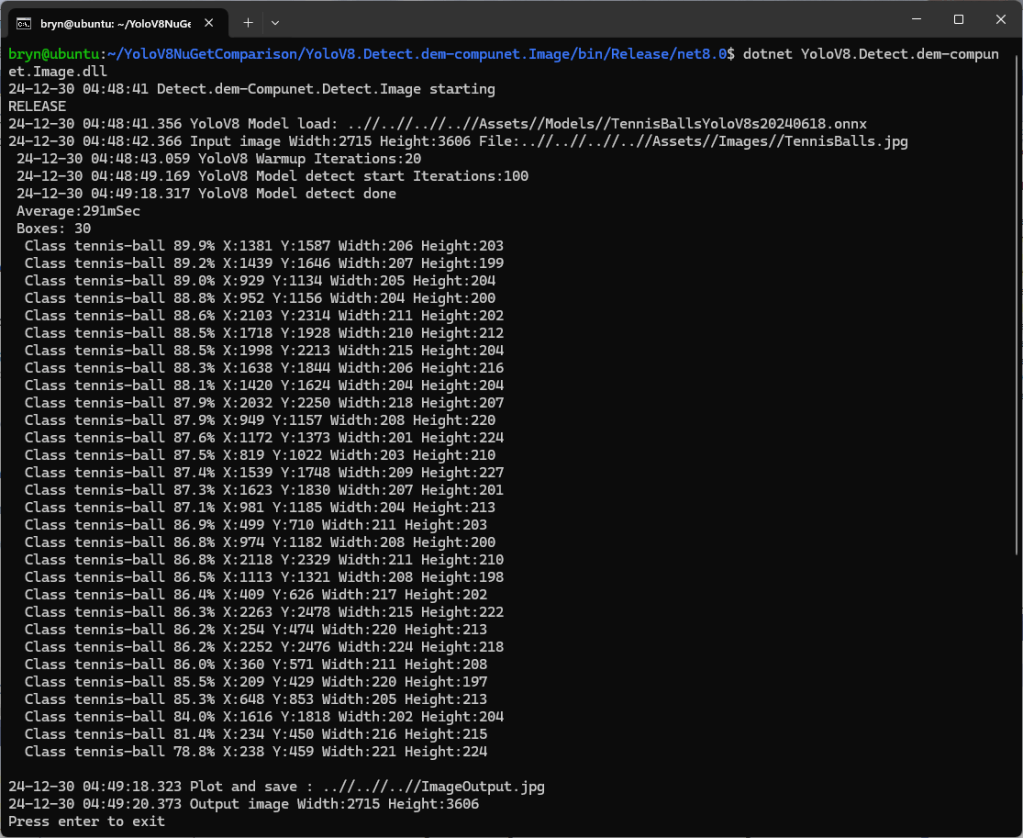

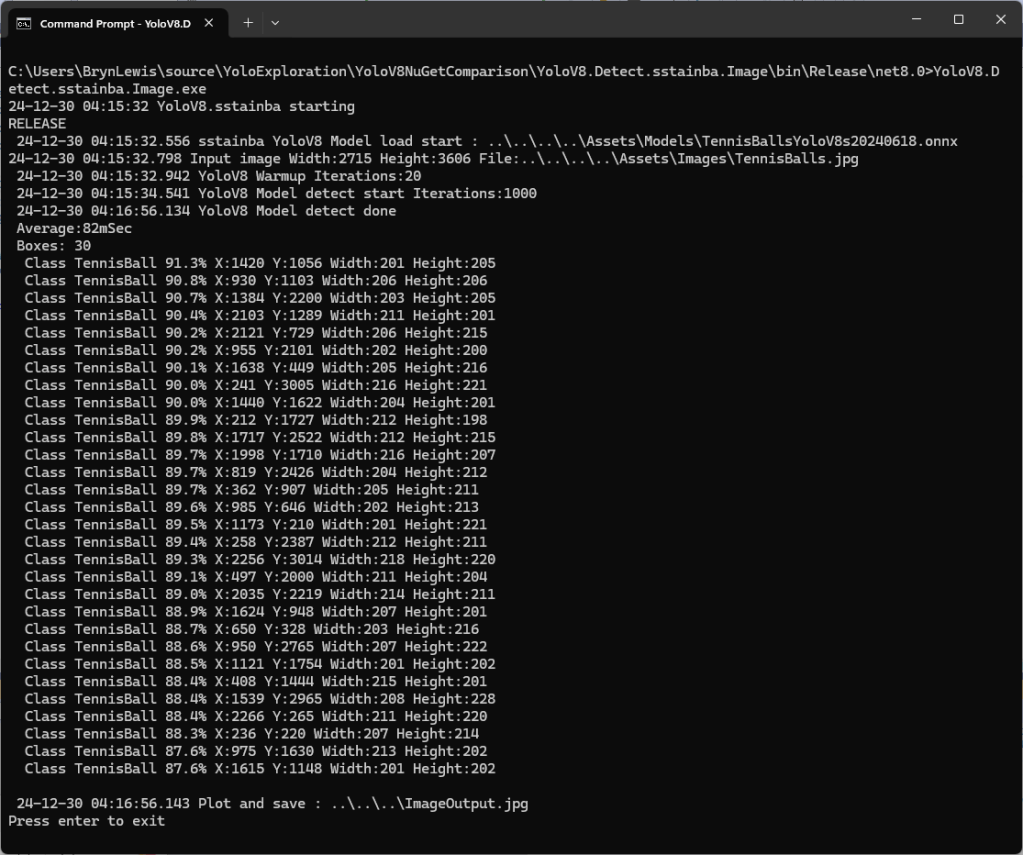

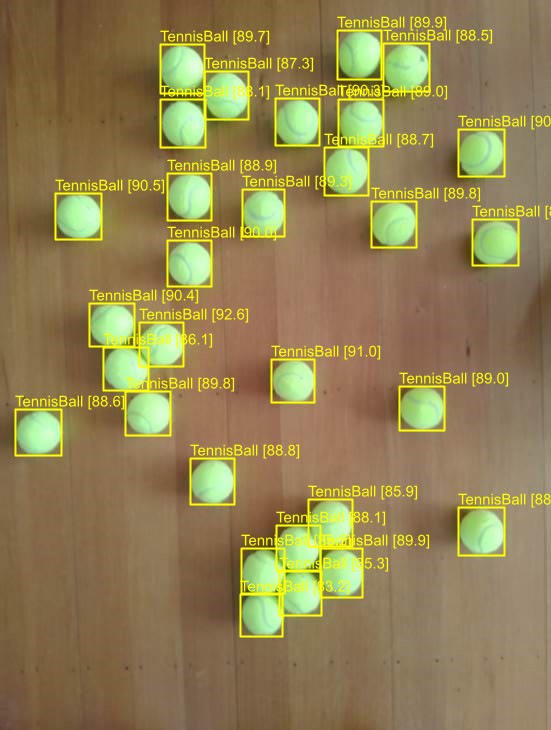

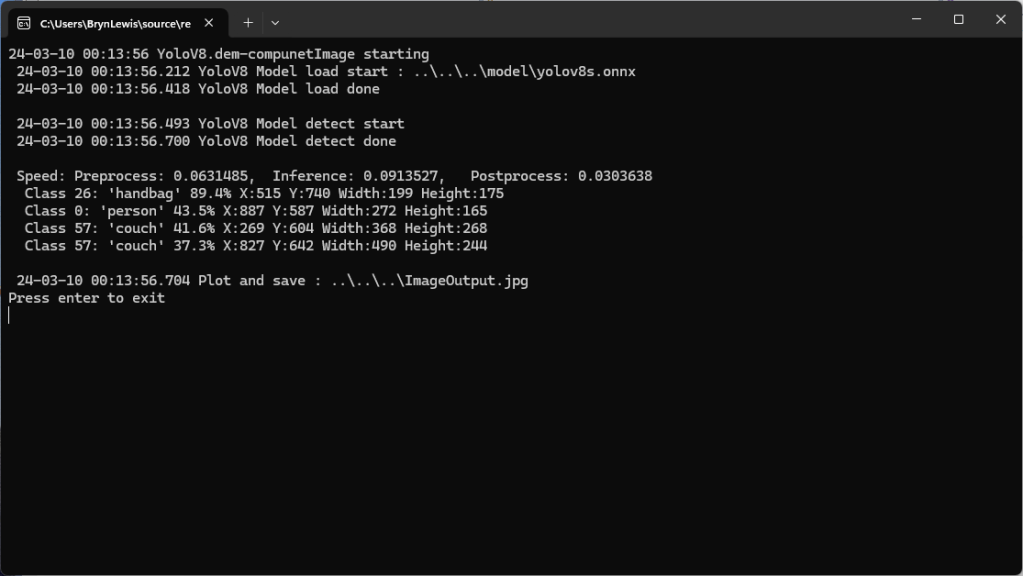

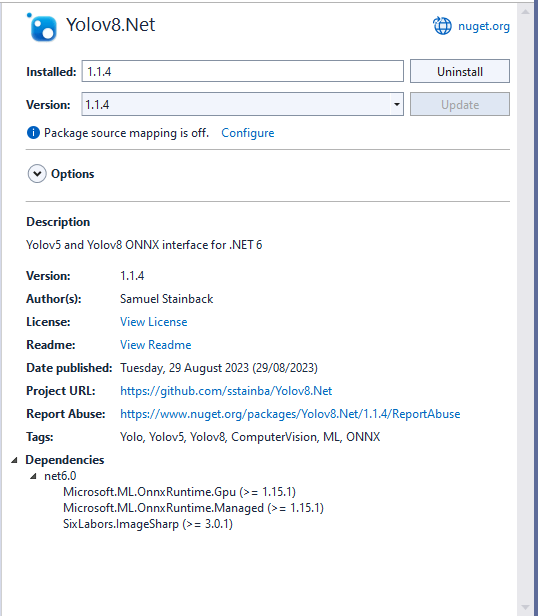

I tested the implementation with the sample “sports” image from the YoloSharp GitHub repository.

The console application output looked reasonable.
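
The ConfidenceThreshold and IoUThreshold values that Copilot tried to set in the earlier attempt did not compile against YoloOptions, but they are standard detector post-processing ideas. Below is a library-independent sketch of roughly what the two thresholds control. The Box, Detection, and PostProcessing names are hypothetical and not part of YoloDotNet, and the sketch assumes .NET 6 or later for record structs.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical types for illustration only; these are not YoloDotNet classes.
public readonly record struct Box(float Left, float Top, float Right, float Bottom)
{
    // Empty or inverted boxes have zero area.
    public float Area => Math.Max(0f, Right - Left) * Math.Max(0f, Bottom - Top);
}

public readonly record struct Detection(string Label, float Confidence, Box Bounds);

public static class PostProcessing
{
    // Intersection over Union: overlap area divided by combined area
    // (0 = no overlap, 1 = identical boxes).
    public static float IoU(Box a, Box b)
    {
        float intersection = new Box(
            Math.Max(a.Left, b.Left), Math.Max(a.Top, b.Top),
            Math.Min(a.Right, b.Right), Math.Min(a.Bottom, b.Bottom)).Area;

        float union = a.Area + b.Area - intersection;
        return union <= 0f ? 0f : intersection / union;
    }

    // Greedy non-maximum suppression: drop weak detections, then keep the
    // strongest box and discard same-label boxes that overlap a kept box
    // by more than iouThreshold.
    public static List<Detection> Filter(
        IEnumerable<Detection> detections, float confidenceThreshold, float iouThreshold)
    {
        var kept = new List<Detection>();

        foreach (var candidate in detections
            .Where(d => d.Confidence >= confidenceThreshold)
            .OrderByDescending(d => d.Confidence))
        {
            bool duplicate = kept.Any(k =>
                k.Label == candidate.Label && IoU(k.Bounds, candidate.Bounds) > iouThreshold);

            if (!duplicate)
            {
                kept.Add(candidate);
            }
        }

        return kept;
    }
}

public static class Program
{
    public static void Main()
    {
        var detections = new[]
        {
            new Detection("person", 0.90f, new Box(10, 10, 110, 210)),
            new Detection("person", 0.60f, new Box(15, 12, 112, 205)), // near-duplicate of the first
            new Detection("ball", 0.30f, new Box(200, 180, 230, 210)), // below the confidence threshold
        };

        foreach (var d in PostProcessing.Filter(detections, 0.5f, 0.4f))
        {
            Console.WriteLine($"{d.Label} {d.Confidence:F2}");
        }
    }
}
```

With these sample values the second person box overlaps the first almost completely and the ball falls below the confidence threshold, so only the 0.90 person detection should survive.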

Classification

My initial Copilot prompt

I don’t understand why a reference to OpenCV was included.

    static void Main(string[] args)
    {
        string modelPath = "path/to/yolo_model.onnx"; // Update with actual model path
        string imagePath = "path/to/image.jpg"; // Update with actual image path

        // Load YOLO model
        var yolo = new YoloModel(modelPath);

        // Load image
        using Bitmap image = new Bitmap(imagePath);

        // Run classification
        var results = yolo.Predict(image);

        // Display results
        foreach (var result in results)
        {
            Console.WriteLine($"Detected: {result.Label} with confidence {result.Confidence:P}");
        }
    }

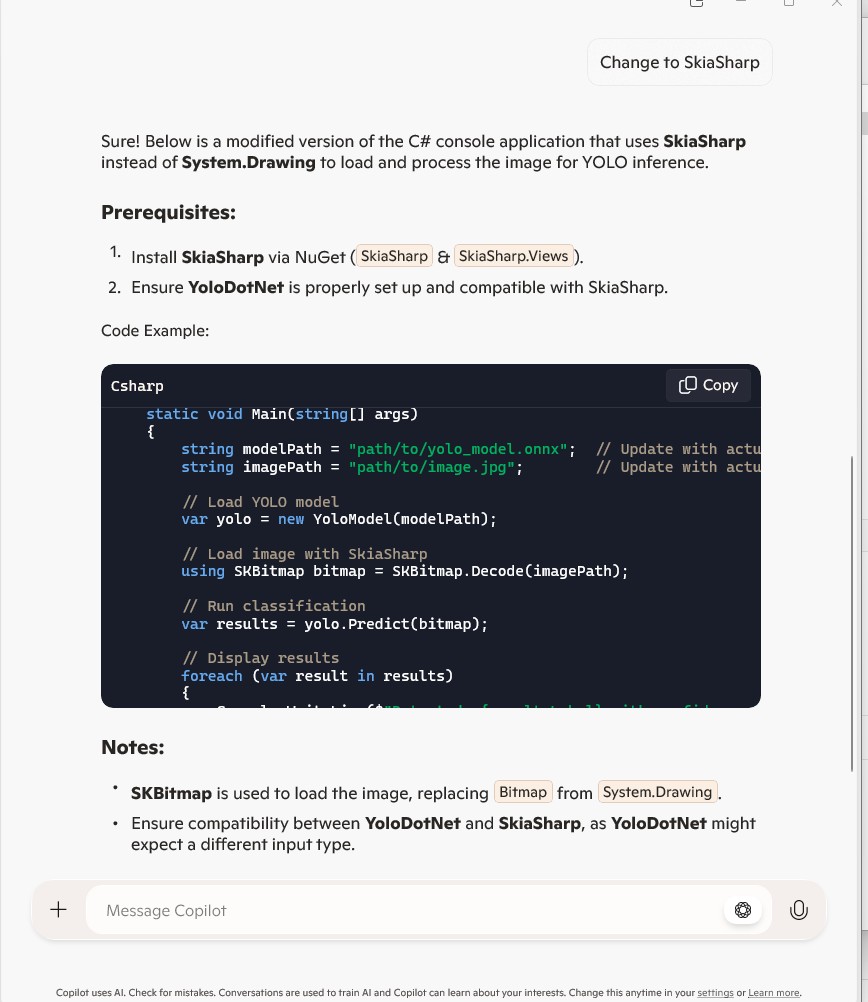

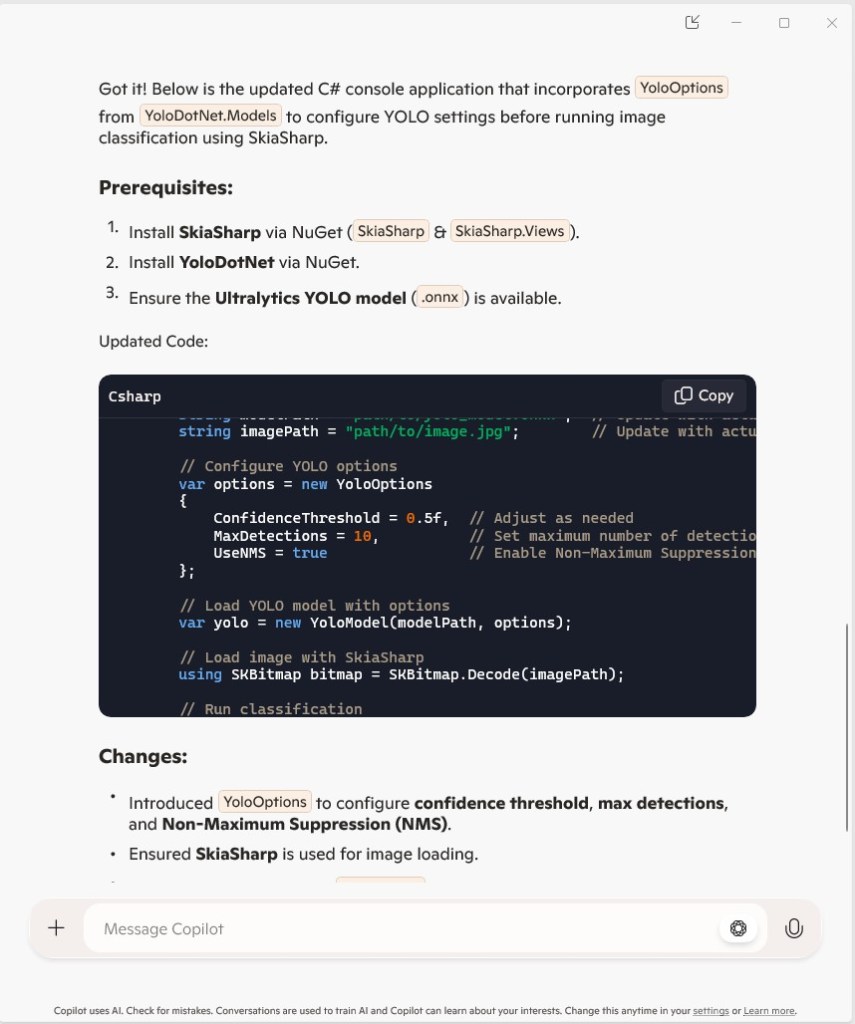

The code didn’t compile, so I prompted Copilot to modify it to use SkiaSharp, which is what YoloDotNet uses.

This was a bit strange, so I tried again.

I was getting sick of prompts, so I manually fixed the SKImage loading, switched to RunClassification, and then removed the “cruft”.

    static void Main(string[] args)
    {
        string modelPath = "yolov8s-cls.onnx"; // Update with actual model path
        string imagePath = "pizza.jpg"; // Update with actual image path

        var yolo = new Yolo(new YoloOptions()
        {
            ModelType = ModelType.Classification,
            OnnxModel = modelPath,
            Cuda = false
        });

        // Load image
        using SKImage image = SKImage.FromEncodedData(imagePath);

        // Run classification
        var results = yolo.RunClassification(image);

        // Display results
        foreach (var result in results)
        {
            Console.WriteLine($"Detected: {result.Label} with confidence {result.Confidence:P}");
        }

        Console.WriteLine("Press Enter to exit the application");
        Console.ReadLine();
    }

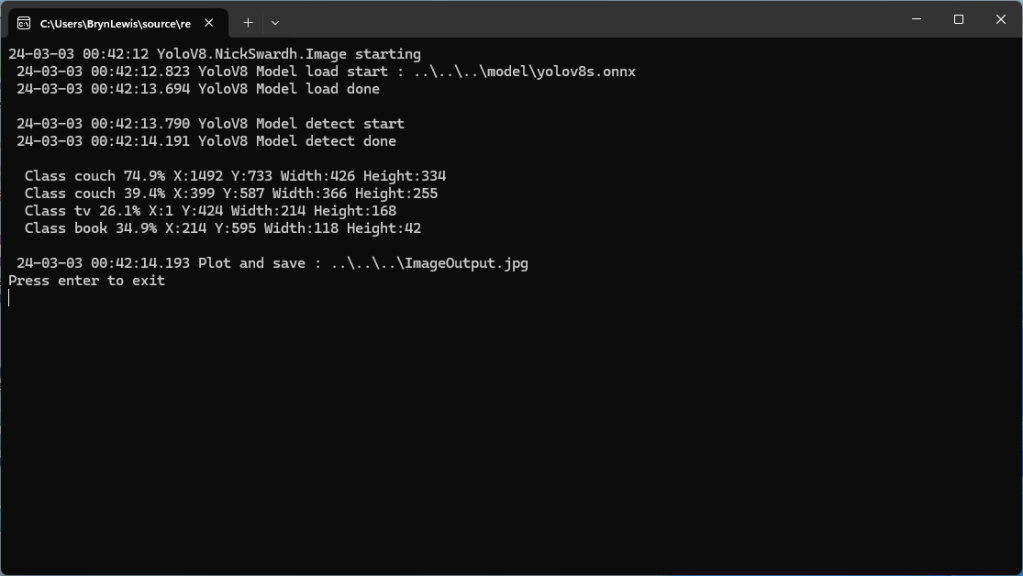

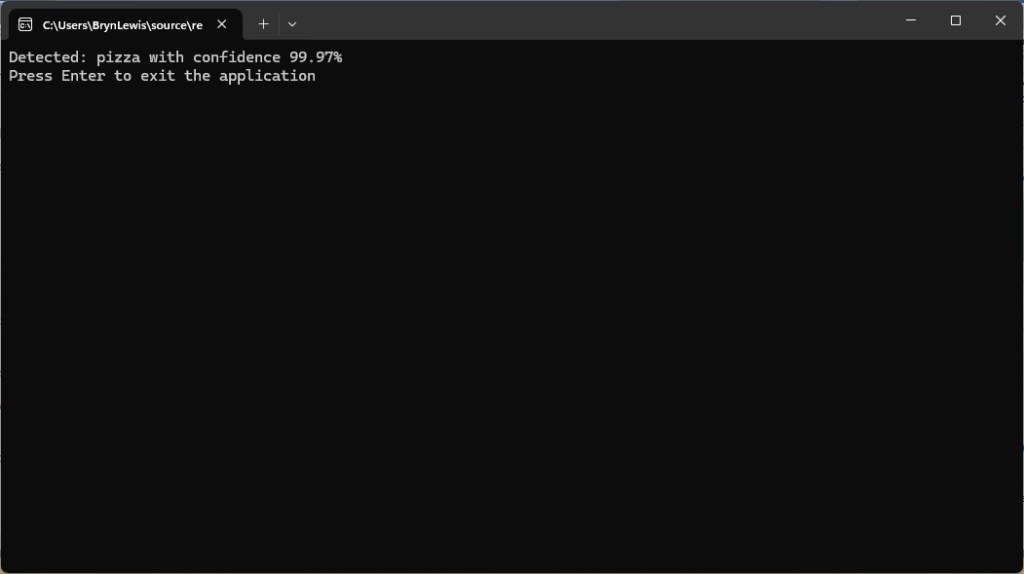

At this point the code compiled and ran.

Pretty confident this is a picture of a pizza.

Pose

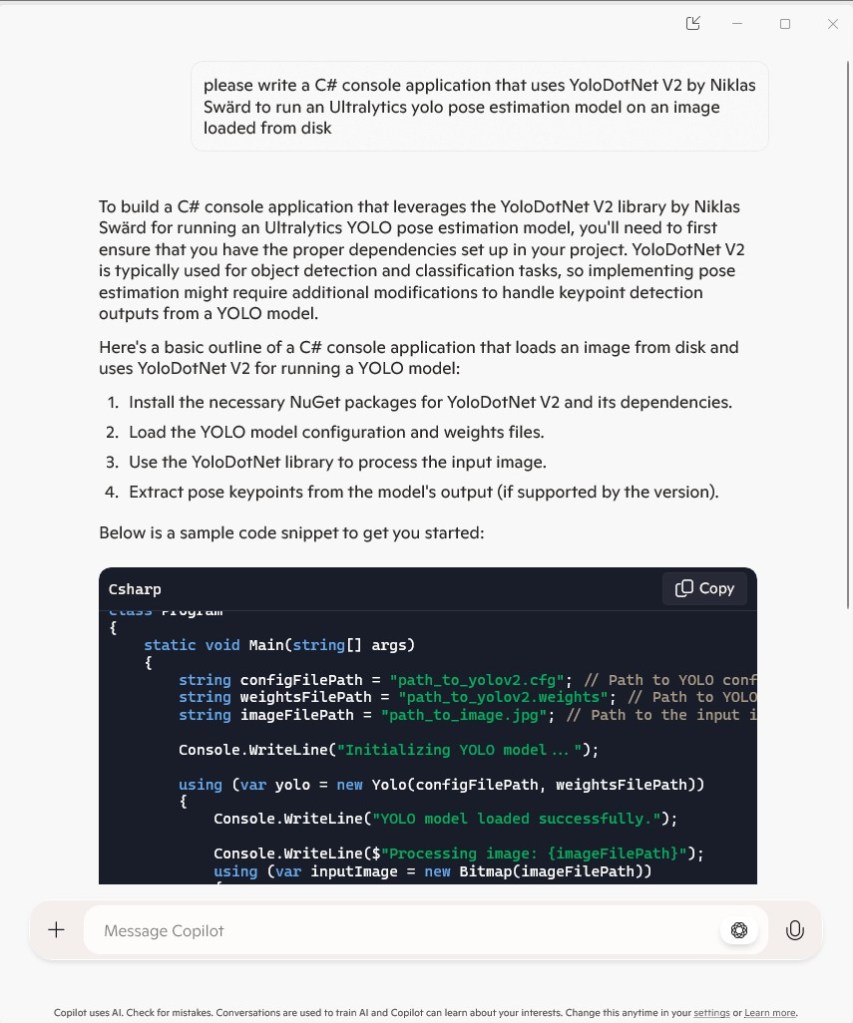

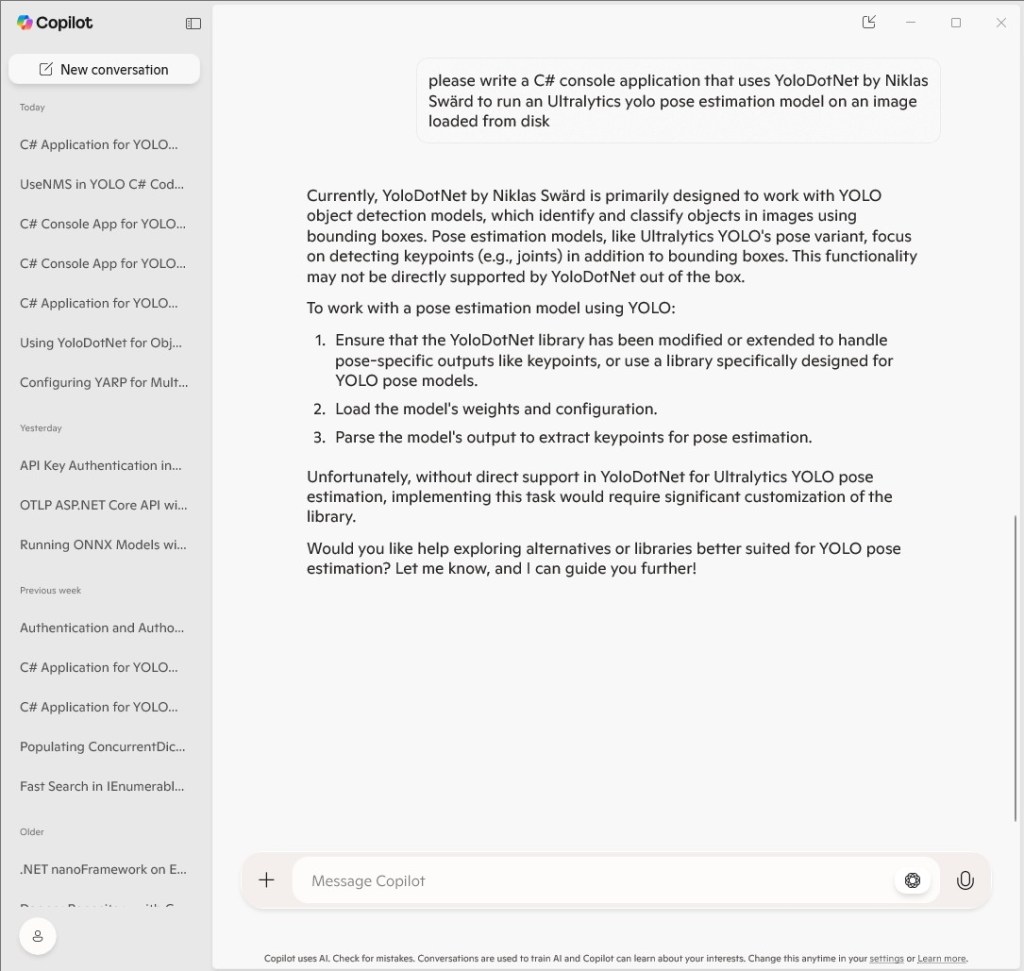

My Copilot prompt

Replace path/to/yolo_model.onnx and path/to/image.jpg with the actual paths to your model file and input image.

This example assumes that YoloDotNet V2 supports the loaded YOLO model. Verify compatibility with the YOLO ObjectDetection variant.

Copilot had “assumed” I meant Ultralytics Yolo V2 and the code didn’t compile. So, I tried again without V2.

At this point I gave up.

Summary

Using Copilot prompts to generate code that uses Ultralytics YoloV8 and YoloDotNet didn’t go well. In addition, Visual Studio IntelliSense often struggled to offer useful options.

Overall, the experience wasn’t great.