Different Approaches…

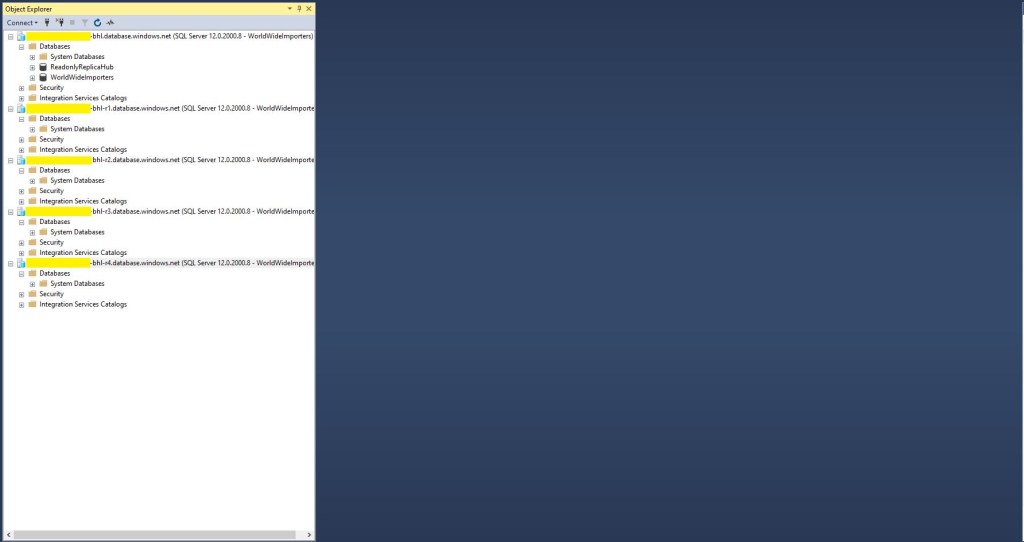

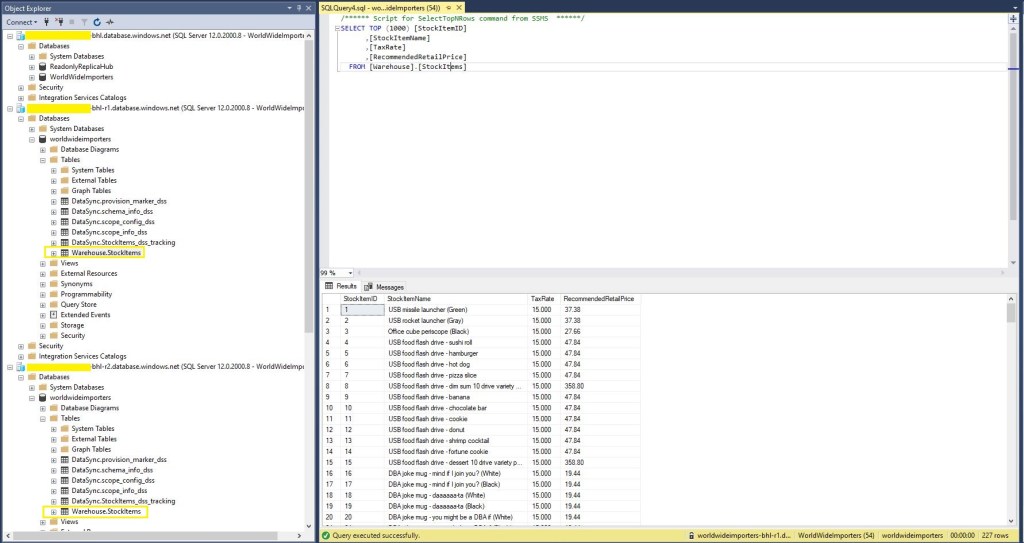

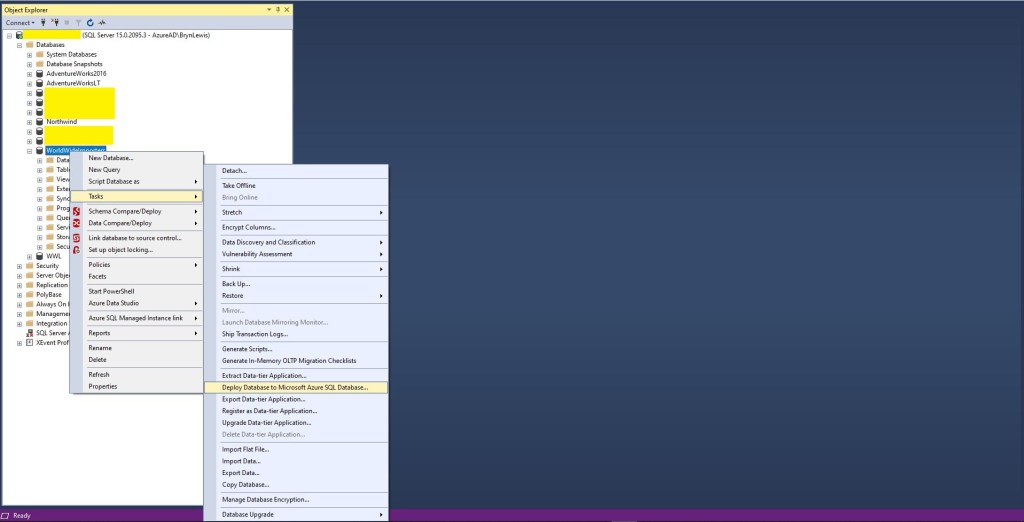

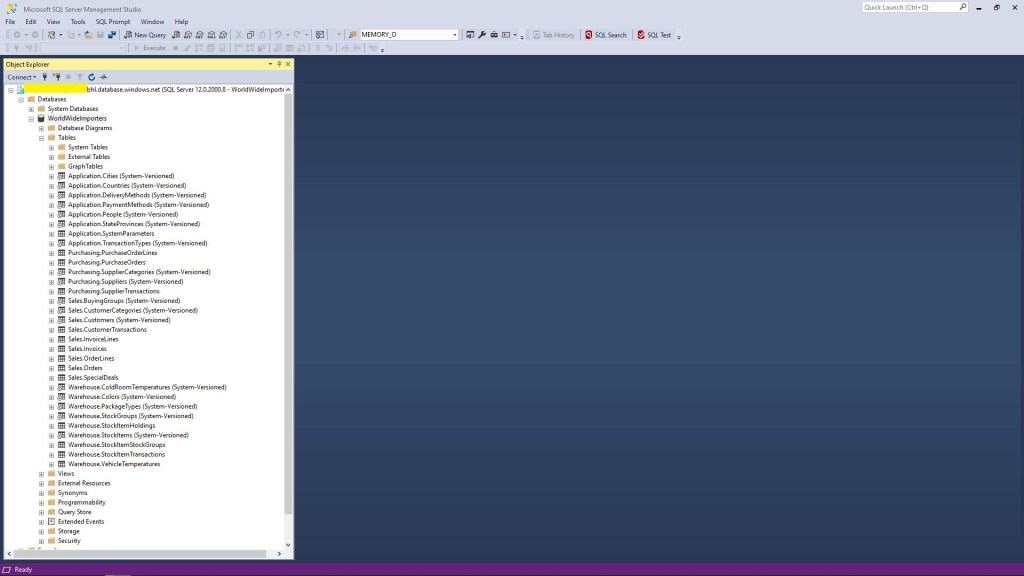

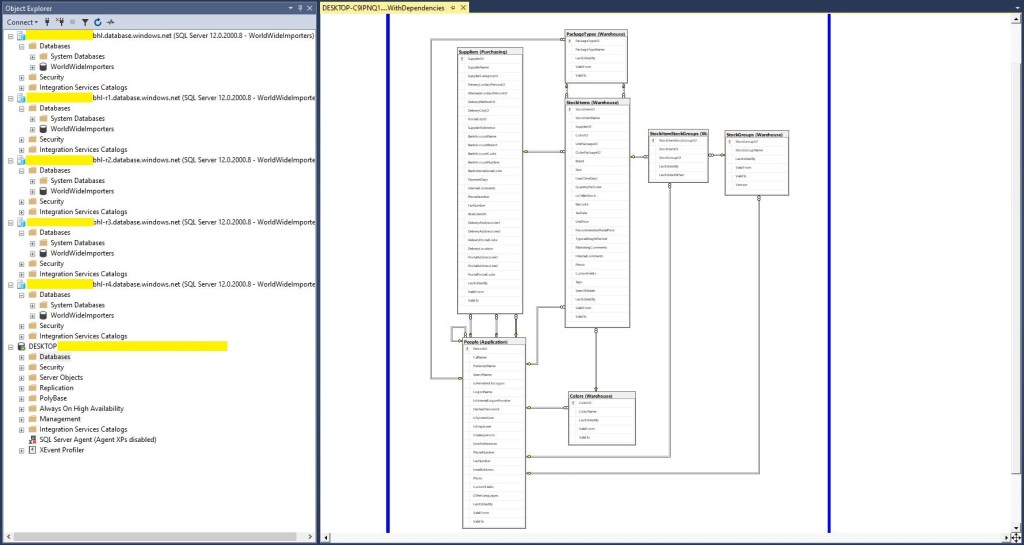

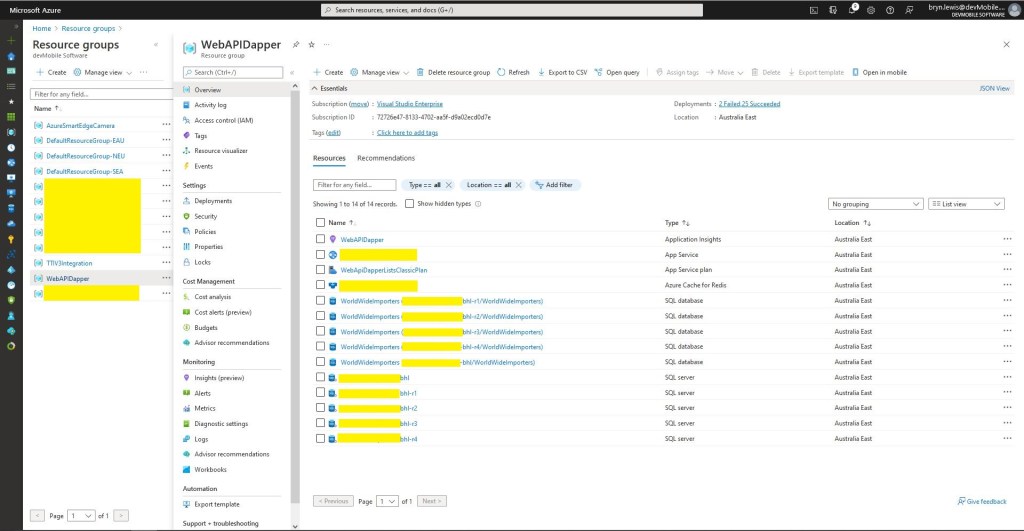

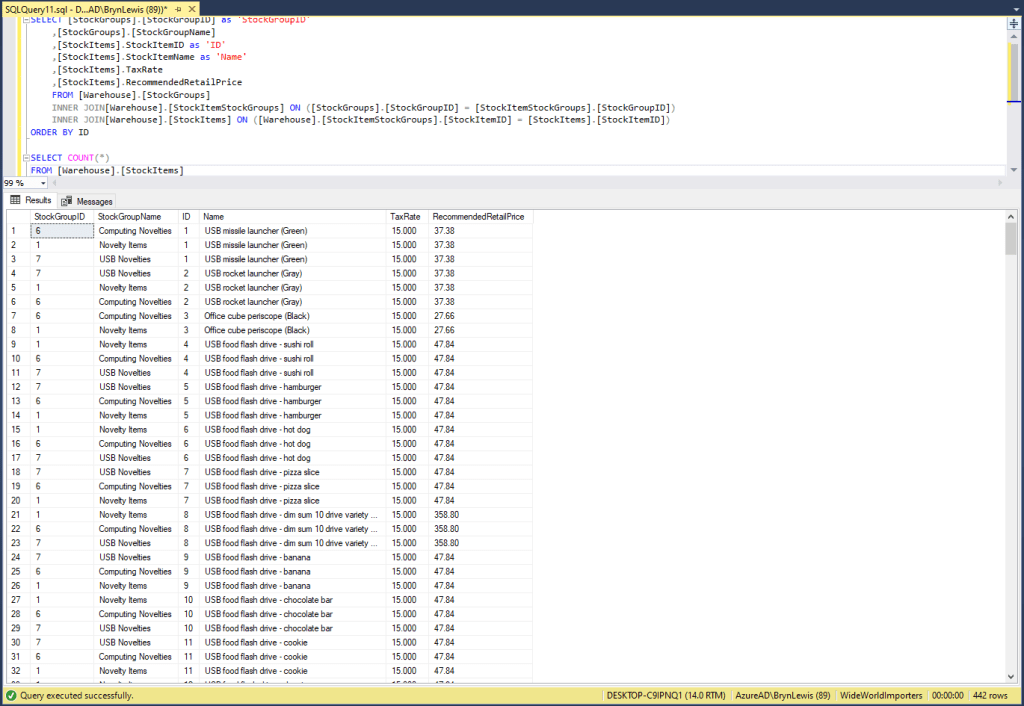

While working on customers' ASP.NET Core web API (WebAPI) + Microsoft SQL Server (MSSQL) applications I have encountered several different ways of passing parameters to stored procedures and embedded Structured Query Language (SQL) statements. I have created five examples which query the [Warehouse].[StockItems] table in the Wide World Importers database to illustrate the different approaches.

A customer with a large application which had a lot of ADO.NET code was comfortable with Dapper DynamicParameters. Hundreds of stored procedures with input (and some output) parameters were used to manage access to data. The main advantage of this approach was “familiarity”, and the use of DynamicParameters made the mapping of C# variables to stored procedure parameters (with different naming conventions) obvious.

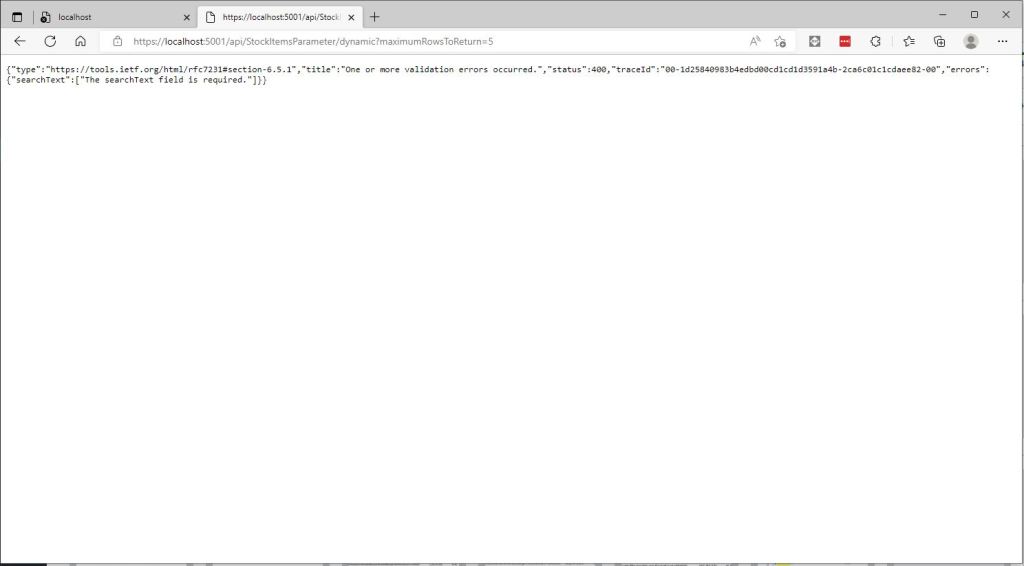

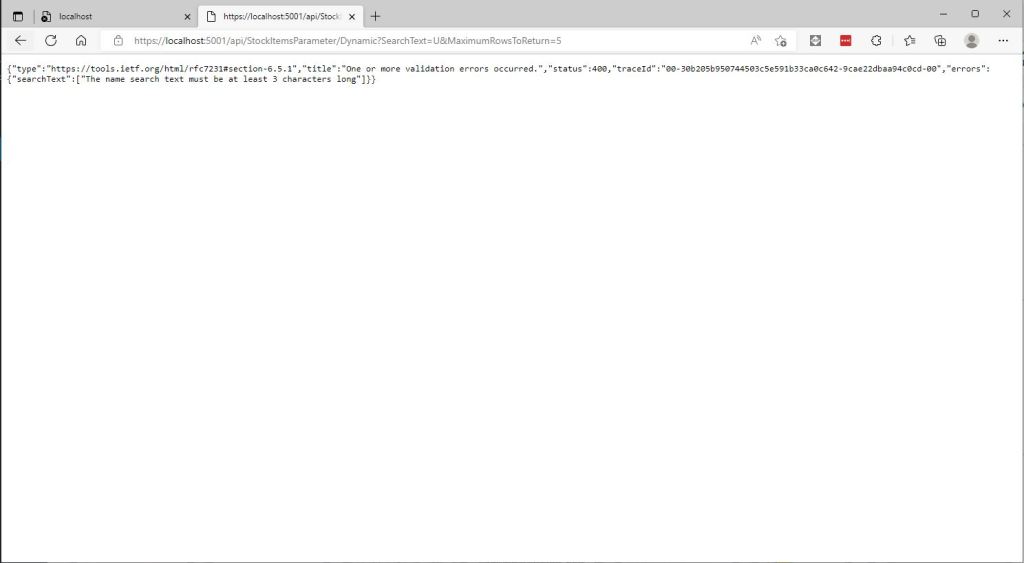

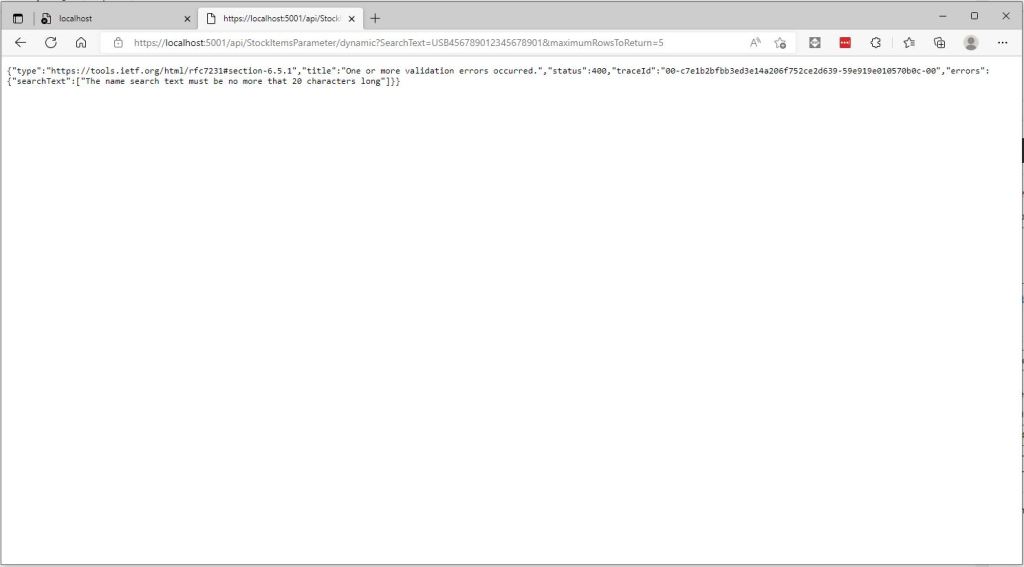

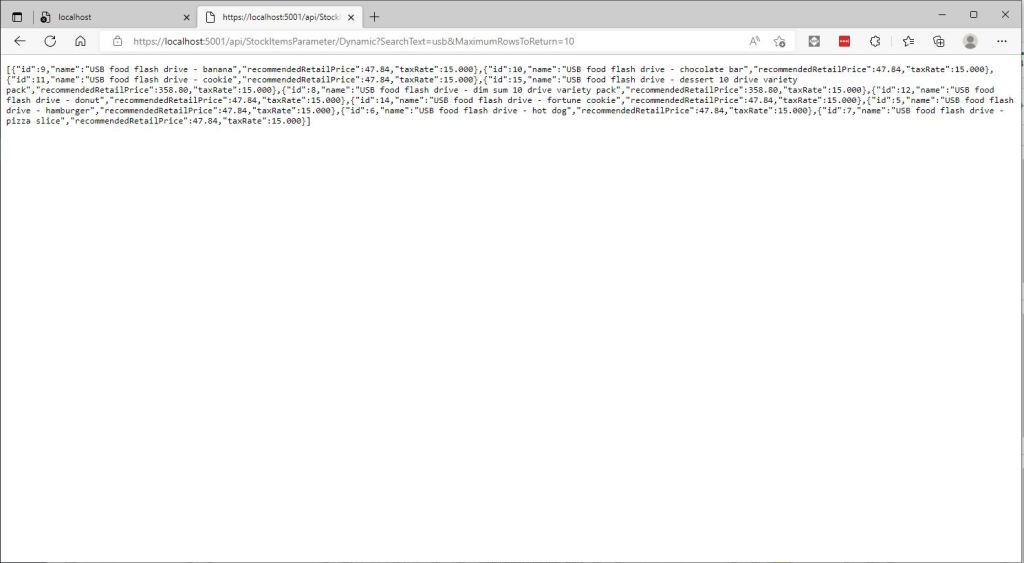

    [HttpGet("Dynamic")]
    public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetDynamic(
        [Required][MinLength(3, ErrorMessage = "The name search text must be at least {1} characters long"), MaxLength(20, ErrorMessage = "The name search text must be no more than {1} characters long")] string searchText,
        [Required][Range(1, int.MaxValue, ErrorMessage = "MaximumRowsToReturn must be greater than or equal to {1}")] int maximumRowsToReturn)
    {
        IEnumerable<Model.StockItemListDtoV1> response = null;

        using (SqlConnection db = new SqlConnection(this.connectionString))
        {
            // Each C# variable is mapped explicitly to a stored procedure parameter name.
            DynamicParameters parameters = new DynamicParameters();

            parameters.Add("MaximumRowsToReturn", maximumRowsToReturn);
            parameters.Add("SearchText", searchText);

            response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: "[Warehouse].[StockItemsNameSearchV1]", param: parameters, commandType: CommandType.StoredProcedure);
        }

        return this.Ok(response);
    }
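The example above only passes input parameters. For the output parameters mentioned earlier, a minimal sketch of how DynamicParameters could be used is shown below. The stored procedure name `[Warehouse].[StockItemsNameCountV1]` and its `MatchCount` output parameter are hypothetical (they are not part of the sample database or the original code), and plain Dapper `ExecuteAsync` is used rather than a retry wrapper.

```csharp
using System.Data;
using System.Threading.Tasks;
using Dapper;

public static class OutputParameterSketch
{
    // Builds the parameter set: one input parameter and one output parameter.
    public static DynamicParameters BuildParameters(string searchText)
    {
        DynamicParameters parameters = new DynamicParameters();

        parameters.Add("SearchText", searchText);

        // "MatchCount" is an assumed output parameter of a hypothetical stored procedure.
        parameters.Add("MatchCount", dbType: DbType.Int32, direction: ParameterDirection.Output);

        return parameters;
    }

    // Executes the (hypothetical) stored procedure and reads the output value back.
    public static async Task<int> CountMatchesAsync(IDbConnection db, string searchText)
    {
        DynamicParameters parameters = BuildParameters(searchText);

        await db.ExecuteAsync("[Warehouse].[StockItemsNameCountV1]", parameters, commandType: CommandType.StoredProcedure);

        // Output values are available from the same DynamicParameters instance after execution.
        return parameters.Get<int>("MatchCount");
    }
}
```

Because the output value is read back by name from the DynamicParameters instance, the mapping between the C# code and the stored procedure parameter stays explicit.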

The developers at another company used anonymous types everywhere. They also had similar C# and stored procedure parameter naming conventions so minimal mapping was required (in the example code only stockItemsMaximum to maximumRowsToReturn). They found mapping stored procedure output parameters problematic. For longer parameter lists they struggled to format the code in a way which was readable.

    [HttpGet("Anonymous")]
    public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetAnonymous(
        [Required][MinLength(3, ErrorMessage = "The name search text must be at least {1} characters long"), MaxLength(20, ErrorMessage = "The name search text must be no more than {1} characters long")] string searchText,
        [Required][Range(1, 100, ErrorMessage = "The maximum number of stock items to return must be greater than or equal to {1} and less than or equal to {2}")] int stockItemsMaximum)
    {
        IEnumerable<Model.StockItemListDtoV1> response = null;

        using (SqlConnection db = new SqlConnection(this.connectionString))
        {
            // The anonymous type's property names must match the stored procedure parameter names.
            response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: "[Warehouse].[StockItemsNameSearchV1]", param: new { searchText, maximumRowsToReturn = stockItemsMaximum }, commandType: CommandType.StoredProcedure);
        }

        return this.Ok(response);
    }

At another customer the developers used Data Transfer Objects (DTOs)/Plain Old CLR Objects (POCOs) and they had some control over the naming of the stored procedure/embedded SQL parameters.

    public class StockItemNameSearchDtoV1
    {
        [Required]
        [MinLength(3, ErrorMessage = "The name search text must be at least {1} characters long"), MaxLength(20, ErrorMessage = "The name search text must be no more than {1} characters long")]
        public string SearchText { get; set; }

        [Required]
        [Range(1, 100, ErrorMessage = "The maximum number of rows to return must be greater than or equal to {1} and less than or equal to {2}")]
        public int MaximumRowsToReturn { get; set; }
    }

    [HttpGet("AutomagicDefault")]
    public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetDefault([FromQuery] Model.StockItemNameSearchDtoV1 request)
    {
        IEnumerable<Model.StockItemListDtoV1> response = null;

        using (SqlConnection db = new SqlConnection(this.connectionString))
        {
            // The DTO property names are used as the stored procedure parameter names.
            response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: "[Warehouse].[StockItemsNameSearchV1]", param: request, commandType: CommandType.StoredProcedure);
        }

        return this.Ok(response);
    }

At another customer the developers used Data Transfer Objects (DTOs)/Plain Old CLR Objects (POCOs) to access a database which had several hundred stored procedures. They had no control over the stored procedure parameter names so they mapped query string parameters to the properties of their POCOs.

This took some experimentation as System.Text.Json/Newtonsoft.Json decorations didn’t work (a query string is not JavaScript Object Notation (JSON)). They decorated the properties of their DTOs with the [FromQuery] attribute.

    public class StockItemNameSearchDtoV2
    {
        [Required]
        [FromQuery(Name = "SearchText")]
        [MinLength(3, ErrorMessage = "The name search text must be at least {1} characters long"), MaxLength(20, ErrorMessage = "The name search text must be no more than {1} characters long")]
        public string SearchText { get; set; }

        // The query string uses StockItemsMaximum; the stored procedure expects MaximumRowsToReturn.
        [Required]
        [FromQuery(Name = "StockItemsMaximum")]
        [Range(1, 100, ErrorMessage = "The maximum number of stock items to return must be greater than or equal to {1} and less than or equal to {2}")]
        public int MaximumRowsToReturn { get; set; }
    }

    [HttpGet("AutomagicMapped")]
    public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetMapperDecorated([FromQuery] Model.StockItemNameSearchDtoV2 request)
    {
        IEnumerable<Model.StockItemListDtoV1> response = null;

        using (SqlConnection db = new SqlConnection(this.connectionString))
        {
            response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: "[Warehouse].[StockItemsNameSearchV1]", param: request, commandType: CommandType.StoredProcedure);
        }

        return this.Ok(response);
    }

I don’t think that [FromQuery] decorations on POCOs are a good idea. If the classes are only used for one method I would consider moving them into the controller file.

//

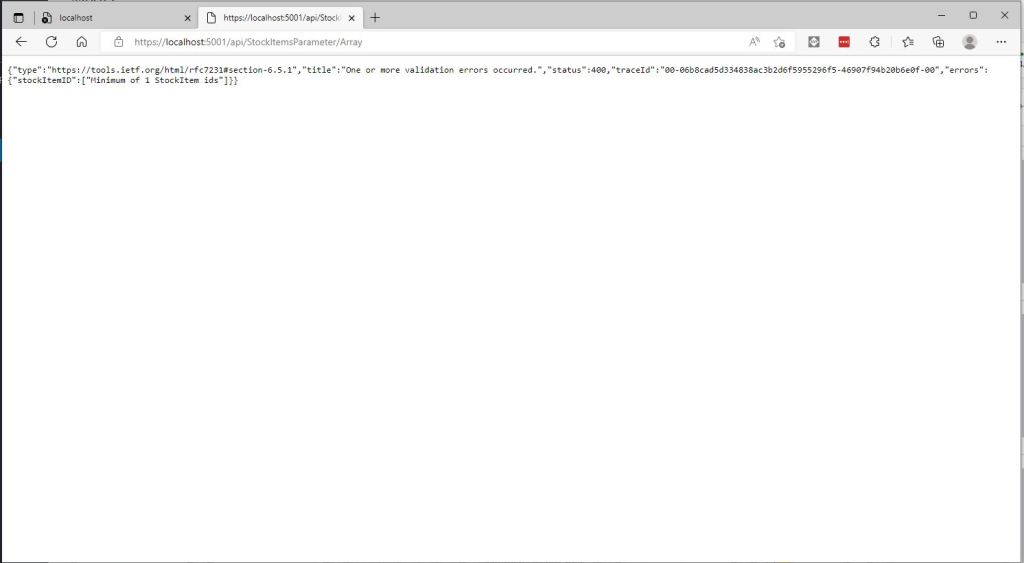

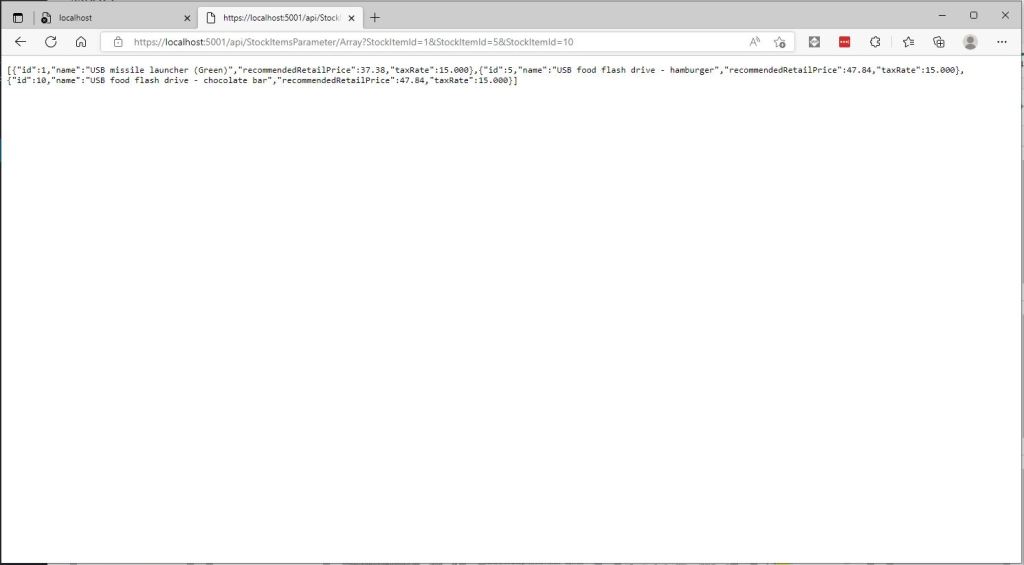

// https://localhost:5001/api/StockItemsParameter/Array?StockItemId=1&StockItemId=5&StockItemId=10

//

[HttpGet("Array")]

public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetArray(

[FromQuery(Name = "stockItemID")][Required(), MinLength(1, ErrorMessage = "Minimum of {1} StockItem id(s)"), MaxLength(100, ErrorMessage = "Maximum {1} StockItem ids")] int[] stockItemIDs)

{

IEnumerable<Model.StockItemListDtoV1> response = null;

using (SqlConnection db = new SqlConnection(this.connectionString))

{

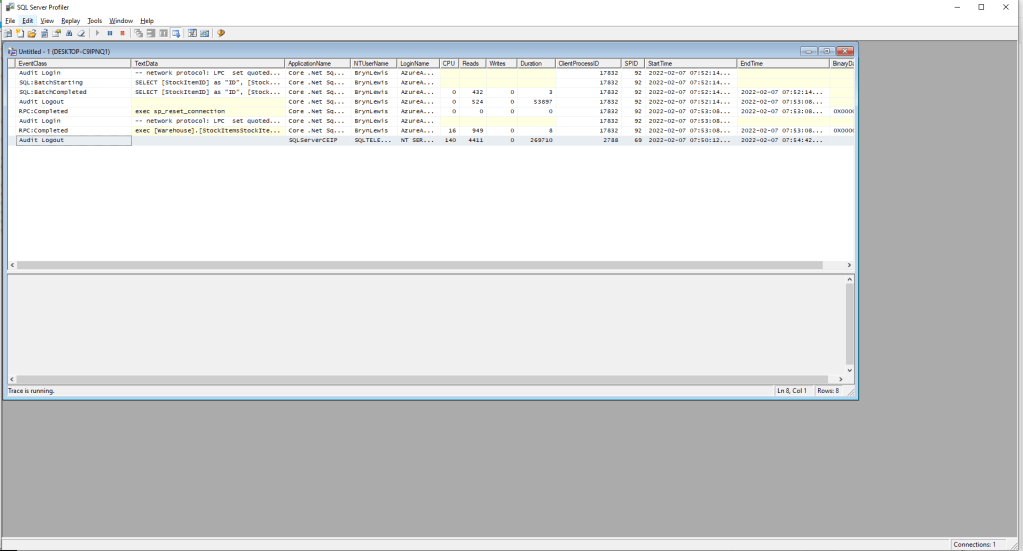

response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems] WHERE StockItemID IN @StockItemIds ", new { StockItemIDs = stockItemIDs }, commandType: CommandType.Text);

}

return this.Ok(response);

}

A customer wanted users to be able to search for items selected in a multiple selection list so a Dapper WHERE IN value array was used.

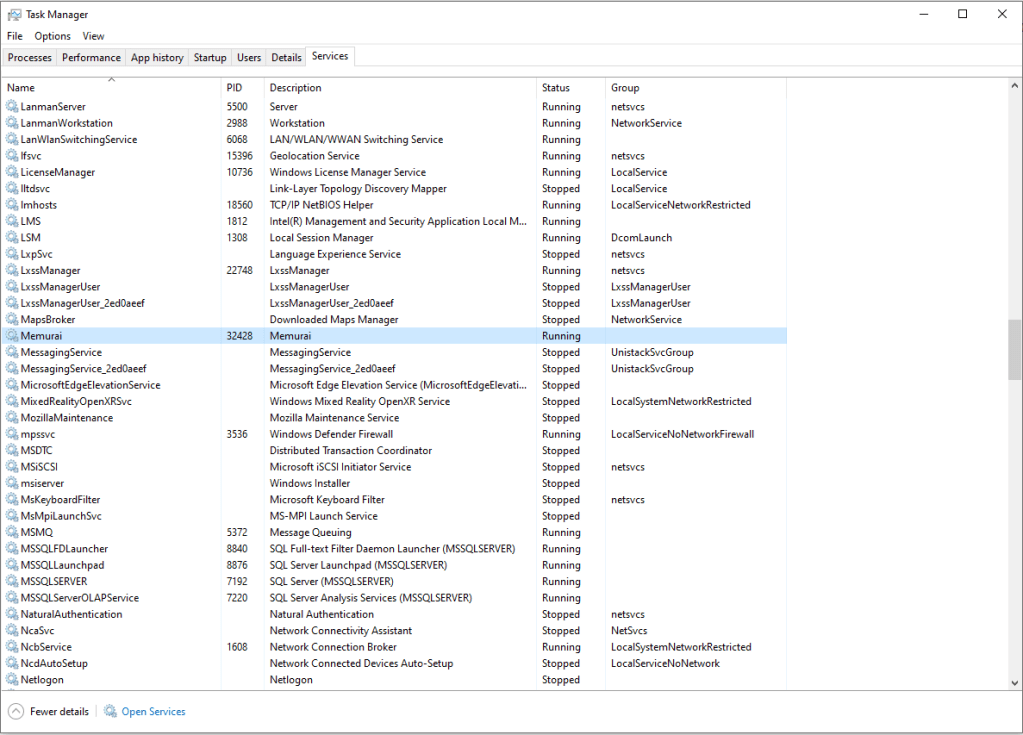

To explore how this worked I downloaded the Dapper source code and referenced the project in my solution.

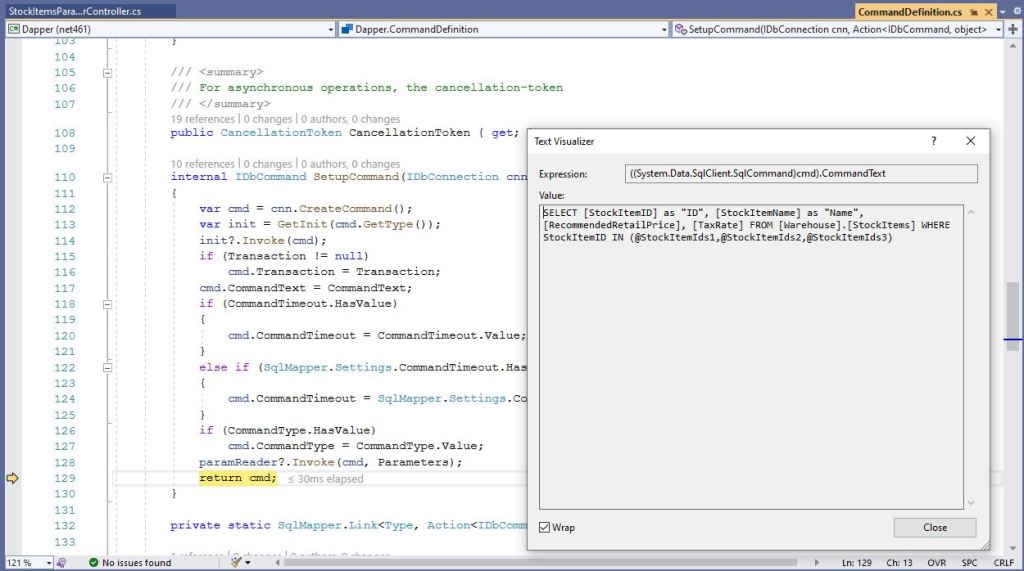

After single stepping through the Dapper source code I found where the array of StockItem IDs was getting mapped into a “generated” parameterised SQL statement.
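To make the idea of a “generated” parameterised statement concrete, here is a simplified sketch of this kind of list expansion. It is not Dapper's implementation; the class name `InListExpansionSketch`, the method `Expand` and the numbered parameter naming are assumptions made for illustration only.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

// Illustration only: a simplified stand-in for the list expansion a library such as Dapper performs.
public static class InListExpansionSketch
{
    // Rewrites "IN @name" as "IN (@name1,@name2,...)" and returns one parameter per value.
    public static (string Sql, IReadOnlyDictionary<string, object> Parameters) Expand(
        string sql, string name, IReadOnlyList<int> values)
    {
        if (values.Count == 0)
        {
            throw new ArgumentException("At least one value is required.", nameof(values));
        }

        // Generate numbered parameter names: @name1, @name2, ...
        List<string> names = Enumerable.Range(1, values.Count).Select(i => "@" + name + i).ToList();
        string replacement = "(" + string.Join(",", names) + ")";

        // Replace the placeholder (case-insensitively, whole name only) with the bracketed list.
        string pattern = "@" + Regex.Escape(name) + @"(?!\w)";
        string expanded = Regex.Replace(sql, pattern, _ => replacement, RegexOptions.IgnoreCase);

        // Each value is still passed as a parameter, never concatenated into the SQL text.
        Dictionary<string, object> parameters = new Dictionary<string, object>();
        for (int i = 0; i < values.Count; i++)
        {
            parameters[names[i]] = values[i];
        }

        return (expanded, parameters);
    }
}
```

With this sketch, calling `Expand` with `WHERE StockItemID IN @StockItemIds` and the values 1, 5 and 10 produces `WHERE StockItemID IN (@StockItemIds1,@StockItemIds2,@StockItemIds3)` plus three parameters, so the statement stays parameterised however many items the user selects.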

Based on my customers’ experiences a “mix ‘n’ match” approach to parameterising Dapper queries looks reasonable.