Recovering from transient failures with ADO.Net RetryLogicProvider

This post is all about learning from failure; hopefully it will help someone else…

A while ago I wrote DapperTransient, which uses Polly to retry SqlConnection and SqlCommand operations if the failure might be “transient”. My DapperTransient code wraps nearly all of the Dapper methods with a Polly RetryPolicy.ExecuteAsync.

    public static Task<int> ExecuteWithRetryAsync(
        this IDbConnection connection,
        string sql,
        object param = null,
        IDbTransaction transaction = null,
        int? commandTimeout = null,
        CommandType? commandType = null) => RetryPolicy.ExecuteAsync(() => connection.ExecuteAsync(sql, param, transaction, commandTimeout, commandType));
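
The RetryPolicy itself isn't shown above. Here is a minimal sketch of what a policy like it could look like, assuming Polly 7.x and the SqlException type from Microsoft.Data.SqlClient. The class name, the short error list and the backoff intervals are illustrative assumptions, not the actual DapperTransient code.

```csharp
using System;
using System.Collections.Generic;
using Microsoft.Data.SqlClient;
using Polly;
using Polly.Retry;

public static class DapperTransientSketch
{
    // Hypothetical, deliberately short list of transient SQL error numbers.
    private static readonly HashSet<int> TransientErrorNumbers =
        new HashSet<int> { 1205, 10928, 10929, 40197, 40501, 40613 };

    // Retry up to three times, waiting 1s, 2s and then 4s between attempts.
    // Only SqlExceptions whose Number is in the list above are retried.
    public static readonly AsyncRetryPolicy RetryPolicy = Policy
        .Handle<SqlException>(ex => TransientErrorNumbers.Contains(ex.Number))
        .WaitAndRetryAsync(3, attempt => TimeSpan.FromSeconds(Math.Pow(2, attempt - 1)));
}
```

Because the policy wraps the whole Dapper call, it doesn't matter whether the failure came from opening the connection or executing the command, which is what makes this approach easy to bolt onto IDbConnection extension methods.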

One company I work for has a 10+ year old VB.Net codebase that makes extensive use of ADO.Net calls, which we moved to Azure Infrastructure as a Service (IaaS) a few years ago. Every so often they would get a cluster of ADO.Net exceptions when executing stored procedures in their Azure SQL database. While I was investigating how to retry transient failures without a major refactoring of the codebase, I stumbled across SqlRetryLogicOption + TransientErrors, SqlRetryLogicBaseProvider and RetryLogicProvider, which looked like a viable solution. At the time I also wondered if it would be possible to use the same approach with Dapper.

    namespace devMobile.WebAPIDapper.Lists.Controllers
    {
        [ApiController]
        [Route("api/[controller]")]
        public class StockItemsRetryADONetController : ControllerBase
        {
            private readonly string connectionString;
            private readonly ILogger<StockItemsRetryADONetController> logger;

            // This is a bit nasty but sufficient for PoC
            private readonly int NumberOfRetries = 3;
            private readonly TimeSpan TimeBeforeNextExecution = TimeSpan.Parse("00:00:01");
            private readonly TimeSpan MaximumInterval = TimeSpan.Parse("00:00:30");
            private readonly List<int> TransientErrors = new List<int>()
            {
                49920, // Cannot process request. Too many operations in progress for subscription
                49919, // Cannot process create or update request. Too many create or update operations in progress for subscription
                49918, // Cannot process request. Not enough resources to process request.
                41839, // Transaction exceeded the maximum number of commit dependencies.
                41325, // The current transaction failed to commit due to a serializable validation failure.
                41305, // The current transaction failed to commit due to a repeatable read validation failure.
                41302, // The current transaction attempted to update a record that has been updated since the transaction started.
                41301, // Dependency failure: a dependency was taken on another transaction that later failed to commit.
                40613, // Database XXXX on server YYYY is not currently available. Please retry the connection later.
                40501, // The service is currently busy. Retry the request after 10 seconds
                40197, // The service has encountered an error processing your request. Please try again
                20041, // Transaction rolled back. Could not execute trigger. Retry your transaction.
                17197, // Login failed due to timeout; the connection has been closed. This error may indicate heavy server load.
                14355, // The MSSQLServerADHelper service is busy. Retry this operation later.
                11001, // Connection attempt failed
                10936, // The request limit for the elastic pool has been reached.
                10929, // The server is currently too busy to support requests.
                10928, // The limit for the database has been reached
                10922, // Operation failed. Rerun the statement.
                10060, // A network-related or instance-specific error occurred while establishing a connection to SQL Server.
                10054, // A transport-level error has occurred when sending the request to the server.
                10053, // A transport-level error has occurred when receiving results from the server.
                9515, // An XML schema has been altered or dropped, and the query plan is no longer valid. Please rerun the query batch.
                8651, // Could not perform the operation because the requested memory grant was not available in resource pool
                8645, // A timeout occurred while waiting for memory resources to execute the query in resource pool, Rerun the query
                8628, // A timeout occurred while waiting to optimize the query. Rerun the query.
                4221, // Login to read-secondary failed due to long wait on 'HADR_DATABASE_WAIT_FOR_TRANSITION_TO_VERSIONING'. The replica is not available for login because row versions are missing for transactions that were in-flight when the replica was recycled
                4060, // Cannot open database requested by the login. The login failed.
                3966, // Transaction is rolled back when accessing version store. It was earlier marked as victim when the version store was shrunk due to insufficient space in tempdb. Retry the transaction.
                3960, // Snapshot isolation transaction aborted due to update conflict. You cannot use snapshot isolation to access table directly or indirectly in database
                3935, // A FILESTREAM transaction context could not be initialized. This might be caused by a resource shortage. Retry the operation.
                1807, // Could not obtain exclusive lock on database 'model'. Retry the operation later.
                1221, // The Database Engine is attempting to release a group of locks that are not currently held by the transaction. Retry the transaction.
                1205, // Deadlock
                1204, // The instance of the SQL Server Database Engine cannot obtain a LOCK resource at this time. Rerun your statement.
                1203, // A process attempted to unlock a resource it does not own. Retry the transaction.
                997, // A connection was successfully established with the server, but then an error occurred during the login process.
                921, // Database has not been recovered yet. Wait and try again.
                669, // The row object is inconsistent. Please rerun the query.
                617, // Descriptor for object in database not found in the hash table during attempt to un-hash it. Rerun the query. If a cursor is involved, close and reopen the cursor.
                601, // Could not continue scan with NOLOCK due to data movement.
                233, // The client was unable to establish a connection because of an error during connection initialization process before login.
                121, // The semaphore timeout period has expired.
                64, // A connection was successfully established with the server, but then an error occurred during the login process.
                20, // The instance of SQL Server you attempted to connect to does not support encryption.
            };

            ...
        }
    }

After some experimentation, the most reliable way I could reproduce a transient failure (usually SQL error 11001, “An error has occurred while establishing a connection to the server”) was by modifying the database connection string, or by unplugging the network cable after a connection had been explicitly opened or a command executed.

    namespace devMobile.WebAPIDapper.Lists.Controllers
    {
        [ApiController]
        [Route("api/[controller]")]
        public class StockItemsRetryADONetController : ControllerBase
        {
            ...

            [HttpGet("Dapper")]
            public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetDapper()
            {
                IEnumerable<Model.StockItemListDtoV1> response = null;

                SqlRetryLogicOption sqlRetryLogicOption = new SqlRetryLogicOption()
                {
                    NumberOfTries = NumberOfRetries,
                    DeltaTime = TimeBeforeNextExecution,
                    MaxTimeInterval = MaximumInterval,
                    TransientErrors = TransientErrors,
                    //AuthorizedSqlCondition = x => string.IsNullOrEmpty(x) || Regex.IsMatch(x, @"^SELECT", RegexOptions.IgnoreCase),
                };

                SqlRetryLogicBaseProvider sqlRetryLogicProvider = SqlConfigurableRetryFactory.CreateFixedRetryProvider(sqlRetryLogicOption);

                using (SqlConnection db = new SqlConnection(this.connectionString))
                {
                    db.RetryLogicProvider = sqlRetryLogicProvider;
                    db.RetryLogicProvider.Retrying += new EventHandler<SqlRetryingEventArgs>(OnDapperRetrying);

                    await db.OpenAsync(); // Did explicitly so I could yank out the LAN cable.

                    response = await db.QueryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]", commandType: CommandType.Text);
                }

                return this.Ok(response);
            }

            protected void OnDapperRetrying(object sender, SqlRetryingEventArgs args)
            {
                // Placeholder names are only labels; the values are bound by position.
                logger.LogInformation("Dapper retrying for {RetryCount} times for {DelayMilliseconds:0.} mSec - Error code: {Number}", args.RetryCount, args.Delay.TotalMilliseconds, (args.Exceptions[0] as SqlException)?.Number);
            }

            ...
        }
    }

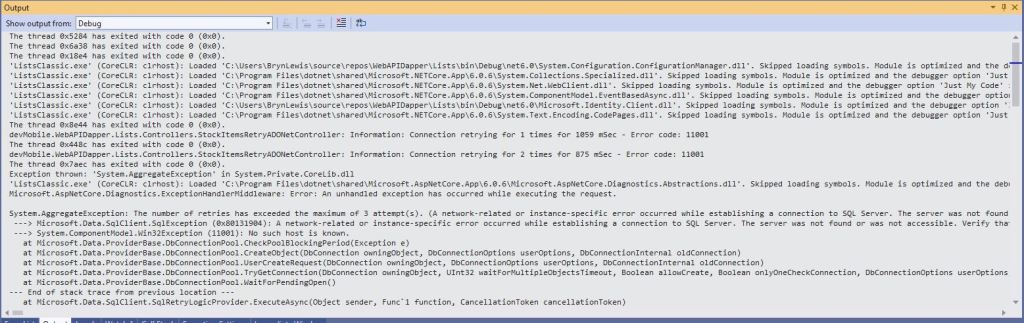

For my initial testing I used an invalid Azure SQL Database connection string and in the Visual Studio 2022 Debug output I could see retries.

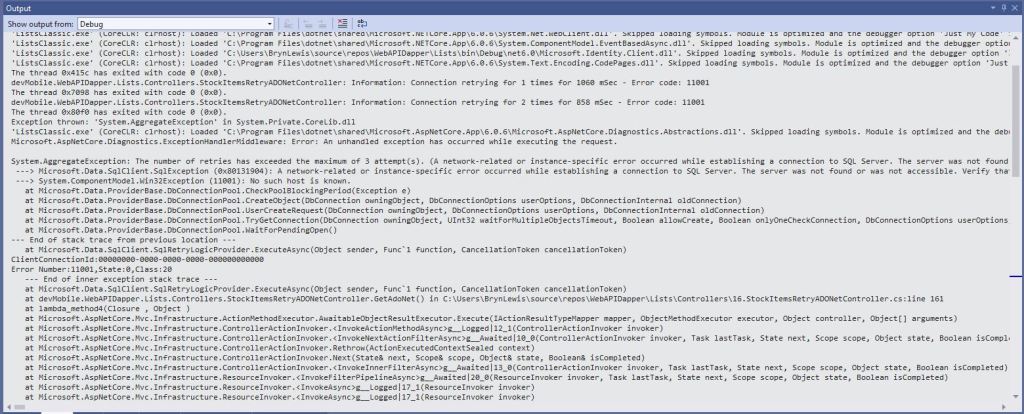

I then added an OpenAsync just before the Dapper query so I could open the database connection, pause the program with a breakpoint, unplug the LAN cable and then continue execution. The QueryAsync failed without any retries, and modifying the AuthorizedSqlCondition didn’t seem to change the way different SQL statement failures were handled.
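
To check what the commented-out AuthorizedSqlCondition would actually let through, the predicate can be exercised on its own. This is a small sketch; the class and field names are made up for illustration. My understanding from the documentation is that the predicate is given the command text and only matters for SqlCommand retries, so it would have no bearing on a failed connection open.

```csharp
using System;
using System.Text.RegularExpressions;

public static class AuthorizedSqlConditionDemo
{
    // Same expression as the commented-out AuthorizedSqlCondition in the controller.
    public static readonly Predicate<string> SelectOnly =
        x => string.IsNullOrEmpty(x) || Regex.IsMatch(x, @"^SELECT", RegexOptions.IgnoreCase);

    // SelectOnly("SELECT 1")           is true
    // SelectOnly("select 1")           is true  (IgnoreCase)
    // SelectOnly("  SELECT 1")         is false (^ anchors at the very first character)
    // SelectOnly("UPDATE t SET c = 1") is false
    // SelectOnly("")                   is true  (empty or null text is always allowed)
}
```

One thing this makes obvious is that a statement with leading whitespace or a comment before the SELECT would not be authorised for retry with this regular expression.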

There was limited documentation about how to use ADO.Net retry functionality so I hacked up another method to try and figure out what I had done wrong. The method uses the same SqlRetryLogicOption configuration for retrying connection and command failures.

    namespace devMobile.WebAPIDapper.Lists.Controllers
    {
        [ApiController]
        [Route("api/[controller]")]
        public class StockItemsRetryADONetController : ControllerBase
        {
            ...

            [HttpGet("AdoNet")]
            public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> GetAdoNet()
            {
                List<Model.StockItemListDtoV1> response = new List<Model.StockItemListDtoV1>();

                // Both connection and command share the same retry logic, not really an issue for a nasty demo
                SqlRetryLogicOption sqlRetryLogicOption = new SqlRetryLogicOption()
                {
                    NumberOfTries = NumberOfRetries,
                    DeltaTime = TimeBeforeNextExecution,
                    MaxTimeInterval = MaximumInterval,
                    TransientErrors = TransientErrors,
                    //AuthorizedSqlCondition = x => string.IsNullOrEmpty(x) || Regex.IsMatch(x, @"^SELECT", RegexOptions.IgnoreCase),
                };

                SqlRetryLogicBaseProvider sqlRetryLogicProvider = SqlConfigurableRetryFactory.CreateFixedRetryProvider(sqlRetryLogicOption);

                // This ADO.Net is a bit overkill but just wanted to highlight ADO.Net vs. Dapper
                using (SqlConnection sqlConnection = new SqlConnection(this.connectionString))
                {
                    sqlConnection.RetryLogicProvider = sqlRetryLogicProvider;
                    sqlConnection.RetryLogicProvider.Retrying += new EventHandler<SqlRetryingEventArgs>(OnConnectionRetrying);

                    await sqlConnection.OpenAsync(); // Did explicitly so I could yank out the LAN cable.

                    using (SqlCommand sqlCommand = new SqlCommand())
                    {
                        sqlCommand.Connection = sqlConnection;
                        sqlCommand.CommandText = @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]";
                        sqlCommand.CommandType = CommandType.Text;

                        sqlCommand.RetryLogicProvider = sqlRetryLogicProvider;
                        sqlCommand.RetryLogicProvider.Retrying += new EventHandler<SqlRetryingEventArgs>(OnCommandRetrying);

                        // Overkill, but makes it really obvious
                        using (SqlDataReader sqlDataReader = await sqlCommand.ExecuteReaderAsync(CommandBehavior.CloseConnection))
                        {
                            while (await sqlDataReader.ReadAsync())
                            {
                                response.Add(new Model.StockItemListDtoV1()
                                {
                                    Id = sqlDataReader.GetInt32("Id"),
                                    Name = sqlDataReader.GetString("Name"),
                                    RecommendedRetailPrice = sqlDataReader.GetDecimal("RecommendedRetailPrice"),
                                    TaxRate = sqlDataReader.GetDecimal("TaxRate"),
                                });
                            }
                        }
                    }
                }

                return this.Ok(response);
            }

            protected void OnConnectionRetrying(object sender, SqlRetryingEventArgs args)
            {
                // Placeholder names are only labels; the values are bound by position.
                logger.LogInformation("Connection retrying for {RetryCount} times for {DelayMilliseconds:0.} mSec - Error code: {Number}", args.RetryCount, args.Delay.TotalMilliseconds, (args.Exceptions[0] as SqlException)?.Number);
            }

            protected void OnCommandRetrying(object sender, SqlRetryingEventArgs args)
            {
                logger.LogInformation("Command retrying for {RetryCount} times for {DelayMilliseconds:0.} mSec - Error code: {Number}", args.RetryCount, args.Delay.TotalMilliseconds, (args.Exceptions[0] as SqlException)?.Number);
            }
        }
    }

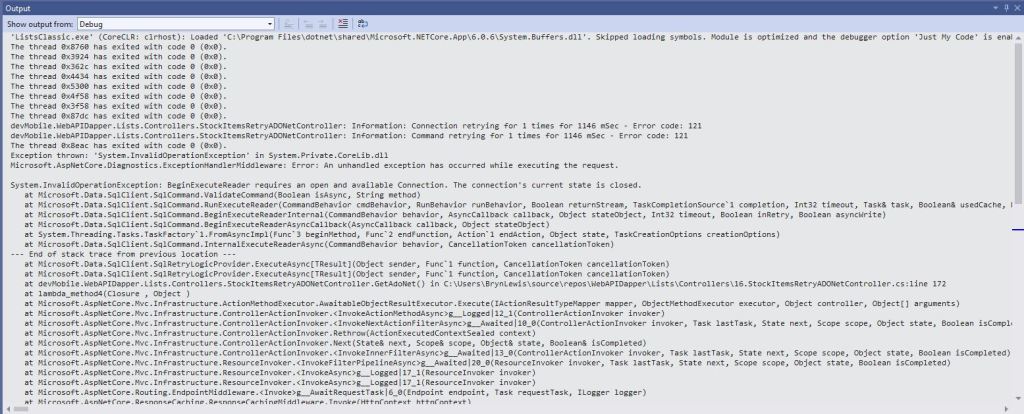

I also added two RetryLogicProvider.Retrying handlers, one for SqlConnection and the other for SqlCommand, so I could see what was being retried.

The number of retries when I unplugged the LAN cable wasn’t quite what I was expecting…
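
One thing that can throw the count off is the naming. As far as I can tell from the documentation, NumberOfTries is the total number of attempts including the first one, so assigning my NumberOfRetries = 3 to it leaves room for at most two retries (and two Retrying events) per operation. The hand-rolled loop below is only an illustration of that counting under this assumption; it is not the Microsoft.Data.SqlClient implementation, and FixedRetrySketch and its parameters are hypothetical.

```csharp
using System;

public static class FixedRetrySketch
{
    // Runs the operation up to numberOfTries times in total and returns
    // how many retries were needed before it succeeded.
    // onRetrying is called before each retry, where a Retrying handler would log.
    public static int Execute(
        Action operation,
        int numberOfTries,
        Func<Exception, bool> isTransient,
        Action<int> onRetrying)
    {
        int retries = 0;
        for (int attempt = 1; ; attempt++)
        {
            try
            {
                operation();
                return retries;
            }
            catch (Exception ex) when (attempt < numberOfTries && isTransient(ex))
            {
                // The final attempt is not caught here, so its exception propagates.
                retries++;
                onRetrying(retries);
                // A real provider would wait for its configured interval here;
                // the delay is left out to keep the sketch fast.
            }
        }
    }
}
```

With numberOfTries set to 3 and an operation that always fails, the operation runs three times and onRetrying fires twice before the last exception escapes.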

I didn’t fully understand the differences between System.Data.SqlClient and Microsoft.Data.SqlClient, so I downloaded the source code for Dapper and started hacking. My approach was to modify the Dapper CommandDefinition struct so a caller could pass in a SqlRetryLogicBaseProvider instance.

    namespace Dapper
    {
        /// <summary>
        /// Represents the key aspects of a sql operation
        /// </summary>
        public struct CommandDefinition
        {
            internal static CommandDefinition ForCallback(object parameters)
            {
                if (parameters is DynamicParameters)
                {
                    return new CommandDefinition(parameters);
                }
                else
                {
                    return default;
                }
            }

            internal void OnCompleted()
            {
                (Parameters as SqlMapper.IParameterCallbacks)?.OnCompleted();
            }

            /// <summary>
            /// The command (sql or a stored-procedure name) to execute
            /// </summary>
            public string CommandText { get; }

            /// <summary>
            /// The parameters associated with the command
            /// </summary>
            public object Parameters { get; }

            /// <summary>
            /// The active transaction for the command
            /// </summary>
            public IDbTransaction Transaction { get; }

            /// <summary>
            /// The effective timeout for the command
            /// </summary>
            public int? CommandTimeout { get; }

            /// <summary>
            /// The type of command that the command-text represents
            /// </summary>
            public CommandType? CommandType { get; }

            /// <summary>
            /// Should data be buffered before returning?
            /// </summary>
            public bool Buffered => (Flags & CommandFlags.Buffered) != 0;

            /// <summary>
            /// The retry strategy for the command (my addition)
            /// </summary>
            public SqlRetryLogicBaseProvider SqlRetryLogicProvider { get; }

            /// <summary>
            /// Should the plan for this query be cached?
            /// </summary>
            internal bool AddToCache => (Flags & CommandFlags.NoCache) == 0;

            /// <summary>
            /// Additional state flags against this command
            /// </summary>
            public CommandFlags Flags { get; }

            /// <summary>
            /// Can async queries be pipelined?
            /// </summary>
            public bool Pipelined => (Flags & CommandFlags.Pipelined) != 0;

            /// <summary>
            /// Initialize the command definition
            /// </summary>
            /// <param name="commandText">The text for this command.</param>
            /// <param name="parameters">The parameters for this command.</param>
            /// <param name="transaction">The transaction for this command to participate in.</param>
            /// <param name="commandTimeout">The timeout (in seconds) for this command.</param>
            /// <param name="commandType">The <see cref="CommandType"/> for this command.</param>
            /// <param name="flags">The behavior flags for this command.</param>
            /// <param name="sqlRetryLogicProvider">Retry strategy for this command.</param>
            /// <param name="cancellationToken">The cancellation token for this command.</param>
            public CommandDefinition(string commandText, object parameters = null, IDbTransaction transaction = null, int? commandTimeout = null,
                CommandType? commandType = null, CommandFlags flags = CommandFlags.Buffered,
                SqlRetryLogicBaseProvider sqlRetryLogicProvider = null,
                CancellationToken cancellationToken = default)
            {
                CommandText = commandText;
                Parameters = parameters;
                Transaction = transaction;
                CommandTimeout = commandTimeout;
                CommandType = commandType;
                Flags = flags;
                SqlRetryLogicProvider = sqlRetryLogicProvider;
                CancellationToken = cancellationToken;
            }

            ...
        }
    }

This didn’t end well, as the Dapper library extends System.Data.IDbConnection, which doesn’t “natively” support retry logic. Several hours of my life later, I now understand a bit more about the differences between System.Data.SqlClient and Microsoft.Data.SqlClient.
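
One avenue I haven't tested is to leave Dapper alone and wrap the call from the outside, much like the Polly version does. SqlRetryLogicBaseProvider exposes ExecuteAsync, so in principle a Dapper query can be run inside the provider's retry loop. The sketch below assumes Microsoft.Data.SqlClient 3.0 or later and Dapper; QueryWithRetryAsync is a hypothetical helper, not part of either library.

```csharp
using System.Collections.Generic;
using System.Threading.Tasks;
using Dapper;
using Microsoft.Data.SqlClient;

public static class DapperRetrySketch
{
    // Hypothetical helper: runs a Dapper query inside the provider's retry loop.
    // Dapper still creates the underlying command itself, so the connection
    // (not a SqlCommand) is passed as the sender.
    public static Task<IEnumerable<T>> QueryWithRetryAsync<T>(
        this SqlConnection connection,
        SqlRetryLogicBaseProvider retryLogicProvider,
        string sql,
        object param = null)
    {
        return retryLogicProvider.ExecuteAsync<IEnumerable<T>>(
            connection,
            () => connection.QueryAsync<T>(sql, param));
    }
}
```

A call would look like `await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sqlRetryLogicProvider, sql)`. Whether a retry helps once the underlying connection has dropped is a separate question, as the delegate reuses the same SqlConnection on every attempt.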