Introduction

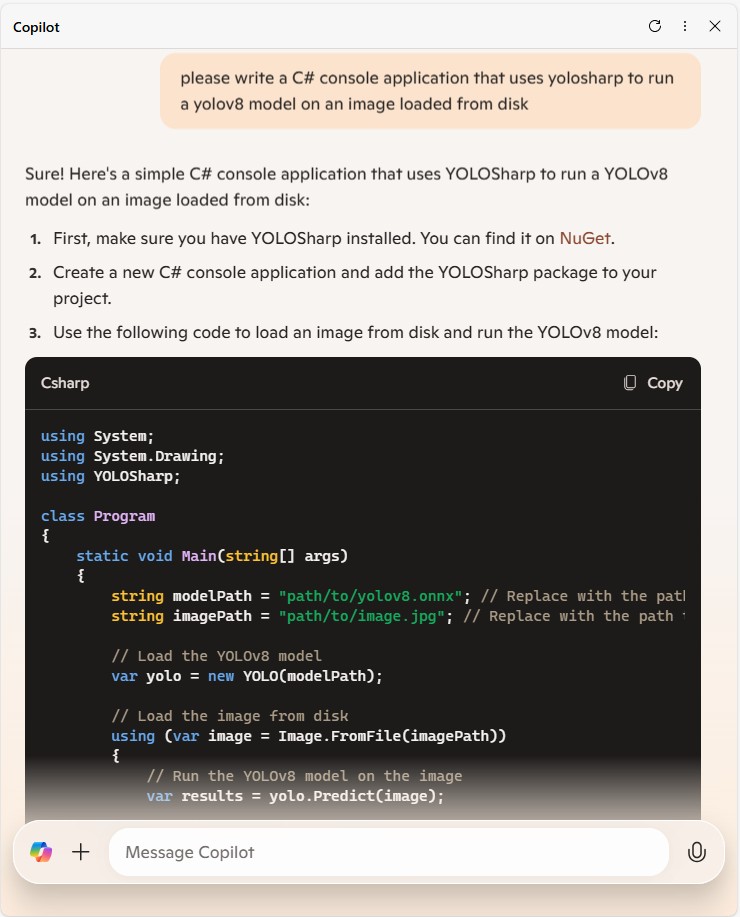

Using Copilot to generate the code to retrieve an image from a security camera went surprisingly well so next step was something a bit more complex. For this post I have used Copilot prompts to generate code which uses Ultralytics YoloV8 and Compunet YoloSharp for object detection, object classification, pose estimation, and instance segmentation

using System;

using System.Drawing;

using YOLOSharp;

class Program

{

static void Main(string[] args)

{

string modelPath = "path/to/yolov8.onnx"; // Replace with the path to your YOLOv8 model

string imagePath = "path/to/image.jpg"; // Replace with the path to your image

// Load the YOLOv8 model

var yolo = new YOLO(modelPath);

// Load the image from disk

using (var image = Image.FromFile(imagePath))

{

// Run the YOLOv8 model on the image

var results = yolo.Predict(image);

// Display the results

foreach (var result in results)

{

Console.WriteLine($"Label: {result.Label}, Confidence: {result.Confidence}, BoundingBox: {result.BoundingBox}");

}

}

}

}

In this example:

Replace path/to/yolov8.onnx with the actual path to your YOLOv8 model file.

Replace path/to/image.jpg with the actual path to your image file.

This application loads the YOLOv8 model using YOLOSharp, loads an image from disk, runs the model on the image, and displays the results, including the label, confidence, and bounding box for each detected object.

Happy coding! 🚀

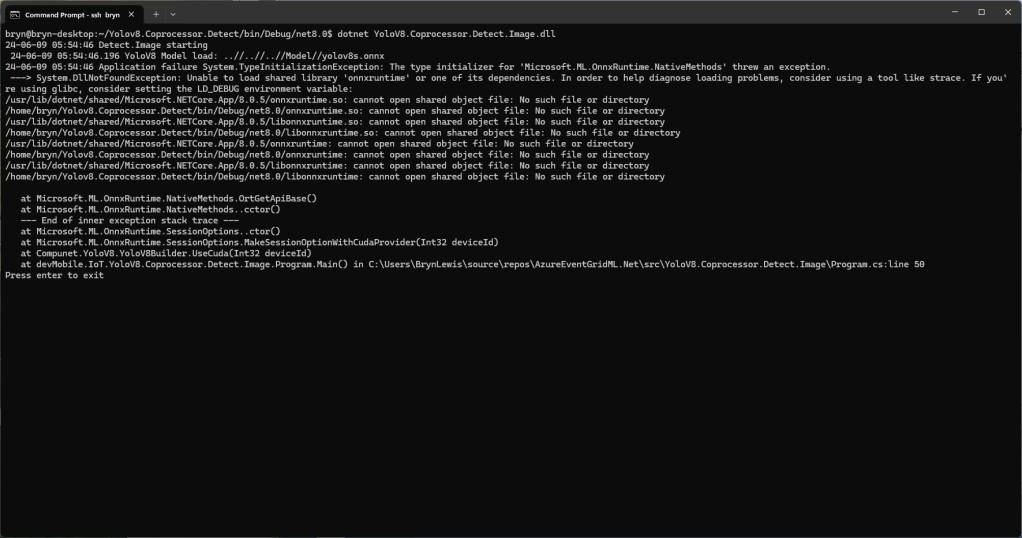

Copilot had “assumed” I meant object detection(reasonable) with YoloSharp and the code didn’t compile.

Object Detection

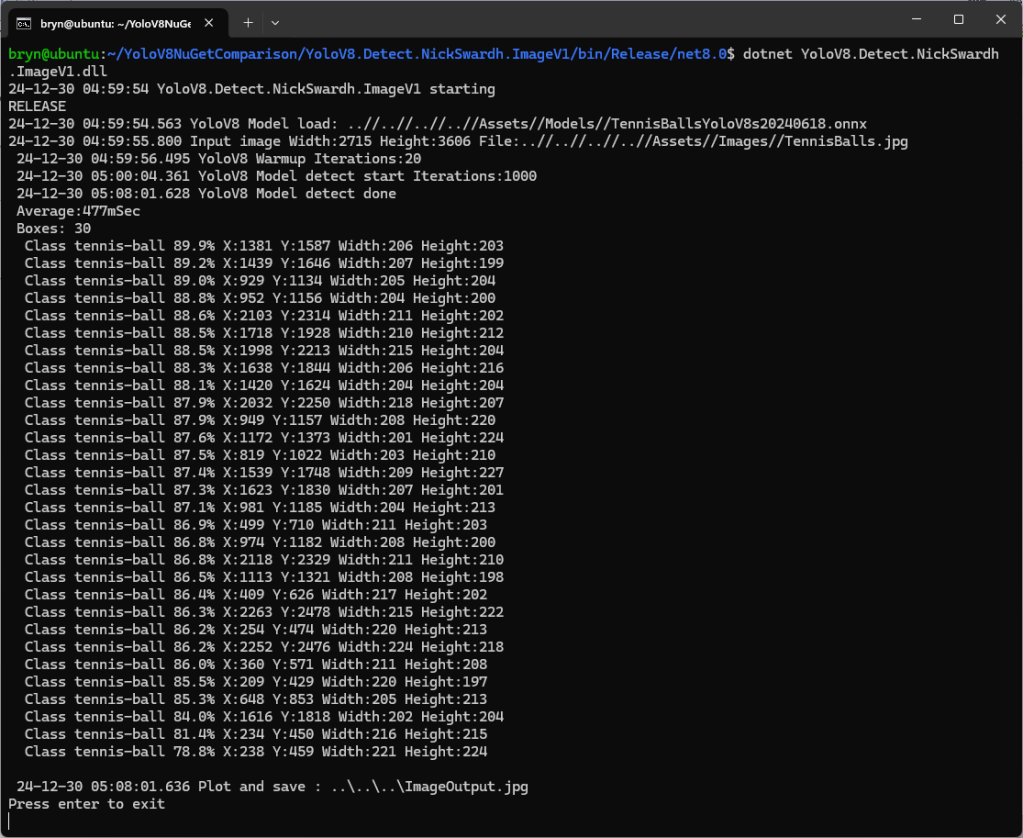

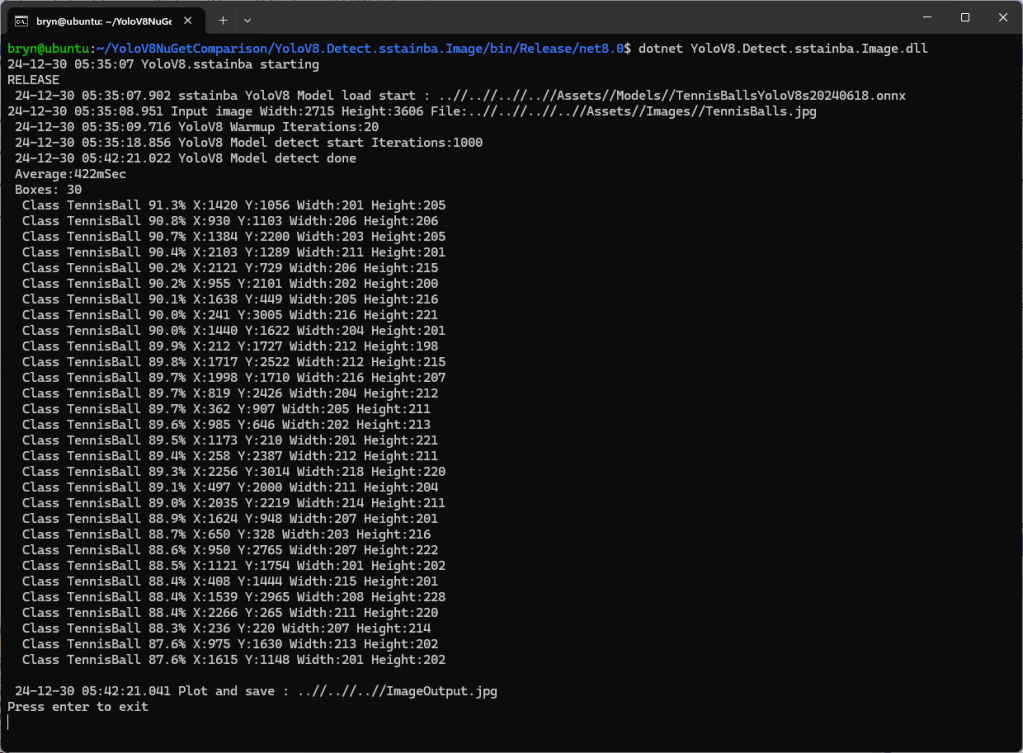

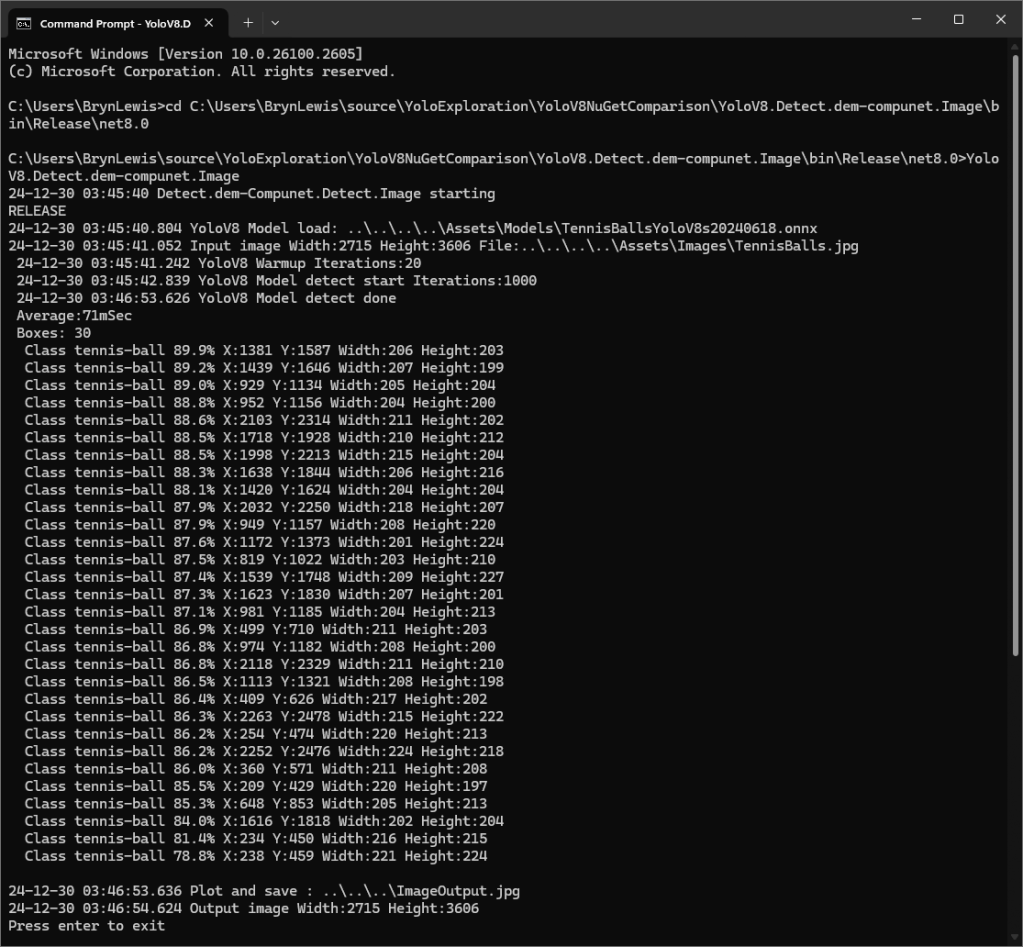

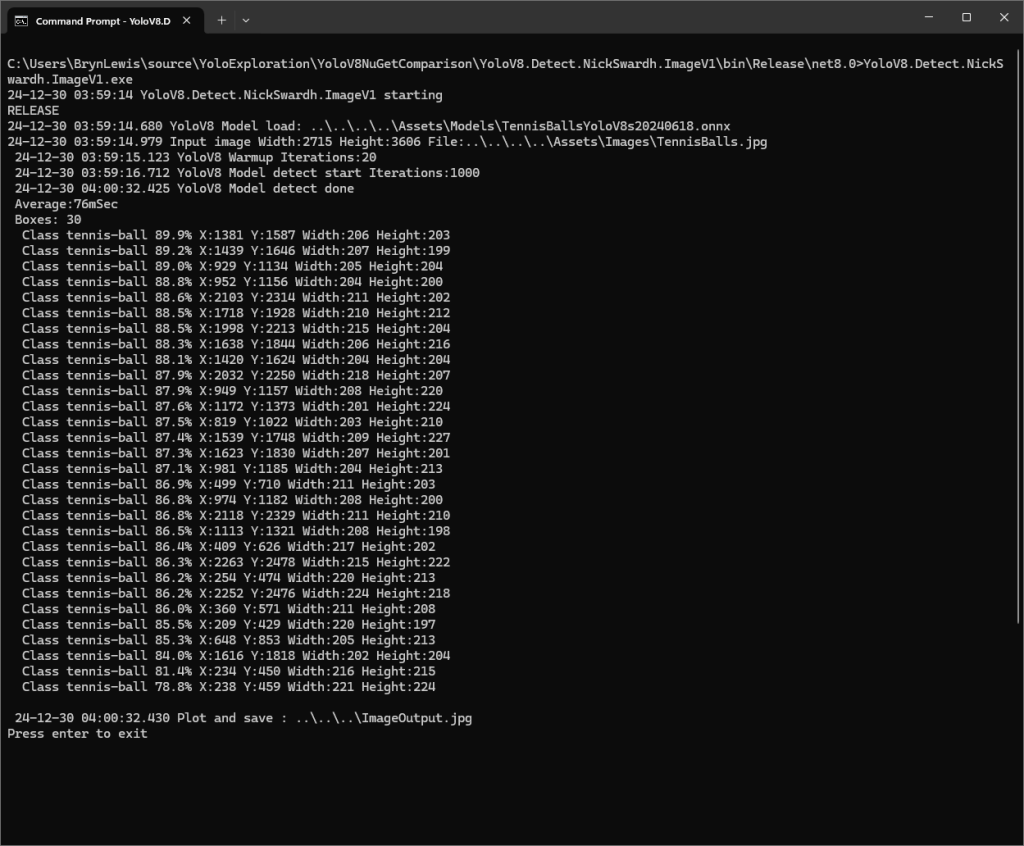

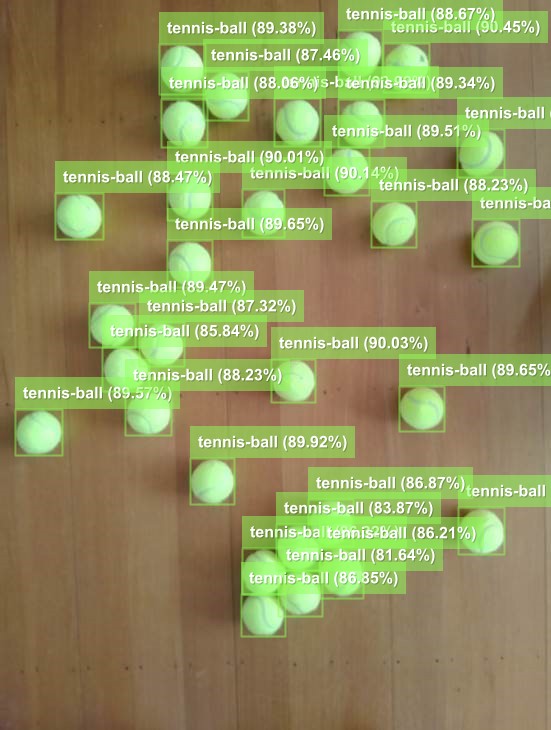

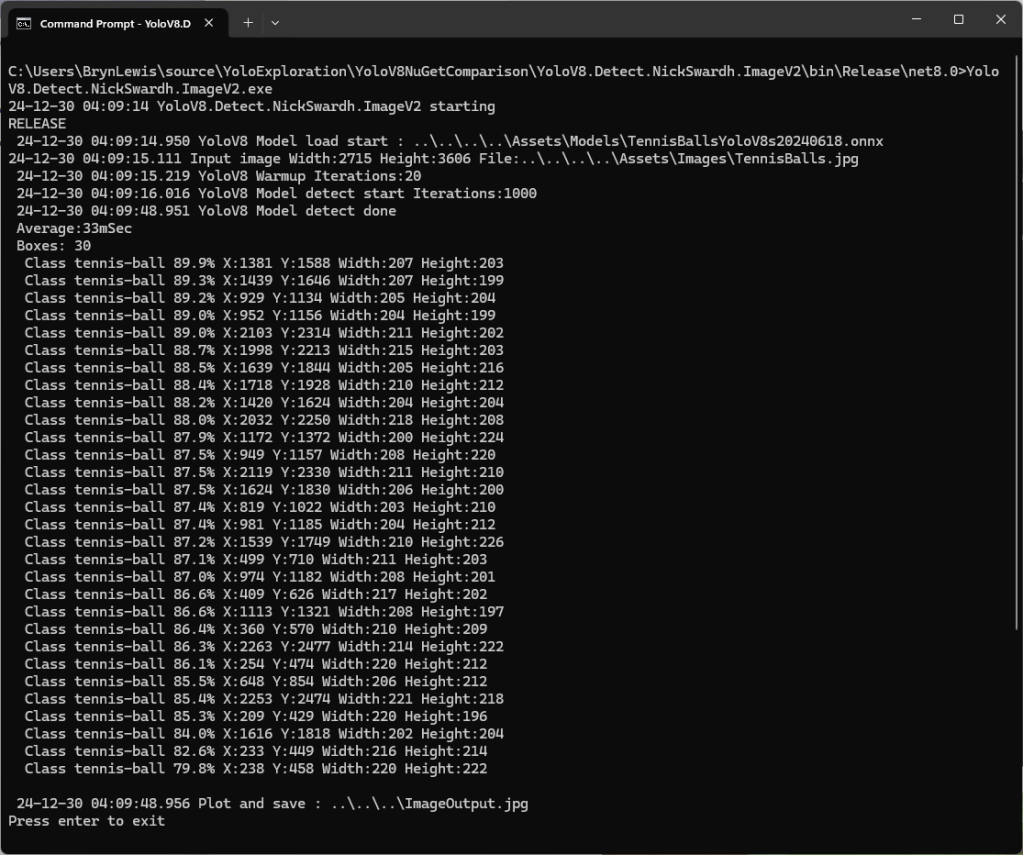

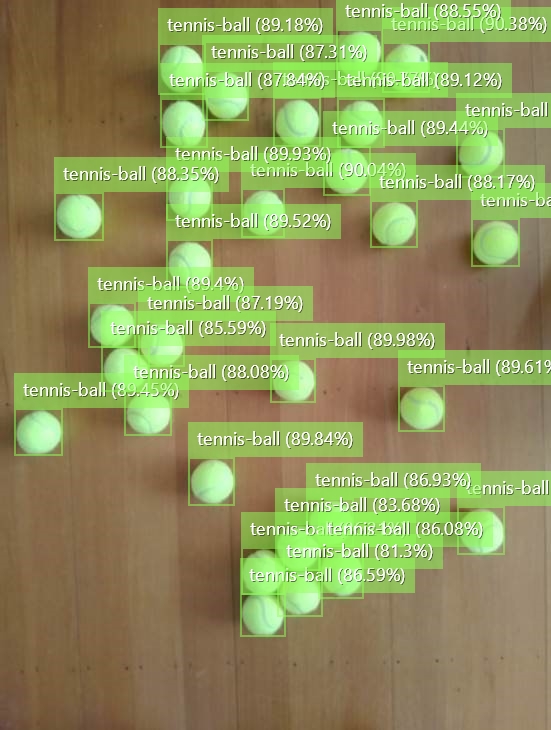

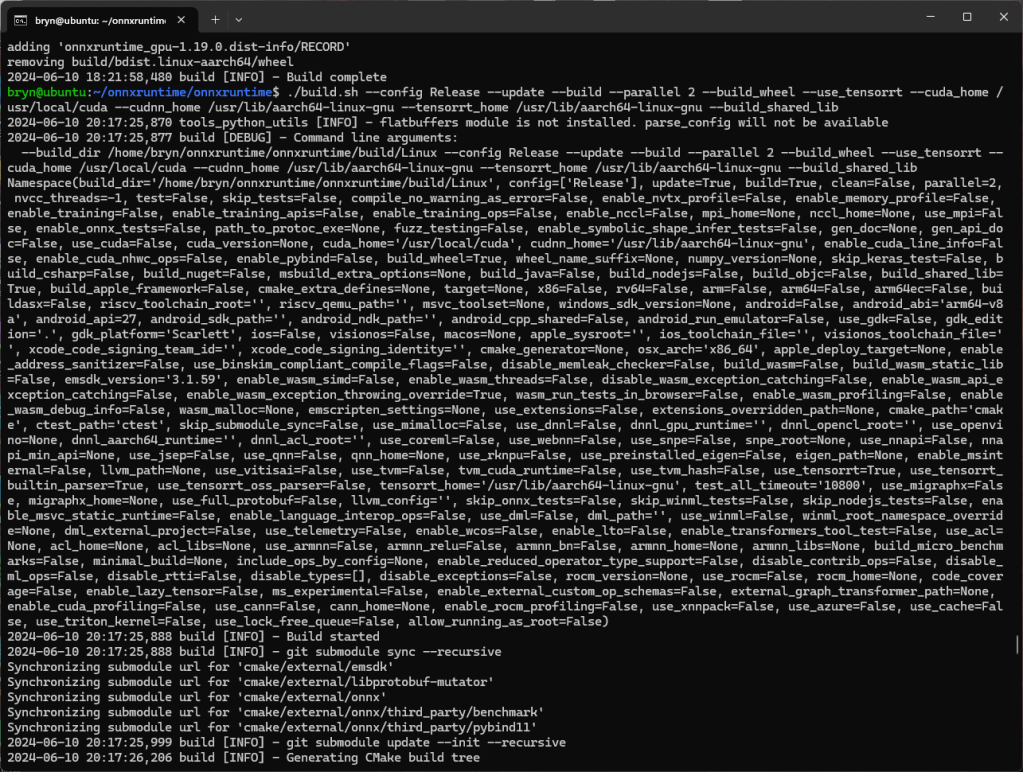

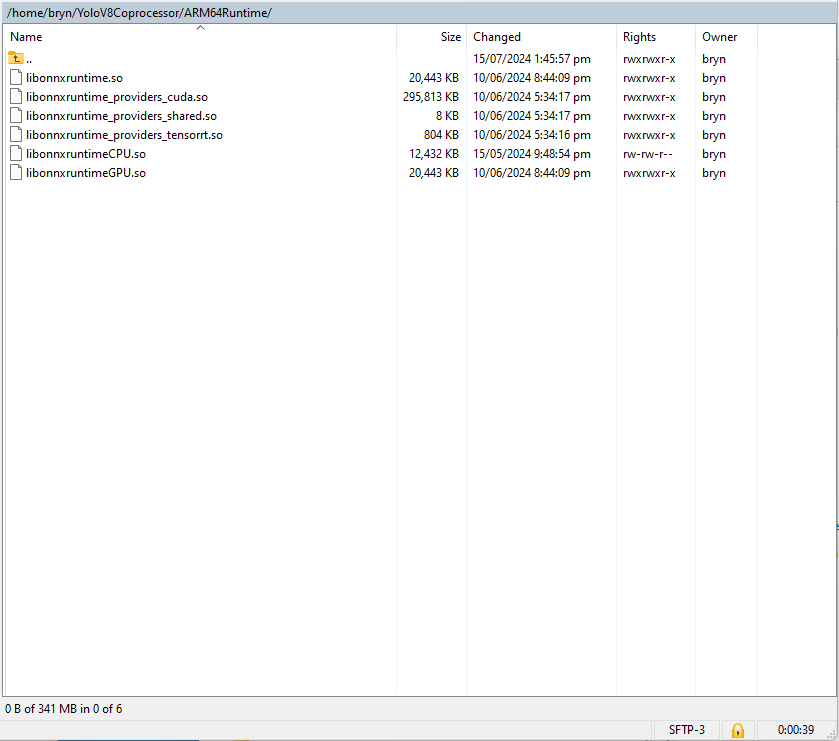

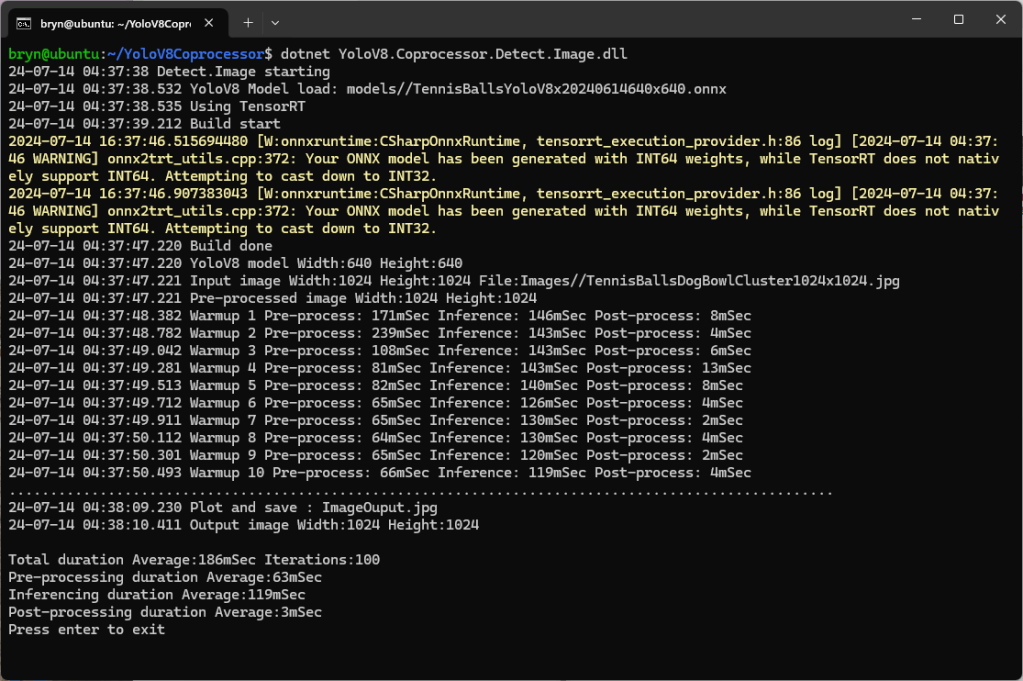

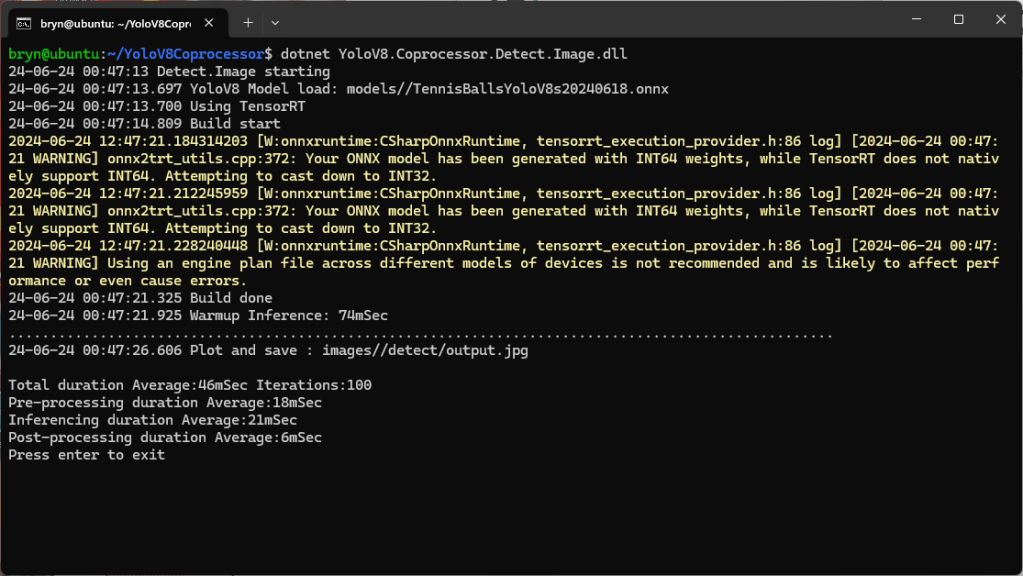

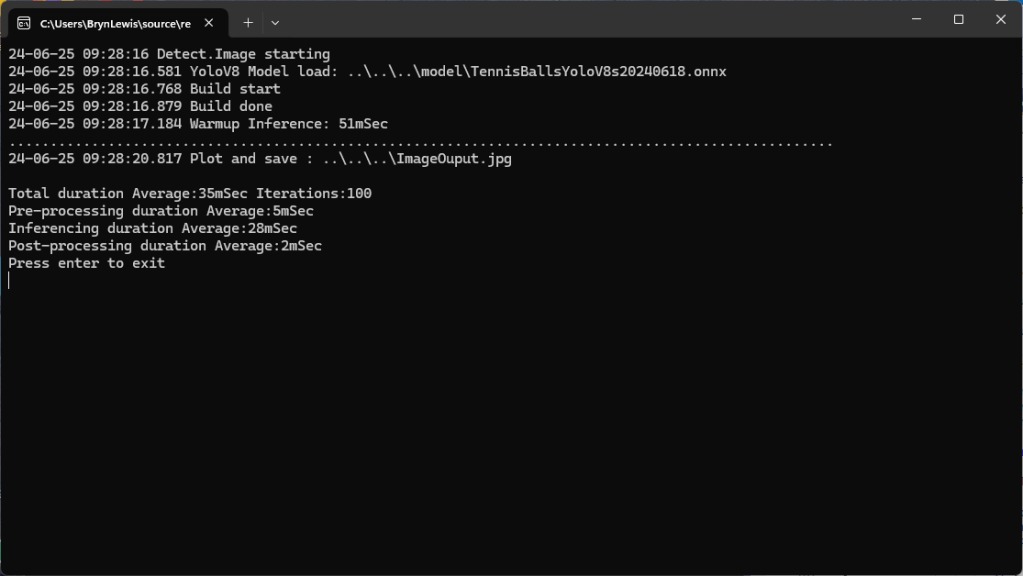

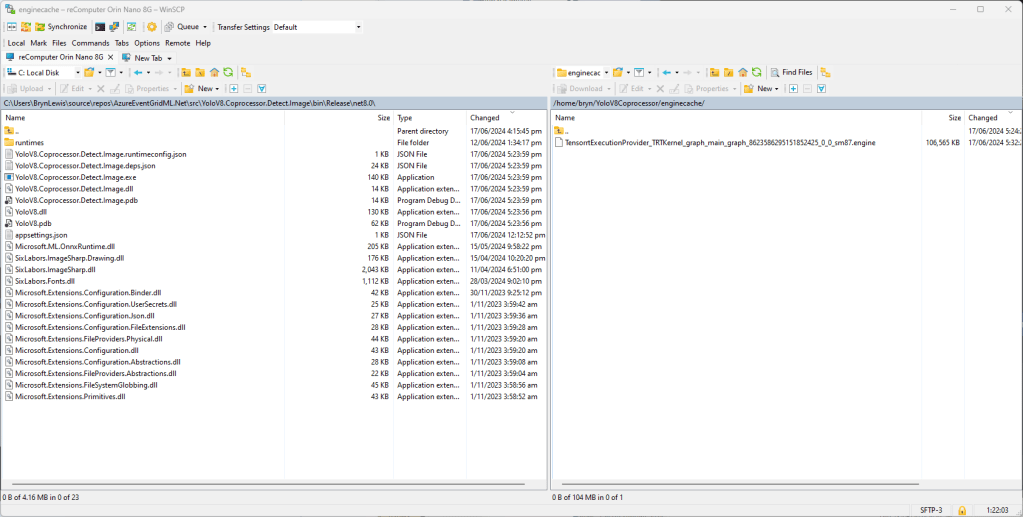

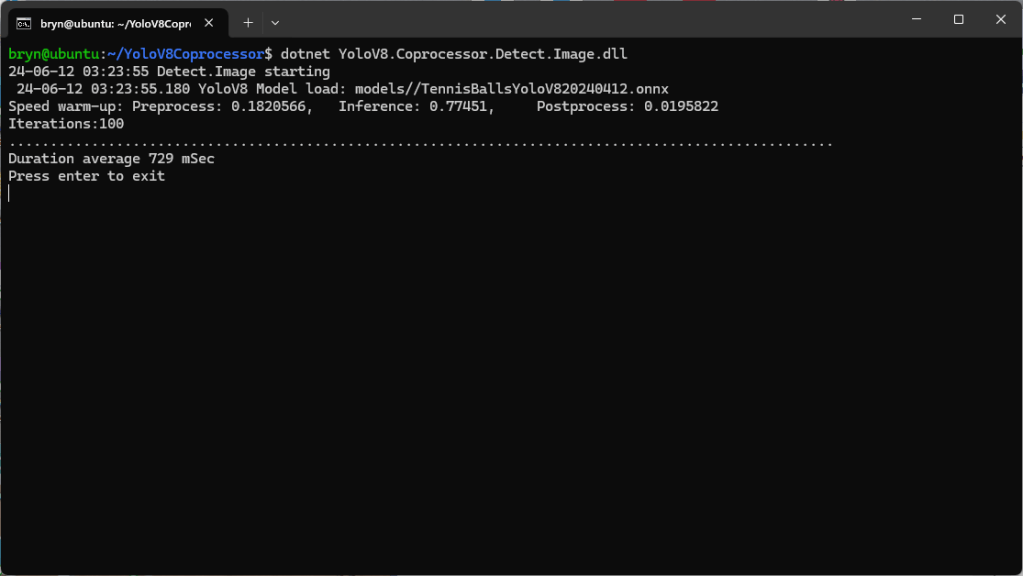

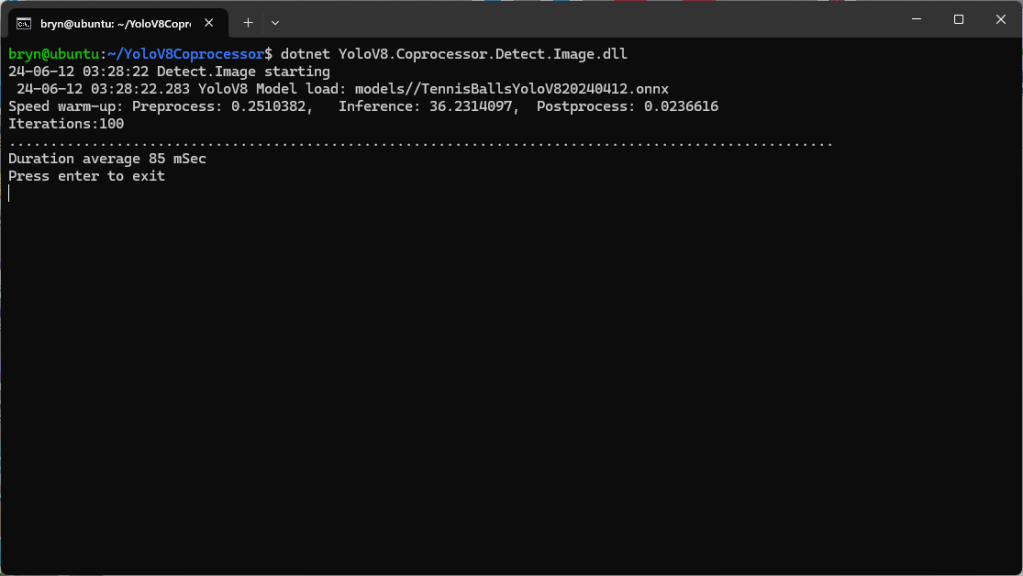

After some minor fixes and shifting the configuration to an appsettings file the application compiled.

using Compunet.YoloSharp;

using Microsoft.Extensions.Configuration;

using SixLabors.ImageSharp;

class Program

{

static void Main(string[] args)

{

Model.ApplicationSettings applicationSettings;

Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} 0.3.CameraHTTPClient starting");

#if RELEASE

Console.WriteLine("RELEASE");

#else

Console.WriteLine("DEBUG");

#endif

// load the app settings into configuration

var configuration = new ConfigurationBuilder()

.AddJsonFile("appsettings.json", false, true)

.Build();

applicationSettings = configuration.GetSection("ApplicationSettings").Get<Model.ApplicationSettings>();

// Load the YOLOv8 model

var yolo = new YoloPredictor(applicationSettings.ModelPath);

// Load the image from disk

using (var image = Image.Load(applicationSettings.ImagePath))

{

// Run the YOLOv8 model on the image

var results = yolo.Detect(image);

// Display the results

foreach (var result in results)

{

Console.WriteLine($"Label: {result.Name}, Confidence: {result.Confidence}, BoundingBox: {result.Bounds}");

}

}

}

}

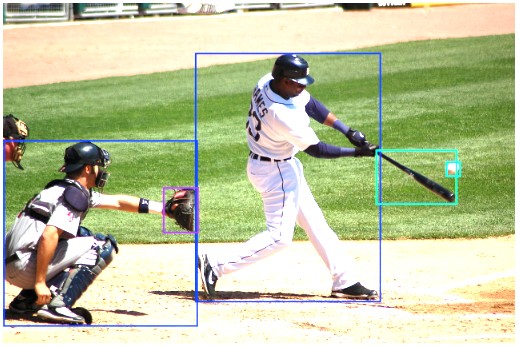

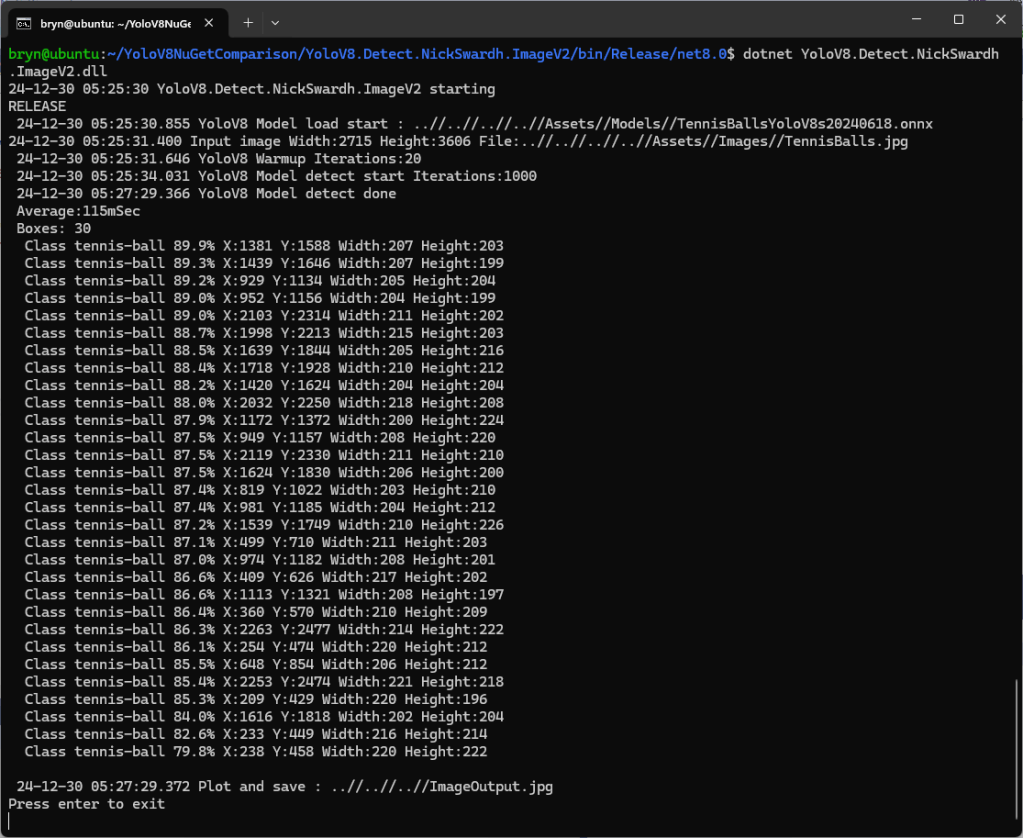

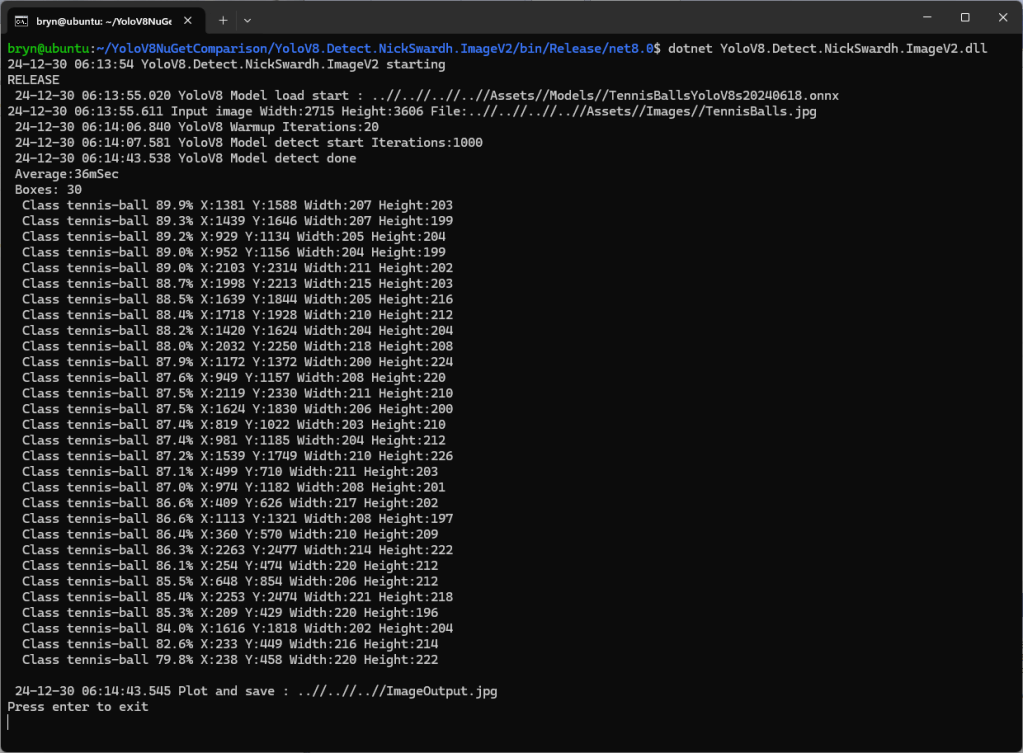

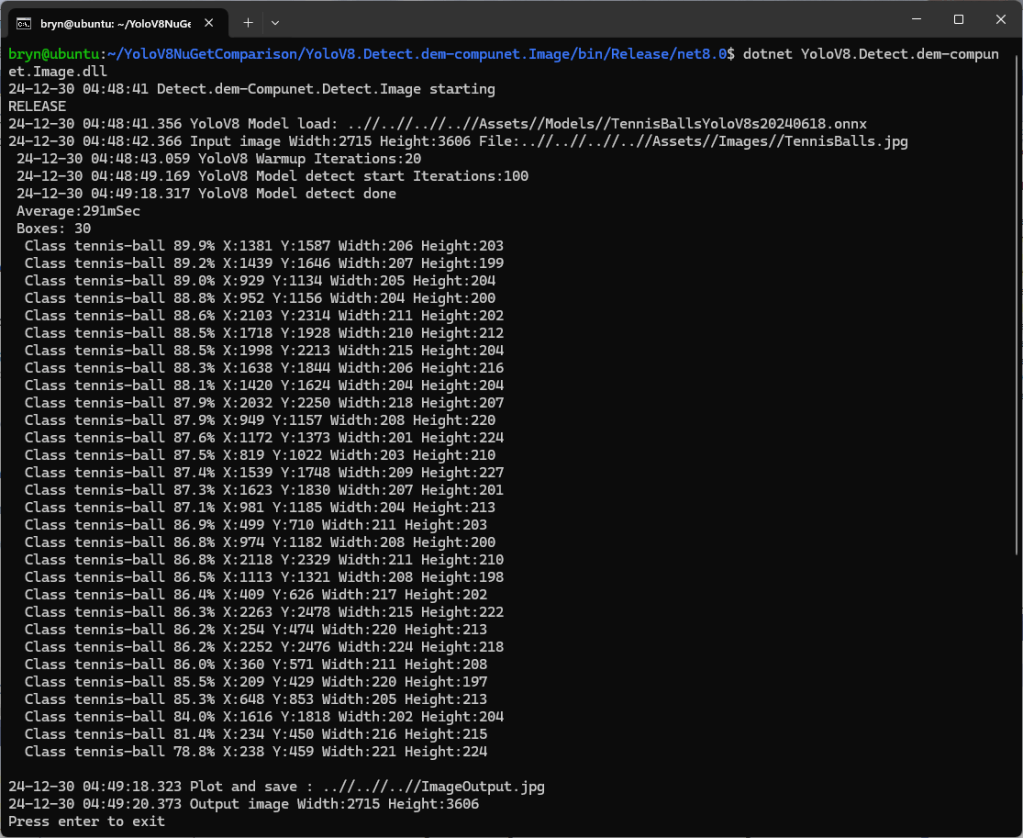

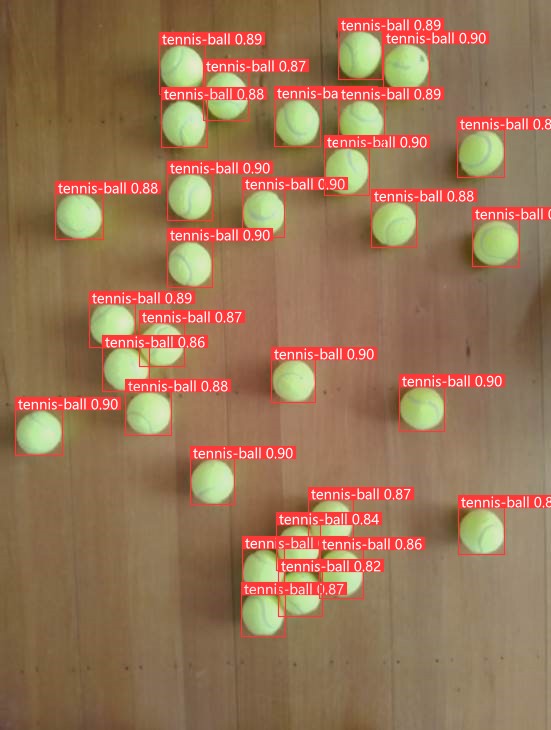

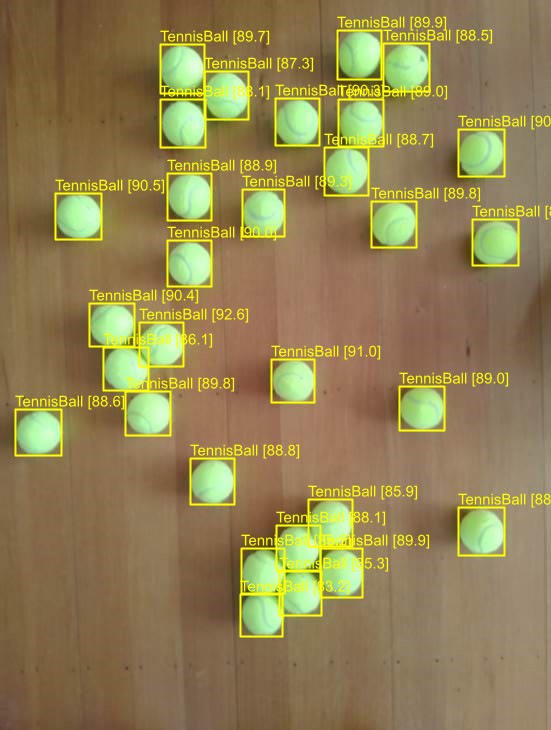

I tested the implementation with sample “sports” image from the YoloSharp Github repository

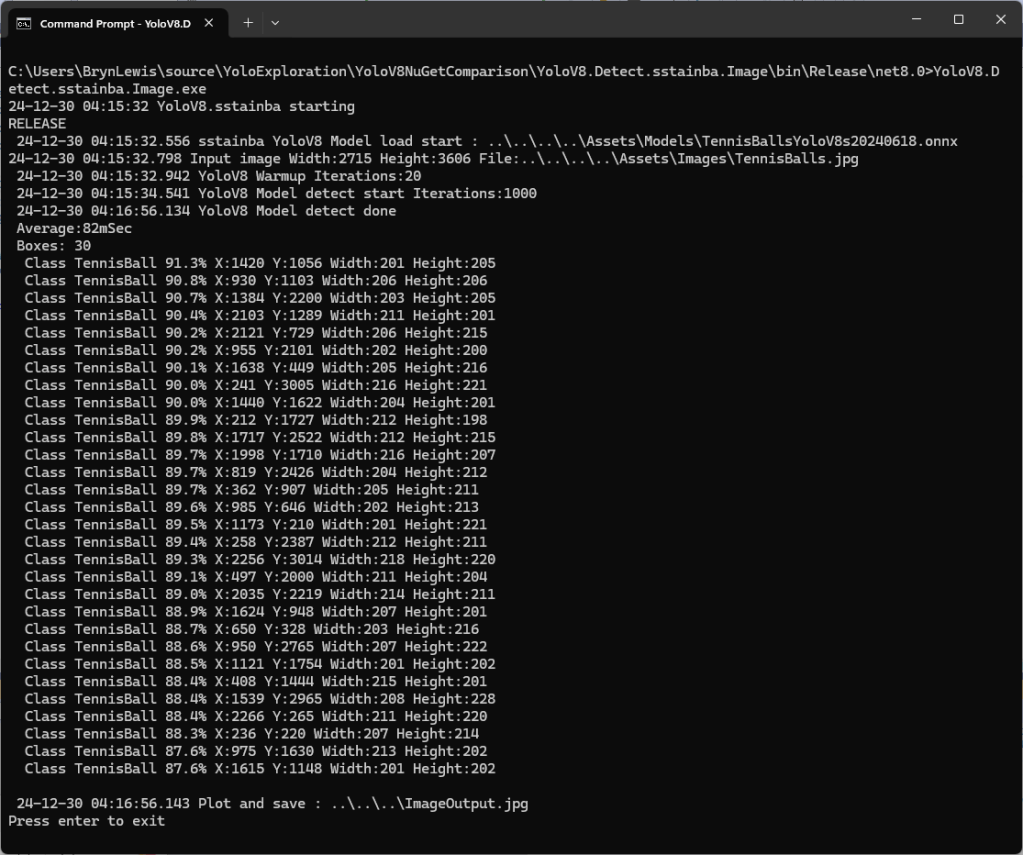

The console application output looked reasonable

I manually compared the output of the console application with the Ultralytics Hub model preview tab and the results looked close enough.

Pose

My Copilot prompt

please write a C# console application that uses Compunet YoloSharp to run a yolov8 pose model on an image loaded from disk

using Compunet.YoloSharp;

using Microsoft.Extensions.Configuration;

using SixLabors.ImageSharp;

class Program

{

static void Main(string[] args)

{

Model.ApplicationSettings applicationSettings;

Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} 0.3.CameraHTTPClient starting");

#if RELEASE

Console.WriteLine("RELEASE");

#else

Console.WriteLine("DEBUG");

#endif

// load the app settings into configuration

var configuration = new ConfigurationBuilder()

.AddJsonFile("appsettings.json", false, true)

.Build();

applicationSettings = configuration.GetSection("ApplicationSettings").Get<Model.ApplicationSettings>();

// Load the YOLOv8 pose model

var yolo = new YoloPredictor(applicationSettings.ModelPath);

// Load the image from disk

using (var image = Image.Load(applicationSettings.ImagePath))

{

// Run the YOLOv8 pose model on the image

var results = yolo.Pose(image);

// Display the results

foreach (var result in results)

{

Console.WriteLine($"Label: {result.Name.Name}, Confidence: {result.Confidence}, BoundingBox: {result.Bounds}");

Console.WriteLine("Keypoints:");

foreach (var keypoint in result)

{

Console.WriteLine($" - {keypoint.Point}");

}

}

}

}

}

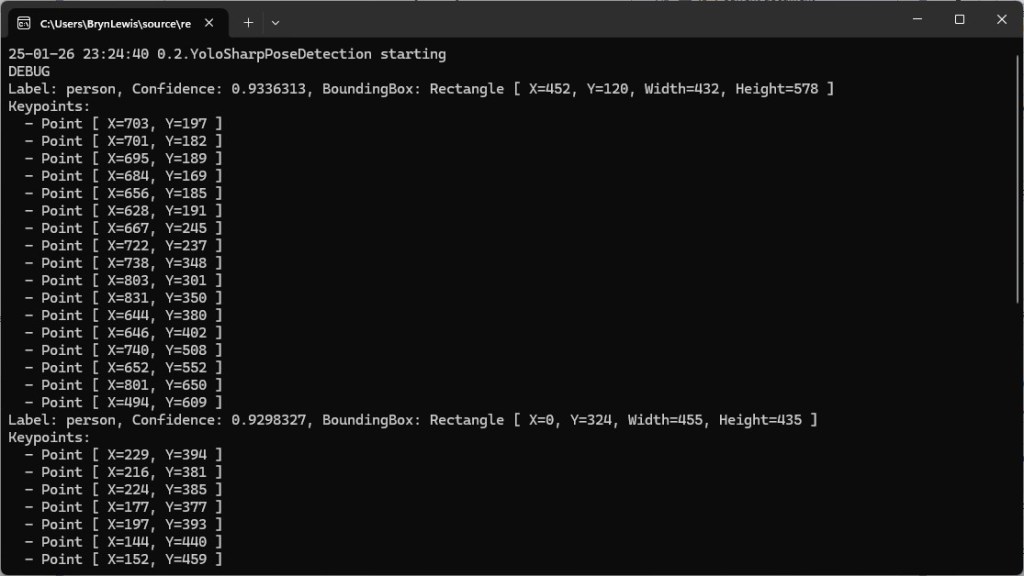

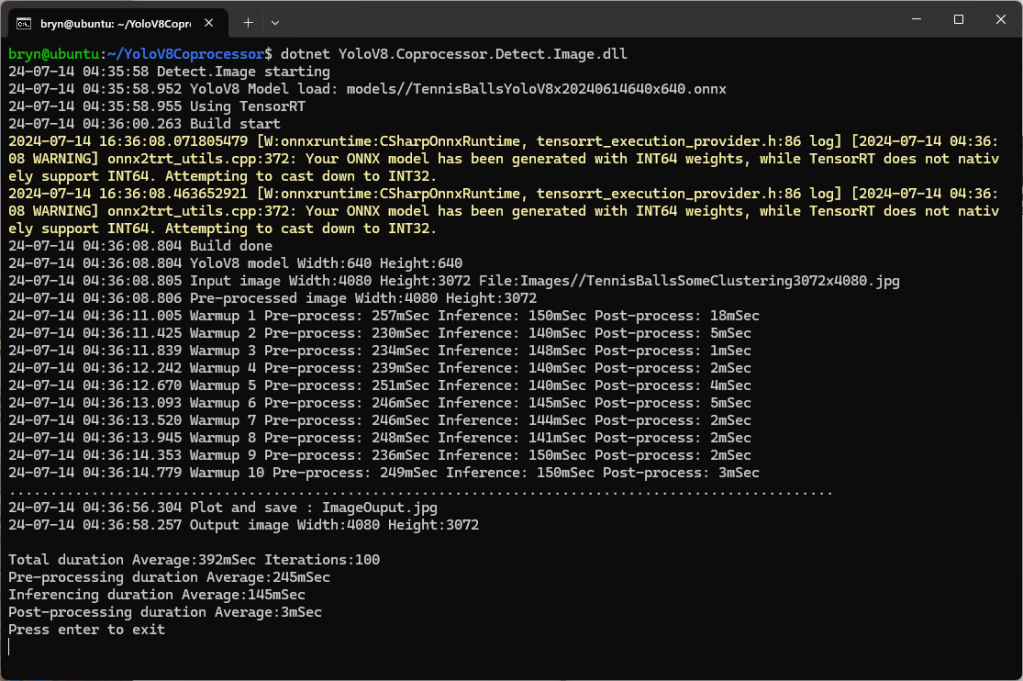

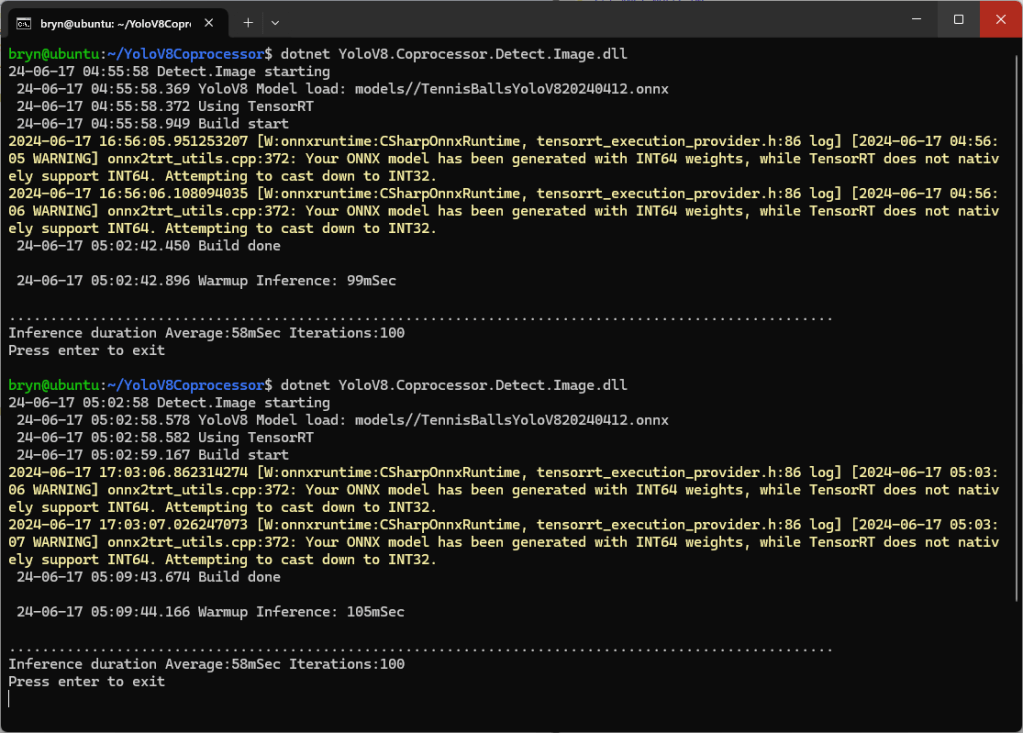

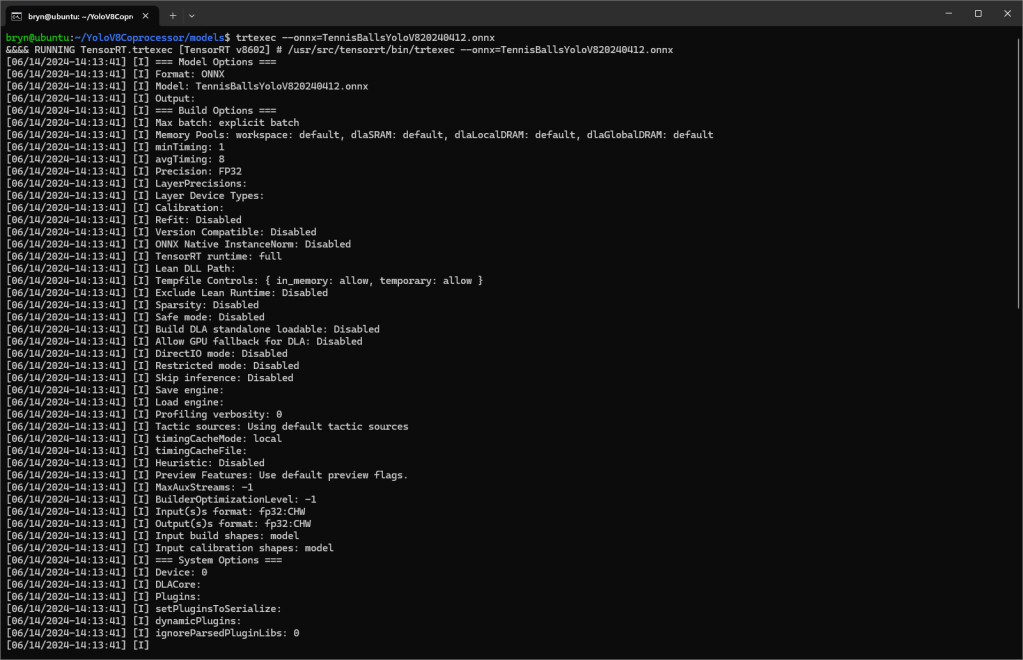

After some minor fixes and shifting the configuration to an appsettings file the application compiled. I tested the implementation with sample “sports” image from the YoloSharp Github repository

The console application output looked reasonable

I manually compared the output of the console application with the Ultralytics Hub model preview tab and the results were reasonable

Classification

My Copilot prompt

please write a C# console application that uses Compunet YoloSharp to run a yolov8 pose model on an image loaded from disk

using Compunet.YoloSharp;

using Microsoft.Extensions.Configuration;

using SixLabors.ImageSharp;

class Program

{

static void Main(string[] args)

{

Model.ApplicationSettings applicationSettings;

Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} 0.3.CameraHTTPClient starting");

#if RELEASE

Console.WriteLine("RELEASE");

#else

Console.WriteLine("DEBUG");

#endif

// load the app settings into configuration

var configuration = new ConfigurationBuilder()

.AddJsonFile("appsettings.json", false, true)

.Build();

applicationSettings = configuration.GetSection("ApplicationSettings").Get<Model.ApplicationSettings>();

// Load the YOLOv8 classification model

var yolo = new YoloPredictor(applicationSettings.ModelPath);

// Load the image from disk

using (var image = Image.Load(applicationSettings.ImagePath))

{

// Run the YOLOv8 classification model on the image

var results = yolo.Classify(image);

// Display the results

foreach (var result in results)

{

Console.WriteLine($"Label: {result.Name.Name}, Confidence: {result.Confidence}");

}

}

}

}

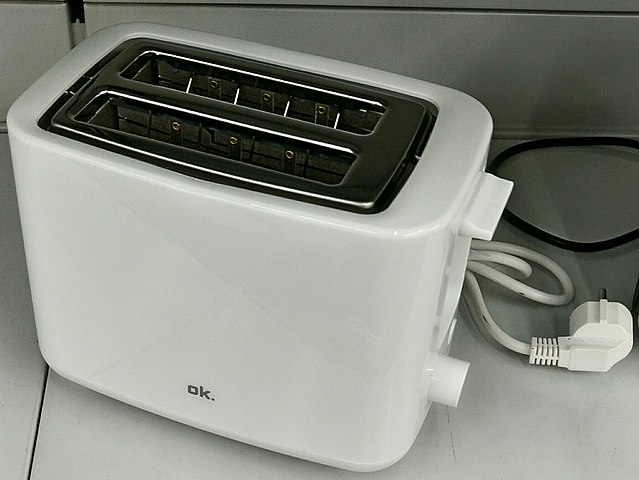

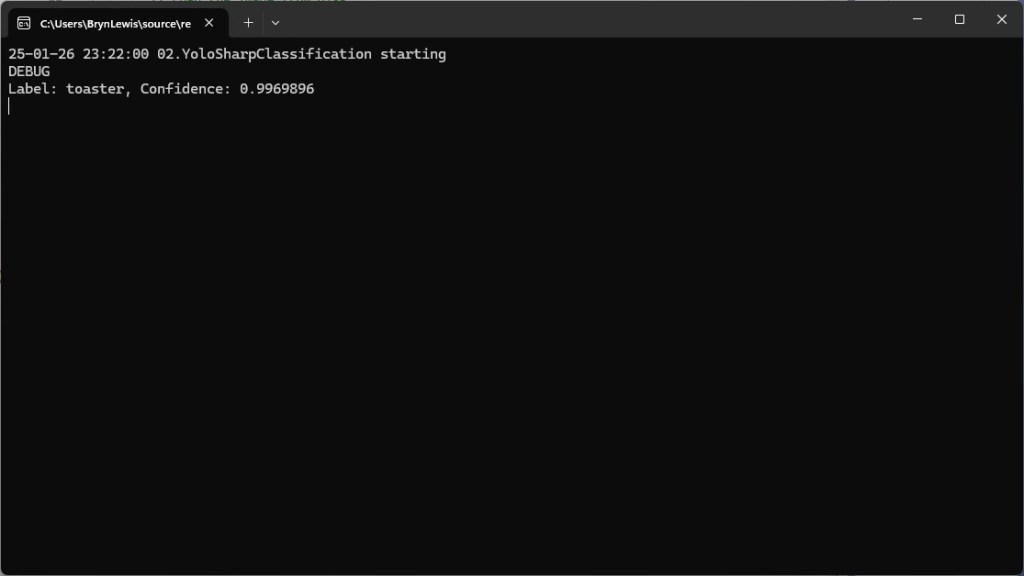

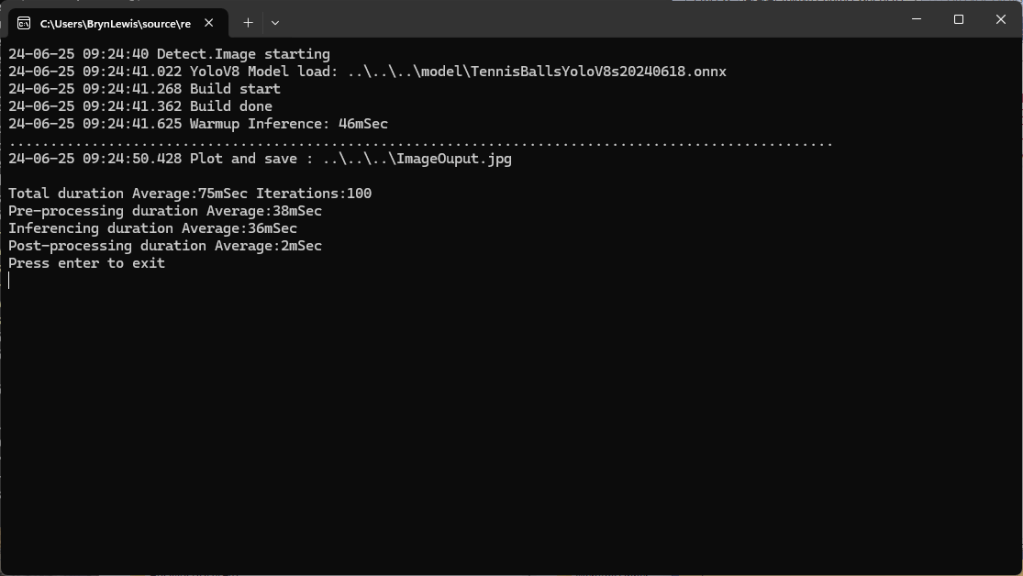

After some minor fixes and shifting the configuration to an appsettings file the application compiled. I tested the implementation with sample “toaster” image from the YoloSharp Github repository

The console application output looked reasonable

I’m pretty confident the input image was a toaster.

Summary

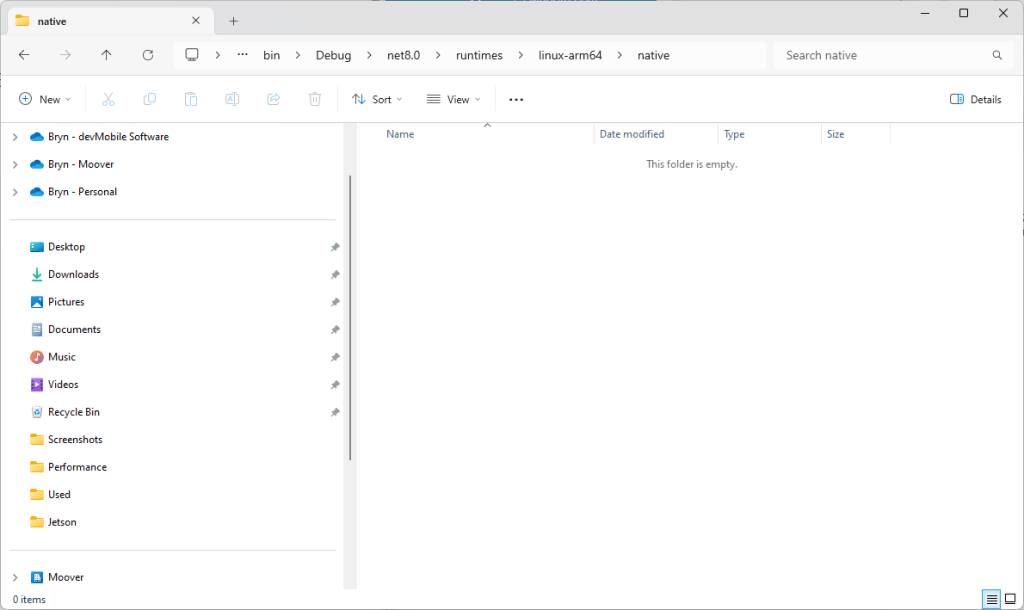

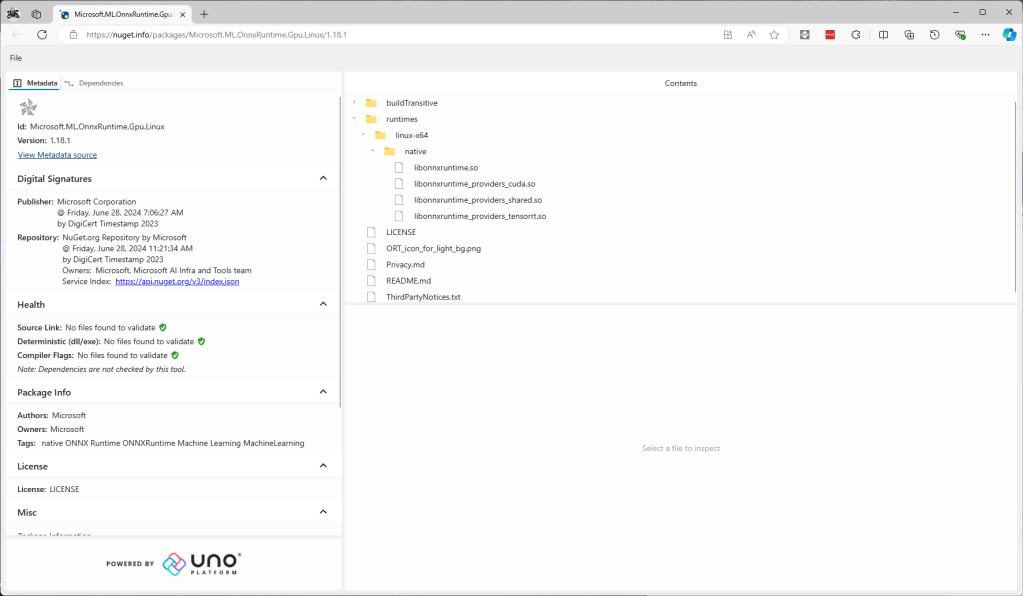

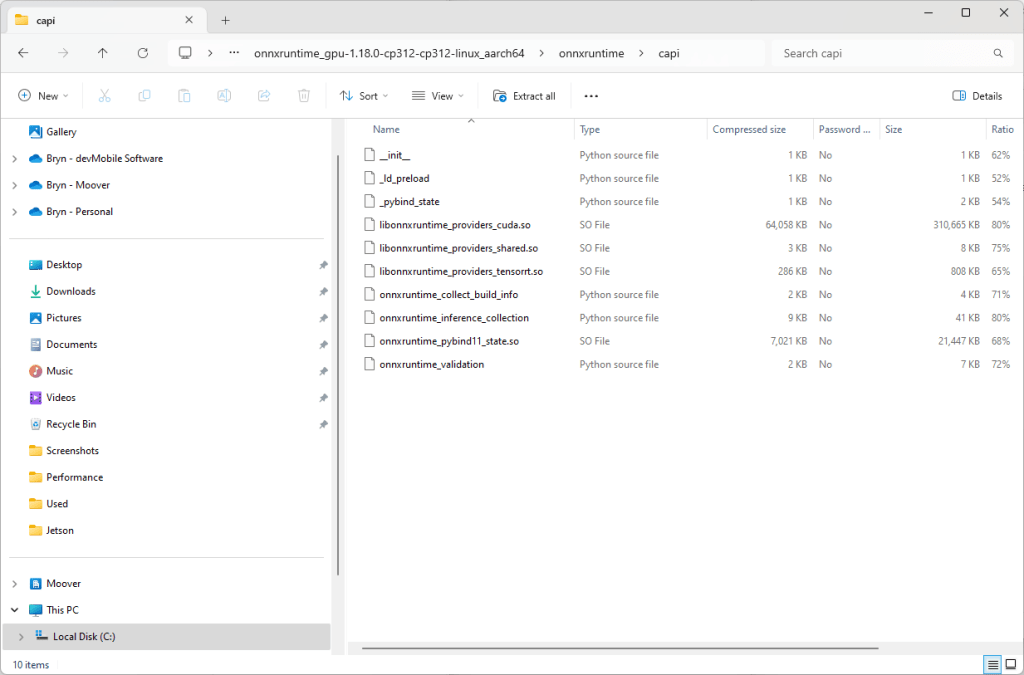

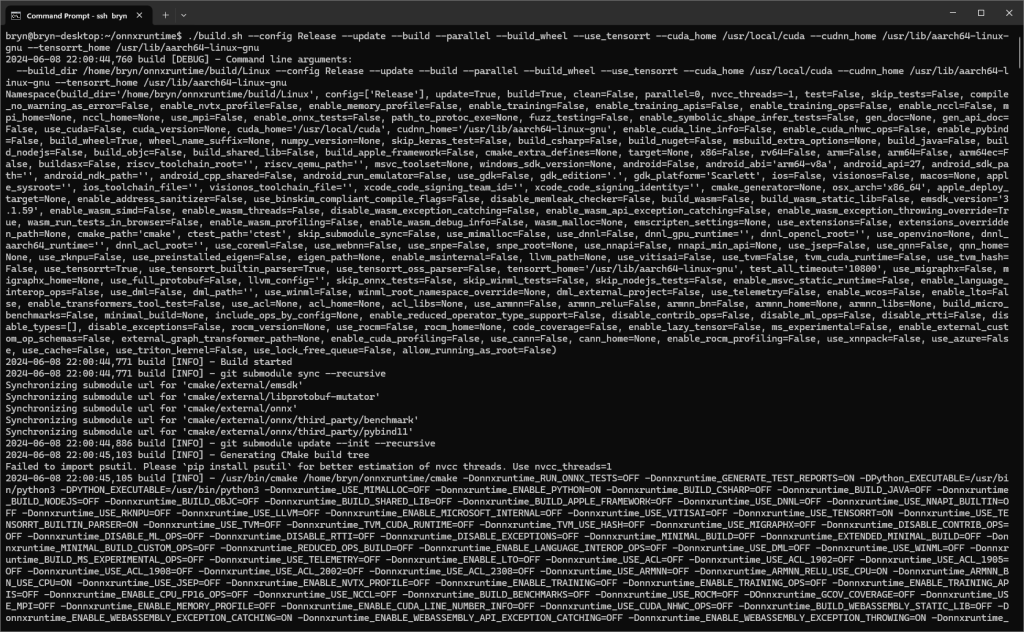

The Copilot prompts to generate code which uses Ultralytics YoloV8 and Compunet YoloSharp and may have produced better code with some “prompt engineering”. Using Visual Studio intellisense the generated code was easy to fix.

The Copilot generated code in this post is not suitable for production