This project is another reworked version of my ML.Net YoloV5 + Camera + GPIO on ARM64 Raspberry Pi project; this version only supports uploading camera and marked-up images to Azure Storage.

My backyard test rig consists of a Unv IPC675LFW Pan Tilt Zoom (PTZ) security camera, a Power over Ethernet (PoE) module, and a Raspberry Pi 4B 8GB.

The application can be compiled for a Raspberry Pi V2 Camera or a Unv security camera (selected with the CAMERA_RASPBERRY_PI or CAMERA_SECURITY conditional compilation symbol). The security camera configuration may work for other cameras/vendors.

The appsettings.json file has configuration options for the Azure Storage account, the DeviceId (used as the Azure Blob Storage container name), the list of object classes of interest (based on the YoloV5 image classes), and the image blob storage file names (used to “bucket” images).

```json
{
  "Logging": {
    "LogLevel": {
      "Default": "Information",
      "Microsoft": "Warning",
      "Microsoft.Hosting.Lifetime": "Information"
    }
  },
  "Application": {
    "DeviceId": "edgecamera",
    "ImageTimerDue": "0.00:00:15",
    "ImageTimerPeriod": "0.00:00:30",
    "ImageCameraFilepath": "ImageCamera.jpg",
    "ImageMarkedUpFilepath": "ImageMarkedup.jpg",
    "ImageCameraUpload": true,
    "ImageMarkedupUpload": true,
    "YoloV5ModelPath": "YoloV5/yolov5s.onnx",
    "PredicitionScoreThreshold": 0.7,
    "PredictionLabelsOfInterest": [
      "bicycle",
      "person",
      "car"
    ],
    "OutputImageMarkup": true
  },
  "SecurityCamera": {
    "CameraUrl": "",
    "CameraUserName": "",
    "CameraUserPassword": ""
  },
  "RaspberryPICamera": {
    "ProcessWaitForExit": 1000,
    "Rotation": 180
  },
  "AzureStorage": {
    "ConnectionString": "FhisIsNotTheConnectionStringYouAreLookingFor",
    "ImageCameraFilenameFormat": "{0:yyyyMMdd}/camera/{0:HHmmss}.jpg",
    "ImageMarkedUpFilenameFormat": "{0:yyyyMMdd}/markedup/{0:HHmmss}.jpg"
  }
}
```
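The ImageCameraFilenameFormat and ImageMarkedUpFilenameFormat values look like .NET composite format strings, and ImageTimerDue/ImageTimerPeriod use the TimeSpan `[d.]hh:mm:ss` form. A minimal sketch of how they could be interpreted, assuming the blob names are produced with `string.Format` and the UTC request time (the callback passes requestAtUtc to AzureStorageImageUpload, but that method isn't shown); `BlobName` is a hypothetical helper:

```csharp
using System;
using System.Globalization;

// Values copied from the AzureStorage section of appsettings.json.
const string imageCameraFilenameFormat = "{0:yyyyMMdd}/camera/{0:HHmmss}.jpg";
const string imageMarkedUpFilenameFormat = "{0:yyyyMMdd}/markedup/{0:HHmmss}.jpg";

// Hypothetical helper. Assumption: the blob name is the format string expanded with
// the UTC time the image was requested, so images are "bucketed" into one virtual
// folder per day.
static string BlobName(string format, DateTime timestamp) =>
    string.Format(CultureInfo.InvariantCulture, format, timestamp);

// "0.00:00:15" is 0 days, 0 hours, 0 minutes and 15 seconds.
TimeSpan imageTimerDue = TimeSpan.Parse("0.00:00:15", CultureInfo.InvariantCulture);
TimeSpan imageTimerPeriod = TimeSpan.Parse("0.00:00:30", CultureInfo.InvariantCulture);

DateTime requestAtUtc = new DateTime(2021, 10, 5, 13, 45, 9, DateTimeKind.Utc);
Console.WriteLine(BlobName(imageCameraFilenameFormat, requestAtUtc));   // 20211005/camera/134509.jpg
Console.WriteLine(BlobName(imageMarkedUpFilenameFormat, requestAtUtc)); // 20211005/markedup/134509.jpg
Console.WriteLine($"Due {imageTimerDue.TotalSeconds}s, period {imageTimerPeriod.TotalSeconds}s");
```

With formats like these, each day's camera and marked-up images end up under their own date prefix in the DeviceId container.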

Part of this refactor was using dependency injection (DI) for the logging and configuration dependencies.

```csharp
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Hosting;
using Microsoft.Extensions.Logging;

public class Program
{
    public static void Main(string[] args)
    {
        CreateHostBuilder(args).Build().Run();
    }

    public static IHostBuilder CreateHostBuilder(string[] args) =>
        Host.CreateDefaultBuilder(args)
            .ConfigureServices((hostContext, services) =>
            {
                // Bind each appsettings.json section to its settings class so it can be injected as IOptions<T>
                services.Configure<ApplicationSettings>(hostContext.Configuration.GetSection("Application"));
                services.Configure<SecurityCameraSettings>(hostContext.Configuration.GetSection("SecurityCamera"));
                services.Configure<RaspberryPICameraSettings>(hostContext.Configuration.GetSection("RaspberryPICamera"));
                services.Configure<AzureStorageSettings>(hostContext.Configuration.GetSection("AzureStorage"));
            })
            .ConfigureLogging(logging =>
            {
                // Replace the default providers with a console logger that timestamps each entry
                logging.ClearProviders();
                logging.AddSimpleConsole(c => c.TimestampFormat = "[HH:mm:ss.ff]");
            })
            // Use the systemd host lifetime when the application runs as a systemd service
            .UseSystemd()
            .ConfigureServices((hostContext, services) =>
            {
                services.AddHostedService<Worker>();
            });
}
```

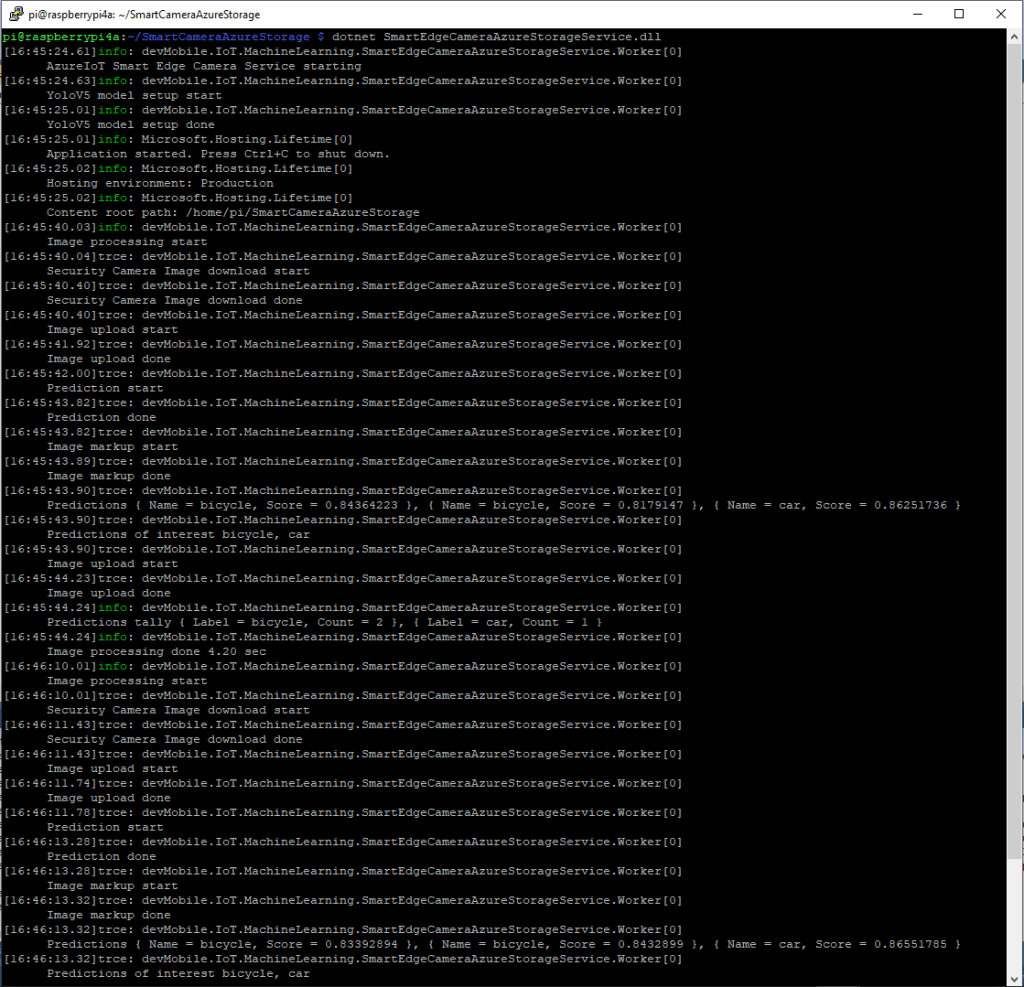

After the You Only Look Once (YOLOv5) + ML.Net + Open Neural Network Exchange (ONNX) plumbing has loaded, a timer with a configurable due time (ImageTimerDue) and period (ImageTimerPeriod) is started.

```csharp
private async void ImageUpdateTimerCallback(object state)
{
    DateTime requestAtUtc = DateTime.UtcNow;

    // Just in case - stop code being called while photo already in progress
    if (_cameraBusy)
    {
        return;
    }
    _cameraBusy = true;

    _logger.LogInformation("Image processing start");

    try
    {
#if CAMERA_RASPBERRY_PI
        RaspberryPIImageCapture();
#endif
#if CAMERA_SECURITY
        SecurityCameraImageCapture();
#endif
        if (_applicationSettings.ImageCameraUpload)
        {
            await AzureStorageImageUpload(requestAtUtc, _applicationSettings.ImageCameraFilepath, _azureStorageSettings.ImageCameraFilenameFormat);
        }

        List<YoloPrediction> predictions;

        using (Image image = Image.FromFile(_applicationSettings.ImageCameraFilepath))
        {
            _logger.LogTrace("Prediction start");
            predictions = _scorer.Predict(image);
            _logger.LogTrace("Prediction done");

            OutputImageMarkup(image, predictions, _applicationSettings.ImageMarkedUpFilepath);
        }

        if (_logger.IsEnabled(LogLevel.Trace))
        {
            _logger.LogTrace("Predictions {0}", predictions.Select(p => new { p.Label.Name, p.Score }));
        }

        // Distinct labels scoring above the threshold that are also labels of interest
        var predictionsOfInterest = predictions.Where(p => p.Score > _applicationSettings.PredicitionScoreThreshold)
            .Select(c => c.Label.Name)
            .Intersect(_applicationSettings.PredictionLabelsOfInterest, StringComparer.OrdinalIgnoreCase);

        if (_logger.IsEnabled(LogLevel.Trace))
        {
            _logger.LogTrace("Predictions of interest {0}", predictionsOfInterest.ToList());
        }

        if (_applicationSettings.ImageMarkedupUpload && predictionsOfInterest.Any())
        {
            await AzureStorageImageUpload(requestAtUtc, _applicationSettings.ImageMarkedUpFilepath, _azureStorageSettings.ImageMarkedUpFilenameFormat);
        }

        // Count of each class at or above the threshold
        var predictionsTally = predictions.Where(p => p.Score >= _applicationSettings.PredicitionScoreThreshold)
            .GroupBy(p => p.Label.Name)
            .Select(p => new
            {
                Label = p.Key,
                Count = p.Count()
            });

        if (_logger.IsEnabled(LogLevel.Information))
        {
            _logger.LogInformation("Predictions tally {0}", predictionsTally.ToList());
        }
    }
    catch (Exception ex)
    {
        _logger.LogError(ex, "Camera image download, post processing, image upload, or telemetry failed");
    }
    finally
    {
        _cameraBusy = false;
    }

    TimeSpan duration = DateTime.UtcNow - requestAtUtc;

    _logger.LogInformation("Image processing done {0:f2} sec", duration.TotalSeconds);
}
```
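System.Threading.Timer does not wait for a callback to finish before raising the next one, and an `async void` callback returns to the timer at its first `await`, which is why the `_cameraBusy` check is needed. Reading and then setting a bool is not atomic, though, so two callbacks on different thread pool threads could both get past the check. A sketch of a swapped-in alternative using `Interlocked.CompareExchange`; the names, the 250 ms stand-in workload and the shortened timer values are hypothetical:

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// 0 = idle, 1 = busy. CompareExchange does the "check" and the "set" as one atomic step.
int cameraBusy = 0;
bool TryEnter() => Interlocked.CompareExchange(ref cameraBusy, 1, 0) == 0;
void Exit() => Interlocked.Exchange(ref cameraBusy, 0);

int started = 0;
int skipped = 0;

async void ImageUpdateTimerCallback(object state)
{
    if (!TryEnter())
    {
        Interlocked.Increment(ref skipped); // a previous callback is still running
        return;
    }
    try
    {
        Interlocked.Increment(ref started);
        await Task.Delay(250); // stands in for capture, prediction and upload
    }
    finally
    {
        Exit();
    }
}

// Short due time and period for the demo; the application uses ImageTimerDue and ImageTimerPeriod.
using (var timer = new Timer(ImageUpdateTimerCallback, null, TimeSpan.Zero, TimeSpan.FromMilliseconds(50)))
{
    await Task.Delay(1000);
}
await Task.Delay(500); // Dispose doesn't wait for in-flight callbacks, so give them time to finish
Console.WriteLine($"started {started}, skipped {skipped}");
```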

In the ImageUpdateTimerCallback method, a camera image is captured (by my Raspberry Pi Camera Module 2 or IPC675LFW security camera) and written to the local file system.

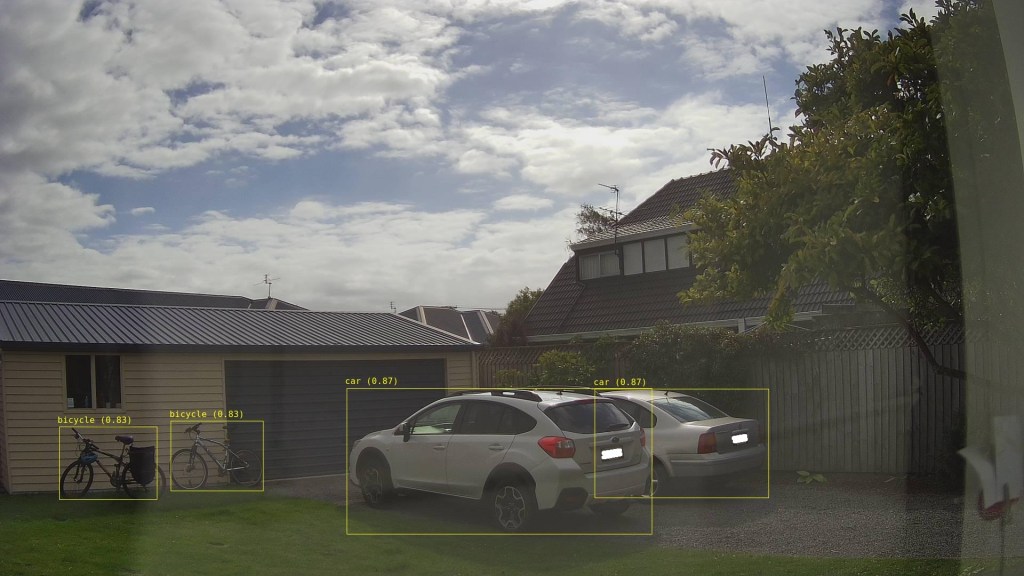
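RaspberryPIImageCapture isn't shown above. The RaspberryPICamera settings (ProcessWaitForExit, Rotation) suggest it runs a command-line capture tool as a child process. The sketch below assumes `raspistill` (the camera tool on Raspberry Pi OS releases of that era; newer releases replace it with libcamera-still) and a 500 ms capture delay chosen to fit inside ProcessWaitForExit. Both helpers are hypothetical:

```csharp
using System;
using System.Diagnostics;

// Hypothetical helper: builds the raspistill command line from the
// ImageCameraFilepath and RaspberryPICamera:Rotation settings.
static ProcessStartInfo RaspistillStartInfo(string imageFilepath, int rotation)
{
    var startInfo = new ProcessStartInfo("raspistill") { UseShellExecute = false };
    startInfo.ArgumentList.Add("-n");    // no preview window
    startInfo.ArgumentList.Add("-t");    // delay before capture in ms (raspistill's default is 5000)
    startInfo.ArgumentList.Add("500");   // assumed value, shorter than ProcessWaitForExit
    startInfo.ArgumentList.Add("-rot");  // e.g. 180 for a camera mounted upside down
    startInfo.ArgumentList.Add(rotation.ToString());
    startInfo.ArgumentList.Add("-o");
    startInfo.ArgumentList.Add(imageFilepath);
    return startInfo;
}

// Hypothetical capture: waits at most ProcessWaitForExit milliseconds for raspistill.
static void RaspberryPIImageCapture(string imageFilepath, int rotation, int processWaitForExit)
{
    using Process process = Process.Start(RaspistillStartInfo(imageFilepath, rotation))
        ?? throw new InvalidOperationException("raspistill could not be started");

    if (!process.WaitForExit(processWaitForExit))
    {
        process.Kill();
        throw new TimeoutException($"raspistill did not exit within {processWaitForExit} ms");
    }
    if (process.ExitCode != 0)
    {
        throw new InvalidOperationException($"raspistill exited with code {process.ExitCode}");
    }
}

ProcessStartInfo info = RaspistillStartInfo("ImageCamera.jpg", 180);
Console.WriteLine($"{info.FileName} {string.Join(" ", info.ArgumentList)}");
// prints "raspistill -n -t 500 -rot 180 -o ImageCamera.jpg"

// On a Pi with the camera enabled: RaspberryPIImageCapture("ImageCamera.jpg", 180, 1000);
```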

The Mentalstack YoloV5 ML.Net support library processes the camera image on the local file system. The prediction output (which can be inspected with Netron) is parsed to generate a list of the objects that have been detected, with their Minimum Bounding Rectangle (MBR) and class.

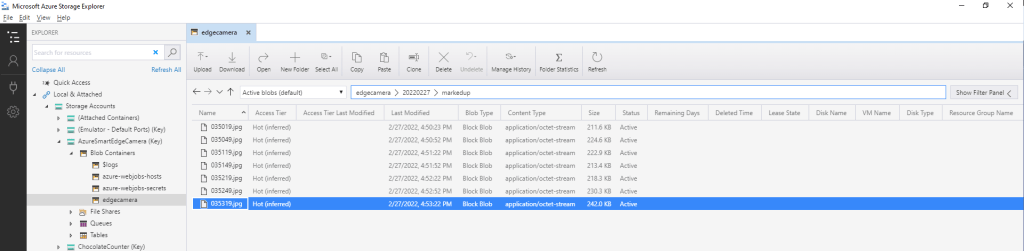

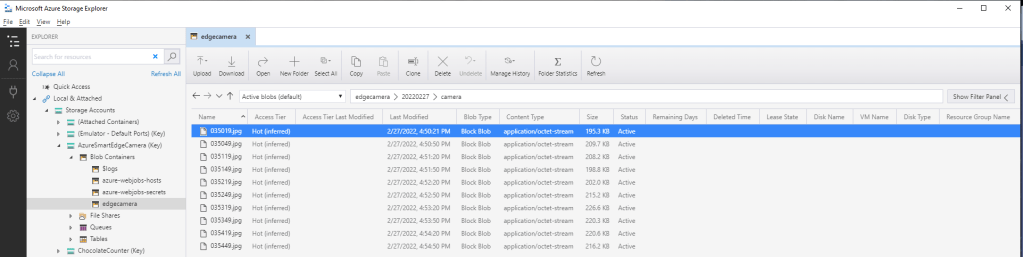
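The parsing happens inside the Mentalstack library (the `_scorer.Predict` call). As a rough illustration of what that step involves, the sketch below decodes a flattened YOLOv5 output. For the yolov5s export with a 640x640 input this is 25200 rows of 85 values (box centre x/y, width, height, objectness, then 80 class scores), and a detection's score is its objectness multiplied by its best class score. It is a generic sketch, not the library's code, and it skips rescaling to the camera image and non-maximum suppression; ParseDetections is a hypothetical name and the sample rows are made up:

```csharp
using System;
using System.Collections.Generic;

// Decodes rows of [cx, cy, w, h, objectness, class scores...] into
// (class, score, MBR) tuples in model input pixel coordinates.
static List<(int ClassIndex, float Score, float XMin, float YMin, float XMax, float YMax)> ParseDetections(
    float[] output, int dimensions, float minConfidence)
{
    var results = new List<(int ClassIndex, float Score, float XMin, float YMin, float XMax, float YMax)>();
    int classCount = dimensions - 5;

    for (int offset = 0; offset + dimensions <= output.Length; offset += dimensions)
    {
        float objectness = output[offset + 4];
        if (objectness < minConfidence) continue;

        // Pick the most likely class for this candidate box.
        int bestClass = 0;
        float bestClassScore = output[offset + 5];
        for (int c = 1; c < classCount; c++)
        {
            if (output[offset + 5 + c] > bestClassScore)
            {
                bestClass = c;
                bestClassScore = output[offset + 5 + c];
            }
        }

        float score = objectness * bestClassScore;
        if (score < minConfidence) continue;

        // Convert centre/size to the Minimum Bounding Rectangle corners.
        float cx = output[offset], cy = output[offset + 1];
        float w = output[offset + 2], h = output[offset + 3];
        results.Add((bestClass, score, cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2));
    }
    return results;
}

// Two made-up rows with 3 classes (dimensions = 5 + 3).
float[] output =
{
    100f, 50f, 40f, 20f, 0.9f, 0.1f, 0.8f, 0.1f,  // likely an object of class 1
    300f, 200f, 60f, 60f, 0.2f, 0.5f, 0.3f, 0.2f, // low objectness, discarded
};

var detections = ParseDetections(output, dimensions: 8, minConfidence: 0.5f);
foreach (var d in detections)
{
    Console.WriteLine($"class {d.ClassIndex} score {d.Score:F2} MBR ({d.XMin}, {d.YMin}) - ({d.XMax}, {d.YMax})");
}
```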

The list of predictions is post-processed with Language Integrated Query (LINQ) queries which filter out predictions with a score below a configurable threshold (PredicitionScoreThreshold) and count the predictions of each class. If the labels of the remaining predictions intersect with the configurable PredictionLabelsOfInterest, the marked-up image is uploaded to Azure Storage.
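The two LINQ queries can be tried outside the application with some made-up predictions. The sketch below uses (Label, Score) tuples in place of YoloPrediction. Note that the callback uses `>` for the predictions of interest and `>=` for the tally, so a prediction scoring exactly PredicitionScoreThreshold is counted but does not on its own trigger an upload; the sketch keeps that behaviour:

```csharp
using System;
using System.Linq;

// Made-up sample predictions standing in for List<YoloPrediction> (label name and score only).
var predictions = new[]
{
    (Label: "person", Score: 0.91f),
    (Label: "person", Score: 0.74f),
    (Label: "car", Score: 0.88f),
    (Label: "dog", Score: 0.95f),
    (Label: "bicycle", Score: 0.41f),
};

// Values from the "Application" section of appsettings.json.
float predictionScoreThreshold = 0.7f;
string[] predictionLabelsOfInterest = { "bicycle", "person", "car" };

// Count of each class at or above the threshold (same shape as predictionsTally).
var predictionsTally = predictions
    .Where(p => p.Score >= predictionScoreThreshold)
    .GroupBy(p => p.Label)
    .Select(g => new { Label = g.Key, Count = g.Count() })
    .ToList();

// Distinct labels above the threshold that are also labels of interest.
var predictionsOfInterest = predictions
    .Where(p => p.Score > predictionScoreThreshold)
    .Select(p => p.Label)
    .Intersect(predictionLabelsOfInterest, StringComparer.OrdinalIgnoreCase)
    .ToList();

foreach (var t in predictionsTally)
{
    Console.WriteLine($"{t.Label} {t.Count}");
}
Console.WriteLine($"Upload marked-up image: {predictionsOfInterest.Any()}");
```

With this sample the dog is tallied but ignored for upload, the low-scoring bicycle is dropped, and the marked-up image would be uploaded because person and car are labels of interest.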

The current implementation is quite limited: the camera image upload, the object detection, and the upload of the marked-up image when there are objects of interest are all implemented in a single timer callback. I’m considering implementing two timers, one for uploading camera images (a time-lapse camera) and the other for running the object detection process and uploading marked-up images.
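A sketch of the two-timer design, assuming each timer gets its own busy flag so a slow detection pass doesn't delay time-lapse captures. TimeLapseTick and DetectionTick are hypothetical, their bodies are Task.Delay stand-ins, and the periods are shortened so the example finishes in about a second:

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Separate busy flags (0 = idle, 1 = busy) and run counters for each timer.
int timeLapseBusy = 0, detectionBusy = 0;
int timeLapseRuns = 0, detectionRuns = 0;

// Time-lapse: capture and upload the raw camera image.
async void TimeLapseTick(object state)
{
    if (Interlocked.CompareExchange(ref timeLapseBusy, 1, 0) != 0) return;
    try
    {
        await Task.Delay(20); // stand-in for capture + camera image upload
        Interlocked.Increment(ref timeLapseRuns);
    }
    finally { Interlocked.Exchange(ref timeLapseBusy, 0); }
}

// Detection: capture, predict, and upload the marked-up image if there are objects of interest.
async void DetectionTick(object state)
{
    if (Interlocked.CompareExchange(ref detectionBusy, 1, 0) != 0) return;
    try
    {
        await Task.Delay(150); // stand-in for capture + prediction + conditional upload
        Interlocked.Increment(ref detectionRuns);
    }
    finally { Interlocked.Exchange(ref detectionBusy, 0); }
}

using (var timeLapseTimer = new Timer(TimeLapseTick, null, TimeSpan.Zero, TimeSpan.FromMilliseconds(100)))
using (var detectionTimer = new Timer(DetectionTick, null, TimeSpan.Zero, TimeSpan.FromMilliseconds(300)))
{
    await Task.Delay(1000);
}
await Task.Delay(300); // let in-flight callbacks finish
Console.WriteLine($"time-lapse runs {timeLapseRuns}, detection runs {detectionRuns}");
```

If both timers capture from the same camera, they would probably also need to share a lock around the capture step so they don't try to use the camera at the same time.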

Marked-up images are uploaded if any of the objects detected (with a score greater than PredicitionScoreThreshold) is in PredictionLabelsOfInterest. I’m considering adding a PredicitionScoreThreshold and minimum count for individual prediction classes, and optionally uploading marked-up images only when the list of detected objects has changed.
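One way the per-class thresholds and change detection could work, assuming a hypothetical per-class setting of minimum score and minimum count (none of these settings exist in the current appsettings.json) and comparing each run's labels of interest with the previous run's:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical per-class settings: label -> (minimum score, minimum number of detections).
var classSettings = new Dictionary<string, (float MinScore, int MinCount)>(StringComparer.OrdinalIgnoreCase)
{
    ["person"] = (0.7f, 1),
    ["car"] = (0.8f, 2),
    ["bicycle"] = (0.6f, 1),
};

// Labels with enough detections at or above that class's own minimum score.
static HashSet<string> LabelsOfInterest(
    IEnumerable<(string Label, float Score)> predictions,
    IReadOnlyDictionary<string, (float MinScore, int MinCount)> settings) =>
    predictions
        .Where(p => settings.TryGetValue(p.Label, out var s) && p.Score >= s.MinScore)
        .GroupBy(p => p.Label, StringComparer.OrdinalIgnoreCase)
        .Where(g => g.Count() >= settings[g.Key].MinCount)
        .Select(g => g.Key)
        .ToHashSet(StringComparer.OrdinalIgnoreCase);

var previous = new HashSet<string>(StringComparer.OrdinalIgnoreCase);

// Upload only when something of interest is present and the set differs from the last run.
bool ShouldUpload(HashSet<string> current)
{
    bool upload = current.Count > 0 && !current.SetEquals(previous);
    previous = current;
    return upload;
}

var first = LabelsOfInterest(new[] { ("person", 0.9f), ("car", 0.85f) }, classSettings);                  // car needs 2
var second = LabelsOfInterest(new[] { ("person", 0.75f), ("car", 0.95f) }, classSettings);                // unchanged
var third = LabelsOfInterest(new[] { ("person", 0.8f), ("car", 0.9f), ("car", 0.82f) }, classSettings);  // car added

bool uploadFirst = ShouldUpload(first);
bool uploadSecond = ShouldUpload(second);
bool uploadThird = ShouldUpload(third);
Console.WriteLine($"{uploadFirst} {uploadSecond} {uploadThird}");
```

Because an empty result also replaces the previous set, an object that leaves the scene and comes back triggers a new upload.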