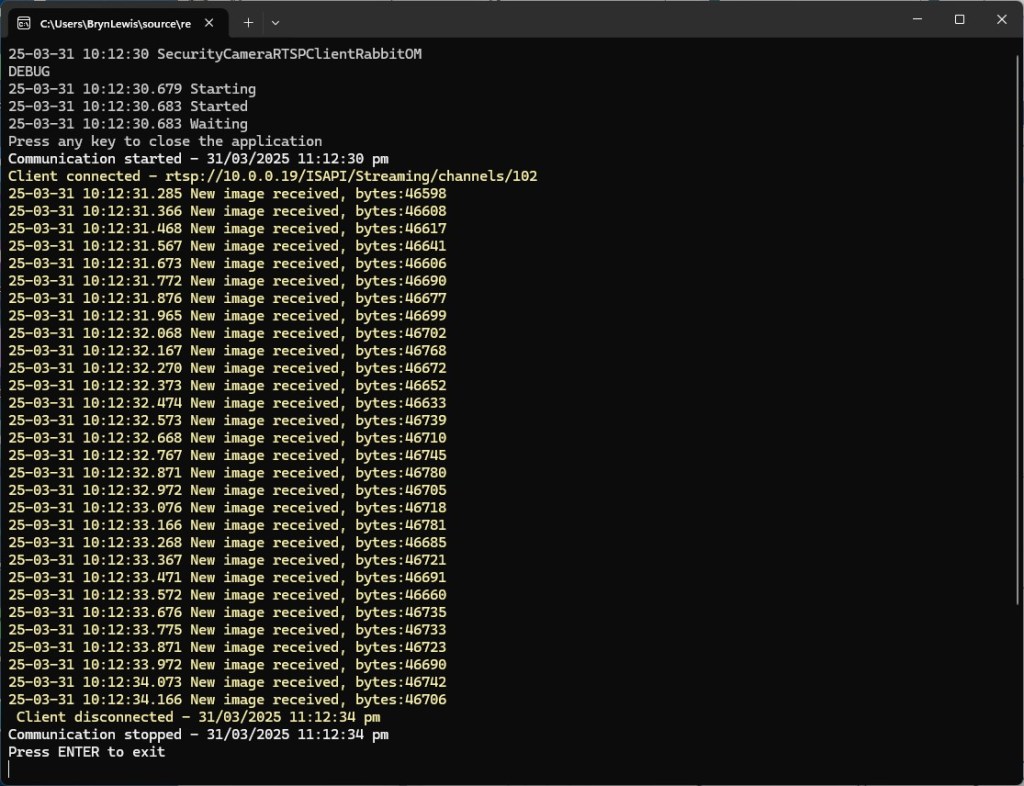

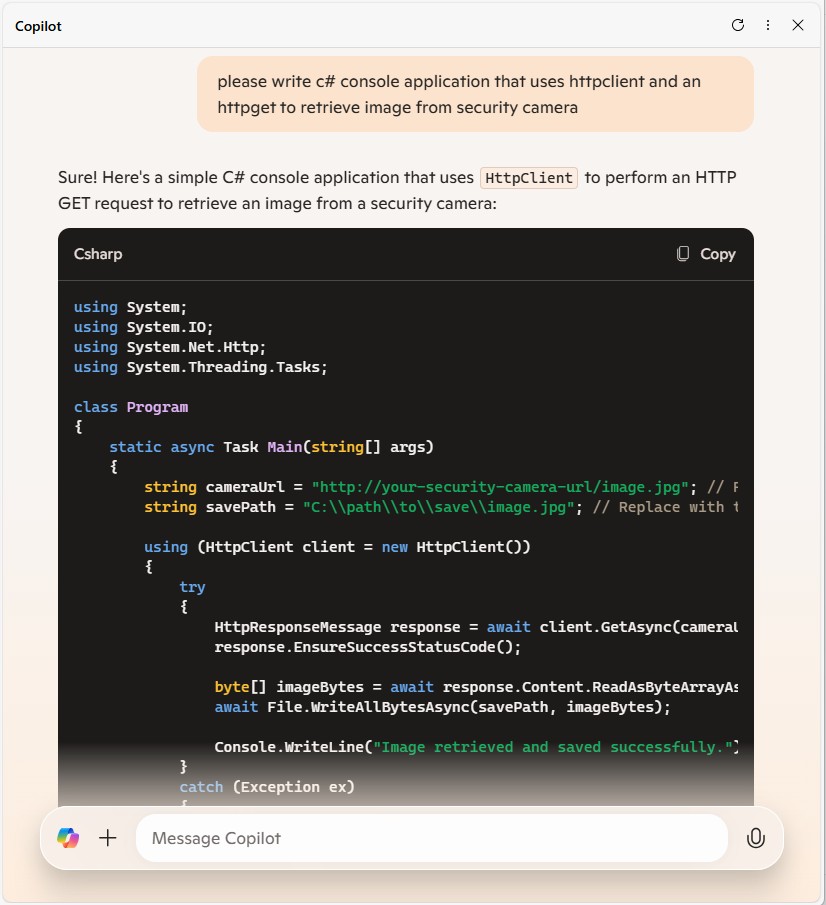

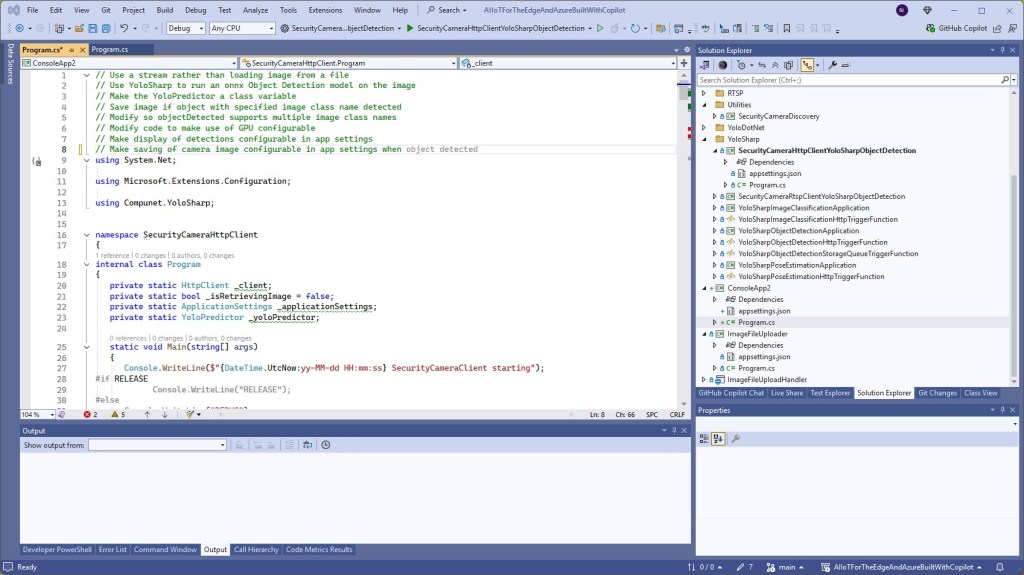

When I started with the Security Camera HTTP code and added code to process the images with an Ultralytics Object Detection model, I found the order of the prompts could make a difference. My first attempt at adding YoloSharp to the SecurityCameraHttpClient application with GitHub Copilot didn’t go well and needed some “human intervention”. Once I had thought more about the order of the prompts, adding the same functionality went a lot better.

// Use a stream rather than loading image from a file

// Use YoloSharp to run an onnx Object Detection model on the image

// Make the YoloPredictor a class variable

// Save image if object with specified image class name detected

// Modify so objectDetected supports multiple image class names

// Modify code to make use of GPU configurable

// Make display of detections configurable in app settings

// Make saving of image configurable in app settings

        internal class Program
        {
            private static HttpClient _client;
            private static bool _isRetrievingImage = false;
            private static ApplicationSettings _applicationSettings;
            private static YoloPredictor _yoloPredictor;

            static void Main(string[] args)
            {
                Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} SecurityCameraClient starting");
                #if RELEASE
                Console.WriteLine("RELEASE");
                #else
                Console.WriteLine("DEBUG");
                #endif

                var configuration = new ConfigurationBuilder()
                    .AddJsonFile("appsettings.json", false, true)
                    .AddUserSecrets<Program>()
                    .Build();

                _applicationSettings = configuration.GetSection("ApplicationSettings").Get<ApplicationSettings>();

                // Initialize YoloPredictor with GPU configuration
                _yoloPredictor = new YoloPredictor(_applicationSettings.OnnxModelPath, new YoloPredictorOptions()
                {
                    UseCuda = _applicationSettings.UseCuda, // Configurable GPU usage
                });

                using (HttpClientHandler handler = new HttpClientHandler { Credentials = new NetworkCredential(_applicationSettings.Username, _applicationSettings.Password) })
                using (_client = new HttpClient(handler))
                using (var timer = new Timer(async _ => await RetrieveImageAsync(), null, _applicationSettings.TimerDue, _applicationSettings.TimerPeriod))
                {
                    Console.WriteLine("Press any key to exit...");
                    Console.ReadKey();
                }
            }

            private static async Task RetrieveImageAsync()
            {
                if (_isRetrievingImage) return;

                _isRetrievingImage = true;
                try
                {
                    Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss.fff} SecurityCameraClient download starting");

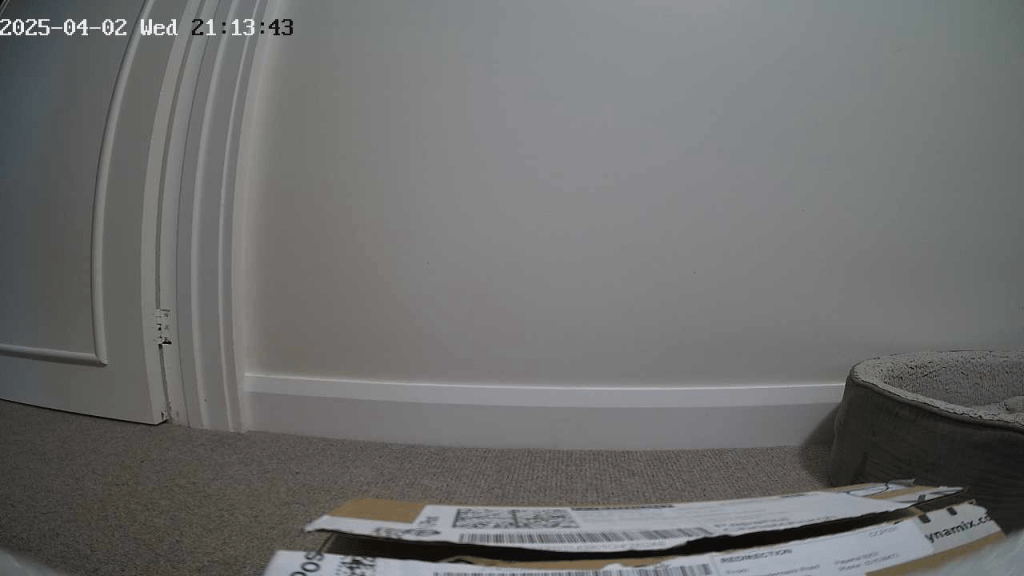

                    HttpResponseMessage response = await _client.GetAsync(_applicationSettings.CameraUrl);
                    response.EnsureSuccessStatusCode();

                    using (Stream imageStream = await response.Content.ReadAsStreamAsync())
                    {
                        var detections = _yoloPredictor.Detect(imageStream);
                        bool objectDetected = false;

                        foreach (var detection in detections)
                        {
                            if (_applicationSettings.LogDetections) // Check if logging detections is enabled
                            {
                                Console.WriteLine($"Detected {detection.Name.Name} with confidence {detection.Confidence}");
                            }

                            if (_applicationSettings.ClassNames.Contains(detection.Name.Name))
                            {
                                objectDetected = true;
                            }
                        }

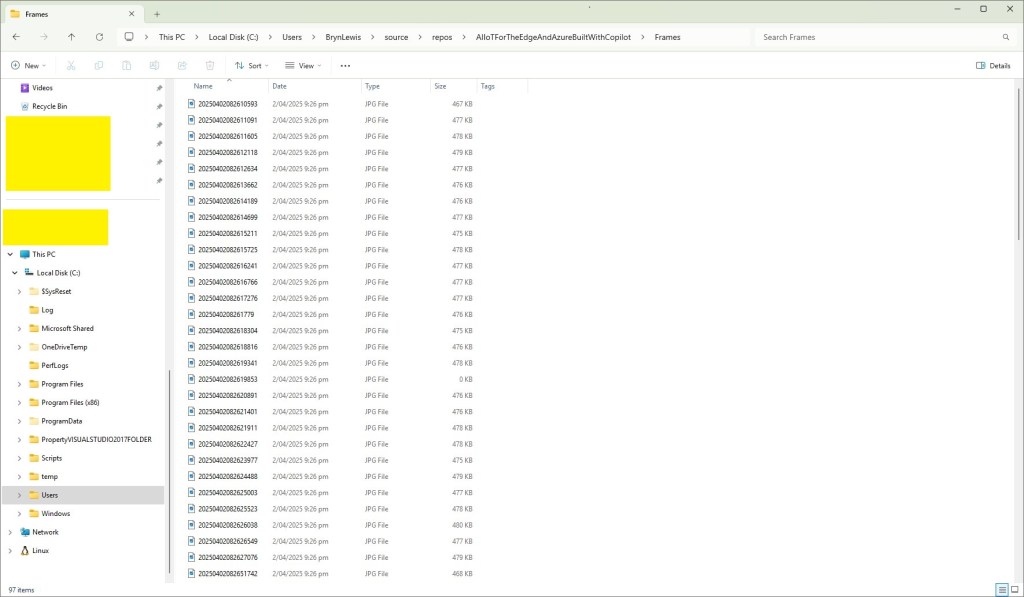

                        if (objectDetected && _applicationSettings.SaveImage) // Check if saving images is enabled
                        {
                            string savePath = string.Format(_applicationSettings.SavePath, DateTime.UtcNow);

                            using (FileStream fileStream = new FileStream(savePath, FileMode.Create, FileAccess.Write, FileShare.None))
                            {
                                imageStream.Position = 0;
                                await imageStream.CopyToAsync(fileStream);
                            }
                        }
                    }

                    Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss.fff} SecurityCameraClient download done");
                }
                catch (Exception ex)
                {

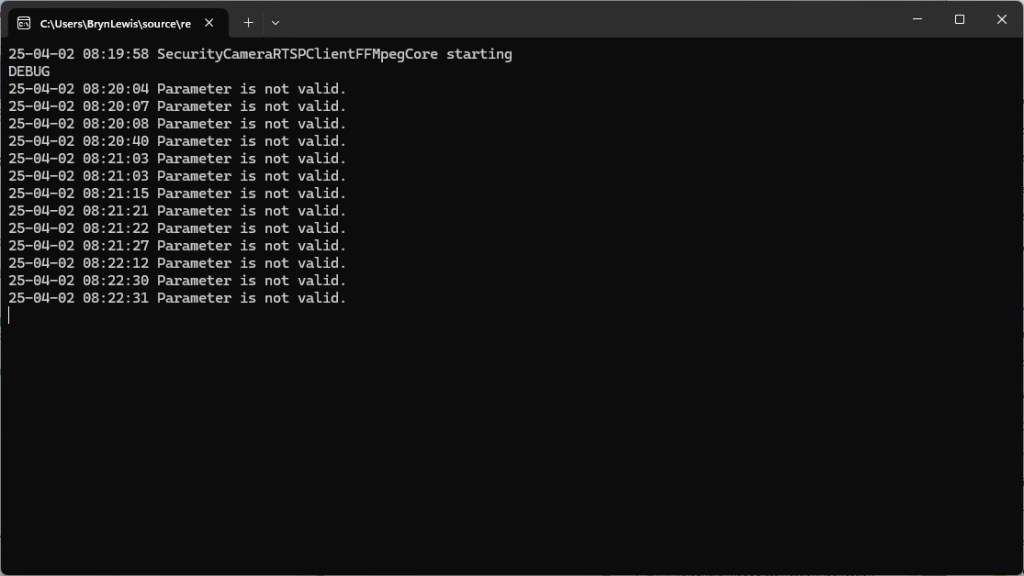

                    Console.WriteLine($"An error occurred: {ex.Message}");
                }
                finally
                {
                    _isRetrievingImage = false;
                }
            }
        }

        public class ApplicationSettings
        {
            public string CameraUrl { get; set; } = "";
            public string SavePath { get; set; } = "";
            public string Username { get; set; } = "";
            public string Password { get; set; } = "";
            public TimeSpan TimerDue { get; set; } = TimeSpan.Zero;
            public TimeSpan TimerPeriod { get; set; } = TimeSpan.Zero;
            public string OnnxModelPath { get; set; } = "";
            public bool UseCuda { get; set; } = false; // Configurable GPU usage
            public List<string> ClassNames { get; set; } //= new List<string>();
            public bool LogDetections { get; set; } = false; // Configurable logging of detections
            public bool SaveImage { get; set; } = false; // Configurable saving of images
        }
    }
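For reference, the ApplicationSettings class above is bound from the "ApplicationSettings" section of appsettings.json. The post does not show that file, so the fragment below is only a sketch of what it might look like: every value (camera URL, paths, timings, class names) is a made-up placeholder. Username and Password are left out on the assumption that they are supplied through user secrets, since the code calls AddUserSecrets. The .NET JSON configuration provider skips comments, so the comment line should be harmless.

```json
{
  // Every value below is an illustrative placeholder, not taken from the post.
  "ApplicationSettings": {
    "CameraUrl": "http://camera.example/snapshot.jpg",
    "SavePath": "Images/{0:yyyyMMdd-HHmmss}.jpg",
    "TimerDue": "00:00:05",
    "TimerPeriod": "00:00:30",
    "OnnxModelPath": "Models/yolov8s.onnx",
    "UseCuda": false,
    "ClassNames": [ "person", "car" ],
    "LogDetections": true,
    "SaveImage": true
  }
}
```

The `{0:...}` placeholder in SavePath is filled in by the string.Format call with DateTime.UtcNow, so each saved image gets a timestamped file name. The target folder would need to exist already, because FileStream does not create directories.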

The interaction between Visual Studio IntelliSense and the GitHub Copilot prompts was interesting.

I wonder if this is because Visual Studio IntelliSense has local context, whereas GitHub Copilot has “cloud” context.

It took a couple of failed attempts to find the best order; I think the number of attempts needed would reduce over time.

The Copilot generated code in this post is not suitable for production.