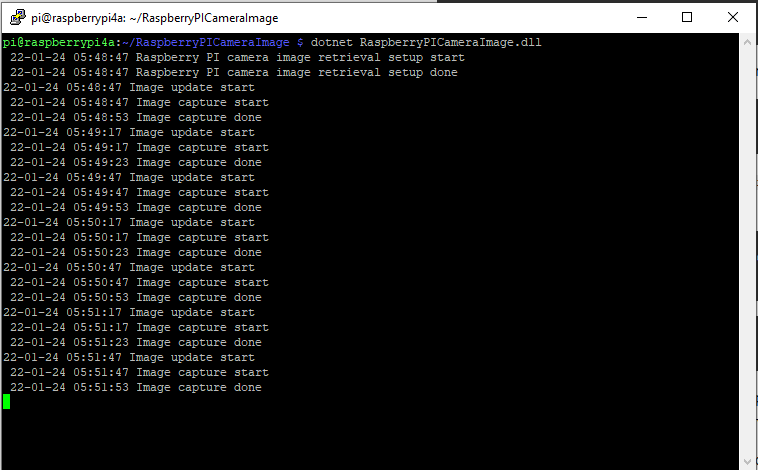

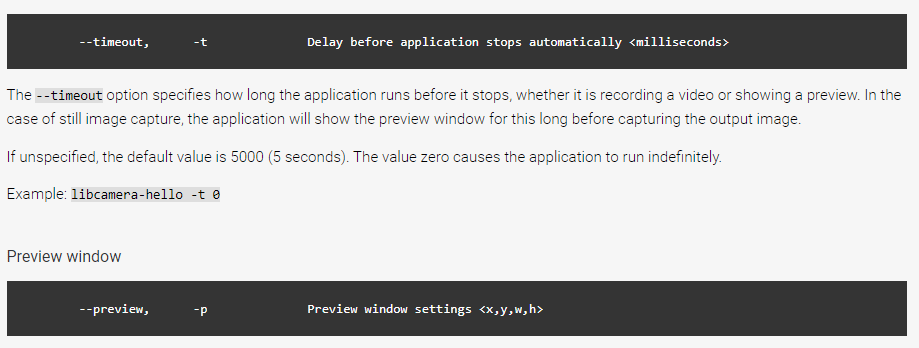

The image capture process was taking about 5 seconds, which was a bit longer than I was expecting.

```
libcamera-jpeg -o rotated.jpg --rotation 180
```

The libcamera-jpeg program has a lot of command line parameters.

```
pi@raspberrypi4a:~ $ libcamera-jpeg --help
Valid options are:
  -h [ --help ] [=arg(=1)] (=0)         Print this help message
  --version [=arg(=1)] (=0)             Displays the build version number
  -v [ --verbose ] [=arg(=1)] (=0)      Output extra debug and diagnostics
  -c [ --config ] [=arg(=config.txt)]   Read the options from a file. If no filename is specified, default to
                                        config.txt. In case of duplicate options, the ones provided on the command line
                                        will be used. Note that the config file must only contain the long form
                                        options.
  --info-text arg (=#%frame (%fps fps) exp %exp ag %ag dg %dg)
                                        Sets the information string on the titlebar. Available values:
                                        %frame (frame number)
                                        %fps (framerate)
                                        %exp (shutter speed)
                                        %ag (analogue gain)
                                        %dg (digital gain)
                                        %rg (red colour gain)
                                        %bg (blue colour gain)
                                        %focus (focus FoM value)
                                        %aelock (AE locked status)
  --width arg (=0)                      Set the output image width (0 = use default value)
  --height arg (=0)                     Set the output image height (0 = use default value)
  -t [ --timeout ] arg (=5000)          Time (in ms) for which program runs
  -o [ --output ] arg                   Set the output file name
  --post-process-file arg               Set the file name for configuring the post-processing
  --rawfull [=arg(=1)] (=0)             Force use of full resolution raw frames
  -n [ --nopreview ] [=arg(=1)] (=0)    Do not show a preview window
  -p [ --preview ] arg (=0,0,0,0)       Set the preview window dimensions, given as x,y,width,height e.g. 0,0,640,480
  -f [ --fullscreen ] [=arg(=1)] (=0)   Use a fullscreen preview window
  --qt-preview [=arg(=1)] (=0)          Use Qt-based preview window (WARNING: causes heavy CPU load, fullscreen not
                                        supported)
  --hflip [=arg(=1)] (=0)               Request a horizontal flip transform
  --vflip [=arg(=1)] (=0)               Request a vertical flip transform
  --rotation arg (=0)                   Request an image rotation, 0 or 180
  --roi arg (=0,0,0,0)                  Set region of interest (digital zoom) e.g. 0.25,0.25,0.5,0.5
  --shutter arg (=0)                    Set a fixed shutter speed
  --analoggain arg (=0)                 Set a fixed gain value (synonym for 'gain' option)
  --gain arg                            Set a fixed gain value
  --metering arg (=centre)              Set the metering mode (centre, spot, average, custom)
  --exposure arg (=normal)              Set the exposure mode (normal, sport)
  --ev arg (=0)                         Set the EV exposure compensation, where 0 = no change
  --awb arg (=auto)                     Set the AWB mode (auto, incandescent, tungsten, fluorescent, indoor, daylight,
                                        cloudy, custom)
  --awbgains arg (=0,0)                 Set explict red and blue gains (disable the automatic AWB algorithm)
  --flush [=arg(=1)] (=0)               Flush output data as soon as possible
  --wrap arg (=0)                       When writing multiple output files, reset the counter when it reaches this
                                        number
  --brightness arg (=0)                 Adjust the brightness of the output images, in the range -1.0 to 1.0
  --contrast arg (=1)                   Adjust the contrast of the output image, where 1.0 = normal contrast
  --saturation arg (=1)                 Adjust the colour saturation of the output, where 1.0 = normal and 0.0 =
                                        greyscale
  --sharpness arg (=1)                  Adjust the sharpness of the output image, where 1.0 = normal sharpening
  --framerate arg (=30)                 Set the fixed framerate for preview and video modes
  --denoise arg (=auto)                 Sets the Denoise operating mode: auto, off, cdn_off, cdn_fast, cdn_hq
  --viewfinder-width arg (=0)           Width of viewfinder frames from the camera (distinct from the preview window
                                        size
  --viewfinder-height arg (=0)          Height of viewfinder frames from the camera (distinct from the preview window
                                        size)
  --tuning-file arg (=-)                Name of camera tuning file to use, omit this option for libcamera default
                                        behaviour
  --lores-width arg (=0)                Width of low resolution frames (use 0 to omit low resolution stream
  --lores-height arg (=0)               Height of low resolution frames (use 0 to omit low resolution stream
  -q [ --quality ] arg (=93)            Set the JPEG quality parameter
  -x [ --exif ] arg                     Add these extra EXIF tags to the output file
  --timelapse arg (=0)                  Time interval (in ms) between timelapse captures
  --framestart arg (=0)                 Initial frame counter value for timelapse captures
  --datetime [=arg(=1)] (=0)            Use date format for output file names
  --timestamp [=arg(=1)] (=0)           Use system timestamps for output file names
  --restart arg (=0)                    Set JPEG restart interval
  -k [ --keypress ] [=arg(=1)] (=0)     Perform capture when ENTER pressed
  -s [ --signal ] [=arg(=1)] (=0)       Perform capture when signal received
  --thumb arg (=320:240:70)             Set thumbnail parameters as width:height:quality
  -e [ --encoding ] arg (=jpg)          Set the desired output encoding, either jpg, png, rgb, bmp or yuv420
  -r [ --raw ] [=arg(=1)] (=0)          Also save raw file in DNG format
  --latest arg                          Create a symbolic link with this name to most recent saved file
  --immediate [=arg(=1)] (=0)           Perform first capture immediately, with no preview phase
pi@raspberrypi4a:~ $
```
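When libcamera-jpeg is started from C# rather than typed into a shell, the options above can also be passed one entry at a time through ProcessStartInfo.ArgumentList (available from .NET Core 2.1 onwards) instead of a single interpolated Arguments string, so a file name containing spaces needs no extra quoting. This is a minimal sketch under that assumption: BuildCaptureArguments, its parameters and the latest.jpg file name are hypothetical, and only options listed in the help output (-o, -t, --nopreview and --rotation) are used.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;
using System.Globalization;

// Hypothetical helper: builds libcamera-jpeg arguments as separate entries,
// using only options shown in the --help output.
static List<string> BuildCaptureArguments(string outputFile, int timeoutMs, bool noPreview, int rotation)
{
    // The help output says --rotation only accepts 0 or 180.
    if (rotation != 0 && rotation != 180)
    {
        throw new ArgumentOutOfRangeException(nameof(rotation), "libcamera-jpeg accepts 0 or 180");
    }

    var arguments = new List<string>
    {
        "-o", outputFile,
        "-t", timeoutMs.ToString(CultureInfo.InvariantCulture)
    };

    if (noPreview)
    {
        arguments.Add("--nopreview");
    }

    if (rotation != 0)
    {
        arguments.Add("--rotation");
        arguments.Add(rotation.ToString(CultureInfo.InvariantCulture));
    }

    return arguments;
}

// Usage: copy the entries into ArgumentList and leave Arguments empty,
// because only one of the two should be used on a ProcessStartInfo.
var startInfo = new ProcessStartInfo("libcamera-jpeg");
foreach (string argument in BuildCaptureArguments("latest.jpg", 1, true, 180))
{
    startInfo.ArgumentList.Add(argument);
}

// prints: -o latest.jpg -t 1 --nopreview --rotation 180
Console.WriteLine(string.Join(" ", startInfo.ArgumentList));
```

The sketch only builds the ProcessStartInfo; calling Process.Start(startInfo) would then run the capture.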

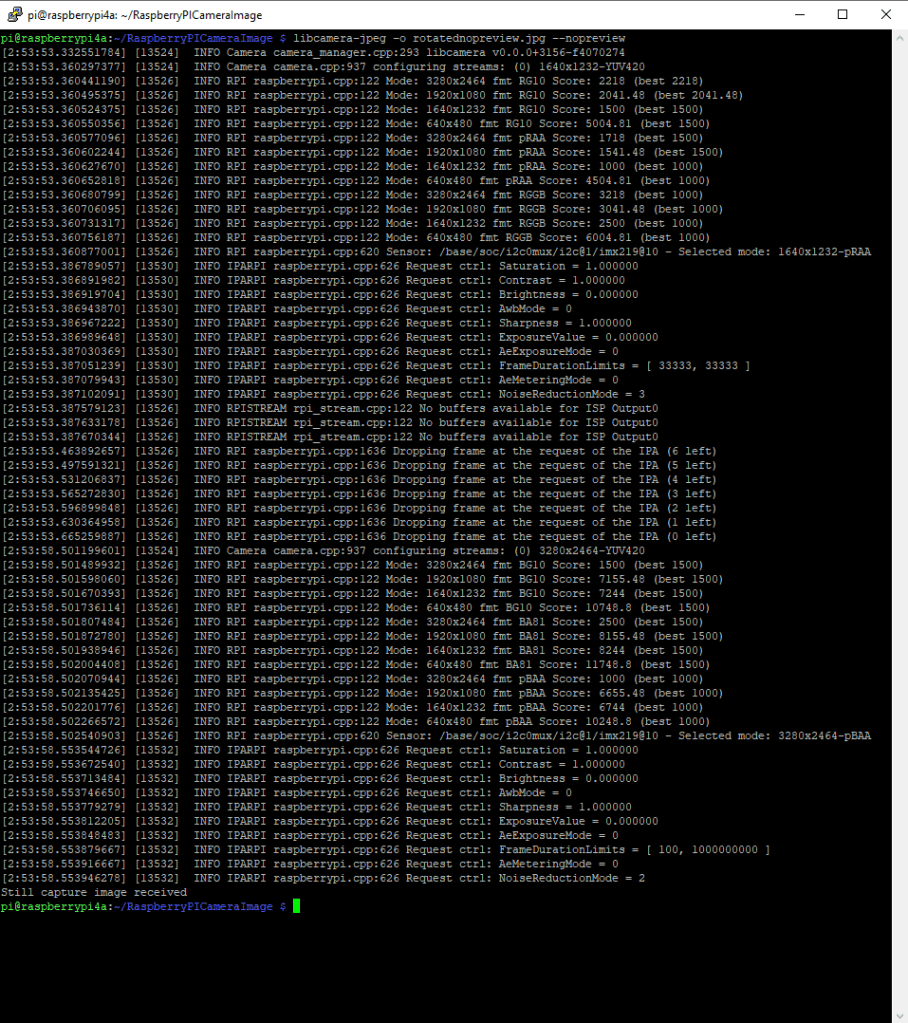

My application, which calls libcamera-jpeg, runs “headless”, so I tried turning off the image preview functionality.

```
libcamera-jpeg -o rotatednopreview.jpg --nopreview
```

When I ran libcamera-jpeg in a console window or from my application, this didn’t appear to make any noticeable difference.

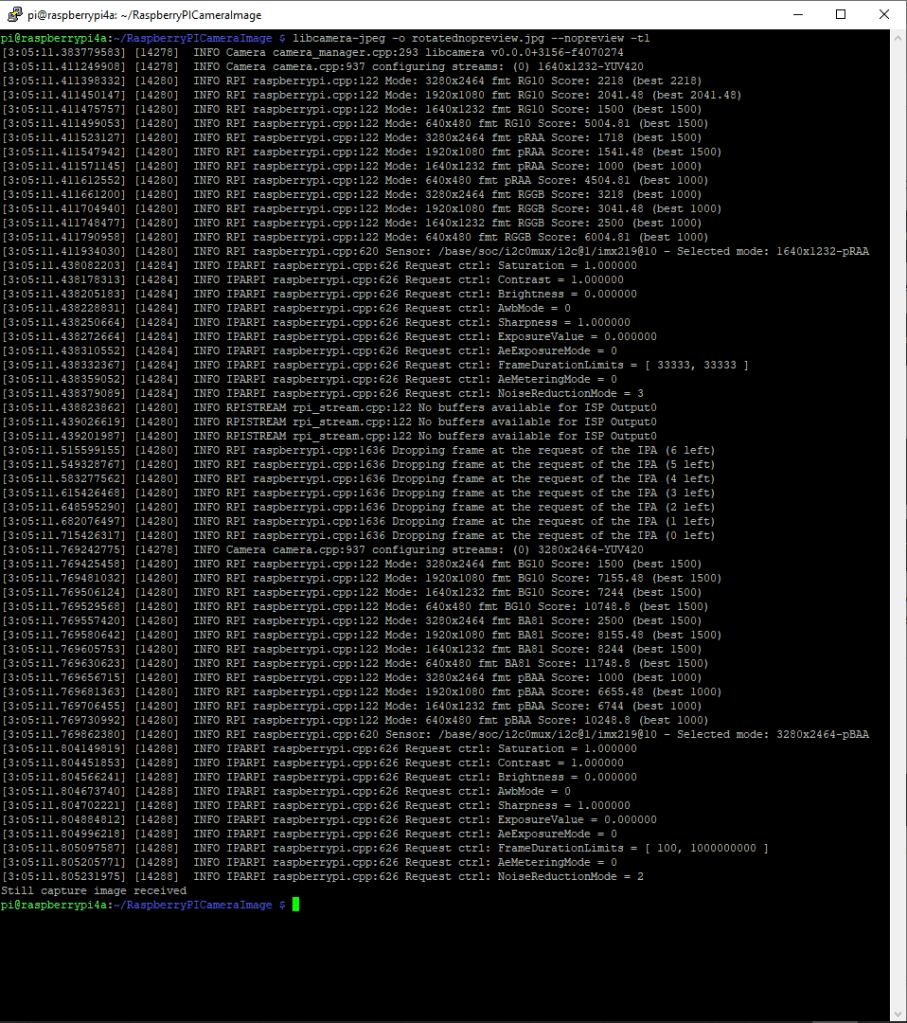

I then had another look at the libcamera-jpeg command line parameters to see if any looked useful for reducing the time it took to take and save an image, and this one caught my attention.

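I had assumed the delay was related to how long the preview window was displayed, but the help output shows that the -t (--timeout) option defaults to 5000 ms, which matches the roughly 5 seconds each capture was taking.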

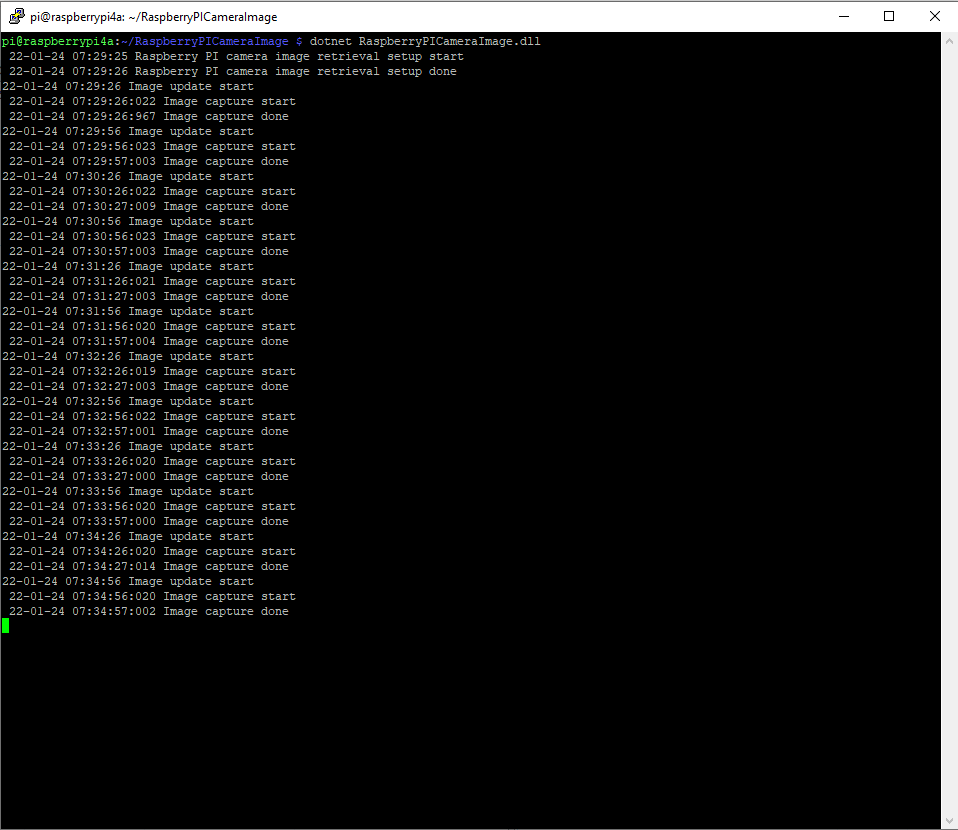

I modified the application (V5), then ran it from the command line, and the capture time dropped to less than a second.

```csharp
private static void ImageUpdateTimerCallback(object state)
{
    Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image update start");

    // Just in case - stop code being called while photo already in progress
    if (_cameraBusy)
    {
        return;
    }
    _cameraBusy = true;

    try
    {
        Console.WriteLine($" {DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image capture start");

        using (Process process = new Process())
        {
            process.StartInfo.FileName = @"libcamera-jpeg";

            // V1 it works
            //process.StartInfo.Arguments = $"-o {_applicationSettings.ImageFilenameLocal}";

            // V3a Image right way up
            //process.StartInfo.Arguments = $"-o {_applicationSettings.ImageFilenameLocal} --vflip --hflip";

            // V3b Image right way up
            //process.StartInfo.Arguments = $"-o {_applicationSettings.ImageFilenameLocal} --rotation 180";

            // V4 Image no preview
            //process.StartInfo.Arguments = $"-o {_applicationSettings.ImageFilenameLocal} --rotation 180 --nopreview";

            // V5 Image no preview, 1 ms timeout (the default is 5000 ms)
            process.StartInfo.Arguments = $"-o {_applicationSettings.ImageFilenameLocal} --nopreview -t1 --rotation 180";

            //process.StartInfo.RedirectStandardOutput = true;

            // V2 No diagnostics
            process.StartInfo.RedirectStandardError = true;

            //process.StartInfo.UseShellExecute = false;
            //process.StartInfo.CreateNoWindow = true;

            process.Start();

            // Read and discard the redirected diagnostics so a full pipe can't stall libcamera-jpeg
            process.ErrorDataReceived += (sender, e) => { };
            process.BeginErrorReadLine();

            if (!process.WaitForExit(10000))
            {
                // ExitCode throws if the process hasn't exited, so report the timeout separately
                Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image update timeout");
                process.Kill();
            }
            else if (process.ExitCode != 0)
            {
                Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image update failure {process.ExitCode}");
            }
        }

        Console.WriteLine($" {DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image capture done");
    }
    catch (Exception ex)
    {
        Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image update error {ex.Message}");
    }
    finally
    {
        // Only reached by the call that set the flag, so it can safely clear it
        _cameraBusy = false;
    }
}
```
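A plain bool check like _cameraBusy is not atomic: two callbacks that start at almost the same moment could both read false before either of them marks the camera as busy. Assuming the callback is driven by a System.Threading.Timer (its object state signature matches TimerCallback), callbacks run on thread-pool threads and can overlap if a capture takes longer than the timer period. A minimal sketch of an atomic alternative uses Interlocked.CompareExchange on an int flag; captureBusy, TryStartCapture and EndCapture are hypothetical names, and in the application the flag would be a static int field in place of the bool.

```csharp
using System;
using System.Threading;

// Hypothetical flag: 0 = idle, 1 = capture in progress.
// In the real application this would be a static int field.
int captureBusy = 0;

// Atomically switches the flag from 0 to 1.
// Returns false if another capture already holds it.
bool TryStartCapture() => Interlocked.CompareExchange(ref captureBusy, 1, 0) == 0;

// Marks the camera as free again.
void EndCapture() => Interlocked.Exchange(ref captureBusy, 0);

// Usage pattern inside the timer callback:
if (TryStartCapture())
{
    try
    {
        Console.WriteLine("Capturing...");  // start libcamera-jpeg and wait for it here
    }
    finally
    {
        EndCapture();  // only the caller that acquired the flag releases it
    }
}
```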

The image capture process now takes less than a second, which is much better (but not a lot less than retrieving an image from one of my security cameras).
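The log lines in the callback use an HH:mm:ss timestamp, which can't show how far under a second a capture actually is. A minimal sketch for measuring it more precisely wraps the work in System.Diagnostics.Stopwatch; Measure is a hypothetical helper, and Thread.Sleep stands in for starting libcamera-jpeg and waiting for it to exit.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

// Hypothetical helper: runs an action and returns how long it took.
static TimeSpan Measure(Action action)
{
    var stopwatch = Stopwatch.StartNew();
    action();
    stopwatch.Stop();
    return stopwatch.Elapsed;
}

// Stand-in for the capture; in the application this would start
// libcamera-jpeg and call WaitForExit on the process.
TimeSpan elapsed = Measure(() => Thread.Sleep(250));

Console.WriteLine($"{DateTime.UtcNow:yy-MM-dd HH:mm:ss} Image capture took {elapsed.TotalMilliseconds:F0} ms");
```

Logging milliseconds this way would make it easy to compare the default 5000 ms timeout against -t1 directly.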