Logging and the start of simplification

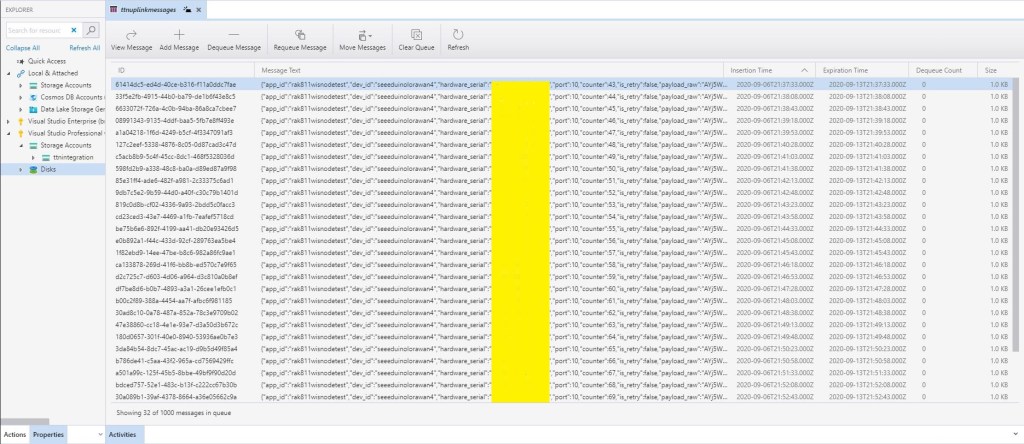

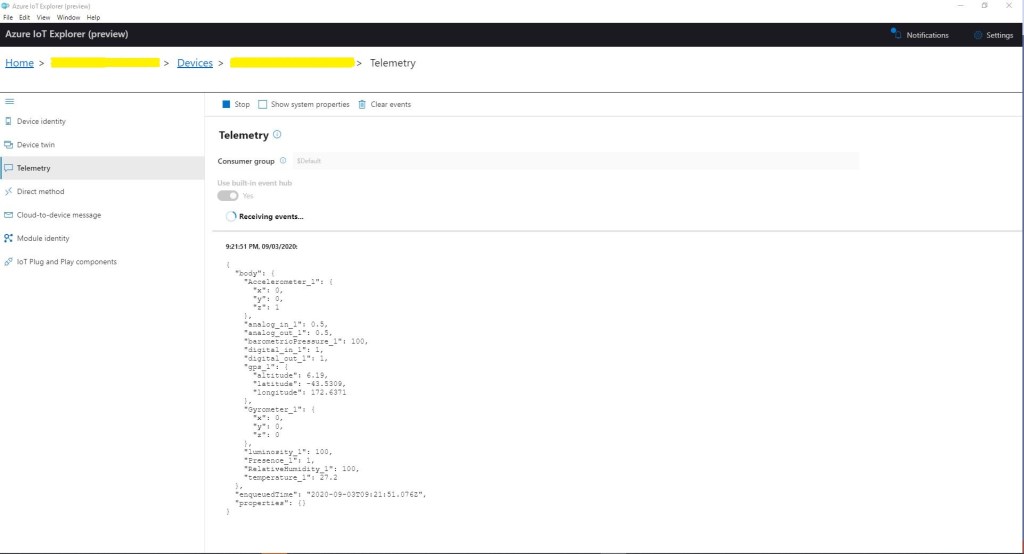

While testing the processing of queued The Things Network (TTN) uplink messages, I noticed that some of the Azure Application Insights events from my Log4Net setup were missing. The MessagesProcessed counter was correct, but there weren’t enough events.

```csharp
using System;
using System.Collections.Concurrent;
using System.IO;
using System.Reflection;
using System.Text.Json;
using System.Threading;
using log4net;
using log4net.Config;
using Microsoft.Azure.WebJobs;

public static class UplinkMessageProcessor
{
    const string RunTag = "Log4Net001";

    static readonly ILog log = log4net.LogManager.GetLogger(System.Reflection.MethodBase.GetCurrentMethod().DeclaringType);

    // First payload seen for each device; static, so it persists between invocations in the same host
    static readonly ConcurrentDictionary<string, PayloadV5> DevicesSeen = new ConcurrentDictionary<string, PayloadV5>();

    static int ConcurrentThreadCount = 0;

    static int MessagesProcessed = 0;

    [FunctionName("UplinkMessageProcessor")]
    public static void Run([QueueTrigger("%UplinkQueueName%", Connection = "AzureStorageConnectionString")] string myQueueItem, Microsoft.Azure.WebJobs.ExecutionContext executionContext)
    {
        try
        {
            // "Plumbing": the log4net configuration file is loaded on every invocation
            var logRepository = LogManager.GetRepository(Assembly.GetEntryAssembly());
            XmlConfigurator.Configure(logRepository, new FileInfo(Path.Combine(executionContext.FunctionAppDirectory, "log4net.config")));

            // PayloadV5 (not shown) is the TTN uplink message class
            PayloadV5 payloadMessage = (PayloadV5)JsonSerializer.Deserialize(myQueueItem, typeof(PayloadV5));

            PayloadV5 payload = DevicesSeen.GetOrAdd(payloadMessage.dev_id, payloadMessage);

            // Use the values Interlocked returns; re-reading the shared fields could pick up other invocations' updates
            int threadCount = Interlocked.Increment(ref ConcurrentThreadCount);
            int messageNumber = Interlocked.Increment(ref MessagesProcessed);

            log.Info($"{messageNumber} {RunTag} DevEui:{payload.dev_id} Threads:{threadCount} First:{payload.metadata.time} Current:{payloadMessage.metadata.time} PayloadRaw:{payload.payload_raw}");

            // Hold the invocation open so concurrent invocations overlap
            Thread.Sleep(2000);

            Interlocked.Decrement(ref ConcurrentThreadCount);
        }
        catch (Exception ex)
        {
            log.Error("Processing of Uplink message failed", ex);
            throw;
        }
    }
}
```

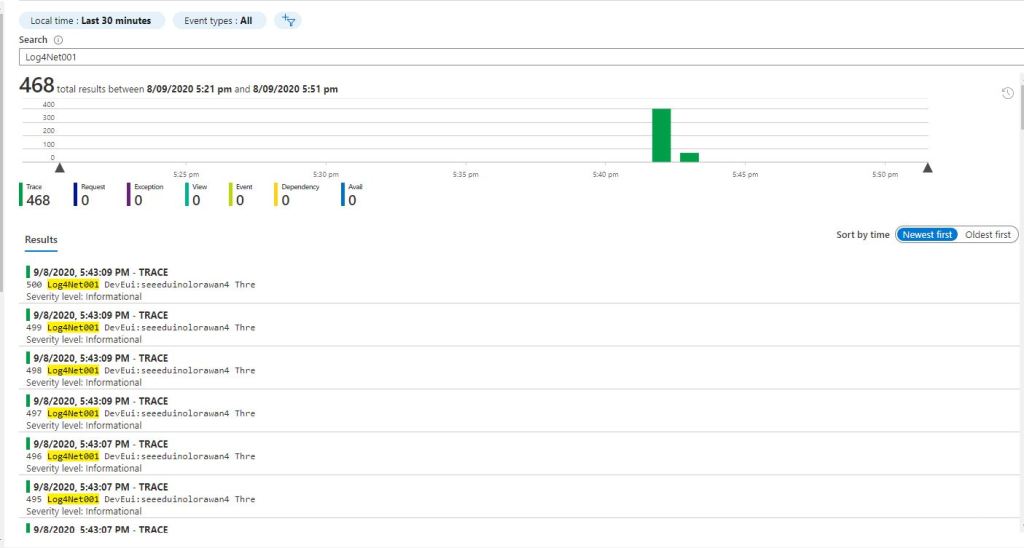

I assumed the events were missing because I wasn’t “flushing” at the end of the Run method. There was also a lot of “plumbing” code (including loading a configuration file on every invocation) to set up Log4Net.
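Log4Net appenders and the Application Insights telemetry channel can buffer events before sending them. To illustrate the flushing assumption, here is a minimal sketch using a hypothetical BufferedEventSink class (plain .NET, not part of Log4Net or Application Insights): buffered events that are never flushed are never sent, while a using statement calls Dispose, and here Flush, even when the body throws.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sink standing in for a buffering appender or telemetry channel:
// events are held in memory and only "sent" when Flush is called.
public sealed class BufferedEventSink : IDisposable
{
    private readonly List<string> pending = new List<string>();

    public List<string> Sent { get; } = new List<string>();

    public void Track(string message) => pending.Add(message);

    public void Flush()
    {
        Sent.AddRange(pending);
        pending.Clear();
    }

    // Dispose flushes, so a using block delivers buffered events even if the body throws
    public void Dispose() => Flush();
}

public static class FlushDemo
{
    // Without a flush the buffered event is never sent, so this returns 0
    public static int SentWithoutFlush()
    {
        var sink = new BufferedEventSink();
        sink.Track("message 1");
        return sink.Sent.Count;
    }

    // The using statement calls Dispose (and therefore Flush) on the way out,
    // including when an exception propagates
    public static int SentWithUsing(bool fail)
    {
        var sink = new BufferedEventSink();
        try
        {
            using (sink)
            {
                sink.Track("message 1");
                if (fail) throw new InvalidOperationException("processing failed");
            }
        }
        catch (InvalidOperationException)
        {
            // Swallowed only so the demo can report how many events were sent
        }
        return sink.Sent.Count;
    }
}
```

The real libraries' buffering and flush behaviour is more involved than this; the sketch only shows why an un-flushed buffer can lose events when an invocation ends.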

I then built another Azure Function using the Application Insights API.

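```csharp
using System;
using System.Collections.Concurrent;
using System.Text.Json;
using System.Threading;
using Microsoft.ApplicationInsights;
using Microsoft.ApplicationInsights.Extensibility;
using Microsoft.Azure.WebJobs;

public static class UplinkMessageProcessor
{
    const string RunTag = "Insights001";

    // First payload seen for each device; static, so it persists between invocations in the same host
    static readonly ConcurrentDictionary<string, PayloadV5> DevicesSeen = new ConcurrentDictionary<string, PayloadV5>();

    static int ConcurrentThreadCount = 0;

    static int MessagesProcessed = 0;

    [FunctionName("UplinkMessageProcessor")]
    public static void Run([QueueTrigger("%UplinkQueueName%", Connection = "AzureStorageConnectionString")] string myQueueItem, Microsoft.Azure.WebJobs.ExecutionContext executionContext)
    {
        // The telemetry configuration (and its channel) is created and disposed on every invocation
        using (TelemetryConfiguration telemetryConfiguration = TelemetryConfiguration.CreateDefault())
        {
            TelemetryClient telemetryClient = new TelemetryClient(telemetryConfiguration);

            try
            {
                // PayloadV5 (not shown) is the TTN uplink message class
                PayloadV5 payloadMessage = (PayloadV5)JsonSerializer.Deserialize(myQueueItem, typeof(PayloadV5));

                PayloadV5 payload = DevicesSeen.GetOrAdd(payloadMessage.dev_id, payloadMessage);

                // Use the values Interlocked returns; re-reading the shared fields could pick up other invocations' updates
                int threadCount = Interlocked.Increment(ref ConcurrentThreadCount);
                int messageNumber = Interlocked.Increment(ref MessagesProcessed);

                telemetryClient.TrackEvent($"{messageNumber} {RunTag} DevEui:{payload.dev_id} Threads:{threadCount} First:{payload.metadata.time} Current:{payloadMessage.metadata.time} PayloadRaw:{payload.payload_raw}");

                // Hold the invocation open so concurrent invocations overlap
                Thread.Sleep(2000);

                Interlocked.Decrement(ref ConcurrentThreadCount);
            }
            catch (Exception ex)
            {
                telemetryClient.TrackException(ex);
                throw;
            }
        }
    }
}
```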

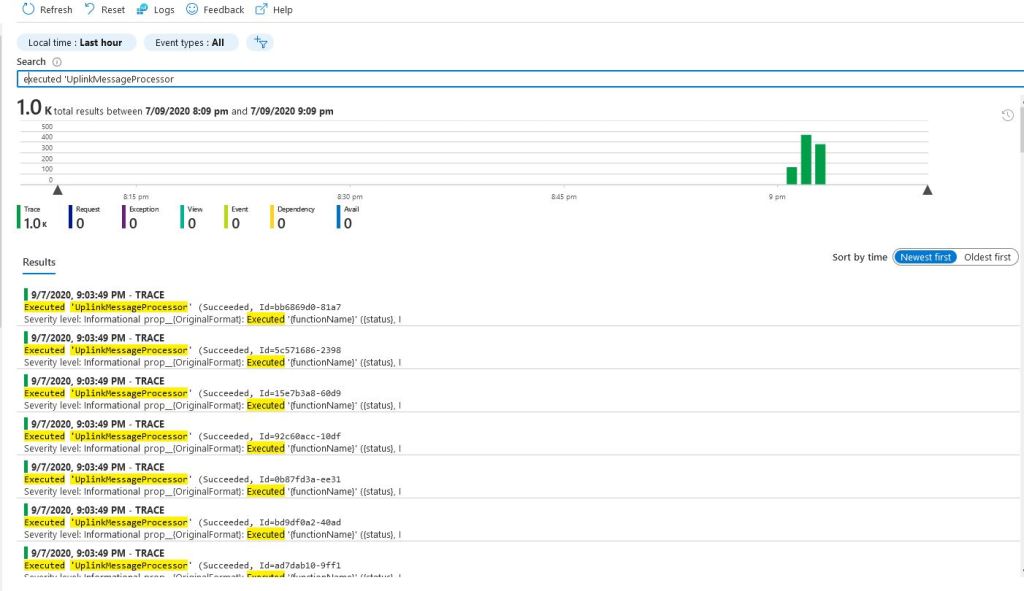

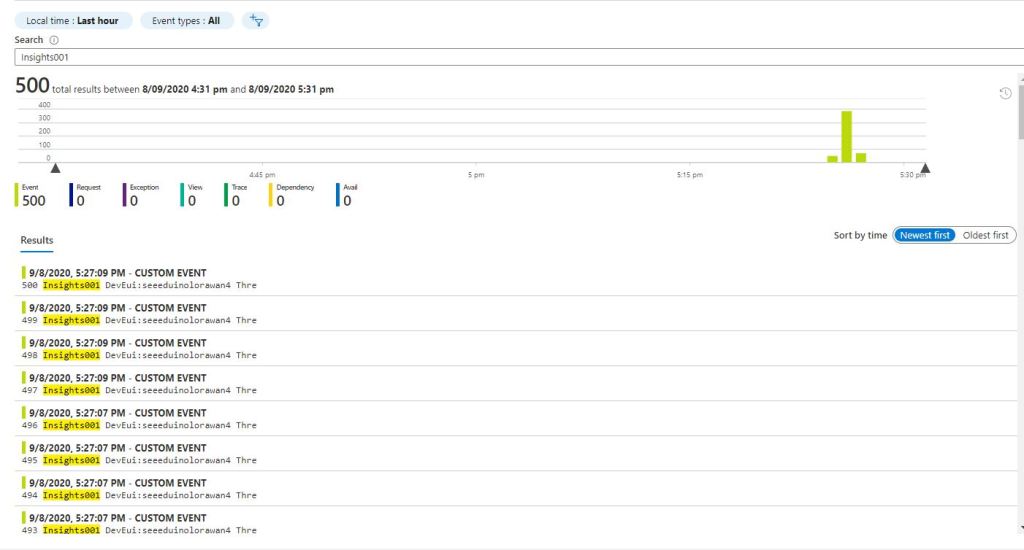

Application Insights API events in the Application Insights event viewer

I assumed no events were missing because disposing the TelemetryConfiguration at the end of the using statement was “flushing” every time the Run method completed. There was still a bit of “plumbing” code that it would be good to get rid of.
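Much of the remaining plumbing is per-invocation setup. Static fields live for the lifetime of the Functions host process (which is what lets DevicesSeen and the counters accumulate across invocations), so one option is to create an expensive client once with Lazy&lt;T&gt;. A minimal sketch, assuming a hypothetical ExpensiveClient class standing in for a telemetry or logging client and a hypothetical SketchProcessor.Run standing in for the queue-triggered function:

```csharp
using System;
using System.Threading;

// Hypothetical stand-in for a client that is expensive to create
// (for example one that loads configuration files).
public sealed class ExpensiveClient
{
    static int instancesCreated = 0;
    static int eventsTracked = 0;

    public static int InstancesCreated => Volatile.Read(ref instancesCreated);
    public static int EventsTracked => Volatile.Read(ref eventsTracked);

    public ExpensiveClient()
    {
        // The one-off setup cost would be paid here
        Interlocked.Increment(ref instancesCreated);
    }

    // Counts events instead of sending them, to keep the sketch self-contained
    public void TrackEvent(string name) => Interlocked.Increment(ref eventsTracked);
}

public static class SketchProcessor
{
    // ExecutionAndPublication guarantees the factory runs only once,
    // even if many invocations start at the same time
    static readonly Lazy<ExpensiveClient> Client =
        new Lazy<ExpensiveClient>(() => new ExpensiveClient(), LazyThreadSafetyMode.ExecutionAndPublication);

    // Stand-in for a queue-triggered Run method: no per-invocation setup
    public static void Run(string message) => Client.Value.TrackEvent(message);
}
```

The trade-off is that with a single long-lived client the per-invocation dispose, and the flush that seemed to come with it, no longer happens, so missing events would need rechecking if this route were taken.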

When I generated Azure Function stubs, there was an ILogger parameter which the Dependency Injection (DI) infrastructure set up.

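```csharp
using System;
using System.Collections.Concurrent;
using System.Text.Json;
using System.Threading;
using Microsoft.Azure.WebJobs;
using Microsoft.Extensions.Logging;

public static class UplinkMessageProcessor
{
    const string RunTag = "Logger002";

    // First payload seen for each device; static, so it persists between invocations in the same host
    static readonly ConcurrentDictionary<string, PayloadV5> DevicesSeen = new ConcurrentDictionary<string, PayloadV5>();

    static int ConcurrentThreadCount = 0;

    static int MessagesProcessed = 0;

    [FunctionName("UplinkMessageProcessor")]
    public static void Run([QueueTrigger("%UplinkQueueName%", Connection = "AzureStorageConnectionString")] string myQueueItem, ILogger log)
    {
        // The ILogger is supplied by the Functions runtime, so no logging setup code is needed here
        try
        {
            // PayloadV5 (not shown) is the TTN uplink message class
            PayloadV5 payloadMessage = (PayloadV5)JsonSerializer.Deserialize(myQueueItem, typeof(PayloadV5));

            PayloadV5 payload = DevicesSeen.GetOrAdd(payloadMessage.dev_id, payloadMessage);

            // Use the values Interlocked returns; re-reading the shared fields could pick up other invocations' updates
            int threadCount = Interlocked.Increment(ref ConcurrentThreadCount);
            int messageNumber = Interlocked.Increment(ref MessagesProcessed);

            log.LogInformation($"{messageNumber} {RunTag} DevEui:{payload.dev_id} Threads:{threadCount} First:{payload.metadata.time} Current:{payloadMessage.metadata.time} PayloadRaw:{payload.payload_raw}");

            // Hold the invocation open so concurrent invocations overlap
            Thread.Sleep(2000);

            Interlocked.Decrement(ref ConcurrentThreadCount);
        }
        catch (Exception ex)
        {
            log.LogError(ex, "Processing of Uplink message failed");
            throw;
        }
    }
}
```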

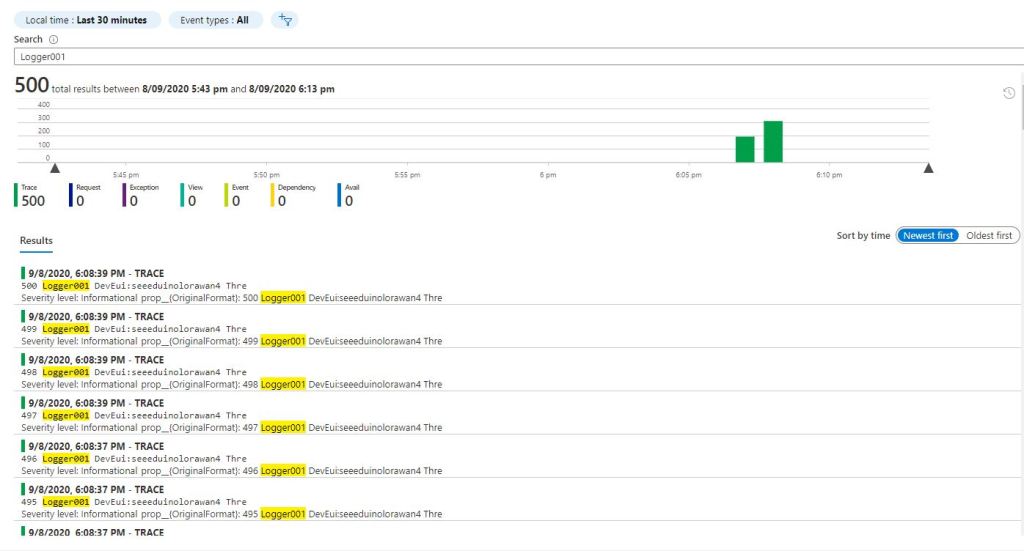

This implementation had even less code, and all the messages were visible in the Azure Application Insights event viewer.
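Spotting missing events relies on each event carrying a unique message number. Reading a shared counter again after Interlocked.Increment can pick up another invocation's increment, whereas the value Increment returns belongs to the caller alone. A minimal sketch in plain .NET (MessageCounter and SimulateConcurrentCalls are hypothetical names) that simulates concurrent invocations and keeps the number each one would log:

```csharp
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper illustrating a message-number counter shared by concurrent invocations
public static class MessageCounter
{
    static int messagesProcessed = 0;

    // Interlocked.Increment adds one atomically and returns the new value,
    // so each caller gets its own number without re-reading the shared field
    public static int Next() => Interlocked.Increment(ref messagesProcessed);

    // Simulates 'count' concurrent invocations and collects the number each one would log
    public static int[] SimulateConcurrentCalls(int count)
    {
        var logged = new ConcurrentBag<int>();
        Parallel.For(0, count, _ => logged.Add(Next()));
        return logged.ToArray();
    }
}
```

Because every number from 1 to the final count is handed out exactly once, a gap in the numbers seen in the event viewer points at a lost event rather than a duplicated read.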

While building the Proof of Concept (PoC) implementations, I added the RunTag constant so I could search for the messages relating to a test session in the Azure Application Insights event viewer. The queue name and storage account connection string were “automagically” loaded from the application settings by the runtime, which also reduced the amount of code.

```csharp
[QueueTrigger("%UplinkQueueName%", Connection = "AzureStorageConnectionString")]
```
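The %UplinkQueueName% syntax tells the Functions runtime to take the queue name from an application setting (the Values section of local.settings.json when running locally), and Connection names the setting that holds the storage connection string. A simplified, hypothetical illustration of the placeholder substitution; BindingExpressionSketch is not the WebJobs SDK implementation and a dictionary stands in for the application settings:

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical, simplified illustration of how "%name%" placeholders map to app settings.
// This is NOT how the WebJobs SDK is implemented.
public static class BindingExpressionSketch
{
    static readonly Regex Placeholder = new Regex("%([^%]+)%");

    // Replaces each %SettingName% with the matching setting value,
    // failing loudly if the setting does not exist
    public static string Resolve(string expression, IReadOnlyDictionary<string, string> appSettings)
    {
        return Placeholder.Replace(expression, match =>
        {
            string settingName = match.Groups[1].Value;
            if (!appSettings.TryGetValue(settingName, out string value))
            {
                throw new InvalidOperationException($"App setting '{settingName}' not found");
            }
            return value;
        });
    }
}
```

Keeping the queue name in settings means the same function code can be pointed at a different queue per deployment without a rebuild.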

At this point I had minimised the amount and complexity of the code required to process messages in the ttnuplinkmessages queue. Reducing the amount of “startup” work required should make my QueueTrigger Azure Function faster. But there was still a lot of boilerplate code for deserialising the body of the message, which added complexity.
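One way to trim the deserialisation code is the generic JsonSerializer.Deserialize&lt;T&gt; overload, which returns a typed object so no cast is needed, with [JsonPropertyName] mapping TTN's snake_case field names onto C# property names. A minimal sketch using hypothetical, trimmed-down UplinkPayload and UplinkMetadata classes that contain only the fields the functions log (the real PayloadV5 class has more):

```csharp
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical, trimmed-down payload types containing only the fields the functions log
public class UplinkMetadata
{
    [JsonPropertyName("time")]
    public string Time { get; set; }
}

public class UplinkPayload
{
    [JsonPropertyName("dev_id")]
    public string DevId { get; set; }

    [JsonPropertyName("payload_raw")]
    public string PayloadRaw { get; set; }

    [JsonPropertyName("metadata")]
    public UplinkMetadata Metadata { get; set; }
}

public static class UplinkPayloadParser
{
    // The generic overload returns a typed result; JSON fields with no matching property are ignored
    public static UplinkPayload Parse(string queueMessage) =>
        JsonSerializer.Deserialize<UplinkPayload>(queueMessage);
}
```

Queue triggers can also bind directly to a POCO parameter type, in which case the runtime deserialises the JSON message body itself; whether that suits PayloadV5 is worth checking against the Azure Functions queue trigger documentation.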

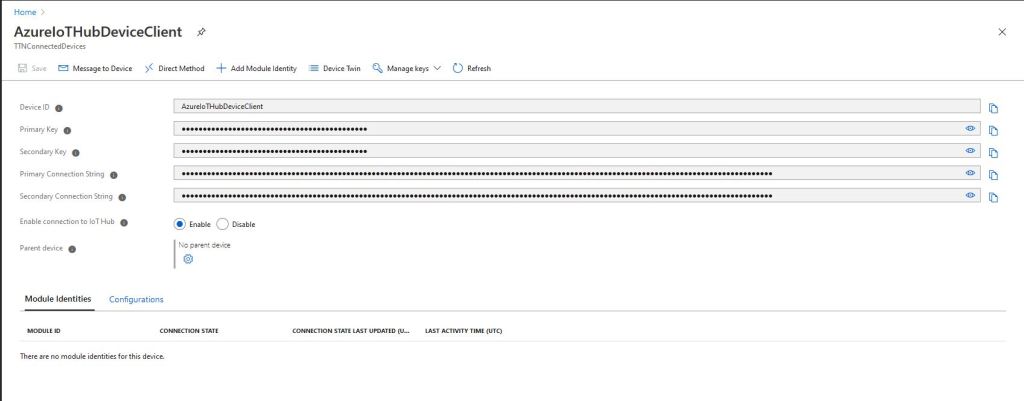

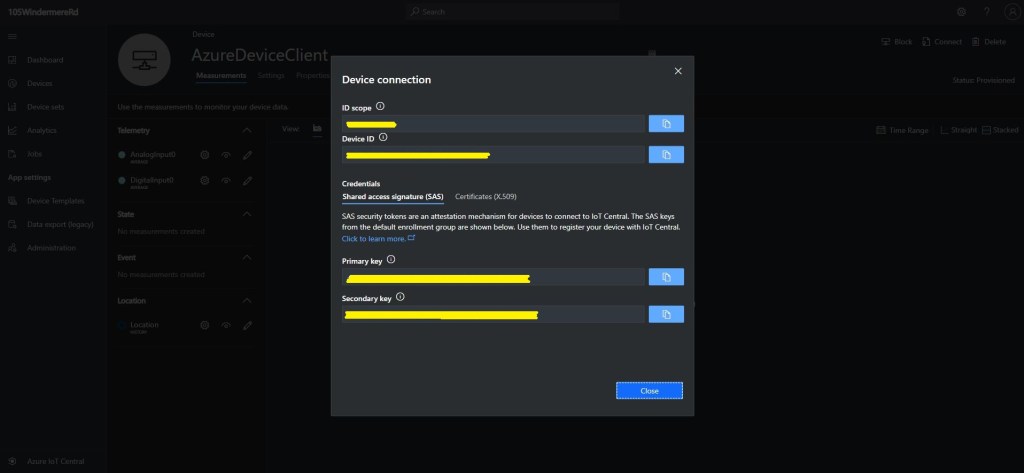

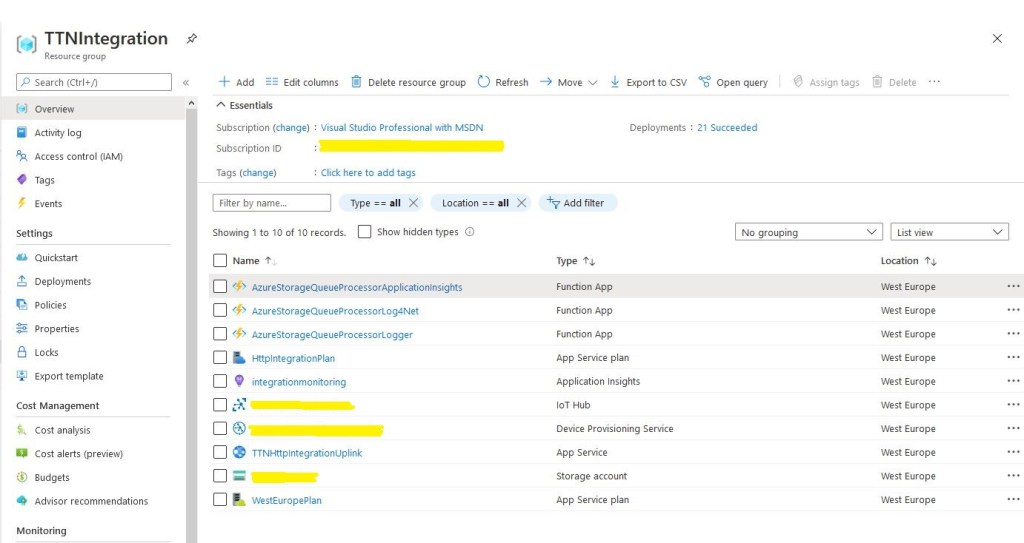

At this point I realised I had a lot of code across multiple projects, which had helped me break down the problem into manageable chunks but didn’t add a lot of value.