So far, to keep the code really obvious (tends to be more verbose) I have limited the use Dependency Injection(DI). I have been “injecting” an instance of an IConfiguration interface then retrieving the database connection string and other configuration. This isn’t a great approach as the database connection string name is in multiple files etc.

public CustomerController(IConfiguration configuration, ILogger<CustomerController> logger)

{

this.connectionString = configuration.GetConnectionString("WorldWideImportersDatabase");

this.logger = logger;

}

I wanted to explore the impact(s) of different DI approaches on ADO.Net connection pooling. With the system idle I used exec sp_who to see how many connections there were to my SQL Azure database.

Dapper Context approach

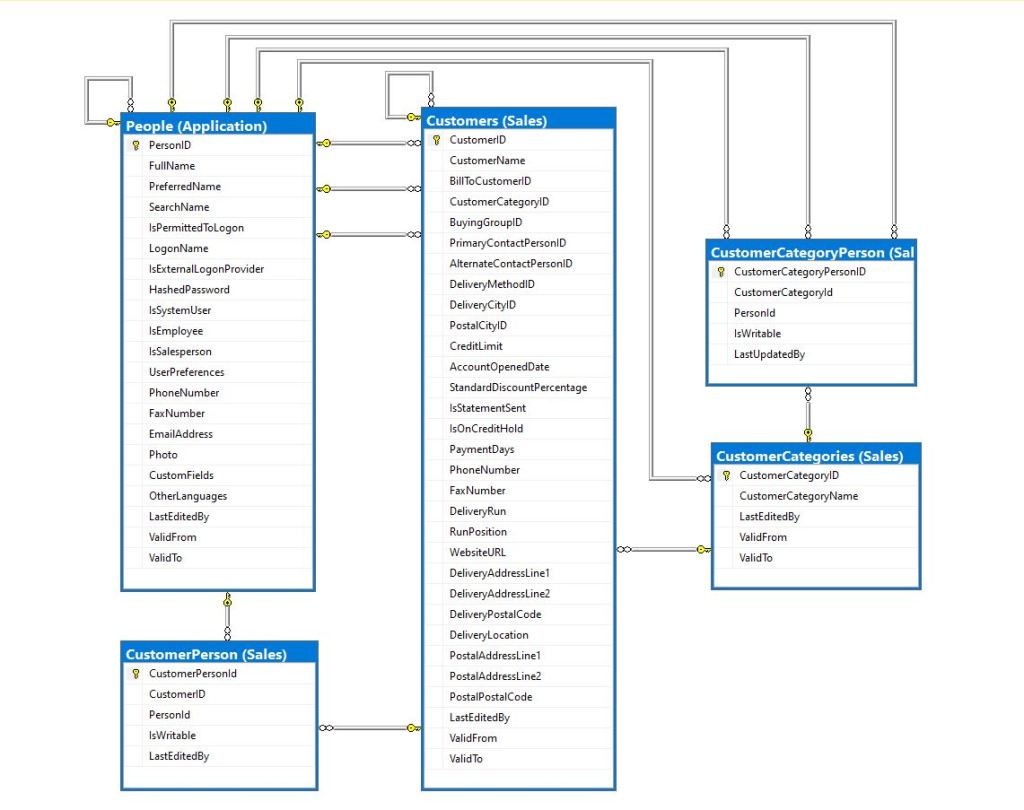

The application I’m currently working on uses a Command and Query Responsibility Segregation(CQRS) like approach. The application is largely “read only” so we have replicas of the database to improve the performance of queries hence the ConnectionReadCreate and ConnectionWriteCreate methods.

namespace devMobile.WebAPIDapper.ListsDIBasic

{

using System.Data;

using System.Data.SqlClient;

using Microsoft.Extensions.Configuration;

public interface IDapperContext

{

public IDbConnection ConnectionCreate();

public IDbConnection ConnectionCreate(string connectionStringName);

public IDbConnection ConnectionReadCreate();

public IDbConnection ConnectionWriteCreate();

}

public class DapperContext : IDapperContext

{

private readonly IConfiguration _configuration;

public DapperContext(IConfiguration configuration)

{

_configuration = configuration;

}

public IDbConnection ConnectionCreate()

{

return new SqlConnection(_configuration.GetConnectionString("default"));

}

public IDbConnection ConnectionCreate(string connectionStringName)

{

return new SqlConnection(_configuration.GetConnectionString(connectionStringName));

}

public IDbConnection ConnectionReadCreate()

{

return new SqlConnection(_configuration.GetConnectionString("default-read"));

}

public IDbConnection ConnectionWriteCreate()

{

return new SqlConnection(_configuration.GetConnectionString("default-write"));

}

}

}

I have experimented with how the IDapperContext context is constructed in the application startup with builder.Services.AddSingleton, builder.Services.AddScopedand and builder.Services.AddTransient.

public class Program

{

public static void Main(string[] args)

{

var builder = WebApplication.CreateBuilder(args);

// Add services to the container.

//builder.Services.AddSingleton<IDapperContext>(s => new DapperContext(builder.Configuration));

//builder.Services.AddTransient<IDapperContext>(s => new DapperContext(builder.Configuration));

//builder.Services.AddScoped<IDapperContext>(s => new DapperContext(builder.Configuration));

builder.Services.AddControllers();

var app = builder.Build();

// Configure the HTTP request pipeline.

app.UseHttpsRedirection();

app.MapControllers();

app.Run();

}

}

Then in the StockItems controller the IDapperContext interface implementation is used to create an IDbConnection for Dapper operations to use. I also added “WAITFOR DELAY ’00:00:02″ to the query to extend the duration of the requests.

[ApiController]

[Route("api/[controller]")]

public class StockItemsController : ControllerBase

{

private readonly ILogger<StockItemsController> logger;

private readonly IDapperContext dapperContext;

public StockItemsController(ILogger<StockItemsController> logger, IDapperContext dapperContext)

{

this.logger = logger;

this.dapperContext = dapperContext;

}

[HttpGet]

public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> Get()

{

IEnumerable<Model.StockItemListDtoV1> response;

using (IDbConnection db = dapperContext.ConnectionCreate())

{

//response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]", commandType: CommandType.Text);

response = await db.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]; WAITFOR DELAY '00:00:02'", commandType: CommandType.Text);

}

return this.Ok(response);

}

...

}

I ran a stress testing application which simulated 50 concurrent users. When the stress test rig was stopped all the connections in the pool were closed after roughly 5 minutes.

Nasty approach

I then tried using DI to create a new connection for each request using builder.Services.AddScoped

public class Program

{

public static void Main(string[] args)

{

var builder = WebApplication.CreateBuilder(args);

// Add services to the container.

//builder.Services.AddSingleton<IDbConnection>(s => new SqlConnection(builder.Configuration.GetConnectionString("default")));

//builder.Services.AddScoped<IDbConnection>(s => new SqlConnection(builder.Configuration.GetConnectionString("default")));

//builder.Services.AddTransient<IDbConnection>(s => new SqlConnection(builder.Configuration.GetConnectionString("default")));

builder.Services.AddControllers();

var app = builder.Build();

app.UseHttpsRedirection();

app.MapControllers();

app.Run();

}

}

The code in the get method was reduced. I also added “WAITFOR DELAY ’00:00:02″ to the query to extend the duration of the requests.

public class StockItemsController : ControllerBase

{

private readonly ILogger<StockItemsController> logger;

private readonly IDbConnection dbConnection;

public StockItemsController(ILogger<StockItemsController> logger, IDbConnection dbConnection)

{

this.logger = logger;

this.dbConnection = dbConnection;

}

[HttpGet]

public async Task<ActionResult<IEnumerable<Model.StockItemListDtoV1>>> Get()

{

// return this.Ok(await dbConnection.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]; WAITFOR DELAY '00:00:02';", commandType: CommandType.Text));

return this.Ok(await dbConnection.QueryWithRetryAsync<Model.StockItemListDtoV1>(sql: @"SELECT [StockItemID] as ""ID"", [StockItemName] as ""Name"", [RecommendedRetailPrice], [TaxRate] FROM [Warehouse].[StockItems]", commandType: CommandType.Text));

}

...

}

With the stress test rig running the number of active connections was roughly the same as the DapperContext based implementation.

I don’t like this approach so will stick with DapperContext