Introduction

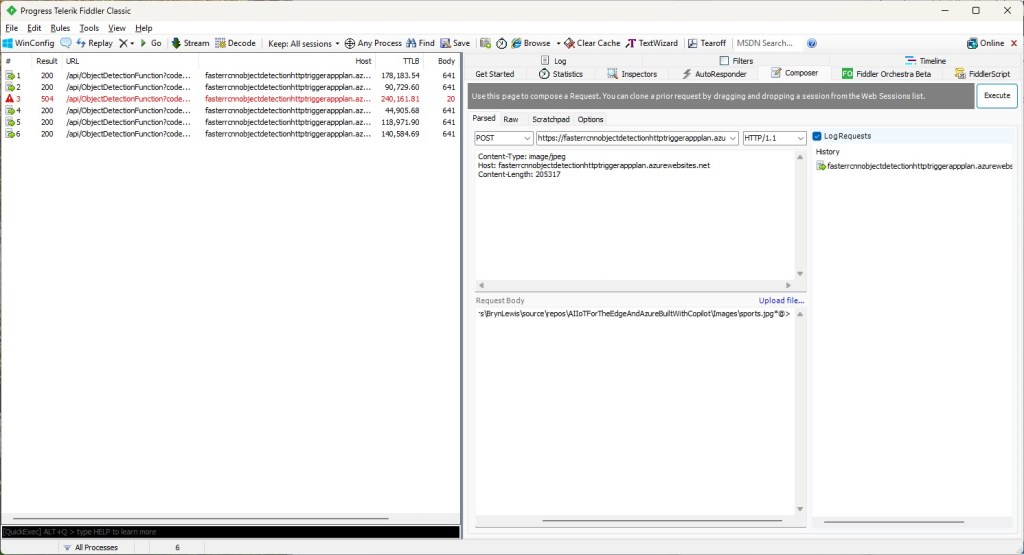

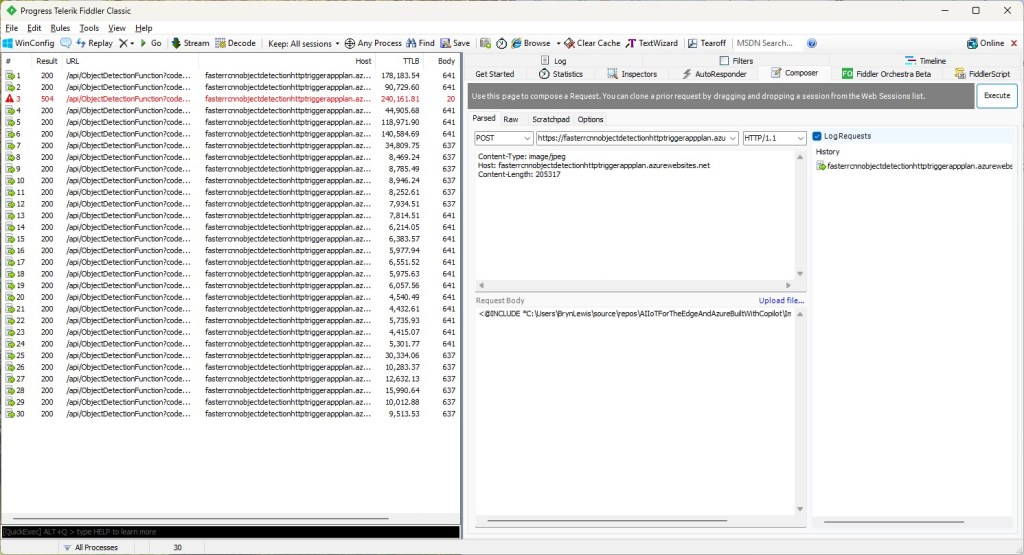

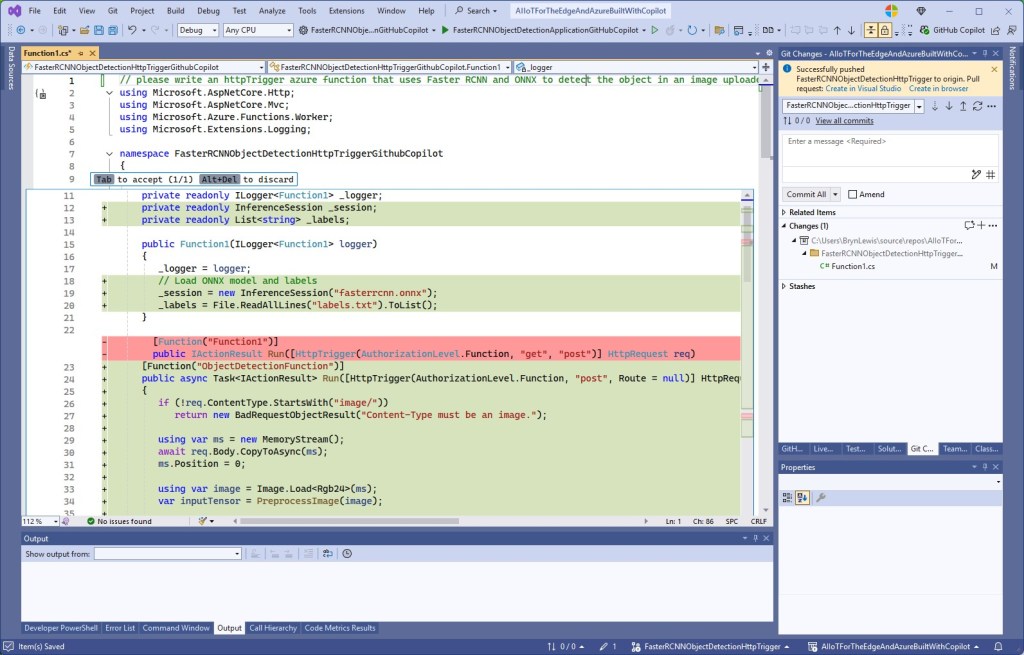

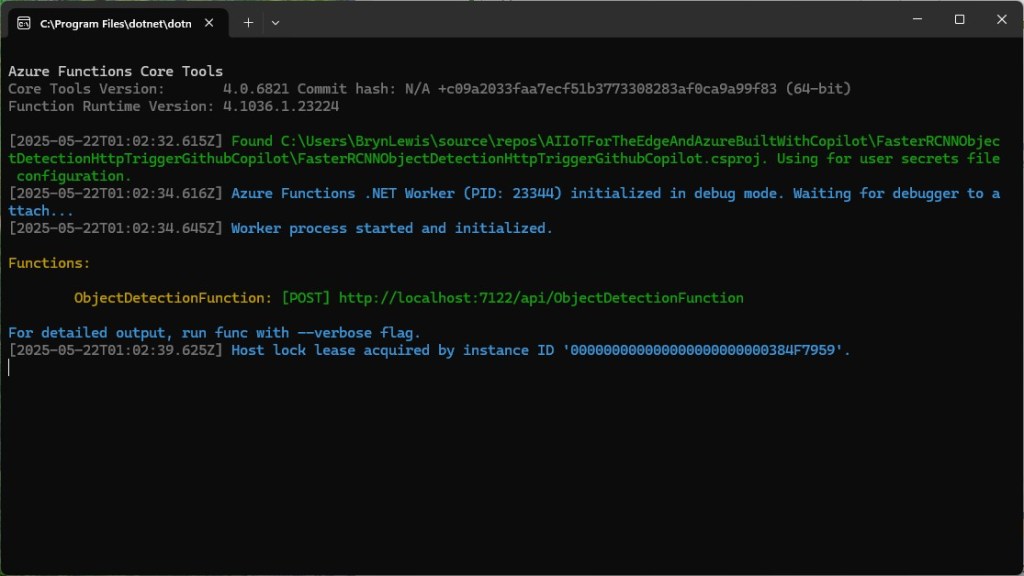

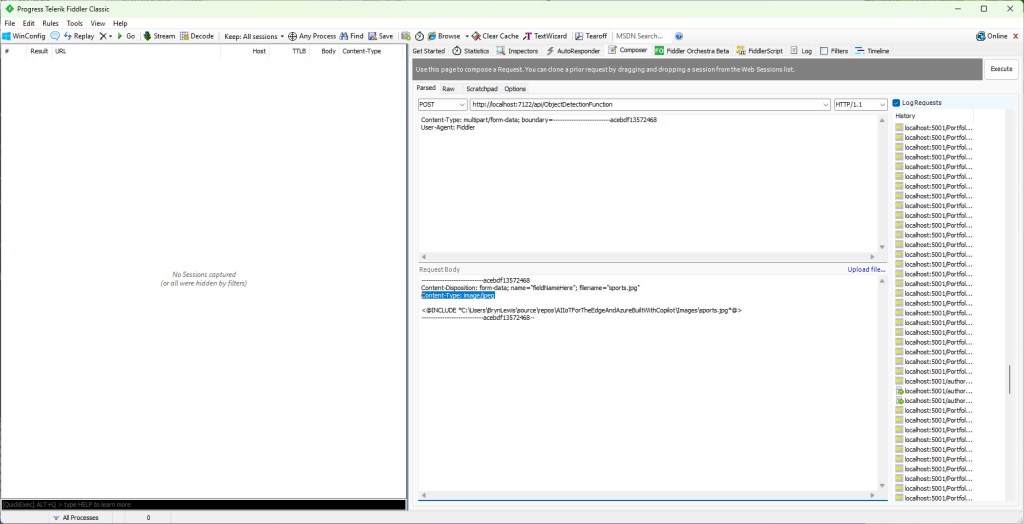

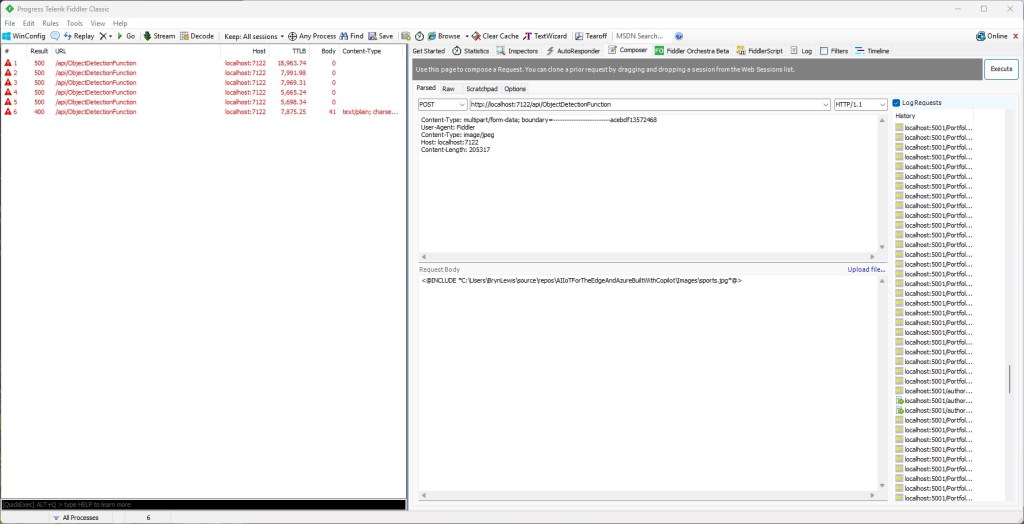

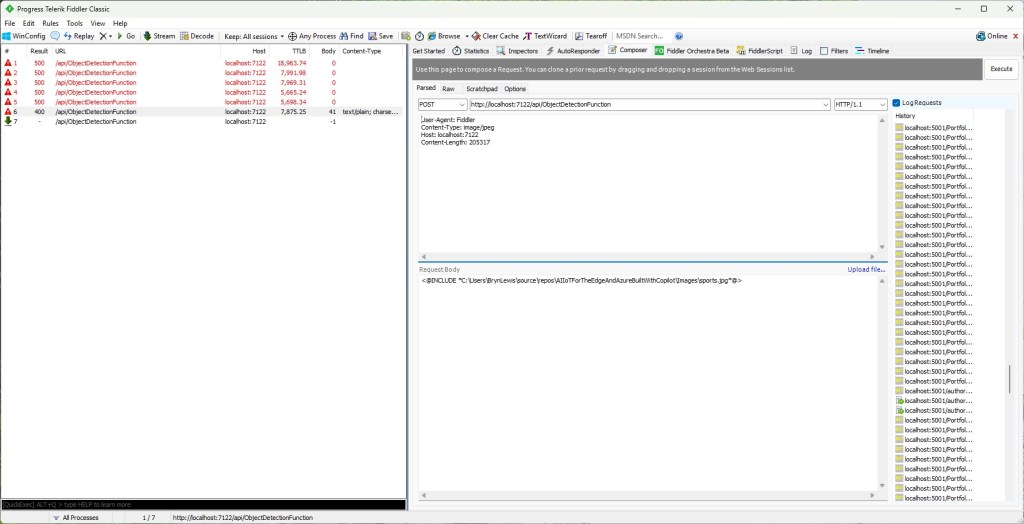

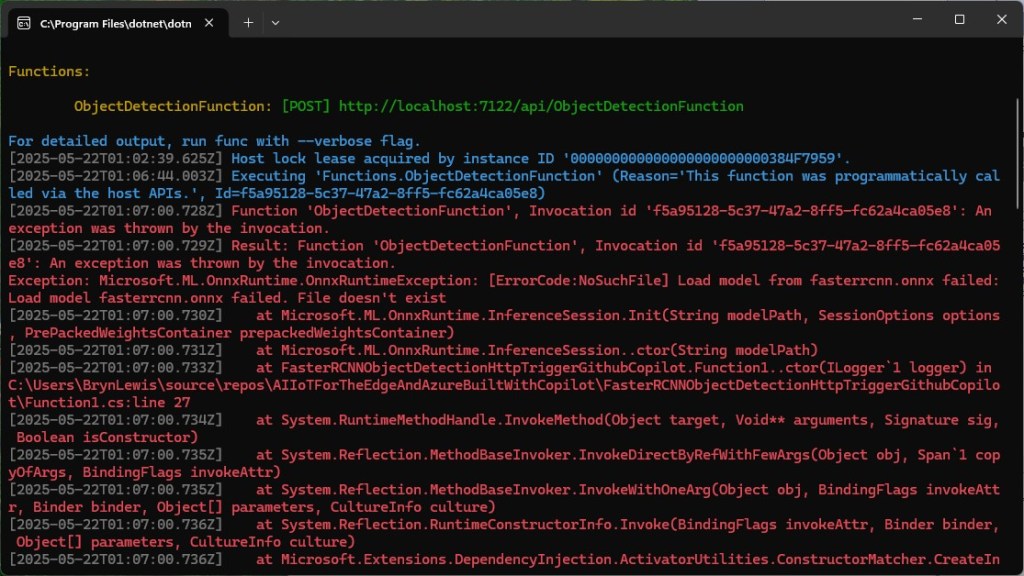

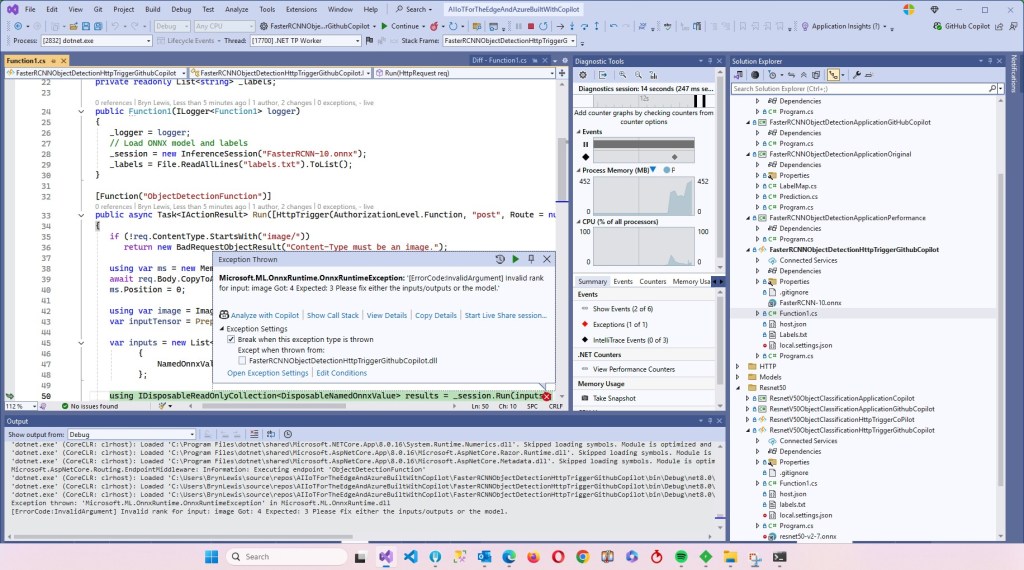

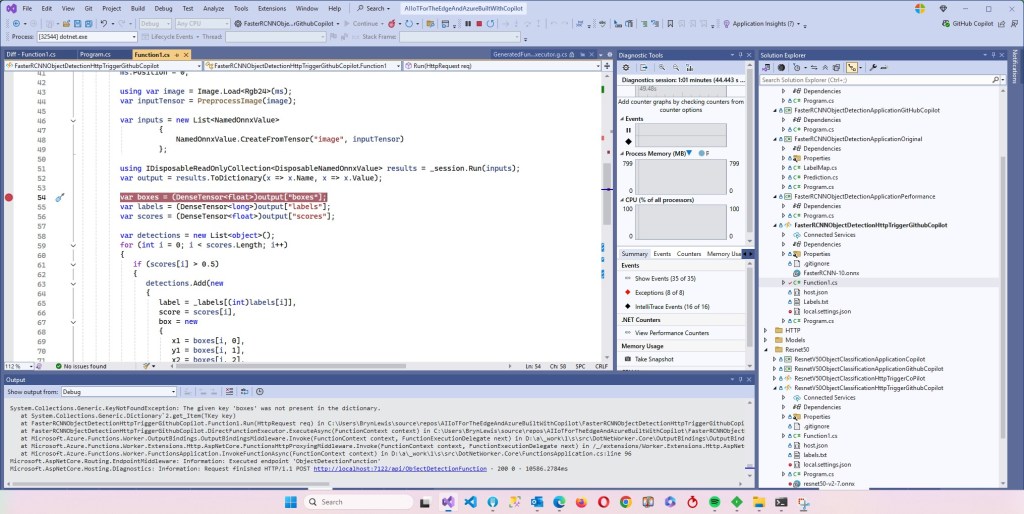

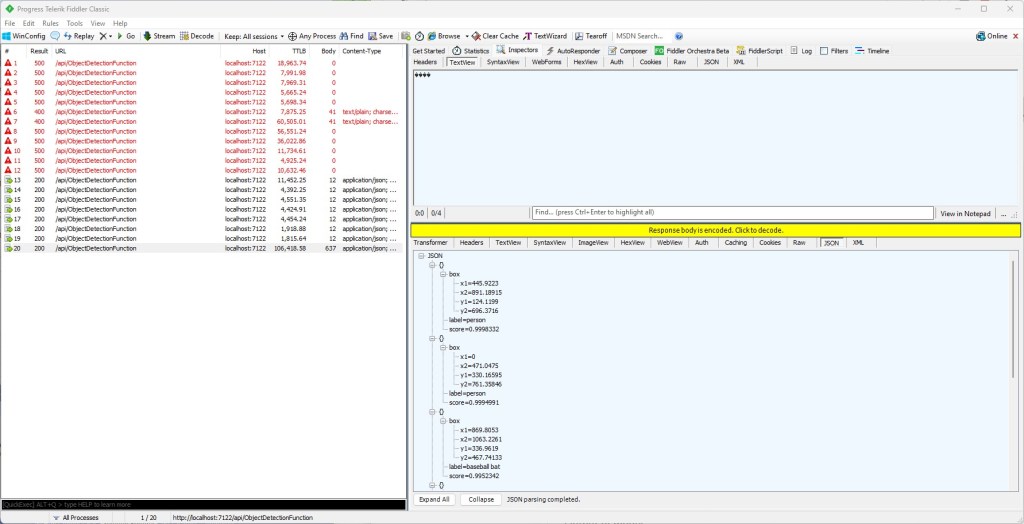

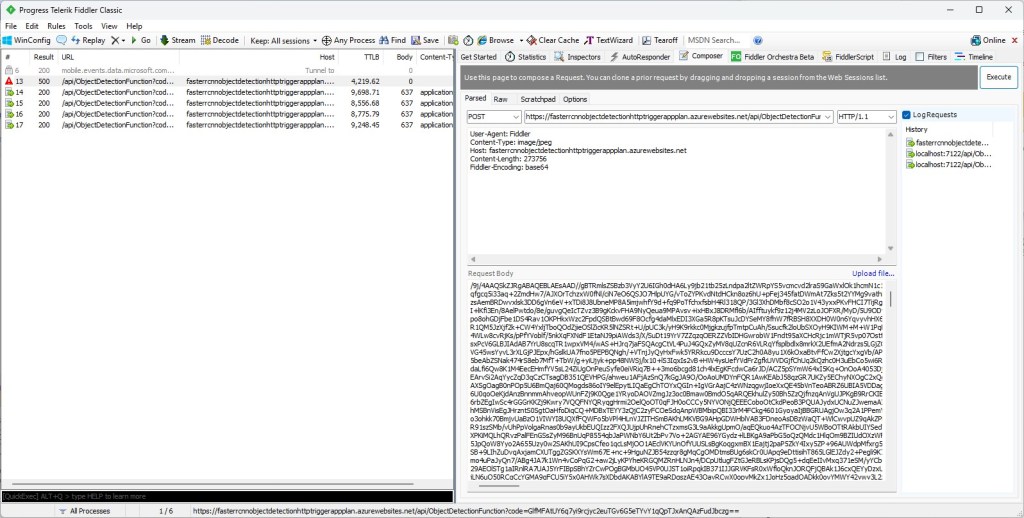

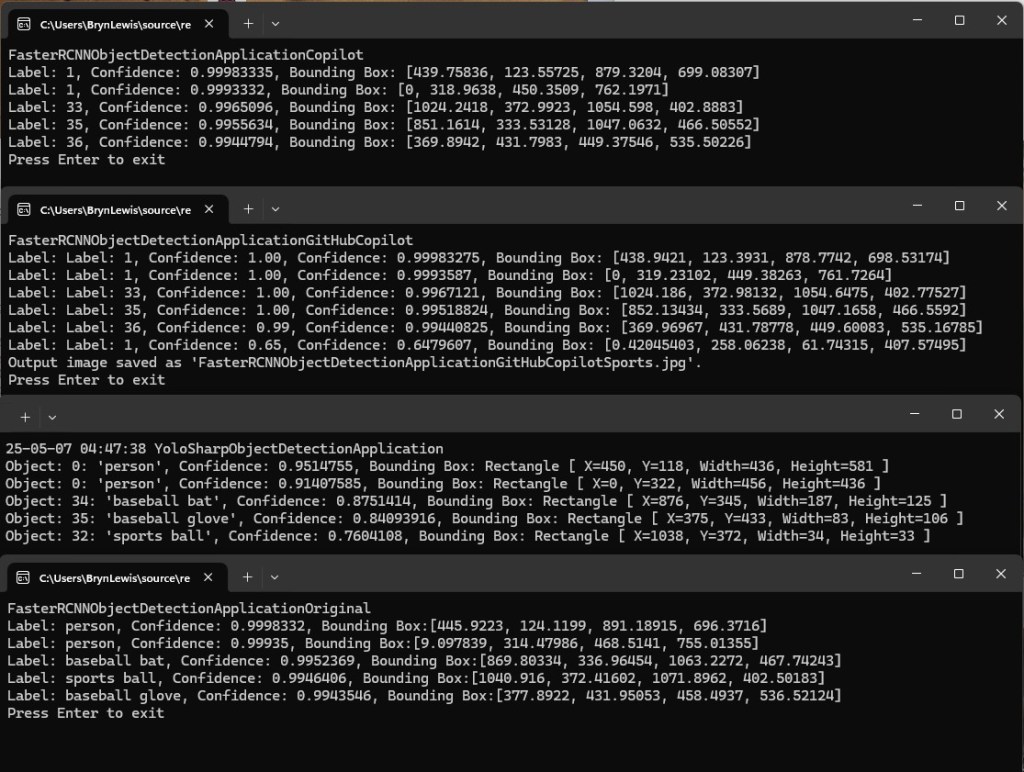

While testing the FasterRCNNObjectDetectionHttpTrigger function with Telerik Fiddler Classic and my “standard” test image, I noticed the response bodies were different sizes.

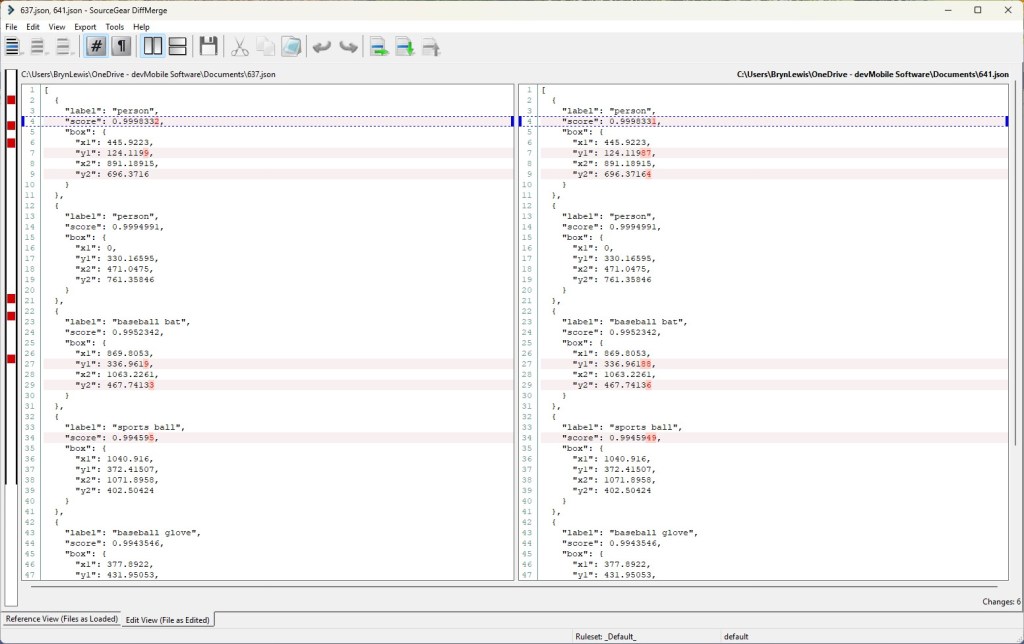

Initially the application plan was an S1 SKU (1 vCPU 1.75G RAM)

The output JSON was 641 bytes

[

{

"label": "person",

"score": 0.9998331,

"box": {

"x1": 445.9223, "y1": 124.11987, "x2": 891.18915, "y2": 696.37164

}

},

{

"label": "person",

"score": 0.9994991,

"box": {

"x1": 0, "y1": 330.16595, "x2": 471.0475, "y2": 761.35846

}

},

{

"label": "baseball bat",

"score": 0.9952342,

"box": { "x1": 869.8053, "y1": 336.96188, "x2": 1063.2261, "y2": 467.74136

}

},

{

"label": "sports ball",

"score": 0.9945949,

"box": { "x1": 1040.916, "y1": 372.41507, "x2": 1071.8958, "y2": 402.50424

}

},

{

"label": "baseball glove",

"score": 0.9943546,

"box": {

"x1": 377.8922, "y1": 431.95053, "x2": 458.4937, "y2": 536.52124

}

},

{

"label": "person",

"score": 0.51779467,

"box": {

"x1": 0, "y1": 239.91418, "x2": 60.342667, "y2": 397.17004

}

}

]

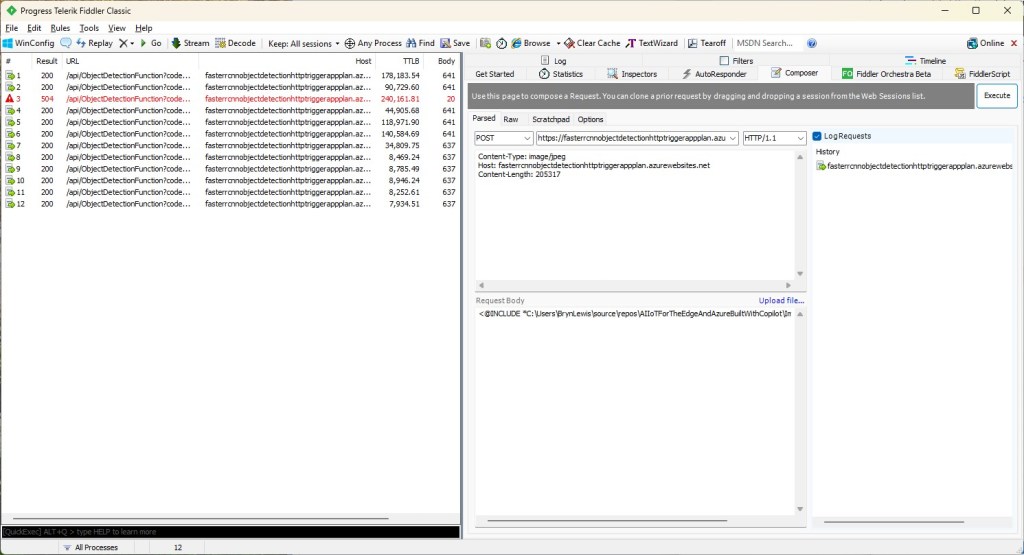

The application plan was scaled to a Premium v3 P0V3 (1 vCPU 4G RAM)

The output JSON was 637 bytes

[

{

"label": "person",

"score": 0.9998332,

"box": {

"x1": 445.9223, "y1": 124.1199, "x2": 891.18915, "y2": 696.3716

}

},

{

"label": "person",

"score": 0.9994991,

"box": { "x1": 0, "y1": 330.16595, "x2": 471.0475, "y2": 761.35846

}

},

{

"label": "baseball bat",

"score": 0.9952342,

"box": {

"x1": 869.8053, "y1": 336.9619, "x2": 1063.2261, "y2": 467.74133

}

},

{

"label": "sports ball",

"score": 0.994595,

"box": {

"x1": 1040.916, "y1": 372.41507, "x2": 1071.8958, "y2": 402.50424

}

},

{

"label": "baseball glove",

"score": 0.9943546,

"box": {

"x1": 377.8922, "y1": 431.95053, "x2": 458.4937, "y2": 536.52124

}

},

{

"label": "person",

"score": 0.51779467,

"box": {

"x1": 0, "y1": 239.91418, "x2": 60.342667, "y2": 397.17004

}

}

]

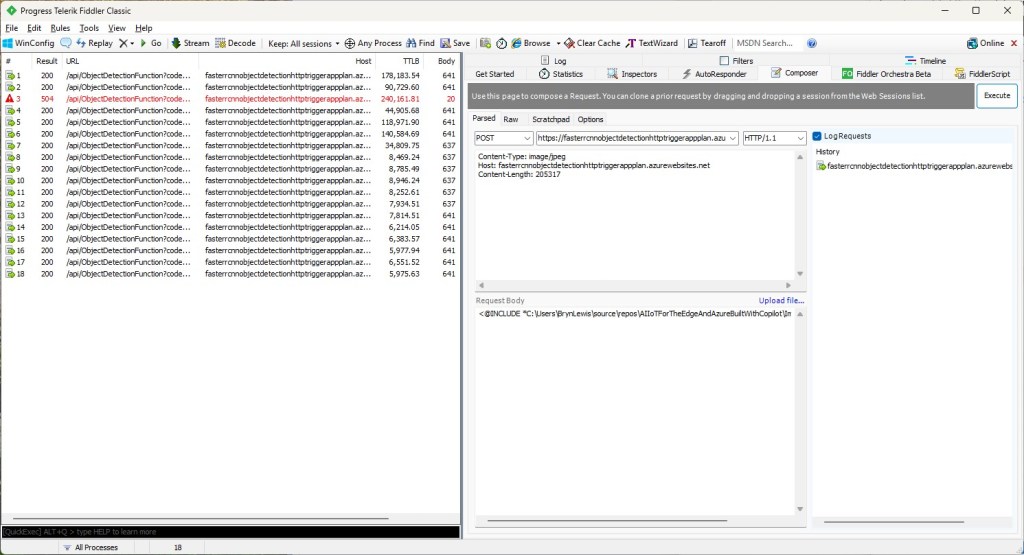

The application plan was scaled to a Premium v3 P1V3 (2 vCPU 8G RAM)

The output JSON was 641 bytes

[

{

"label": "person",

"score": 0.9998331,

"box": {

"x1": 445.9223, "y1": 124.11987, "x2": 891.18915, "y2": 696.37164

}

},

{

"label": "person",

"score": 0.9994991,

"box": {

"x1": 0, "y1": 330.16595, "x2": 471.0475, "y2": 761.35846

}

},

{

"label": "baseball bat",

"score": 0.9952342,

"box": {

"x1": 869.8053, "y1": 336.96188, "x2": 1063.2261, "y2": 467.74136

}

},

{

"label": "sports ball",

"score": 0.9945949,

"box": {

"x1": 1040.916, "y1": 372.41507, "x2": 1071.8958, "y2": 402.50424

}

},

{

"label": "baseball glove",

"score": 0.9943546,

"box": {

"x1": 377.8922, "y1": 431.95053, "x2": 458.4937, "y2": 536.52124

}

},

{

"label": "person",

"score": 0.51779467,

"box": {

"x1": 0, "y1": 239.91418, "x2": 60.342667, "y2": 397.17004

}

}

]

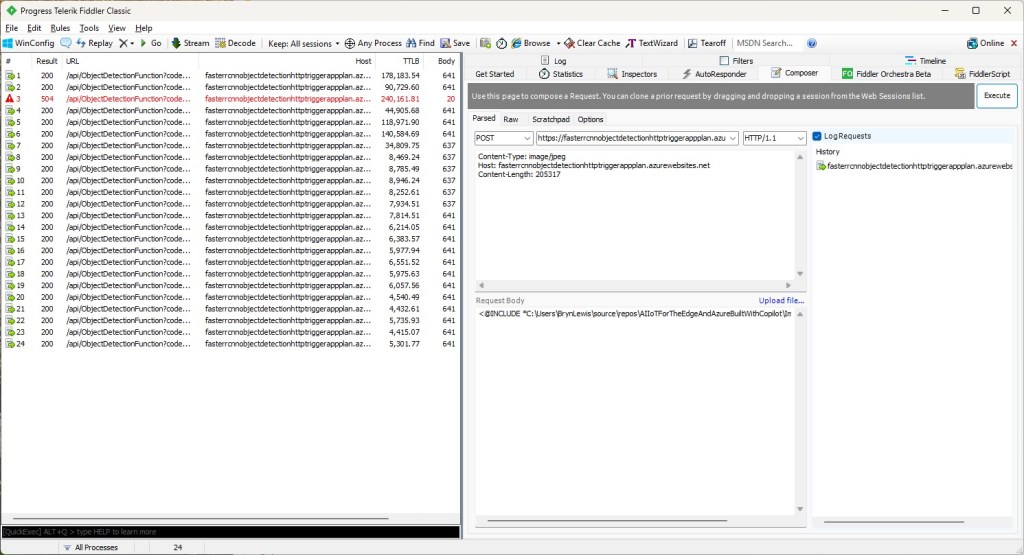

The application plan was scaled to a Premium v3 P2V3 (4 vCPU 16G RAM)

The output JSON was 641 bytes

[

{

"label": "person",

"score": 0.9998331,

"box": {

"x1": 445.9223, "y1": 124.11987, "x2": 891.18915, "y2": 696.37164

}

},

{

"label": "person",

"score": 0.9994991,

"box": {

"x1": 0, "y1": 330.16595, "x2": 471.0475, "y2": 761.35846

}

},

{

"label": "baseball bat",

"score": 0.9952342,

"box": {

"x1": 869.8053, "y1": 336.96188, "x2": 1063.2261, "y2": 467.74136

}

},

{

"label": "sports ball",

"score": 0.9945949,

"box": {

"x1": 1040.916, "y1": 372.41507, "x2": 1071.8958, "y2": 402.50424

}

},

{

"label": "baseball glove",

"score": 0.9943546,

"box": {

"x1": 377.8922, "y1": 431.95053, "x2": 458.4937, "y2": 536.52124 }

},

{

"label": "person",

"score": 0.51779467,

"box": {

"x1": 0, "y1": 239.91418, "x2": 60.342667, "y2": 397.17004

}

}

]

The application plan was scaled to a Premium v2 P1V2 (1 vCPU 3.5G RAM)

The output JSON was 637 bytes

[

{

"label": "person",

"score": 0.9998332,

"box": {

"x1": 445.9223, "y1": 124.1199, "x2": 891.18915, "y2": 696.3716

}

},

{

"label": "person",

"score": 0.9994991,

"box": {

"x1": 0, "y1": 330.16595, "x2": 471.0475, "y2": 761.35846

}

},

{

"label": "baseball bat",

"score": 0.9952342,

"box": {

"x1": 869.8053, "y1": 336.9619, "x2": 1063.2261, "y2": 467.74133

}

},

{

"label": "sports ball",

"score": 0.994595,

"box": {

"x1": 1040.916, "y1": 372.41507, "x2": 1071.8958, "y2": 402.50424

}

},

{

"label": "baseball glove",

"score": 0.9943546,

"box": {

"x1": 377.8922, "y1": 431.95053, "x2": 458.4937, "y2": 536.52124

}

},

{

"label": "person",

"score": 0.51779467,

"box": {

"x1": 0, "y1": 239.91418, "x2": 60.342667, "y2": 397.17004

}

}

]

Summary

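The differences between the 637 and 641 byte responses were small. The labels and the number of detections were identical; only a few scores and box coordinates differed in their last digit or two (for example, 124.11987 versus 124.1199), and the shorter values account for the 4 byte difference.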
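As a rough check, the sketch below tallies the fields whose text differs between the 641 byte and 637 byte responses, using values copied from the output above. The field names in the dictionary are labels I made up for readability, and the tally assumes the two responses are otherwise formatted identically.

```python
# Values copied from the responses above as (641-byte text, 637-byte text).
# Only fields whose text differs between the two responses are listed.
# The dictionary keys are illustrative labels, not names used by the function.
DIFFERING_FIELDS = {
    "person[0].score": ("0.9998331", "0.9998332"),
    "person[0].box.y1": ("124.11987", "124.1199"),
    "person[0].box.y2": ("696.37164", "696.3716"),
    "baseball bat.box.y1": ("336.96188", "336.9619"),
    "baseball bat.box.y2": ("467.74136", "467.74133"),
    "sports ball.score": ("0.9945949", "0.994595"),
}


def summarize(fields):
    """Return (name, characters saved, absolute numeric difference) per field."""
    rows = []
    for name, (long_text, short_text) in fields.items():
        chars_saved = len(long_text) - len(short_text)
        abs_diff = abs(float(long_text) - float(short_text))
        rows.append((name, chars_saved, abs_diff))
    return rows


rows = summarize(DIFFERING_FIELDS)
total_chars_saved = sum(row[1] for row in rows)
max_abs_diff = max(row[2] for row in rows)

for name, chars_saved, abs_diff in rows:
    print(f"{name:22} chars saved: {chars_saved}  |difference|: {abs_diff:.7f}")
print("total characters saved:", total_chars_saved)
```

The characters saved add up to the gap between the two response sizes, and the largest numeric difference is well below a thousandth of a pixel.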

I'm not certain why this happens; my current best guess is memory pressure.
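Whatever the cause, a test that compares response bodies byte for byte would fail on differences this small. A minimal sketch of a tolerance-based comparison follows; the `detections_match` helper and the `1e-4` tolerance are my own assumptions for illustration, not part of the function app, and the two bodies contain only the first detection from the responses above.

```python
import json
import math


def detections_match(body_a, body_b, abs_tol=1e-4):
    """Return True when two detection responses agree within abs_tol.

    abs_tol is an assumed tolerance chosen for this example.
    """
    a, b = json.loads(body_a), json.loads(body_b)
    if len(a) != len(b):
        return False
    for det_a, det_b in zip(a, b):
        if det_a["label"] != det_b["label"]:
            return False
        if not math.isclose(det_a["score"], det_b["score"], abs_tol=abs_tol):
            return False
        for key in ("x1", "y1", "x2", "y2"):
            if not math.isclose(det_a["box"][key], det_b["box"][key], abs_tol=abs_tol):
                return False
    return True


# First detection from the 641-byte and 637-byte responses, compacted by hand.
body_641 = (
    '[{"label":"person","score":0.9998331,'
    '"box":{"x1":445.9223,"y1":124.11987,"x2":891.18915,"y2":696.37164}}]'
)
body_637 = (
    '[{"label":"person","score":0.9998332,'
    '"box":{"x1":445.9223,"y1":124.1199,"x2":891.18915,"y2":696.3716}}]'
)

same_within_tolerance = detections_match(body_641, body_637)
size_difference = len(body_641.encode("utf-8")) - len(body_637.encode("utf-8"))
print("match within tolerance:", same_within_tolerance)
print("size difference in bytes:", size_difference)
```

Comparing parsed values rather than raw bytes keeps the check focused on whether the detections are the same, which is what matters for the model output.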